Ansh Radhakrishnan

51 posts

@anshrad

Researcher @AnthropicAI

I'm excited to join @AnthropicAI to continue the superalignment mission! My new team will work on scalable oversight, weak-to-strong generalization, and automated alignment research. If you're interested in joining, my dms are open.

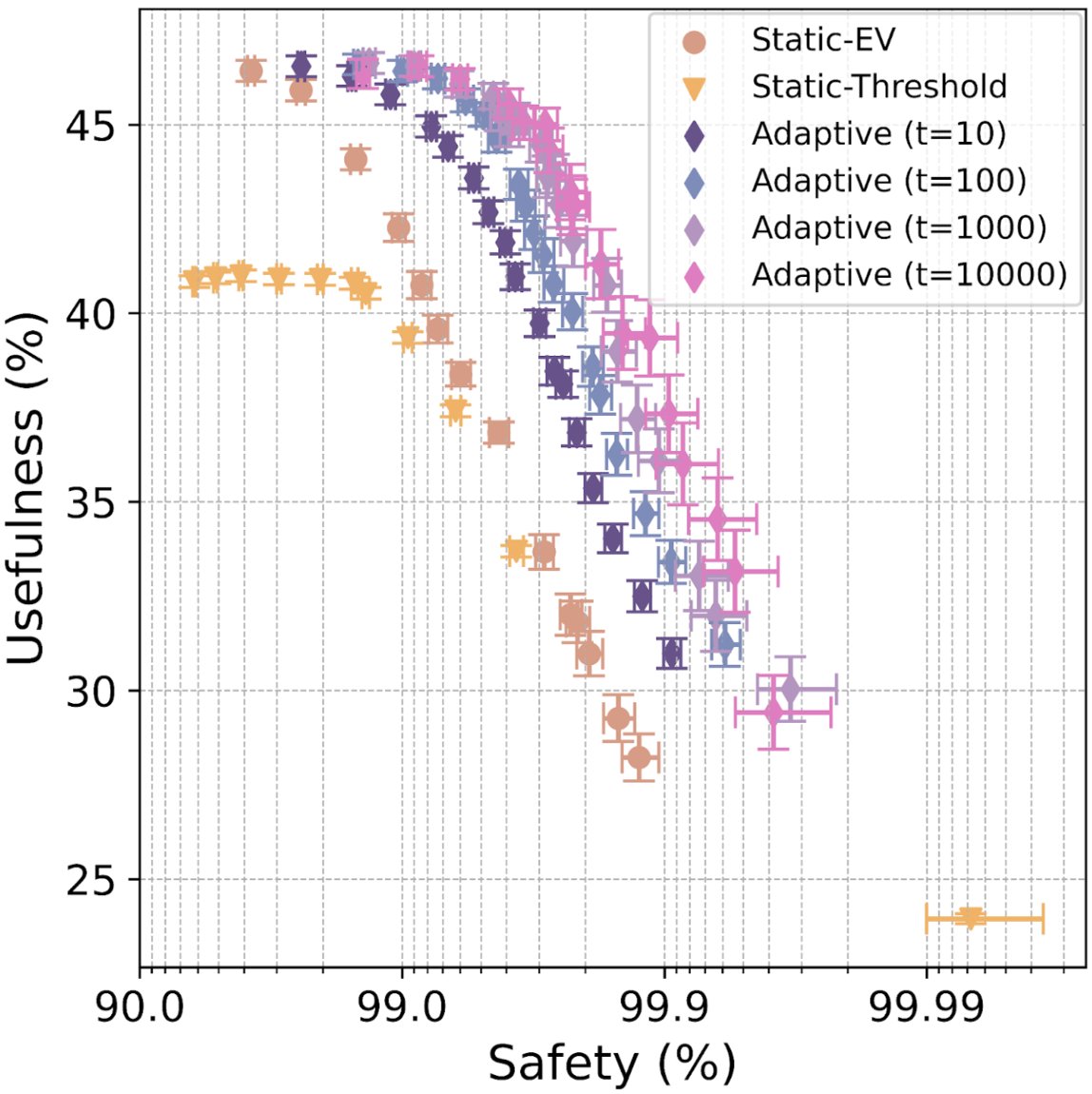

We’re hiring for the adversarial robustness team @AnthropicAI! As an Alignment subteam, we're making a big effort on red-teaming, test-time monitoring, and adversarial training. If you’re interested in these areas, let us know! (emails in 🧵)

GPQA is still very hard for new LLMs! sidenote: it's crazy that this is worth saying despite it coming out only three months ago x.com/idavidrein/sta…