Laura Ruis

1.4K posts

@LauraRuis

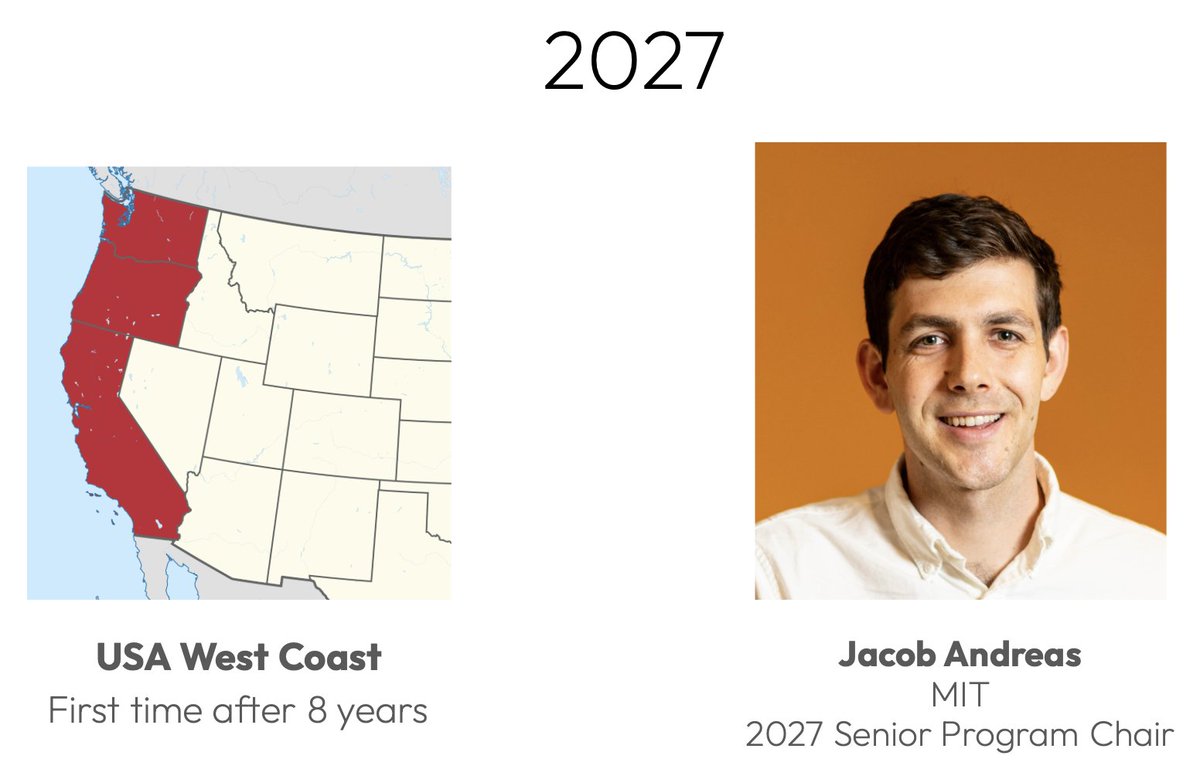

Postdoc with @jacobandreas @MIT_CSAIL. PhD from @ucl_dark with @_rockt and @egrefen. Anon feedback: https://t.co/sbebAl53tU

Your RL post-training may be sabotaging your LLM’s test-time scaling! Conventional RL pretends that you can collapse all reward signals *upfront* into a single *scalar reward*. We introduce Vector Policy Optimization (VPO), which natively maximizes *vector-valued* rewards, boosting test-time search performance, even on the original scalar.

To focus on this, I’ve stepped away from running alignment at Anthropic. @EthanJPerez and @sprice354_ are leading the team going forward, and I’m confident they’ll do an amazing job.

Neural networks might speak English, but they think in shapes. Understanding their rich *neural geometry* is key to understanding how they work – and to debugging and controlling them with precision. Starting today, we’re releasing a series of posts on this research agenda. 🧵

There's a quadrillion-dollar question at the heart of AI: Why are humans so much more sample-efficient than LLMs? There are three possible answers:

1. Architecture and hyperparameters (aka transformer vs whatever ‘algo’ cortical columns are implementing)
2. Learning rule (backprop vs whatever the brain is doing)
3. Reward function

@AdamMarblestone believes the answer is the reward function. ML likes to use pretty simple loss functions, like cross-entropy. These are easy to work with. But they might be too simple for sample-efficient learning. Adam thinks that, in humans, the large number of highly specialised cells in the ‘lizard brain’ might actually be encoding information for sophisticated loss functions, used for ‘training’ in the more sophisticated areas like the cortex and amygdala.

Like: the human genome is barely 3 gigabytes (compare that to the TBs of parameters that encode frontier LLM weights). So how can it include all the information necessary to build highly intelligent learners? Well, if the key to sample-efficient learning resides in the loss function, even very complicated loss functions can still be expressed in a couple hundred lines of Python code.

New paper w/ @AISecurityInst: AI writing assistance distorts how others perceive AI users and their opinions. Millions of people now use AI to help them write and communicate. In three large experiments (14k participants, 3m+ human ratings) we show that AI writing assistance systematically distorts writer personas – their perceived beliefs, personality, and identity. These distortions are consistent across AI models and persist even under realistic conditions of human oversight. 🧵