Alex Gajewski

98 posts

@apagajewski

Building a preschool for robots @pantographPBC. Previously cofounder @sfcompute, @ExaAILabs

Pantograph is building robots that learn through self-supervised RL at unprecedented scale. We're hiring a software engineer to work on our core robot stack and testing infrastructure, including controller logic, component testing, and data collection pipelines. Rust and embedded software experience is a big plus, but mostly we're looking for a relentlessly curious and capable generalist who is excited to learn and get robots out into the world.

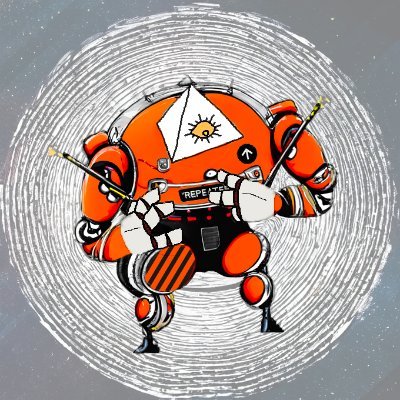

Today, we're sharing an early preview of our first-generation hardware: a treaded base and two six-degree-of-freedom arms, each with a 1 kg continuous payload. We've put 10,000+ hours of stress and endurance testing into the critical components.

Extremely excited to show a preview of what we've been working on!

Devtools for AI Agents (@dessaigne)

AI agents are the next wave: autonomous tools that reason, decide, and amplify human productivity. We’re funding startups building devtools for agents, whether you’re creating agent builders or building blocks to perform complex tasks.

Hey friends, we're excited to announce that an additional 2,000 H100s will be added to @sfcompute's on-demand market. It's the largest* interconnected cluster, from any provider (including hyperscalers), that you can get on a per-hour basis.

You're not locked in with San Francisco Compute. If DeepSeek can compete with OpenAI using 2,000 H800s, you too can train a state-of-the-art RL model without ever having to sign a long-term contract that you can't exit. You could have trained DeepSeek-v3 for $4.5m over 1.5 months on SFC, versus $35m if you could only buy a 1-year contract off market.

This was the dream Alex & I had since our audio model company (Junelark) died because it couldn't procure enough GPUs, and it's what we've been working towards for nearly two years.

Long-term contracts are a trap; they make it so only the biggest of the big can compete in AI. They force startup founders to raise at massive valuations pre-revenue, which dilutes founders and employees and sets them up to fail when they can't raise their next round.

This cluster will roll out over the next few weeks as we scale our infrastructure. Soon you'll be able to access it via our managed Kubernetes service or by reaching out to set up a custom solution.

We're also exploring other ways of partnering with service providers to let them offer GPU-based services, like workers and inference endpoints, without being forced into a long-term contract with a hyperscaler. You no longer need to bet your company on GPU prices to offer GPU-based services.

* We think! If you know of a larger one, please correct us!
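A back-of-envelope check of the $4.5m vs. $35m comparison in the post above. This is only a sketch: it assumes both figures refer to roughly 2,000 GPUs running continuously and uses an average month of 730 hours. Neither assumption is stated in the post, and the helper name `implied_rate` is made up for illustration.

```python
# Average hours in a year and in a month (365-day year).
HOURS_PER_YEAR = 365 * 24
HOURS_PER_MONTH = HOURS_PER_YEAR / 12

# Assumption: cluster size used for both scenarios (not stated in the post
# for the cost figures themselves).
GPUS = 2_000


def implied_rate(total_usd: float, gpus: int, months: float) -> float:
    """Return the implied price in USD per GPU-hour for a continuous run."""
    gpu_hours = gpus * months * HOURS_PER_MONTH
    return total_usd / gpu_hours


# Figures quoted in the post: $4.5m for 1.5 months vs. $35m for a 1-year contract.
short_run_rate = implied_rate(4.5e6, GPUS, 1.5)
one_year_rate = implied_rate(35e6, GPUS, 12)
cost_ratio = 35e6 / 4.5e6

print(f"1.5-month run:   ${short_run_rate:.2f} per GPU-hour")
print(f"1-year contract: ${one_year_rate:.2f} per GPU-hour")
print(f"Total cost ratio: {cost_ratio:.1f}x")
```

Under these assumptions both scenarios work out to roughly the same hourly rate, so the gap in total cost comes almost entirely from paying for a full year instead of only the 1.5 months actually used.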