aksh parekh

202 posts

@aparekh02

18 | building cadenza | @stanford cs | reaching $10k MRR (1 month!)

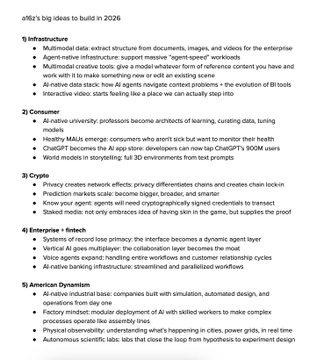

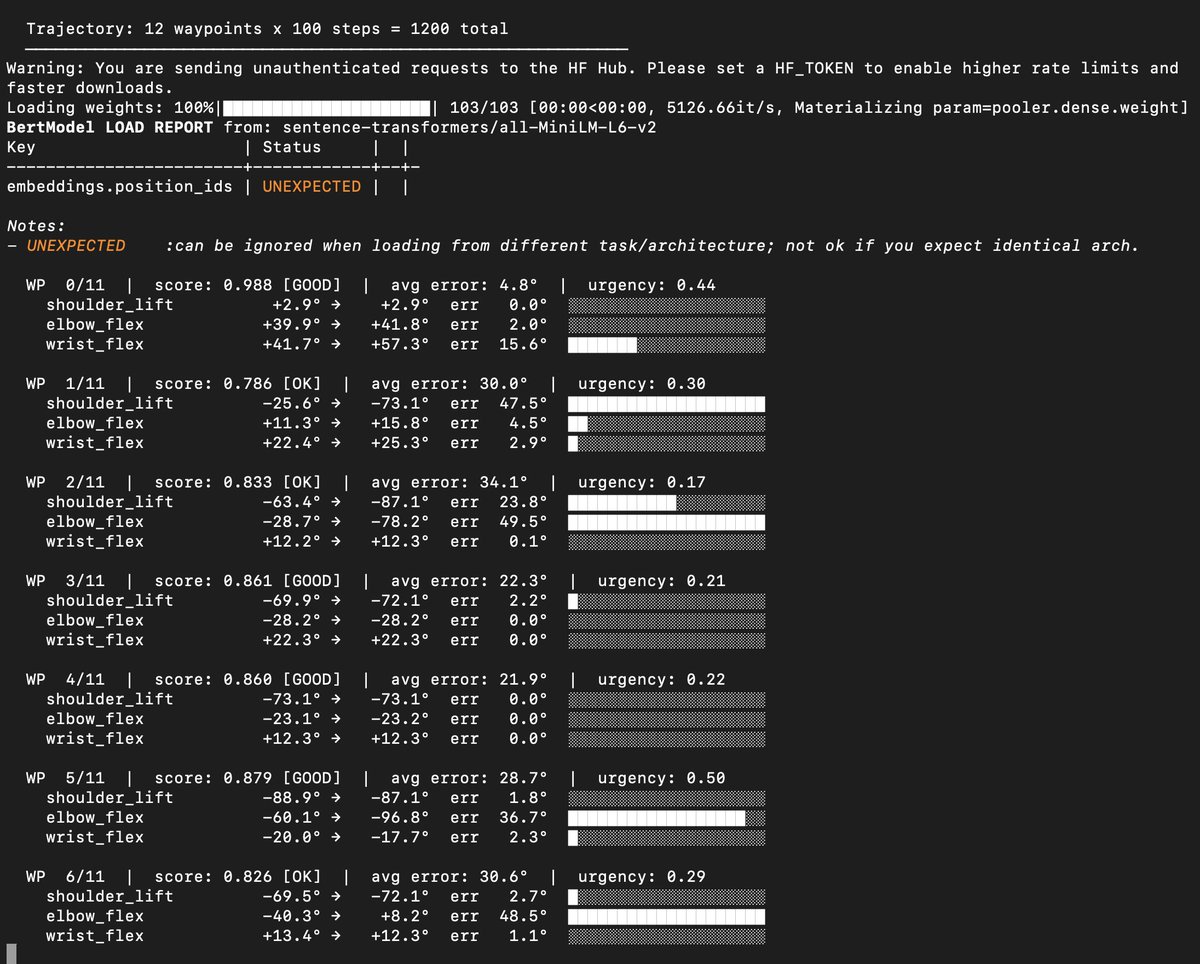

After 1 month of work at 18, I am proud to announce Cadenza, the first on-edge, memory-based, developer-focused robot OS! Check out the repo link below! I want to bring the value of an optimized, intelligent OS to everyone! (The Go1 below is running on Cadenza using ONLY memory.)

bros really pulled up to watch jensen huang on a big screen

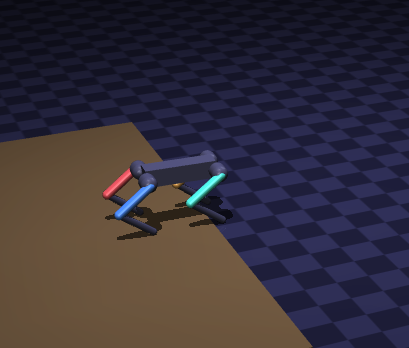

last week we got 1M views and hundreds of death threats for giving OpenClaw access to drones, humanoids, quadrupeds, and other physical hardware. Now we’re releasing EVERYTHING open-source. Dimensional gives agents access to the physical world. Join us. Repo 👇🏽👇🏽👇🏽

We won the SF OpenClaw Hackathon! 🏆🤖🦞 Now open-sourcing ROSClaw, which connects @rosorg robots to @openclaw agents. Your AI agent can:
⊙ Discover robots/topics
⊙ Bridge from Linux or Mac mini
⊙ Connect ANYWHERE via WebRTC
⊙ Grasp/move in the real world
Agents escaped the screen!