Applied Compute

@appliedcompute

We build Specific Intelligence for enterprises.

Today we're releasing SWE-check, a specialized bug detection model we RL-trained with @appliedcompute that matches frontier performance on internal in-distribution evals and makes meaningful progress on out-of-distribution evals, all while running 10x faster.

There is a large delta between what models can do and what they deliver in company-specific workflows. We bridge that gap through forward deployment. In a given week, our engineers might build eval frameworks from scratch, deploy a large-scale context ingestion engine, and present results to F500 leadership. We fine-tune models on proprietary data no frontier lab has seen and optimize agent performance against real-world outcomes. We're excited by engineers with rigor, high customer empathy, and a bias toward action in ambiguity. appliedcompute.com/blog/unlocking…

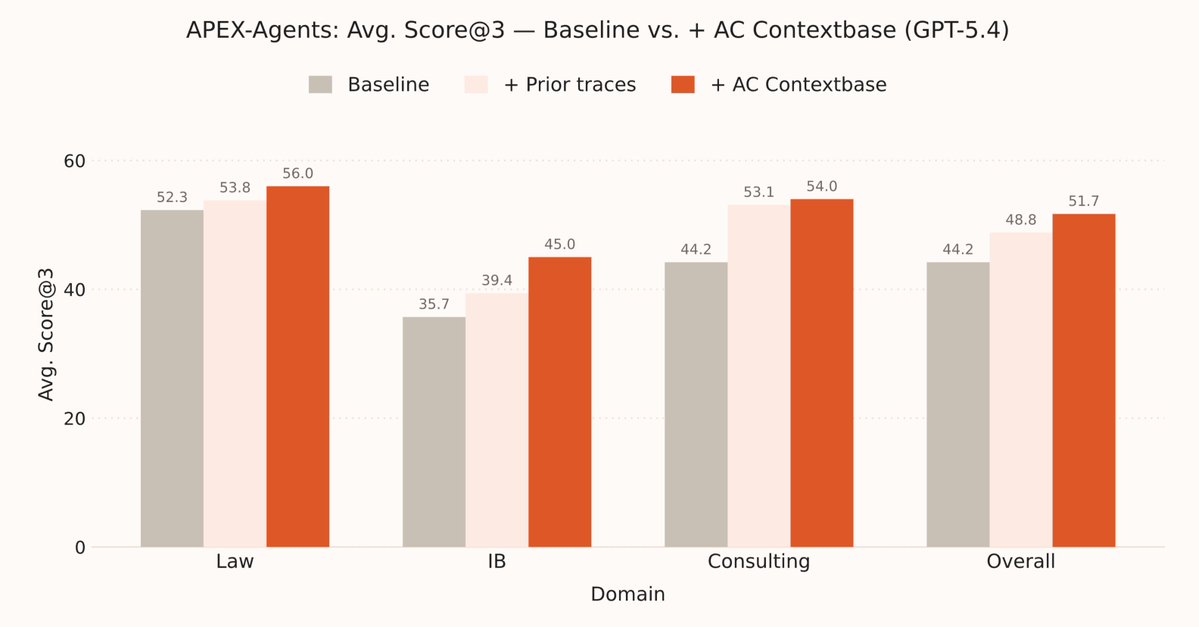

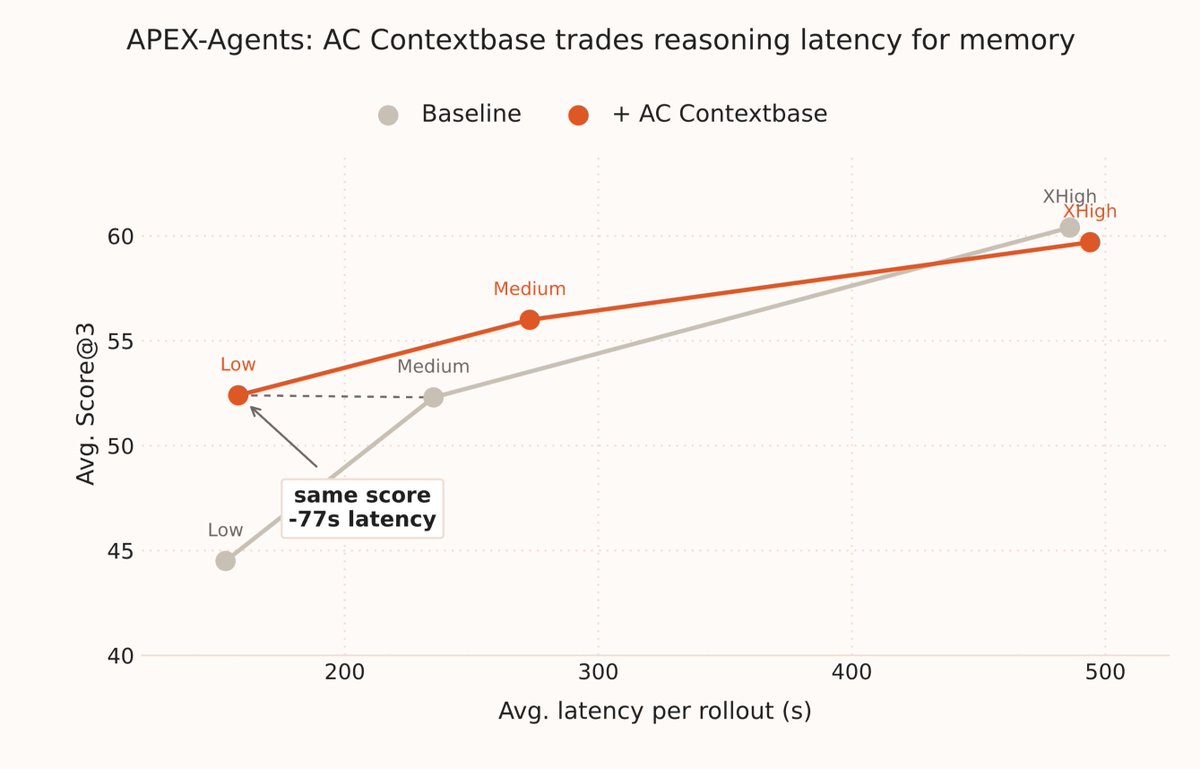

Does training on the APEX-Agents dev set generalize beyond the benchmark? @appliedcompute post-trained GLM-4.7 on ~2,000 expert Mercor tasks and achieved state-of-the-art legal performance on APEX-Agents. We then evaluated the model on other enterprise benchmarks. On GDPVal, AC-Small’s win+tie rate rose from 55.0% to 62.7% (+7.7pp), placing it 5th overall and ahead of Opus 4.5.