Pinned Tweet

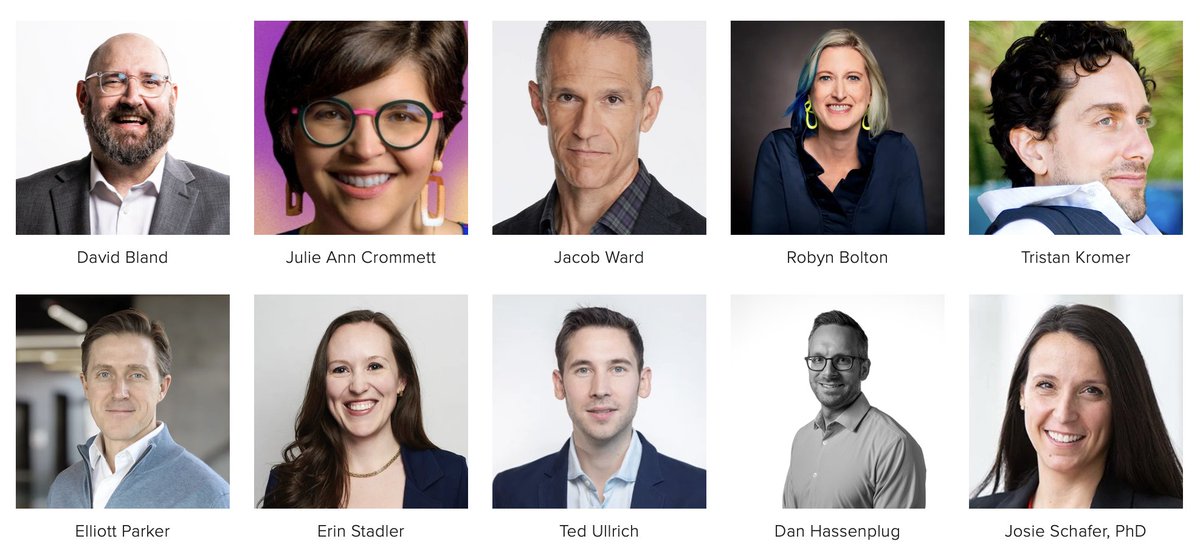

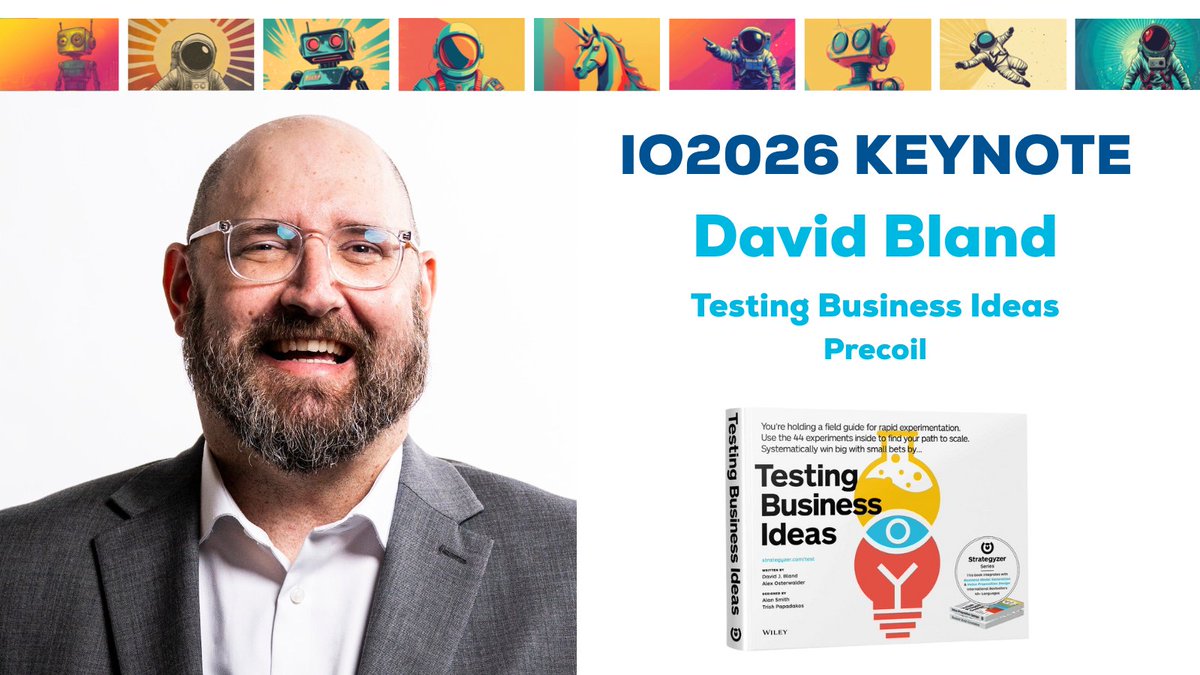

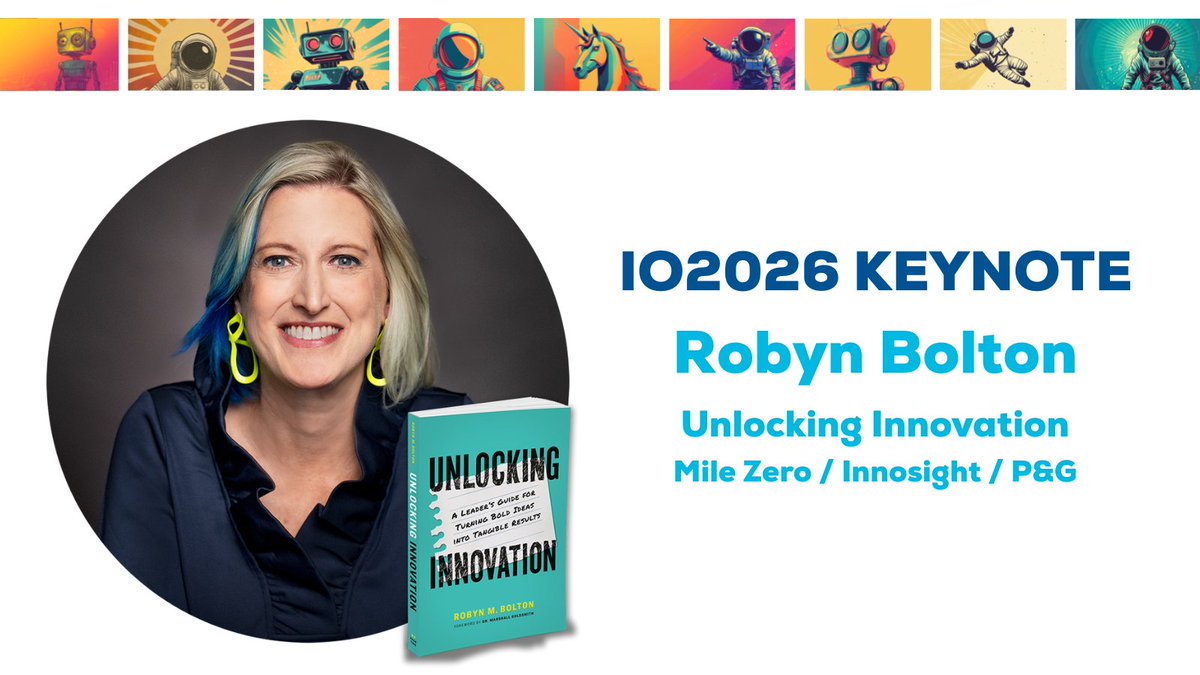

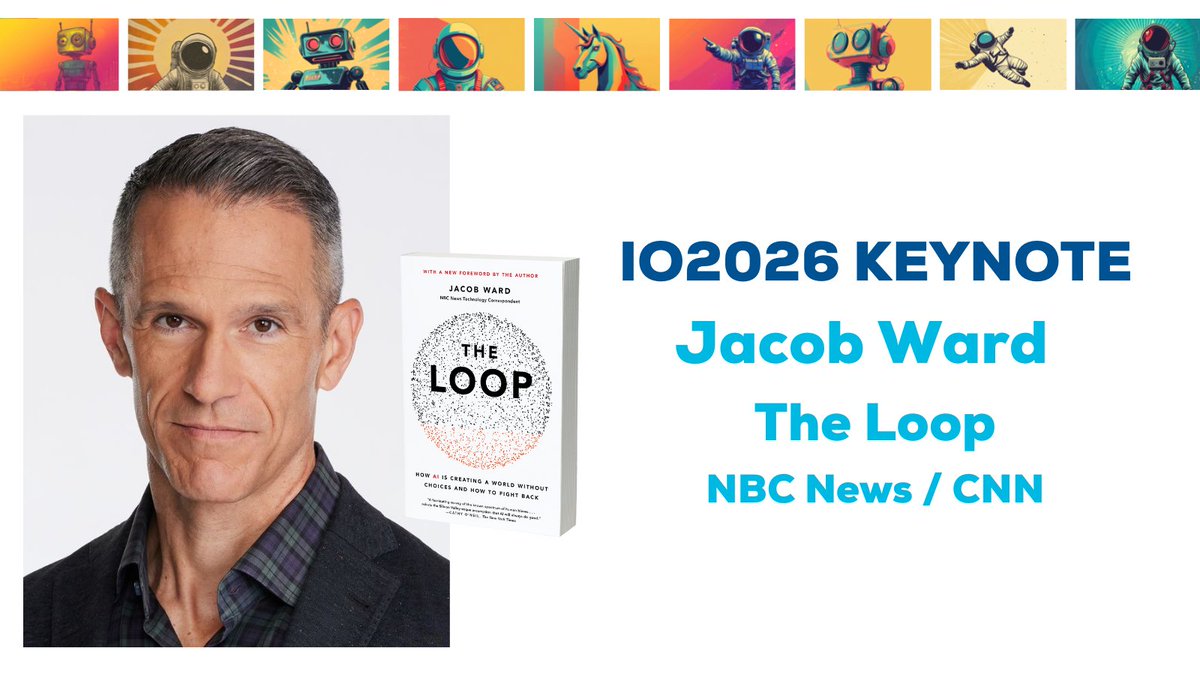

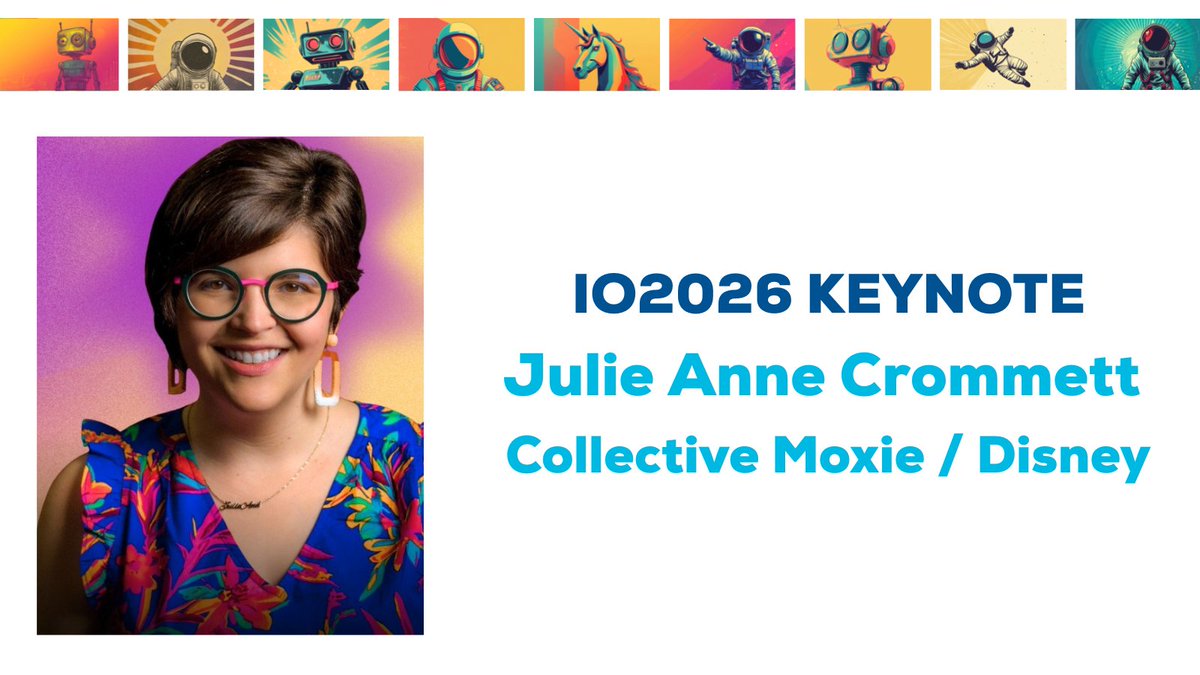

Innovation happens when ideas collide. IO2026 (@theiosummit) brings together startups, corporate innovators, R&D teams, product leaders, and curious creators. If your team is driving growth, experimentation, or new tech adoption, join us...

🎟️ io2026.com
