David Aronchick

311 posts

David Aronchick

@aronchick

CEO, https://t.co/U7uKl4r9iE Cofounder, https://t.co/i9QX6JxCBa Ex: MSFT, K8s, Kubeflow, GOOG, AMZN. 4x founder/CEO. There is many a worse and more elaborate life. He/him

What if I told you this guy was falling to the floor every play?

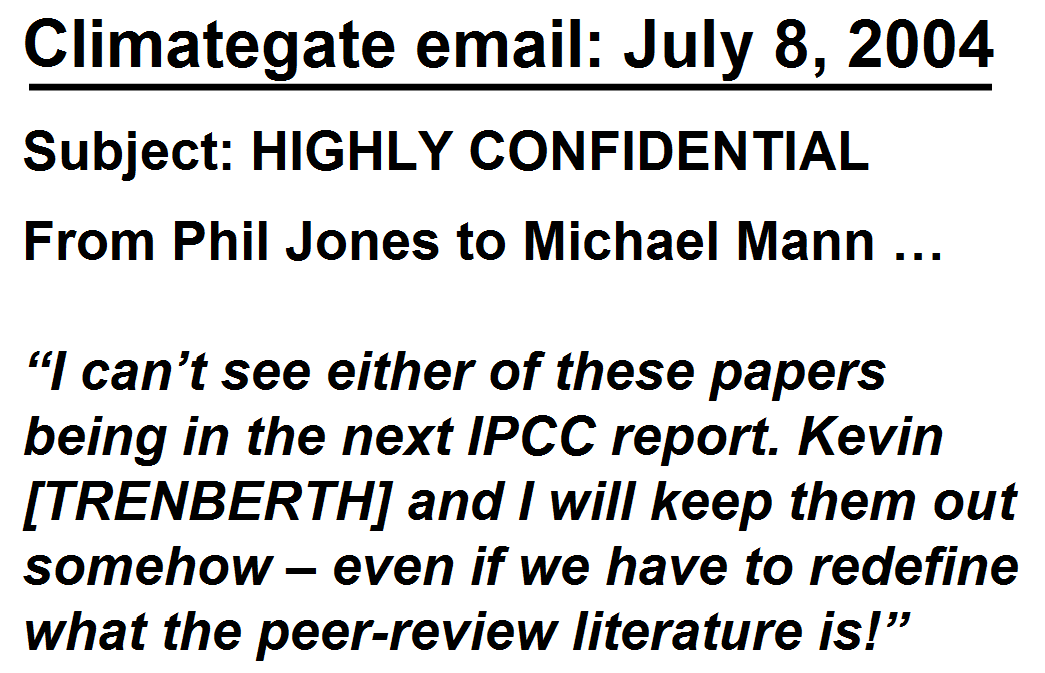

An oil executive is a productive member of society. Climate Alarmists in contrast are involved in dogma, falses narratives, and seek to destroy both the economy and environment. #auspol #BBCNews

@souljagoyteller Can you explain his mistake in more detail? Is it that we can never run out of interesting math to do? Do we know this for sure (a theorem?) or as a common-sensical extension of the idea that we can study whatever mathematical structures we want?

I'm almost at the point where I'm going to stop asking AI questions and instead ask it to find sources that might answer them. Because I know what it's doing: pushing the linguistic buttons in my brain that lead me to believe it and stick with it, even as it gives me garbage.

@Euthenos_ Rural people and suburbanites do not care one bit abt how city people choose to live. You do you. Urban collectivists make it their life’s mission to ensure everyone lives exactly like they do.

Everything from exorbitant FIFA World Cup ticket prices to "fighting hard" for 1,000 fair-priced tickets for NY residents is laughable... Metlife stadium capacity: 82,500 Seven matches: 7 * 82,500 = 577,500 Share of $50 tickets: 1,000 / 577,500 = 0.17%

Ah cool Soviet style lotteries, so empowering! The proletariat can now watch a soccer game with the blessing of luck.

@zdeborova @eiszett Why would you tell on yourself like this?