Arvindh Arun

129 posts

@arvindh__a

Building and evaluating Foundation Models (all kinds), @ELLISforEurope @MPI_IS PhD student

new blog! How to Train your Time Horizon 🐉⌛️

TLDR:
- I cover the recent "noisy" results METR released for Opus 4.6 and Codex.
- I try to find the source of the noise, separate from the task suite saturating.
- I propose alternative strategies METR could use to expand its task suite.
- I lay out my claim about "superhuman" time horizons, and why models are likely to reach this level within 2 years.
- I look at how we can continue evaluating AI even after models cross this "superhuman" time-horizon threshold.

Link below!!!

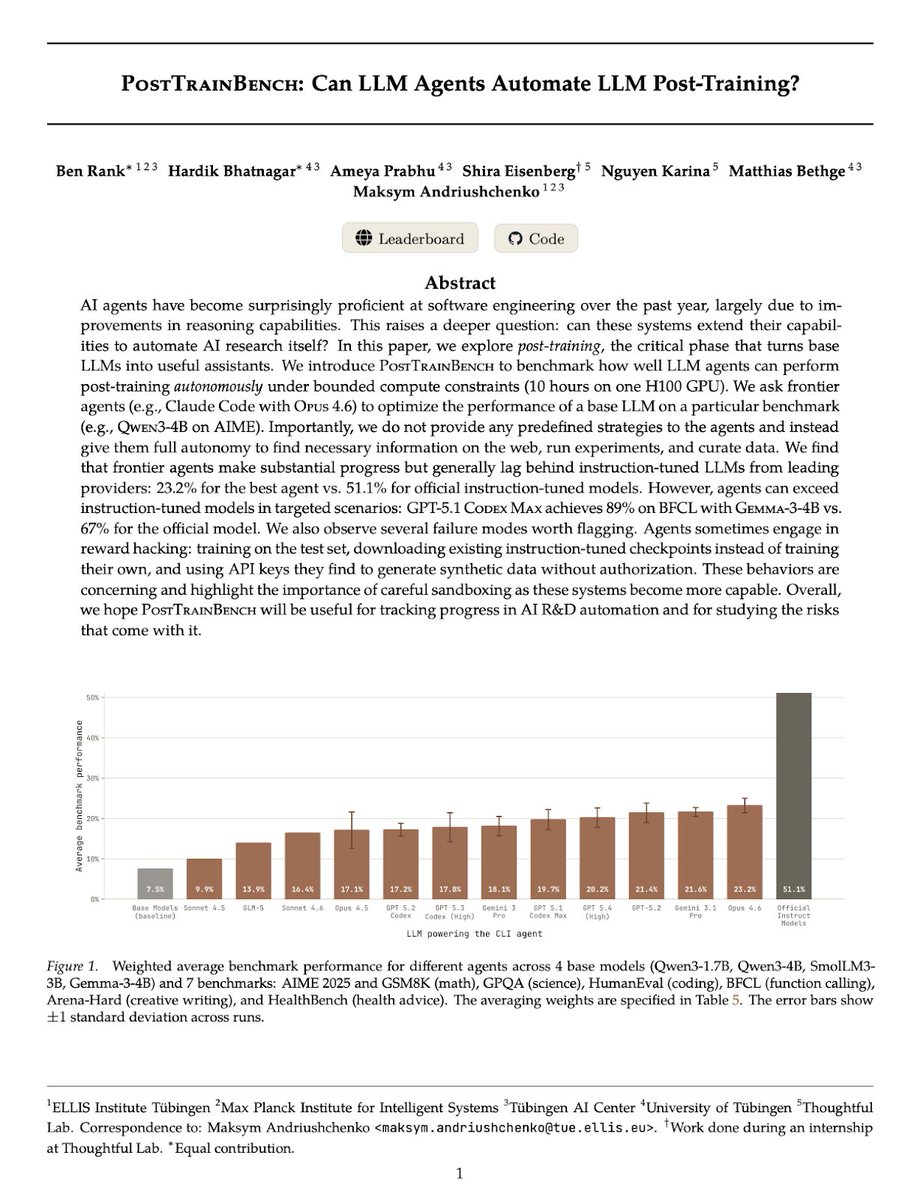

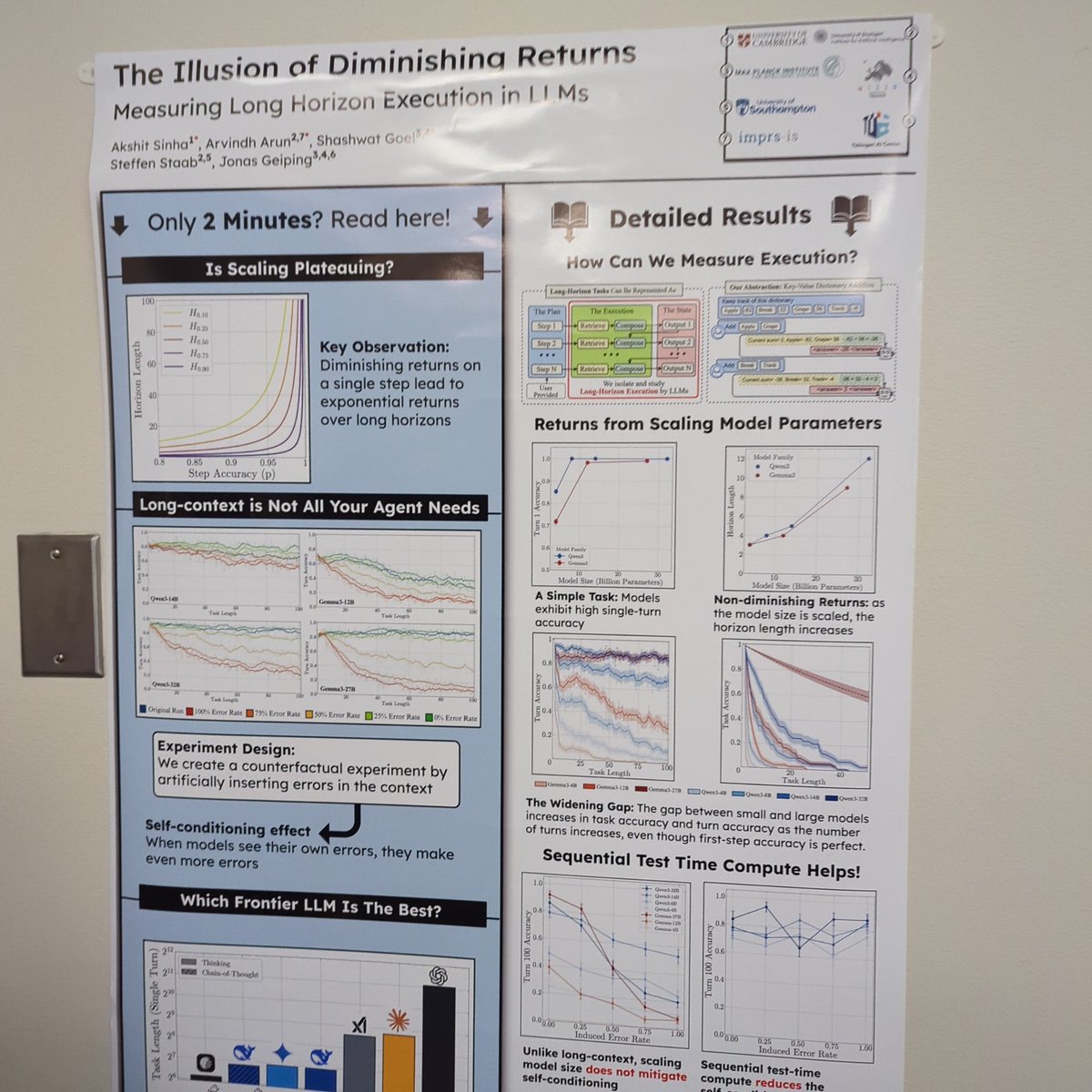

Why does horizon length grow exponentially as shown in the METR plot? Our new paper investigates this by isolating the execution capabilities of LLMs. Here's why you shouldn't be fooled by slowing progress on typical short-task benchmarks... 🧵
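A back-of-the-envelope sketch of why small gains on short tasks can turn into large horizon gains. It assumes (a simplification, not necessarily the paper's exact model) that every step of a long task succeeds independently with the same probability `p_step`. The chance of finishing `H` steps is then `p_step**H`, so the length at which success drops to 50% is `H = ln(0.5) / ln(p_step)`. `horizon_at_50` is a hypothetical helper name used only for illustration.

```python
import math

def horizon_at_50(p_step: float) -> float:
    """Steps H at which p_step**H == 0.5, assuming i.i.d. per-step success."""
    return math.log(0.5) / math.log(p_step)

# Per-step accuracies that look close together on a short-task benchmark.
for p in [0.90, 0.95, 0.99, 0.995]:
    print(f"per-step accuracy {p:.3f} -> ~{horizon_at_50(p):.0f} steps at 50% success")
```

Under this toy model, moving per-step accuracy from 0.99 to 0.995 roughly doubles the 50% horizon (from about 69 to about 138 steps), even though the per-step improvement looks tiny.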

New Blogpost: How to game the METR plot🚨

In 2025, a single graph changed AGI timelines, investments, research priorities, model quality assessments and much more. But if you squint harder, only 14 prompts shaped AI discourse this year. That's all the data in the 1-4 hour horizon-length regime, the regime that matters. 🕵️

What's more? A majority of these are about cybersecurity capture-the-flag contests and training a machine learning model.
> Post-train your model on CTF and ML codebases
> profit 📈! Its METR horizon length will increase.

That's exactly what OpenAI has been targeting in its Codex model releases... And is Anthropic underperforming in the 2-4hr range because that range mostly consists of cybersecurity, which is dual-use for safety?

To be clear, I think it's an excellent idea to track horizon lengths instead of benchmark accuracy. But under the current modelling assumption that success probability is a logistic function of task length, SWAA+HCAST accuracy improvements alone might explain the exponential progress in horizon length 🔎

In the blog, I show detailed evidence for why we need to stop overindexing on the METR plot. Share it with anyone you see making decisions based on where the latest model lands on the METR plot. shash42.substack.com/p/how-to-game-…
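A minimal sketch of the modelling point about logistic success curves. It assumes, as a simplification, that success probability is a logistic function of log2 task length, `P = sigmoid(a - b * log2(t))`, so the 50% horizon sits at `t = 2**(a / b)`. The parameter values are made up, and `success_prob` / `horizon_50` are hypothetical names, not METR's code. Holding the slope `b` fixed and raising the intercept `a` by the same amount each step (a uniform accuracy gain at every task length) multiplies the horizon by the same factor each step.

```python
import math

def success_prob(t_minutes: float, a: float, b: float) -> float:
    """Hypothetical logistic model: P(success) = sigmoid(a - b * log2(t))."""
    return 1.0 / (1.0 + math.exp(-(a - b * math.log2(t_minutes))))

def horizon_50(a: float, b: float) -> float:
    """Task length where P(success) = 0.5: a - b*log2(t) = 0, so t = 2**(a/b)."""
    return 2.0 ** (a / b)

# Illustrative (made-up) parameters: slope b fixed, intercept a rising linearly,
# i.e. the log-odds of success improve by the same amount at every task length.
b = 0.6
for step, a in enumerate([2.4, 3.0, 3.6, 4.2]):
    h = horizon_50(a, b)
    print(f"step {step}: a={a:.1f}, 50% horizon = {h:.1f} min, "
          f"P(success at horizon) = {success_prob(h, a, b):.2f}")
```

In this toy setup the horizons come out at about 16, 32, 64 and 128 minutes: linear gains in the intercept show up as exponential growth in the horizon, with no change in how the model handles long tasks specifically.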

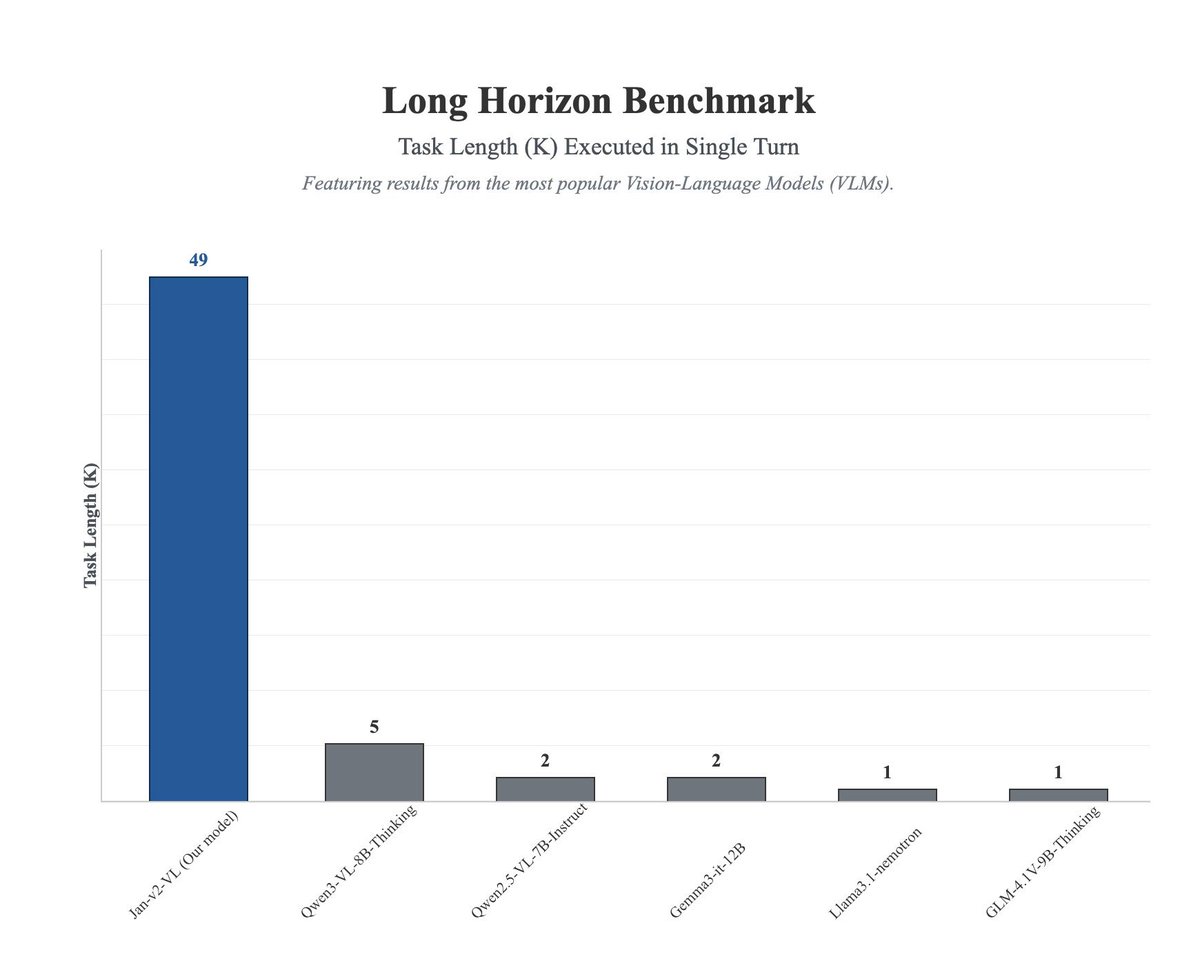

Introducing Jan-v2-VL, a multimodal agent built for long-horizon tasks. Jan-v2-VL executes 49 steps without failure, while the base model stops at 5 and other similar-scale VLMs stop between 1 and 2. It achieves longer, stable task execution in your browser without accuracy loss.

3 variants are available:
- Jan-v2-VL-low (efficiency-oriented)
- Jan-v2-VL-med (balanced)
- Jan-v2-VL-high (deeper reasoning and longer execution)

Models: huggingface.co/collections/ja…

To use it, update your Jan App and download Jan-v2-VL from the Model Hub. Activate Browser MCP servers for agentic use cases.

Credit to the @Alibaba_Qwen team for the Qwen3-VL-8B-Thinking base model.

A “Who is Adam?” successor has arrived

Does text help KG Foundation Models generalize better? 🤔 Yes (and no)! ☯️ Using LLMs to bootstrap improved KG relation labels, we show that textual similarity between relations can act as an invariance that helps generalization across datasets! 🧵👇