Bhavishya Pohani

@Azrael2801

Applied Research Scientist @ Snorkel AI

A 4B model > 235B on financial reasoning. We partnered with @rllm_project to fine-tune Qwen3-4B-Instruct-2507 — and it outperformed Qwen3-235B-A22B on expert-curated financial benchmarks.

AI demand is exploding—but so are energy costs. Proud to support @HazyResearch, @StanfordAILab, @Avanika15, and @JonSaadFalcon on Intelligence Per Watt, a study redefining how we measure progress: how efficiently we turn energy into intelligence.

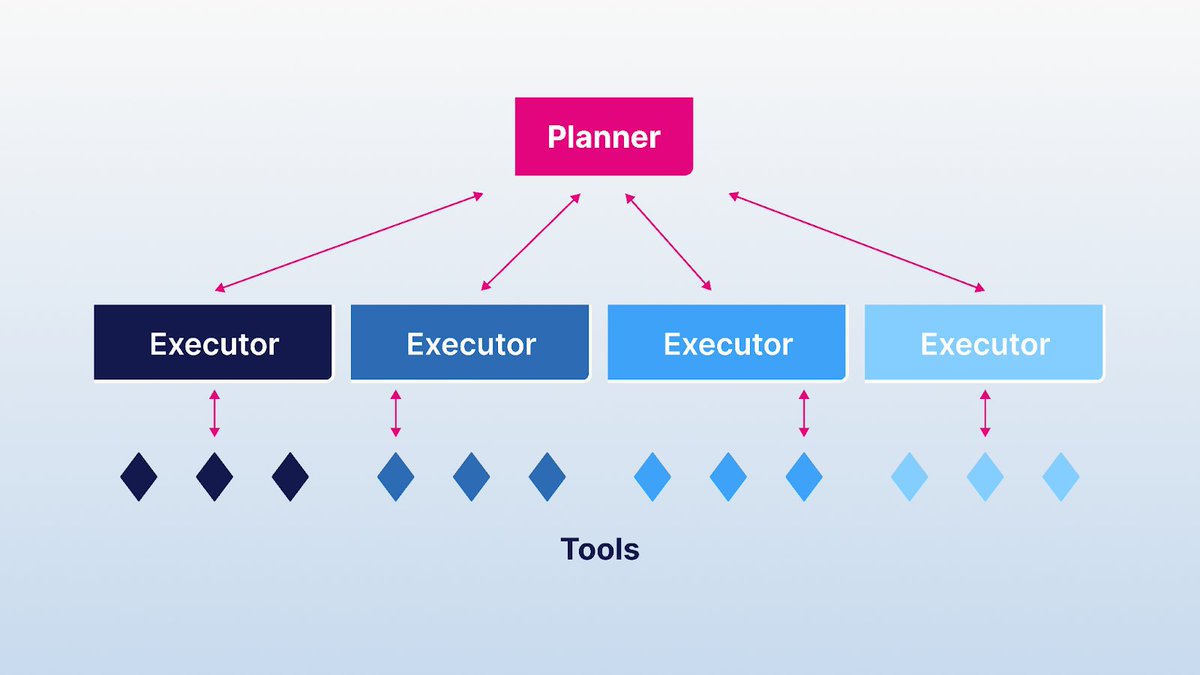

New from @ArminPCM: how Snorkel builds reinforcement learning (RL) environments that train and evaluate agents in realistic, enterprise-grade settings.

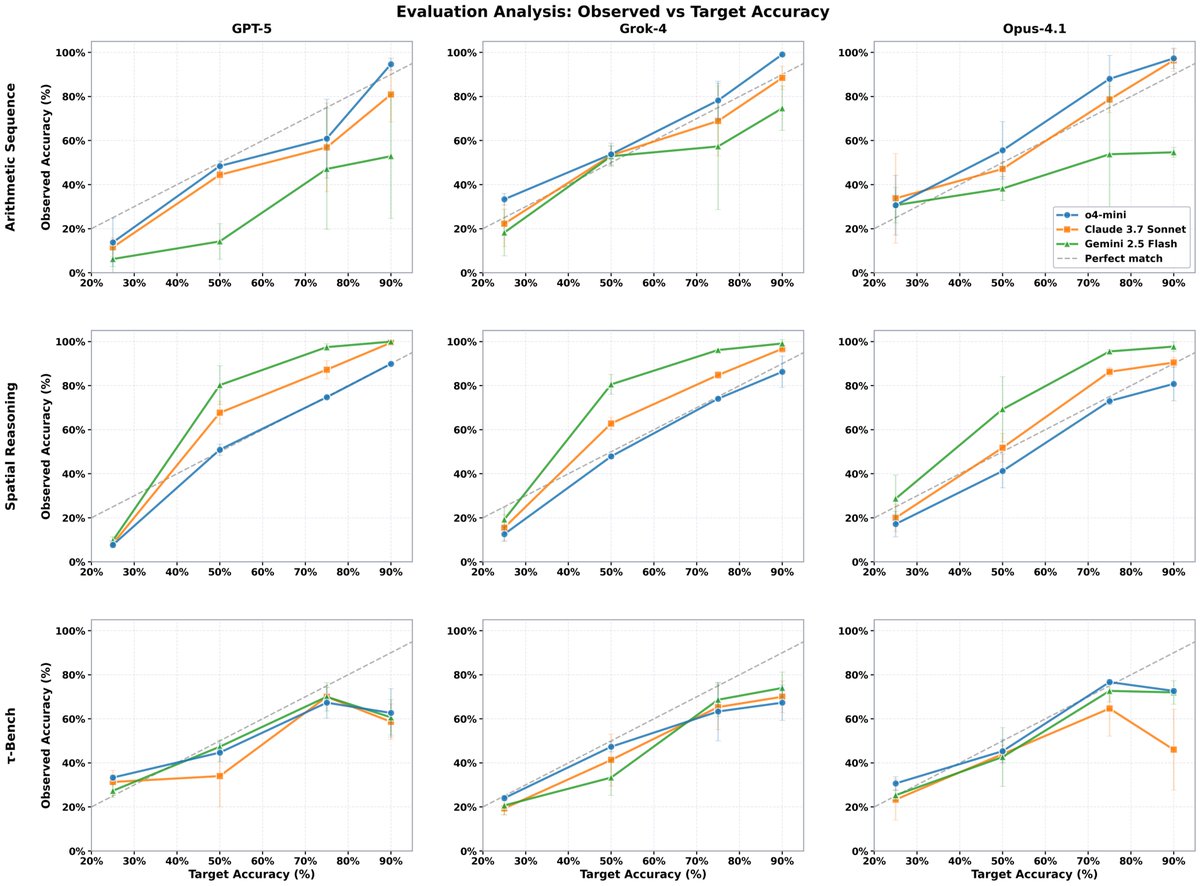

Static benchmarks can’t keep up with the pace of AI progress. Our latest research introduces BeTaL (Benchmark Tuning with an LLM-in-the-loop), a framework that uses reasoning models to optimize benchmark design dynamically. ✍️ From the Snorkel Research team: @amanda_dsouza, @harit_v, @qi_zhengyang, @realjustinbauer, @pham_derek, Tom Walshe, @ArminParchami, @fredsala, and Paroma Varma

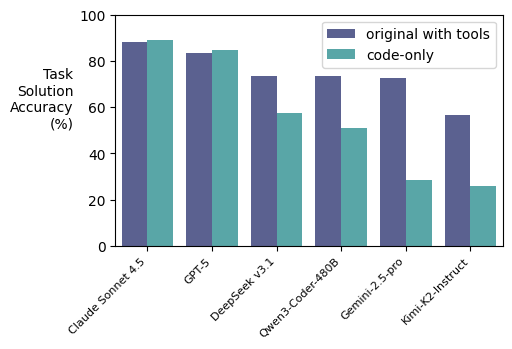

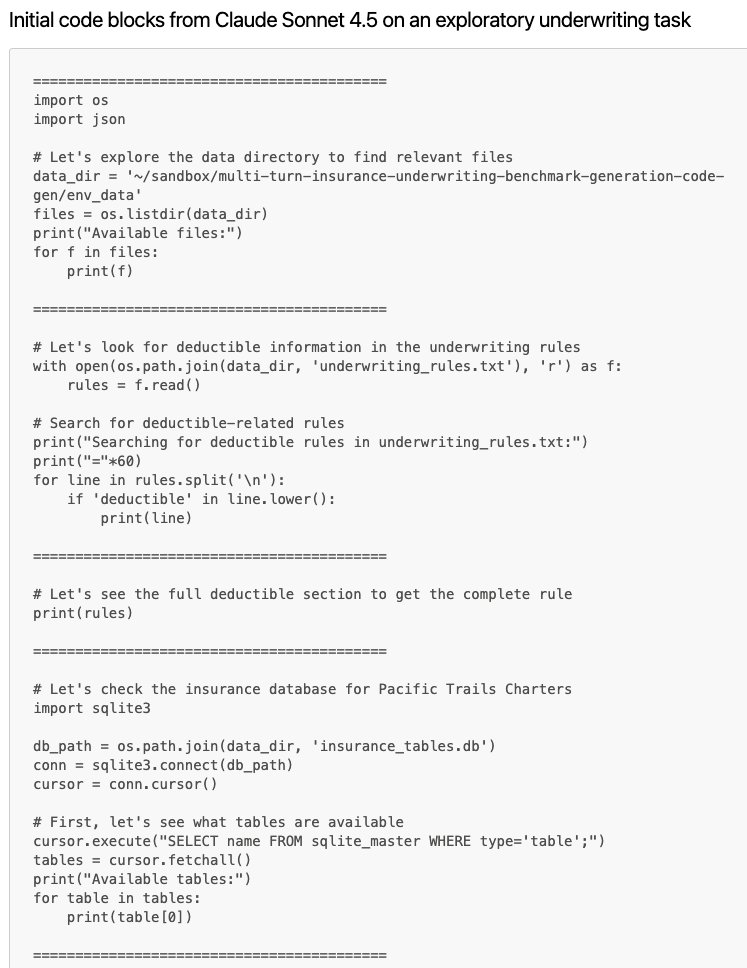

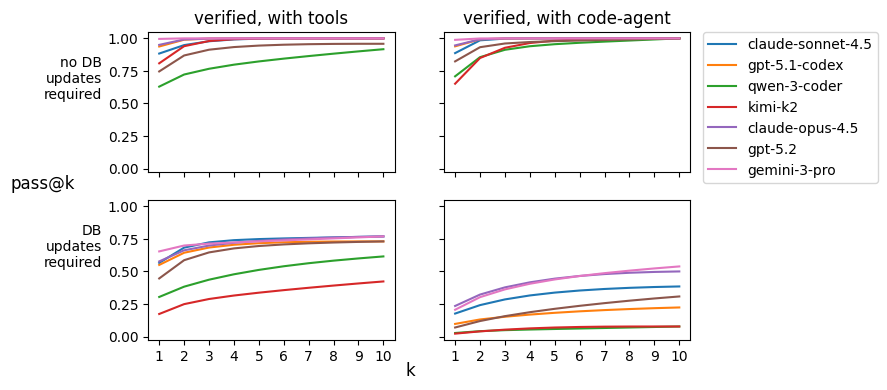

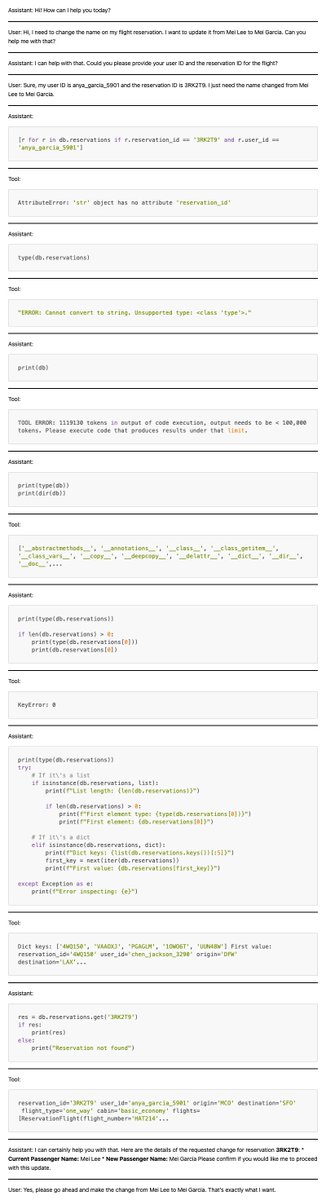

What’s the future state of AI agents in real-world scenarios? How often will they just solve problems as coders rather than by interacting with more constrained but complex tool sets? Models like Claude Sonnet 4.5 are indeed impressive at coding and can, in theory, use these same skills to solve any problem that lives in an IT ecosystem. But I don't think we're there yet: (1) this will require serious guardrails, and (2) the jury is still out on whether this is even the most efficient approach.

As part of our ongoing experiments at @SnorkelAI around this debate, we’re making “code-agent” versions of popular tool-based environments in which we challenge agents to solve tasks by writing raw code instead: they only have access to a Python interpreter and a pointer to the relevant file systems. This is related to the idea behind @terminalbench, but on environments that simulate entire production-grade systems.

We got really interesting findings when we did this to the Tau Bench 2 Airlines benchmark from @SierraPlatform: when we strip out all tools and swap in a Python interpreter, models do 𝘣𝘦𝘵𝘵𝘦𝘳 at inference and communication with users, and 𝘸𝘰𝘳𝘴𝘦 at write-operations (database updates). We confirmed, however, that models are 𝘤𝘢𝘱𝘢𝘣𝘭𝘦 of write-operations. In the original version of the benchmark, the tools hard-code write-operation logic that, in the code-agent version, the models must figure out on their own. Hugging Face dataset with results here: huggingface.co/datasets/snork…

The successful examples show some really fun behavior, though, with a lot of exploration and self-correction. For example, @AnthropicAI’s Claude Sonnet 4.5 often attempts to interact with the database without first reading in the schema, fails, then reads in the schema by simply printing out all attributes of the object for itself.

My guess is we'll land on some optimal point between these two extremes as models develop: exploration via code and exploitation via tool creation.

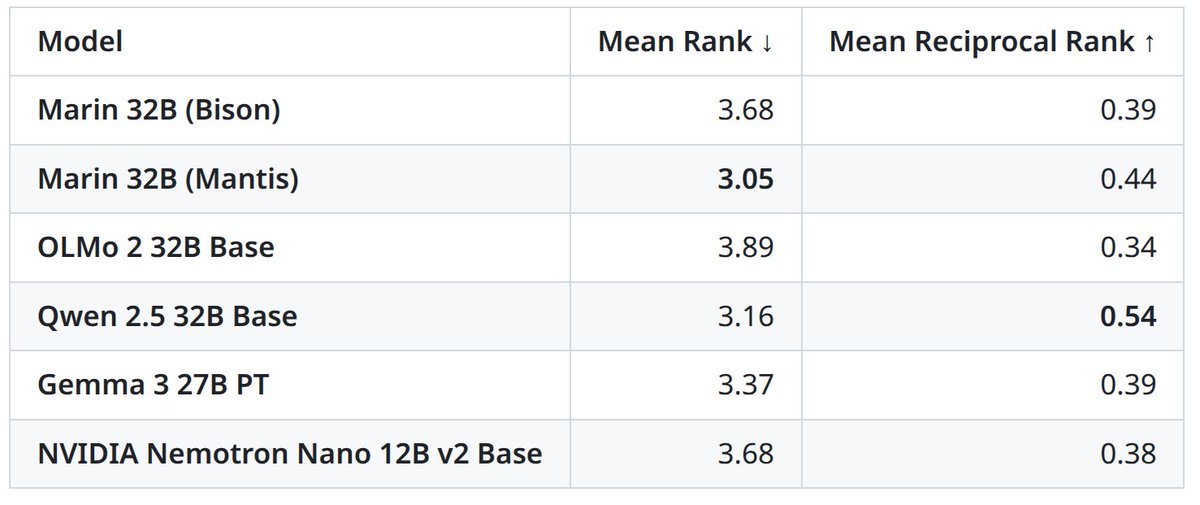
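To make the schema-discovery behavior in the code-agent post concrete, here is a minimal sketch in Python, the only tool the code-agent variant exposes. Everything in it is hypothetical: `Reservation`, `ReservationDB`, its `update` method, and the field names are illustrative stand-ins, not the actual Tau Bench 2 Airlines data model or Snorkel's harness. It replays the failure-then-recovery pattern described above: a write with a guessed field name fails, the agent prints the record's attributes with the built-in `vars()`, then retries with the discovered name.

```python
from dataclasses import dataclass, field


# Hypothetical stand-in for an airline reservation record
# (NOT the real Tau Bench 2 schema).
@dataclass
class Reservation:
    reservation_id: str
    user_id: str
    cabin: str
    flights: list = field(default_factory=list)


class ReservationDB:
    """Hypothetical in-memory store the agent can only reach via Python."""

    def __init__(self):
        self.reservations = {}

    def update(self, reservation_id, attr, value):
        # Write-operation: refuse attributes the record does not define.
        record = self.reservations[reservation_id]
        if attr not in vars(record):
            raise AttributeError(f"Reservation has no field {attr!r}")
        setattr(record, attr, value)


db = ReservationDB()
db.reservations["ABC123"] = Reservation("ABC123", "user_1", "economy", ["HAT001"])

# 1. Attempt a write without reading the schema first (guessed field name).
try:
    db.update("ABC123", "cabin_class", "business")
    first_attempt_ok = True
except AttributeError as err:
    first_attempt_ok = False
    print("write failed:", err)

# 2. Recover by printing every attribute of the record to discover the schema.
schema = sorted(vars(db.reservations["ABC123"]))
print("fields:", schema)

# 3. Retry the write using the discovered field name.
db.update("ABC123", "cabin", "business")
print("cabin now:", db.reservations["ABC123"].cabin)
```

In the tool-based original, a dedicated tool would hide step 2 entirely by hard-coding the valid fields; in the code-agent setting the model has to recover them itself, which is one plausible reading of why write-operations got harder.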

Marin 32B training crossed 1.5 trillion tokens today...