Amund Tveit

@atveit

Principal AI Engineer | Personal Opinions Only

Performance Hints

Over the years, my colleague Sanjay Ghemawat and I have done a fair bit of diving into performance tuning of various pieces of code. We wrote an internal Performance Hints document a couple of years ago as a way of identifying some general principles and we've recently published a version of it externally. We'd love any feedback you might have! Read the full doc at: abseil.io/fast/hints.html

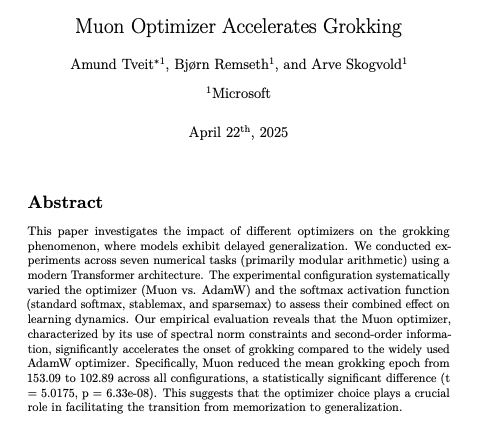

Witnessing the magic of "grokking" in action with the new JAX-native Tunix library! The port of Grokking (based on an implementation originally written in MLX) demonstrates how transformers suddenly "understand" modular arithmetic after initial memorization, jumping from 11% to 99% validation accuracy. 🔗 github.com/atsentia/jax-t… More about the Tunix library: github.com/google/tunix #JAX #DeepLearning #Tunix

What I think will happen is that 10x engineers become 100x engineers and 1x engineers become 0x, if that makes sense.

A breakthrough in quantum computing. Majorana 1 brings us closer to harnessing millions of potential qubits working together to solve the unsolvable—from new medicines to revolutionary materials—all on a single chip. #QuantumComputing #QuantumReady msft.it/6007UxEHz