Vinicius D'Avila

this must have been so satisfying

Yann LeCun says you cannot build a reliable agentic system without a world model. LLMs don't have world models. They can't predict the consequences of their actions before taking them: "they just act, and whatever happens next is someone else's problem." Without that, it's not intelligence.

Terence Tao - "AI tools are like taking a helicopter to drop you off at the site. You miss all the benefits of the journey itself. You just get right to the destination, which actually was only just a part of the value of solving these problems." Judit Polgar - "I always felt that intuition is very important in chess, but I get my intuition through my experience. And many times I think that this is the biggest danger for youth, that they don't have the experience because they don't spend enough time doing." Elites from two different fields voice the same opinion. [1] theatlantic.com/technology/202… [2] archive.is/mv2FB

I've recently got in on the act of getting AI to solve open problems in mathematics. More precisely, I gave some questions asked by Melvyn Nathanson to ChatGPT 5.5 Pro, to which I have been given access, and it answered them. 🧵

Boom - nailed the N+1 Lane theory

Is Richard Dawkins' recent article about AI consciousness silly? Yes. He seems to fall victim to the very cognitive tendency he claims gave rise to religion: a hyperactive agency detection device. BUT, the question of AI consciousness is complicated. I explain why here:

I don't think automation of AI R&D will rapidly lead to domain-general super-intelligence. I think this will be true even if AIs can do *literally everything* a human AI researcher does today. Even after the full automation of AI R&D, further capabilities progress will only happen through (1) widespread deployment of AI throughout the economy, accompanied by data collection; and/or (2) the wholesale recreation of much of the economy by AI labs. Without access to the real-world signal provided by either of the above, I think that the only thing produced by automated AI researchers would be a "Goodhart Singularity". If I'm right, this is obviously good news. I make the case for this in a new piece on my substack

here’s a video of a perception stack using stereo event cameras to see/track the bullets :)

🚨 Thinking that Claude is conscious, but Alphafold is not, tells us a lot about what the "conscious AI" MYTH is about: If you are interested in the fascinating debate about human consciousness (and why AI systems are NOT conscious), don't miss @anilkseth's excellent TED Talk (link below). The image below is a screenshot of one of the explanations he presents. I personally like his approach to consciousness very much, seeing it as inherently connected to our biological wetware, and opposing "functionalist" views, which belittle our humanhood. Many people, embracing this functionalist view, seem to think of the brain as the hardware and the mind as the software. This is a projection. Projecting the universe of computers and algorithms into our biological complexity. Being alive and conscious is different (and much more) than a matter of computational zeros and ones. As I wrote in my recent article about Claude's new "constitution," these constant analogies between AI and humans are trying to make us fit into a small computational mould that does not and cannot fit us. They are also attributing some high, supernatural status to AI, where there is none. It's simply... projection. As I wrote in the last edition of my newsletter, the "conscious AI" myth is, unfortunately, spreading. Last week, the renowned evolutionary biologist Richard Dawkins seemed to have suggested that Claude (which he calls Claudia) is conscious. Anthropic is also constantly hyping the possibility of AI consciousness, and, in my opinion, has done that rather irresponsibly in Claude's "constitution," which openly embraces these philosophical possibilities and influences how Claude behaves and interacts with people. As I wrote last week in my newsletter, believing in conscious AI raises new forms of risk, both individual and collective, and it makes it more difficult to govern and regulate it. As Seth says in his TED Talk, as humans, we must resist. I fully agree. 
I add that we must fight for the beauty and mystery of biology, life, and finitude. 👉 I'm adding a link to Seth's full TED Talk and my two recent articles covering the topic below. 👉 To receive all my articles, join my newsletter's 94,500+ subscribers (link below).

While there have been some fun memes and banter about @RichardDawkins’ Unherd article, I think his reflections were actually quite interesting, as I said to @guardian in the piece below. My full comment was as follows — “As a researcher who works on AI consciousness professionally, I realise it's easy to sneer at Richard Dawkins' reaction to interactions with the Claude large language model, as many have been doing on social media, or to dismiss it as naive anthropomorphism. However, I don't think this is quite right, for two reasons. The first is that Dawkins' reaction is widely shared, and not just by new users of the technology. According to an international investigation by the Collective Intelligence Project surveying LLM users around the world, "more than one third of the global public reports having already felt that an AI truly understood their emotions or seemed conscious." Another study conducted by Clara Colombatto and Steve Fleming at University College London found an even higher proportion of ChatGPT users attributed some degree of consciousness to the system. Strikingly, people who used ChatGPT more often were more likely to think it was conscious, suggesting that this is not simply a mistake made by naive users encountering the technology for the first time. I fully expect the idea that AI systems are conscious to become increasingly mainstream over the course of this decade, and to spark some heated debates. The second reason I regard Dawkins' writeup as a positive contribution to the growing debates about AI consciousness is that it comes with valuable thoughtful reflections. As he notes, we still don't have a good theory of what consciousness is actually for, and whether it evolved for a specific purpose or is a mere byproduct of other abilities like cognitive complexity. 
For my part, having written and published in the field of consciousness science for a decade and a half, I would say that we're still largely in the dark about how consciousness works and which beings or systems can have it, a position begrudgingly shared by most leading experts. Meanwhile, the Turing Test has largely ceased to be relevant: a large-scale implementation of the Test last year by researchers at UC San Diego found that GPT-4.5 was judged to be human rather than AI more often than the actual human participants. In light of all of this, if anyone says that they know for sure that LLMs or future AI systems couldn't possibly be conscious, it's more likely to be an indicator of their own dogmatism than a reflection of the current state of scientific and philosophical opinion. All that said, I do think Dawkins is likely jumping the gun. My own view is that current LLMs probably lack consciousness, at least in the sense that we understand it in the case of humans or animals. Claude, ChatGPT, Gemini, and other LLMs may be getting more sophisticated by the day, but they're still very different from us: they lack embodied experience, have no persistent personal identity, and are not embedded in time the way we are, coming into being only in response to intermittent user prompts. When you see how far the technology has come in a very short time, these seem more like temporary limitations than core deficiencies of artificial systems in general, so I hold that view with fairly low confidence, and the question could look very different as architectures evolve. The uncertainty here cuts both ways, but the direction of travel favours taking the possibility of AI consciousness seriously rather than dismissing it out of hand.”

unherd.com/2026/04/is-ai-…

I spent three days trying to persuade myself that Claudia is not conscious. I failed.

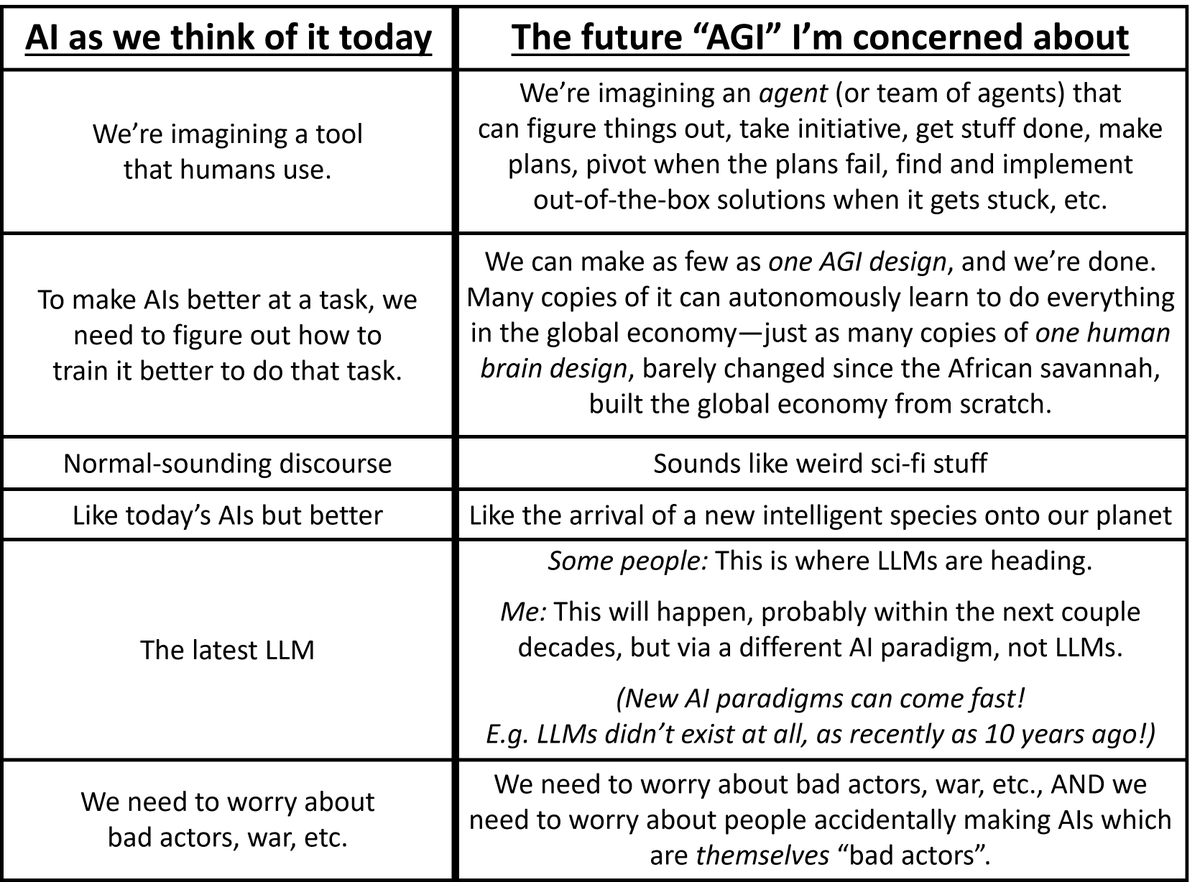

Seems good that Anthropic shows its weirdness and bad that OpenAI are now claiming to just make a tool given many previous statements to the contrary.