Jinpeng Wang

182 posts

@awinyimgprocess

Tenure-track Professor at Central South University; NUS PhD. Focus on multi-modality learning and data-centric AI.

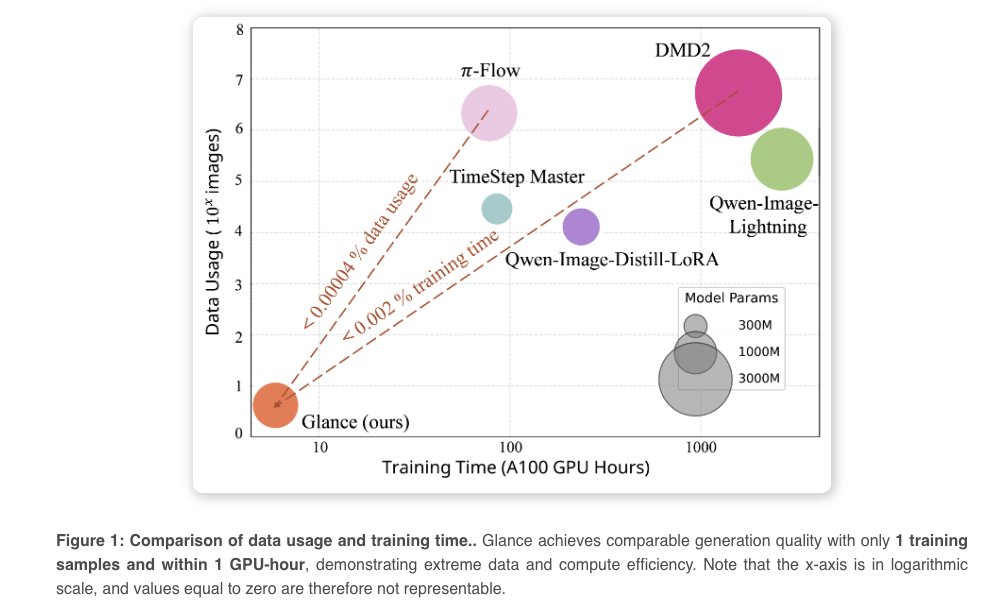

Thanks AK for sharing our work. We speed up Qwen-Image and Flux inference from 50 steps to fewer than 10 steps. One sample is enough to speed up a diffusion model in a specific domain. Paper link: arxiv.org/abs/2512.02899 Code link: github.com/CSU-JPG/Glance

Glance: Accelerating Diffusion Models with 1 Sample

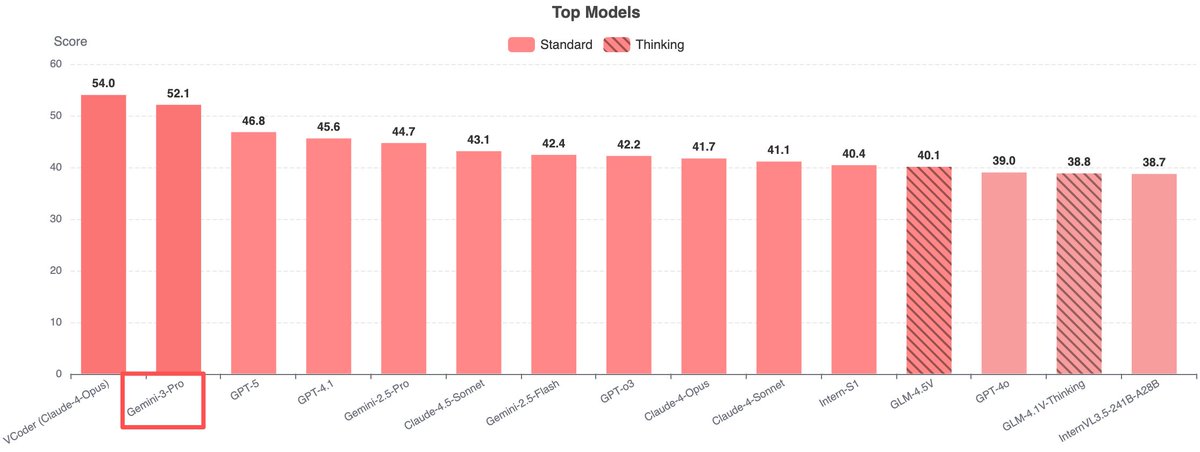

VCode app is out: SVG as Symbolic Visual Representation. Turn any image into symbolic SVG.

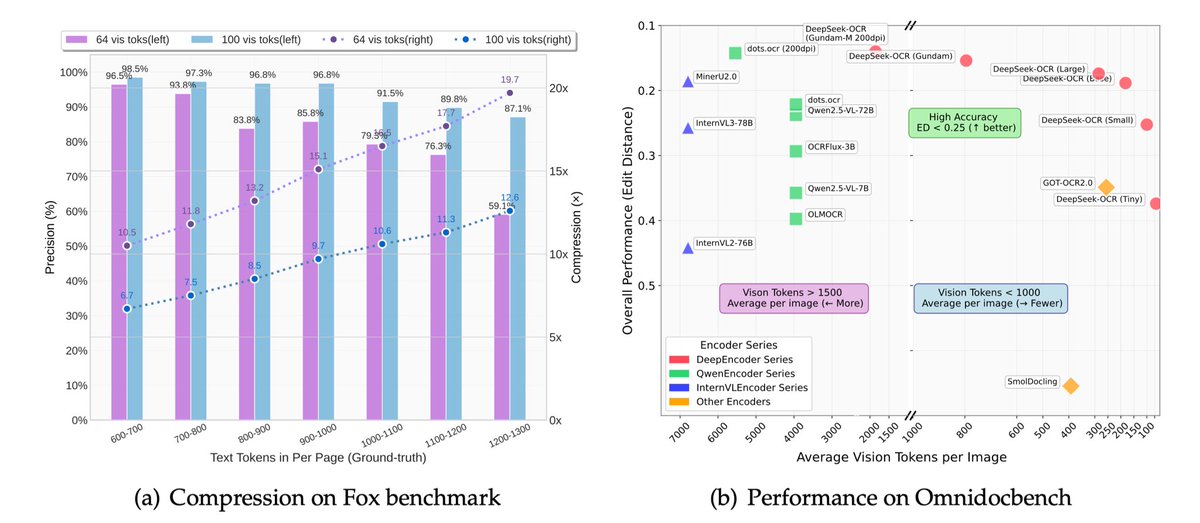

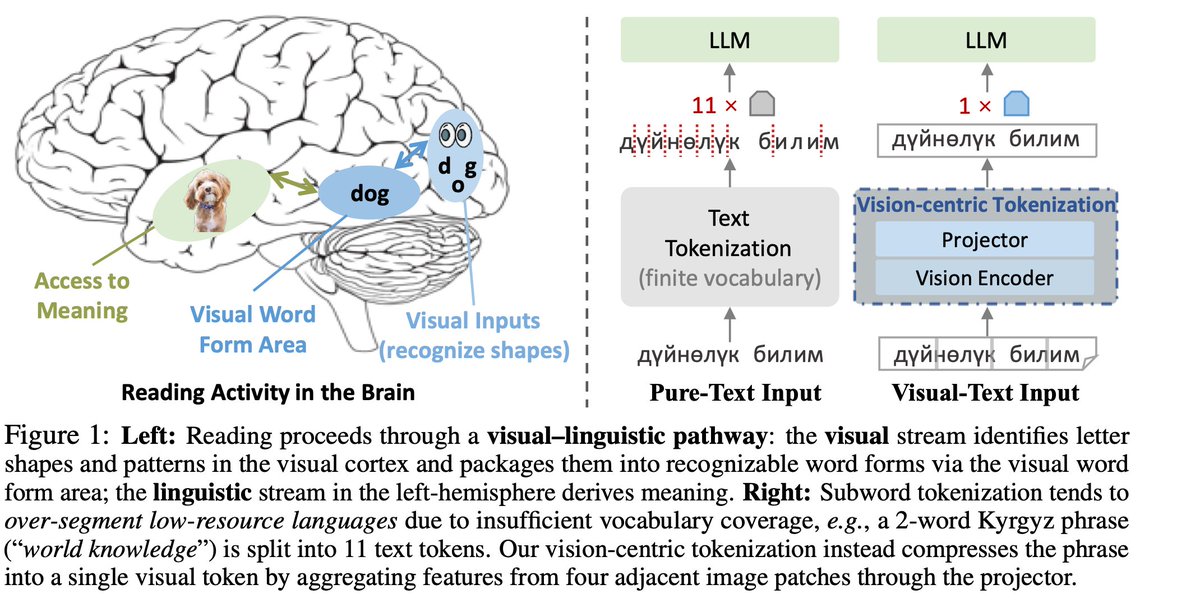

As LLMs grow to trillions of parameters and context windows stretch to hundreds of thousands of tokens, compute costs explode. Vision Centric Token Compression (Vist) offers a fix that mimics human reading: it converts distant context into images for a vision encoder to skim, while the LLM focuses on nearby text. Matching full-context accuracy with 2.3× fewer tokens, Vist cuts FLOPs by 16% and memory by 50%, and outperforms prior compression methods by 7.6% across major benchmarks. Vision-centric Token Compression in Large Language Model (NUST, CSU, NFU). Paper: arxiv.org/abs/2502.00791 Our report: mp.weixin.qq.com/s/zYnxpBhRsndl… 📬 #PapersAccepted by Jiqizhixin

VCode: a Multimodal Coding Benchmark with SVG as Symbolic Visual Representation