Ayash retweeted

Ayash

162 posts

@ayashtwtt

everything has an end except bananas which have two.

Joined July 2020

395 Following · 94 Followers

India when?

Avalanche Team1@AvaxTeam1

Onchain whisky night with @SuntoryWeb3. An amazing time with great people who used @coreapp & JPYC stablecoin to pay for drinks on the Avalanche C-Chain. Their "Proof-of-Consumption" was recorded entirely onchain. Looking forward to seeing everyone again at the next event 🔺🇯🇵


Ayash retweeted

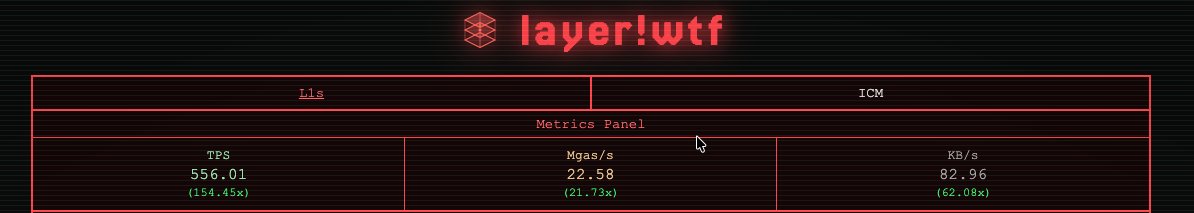

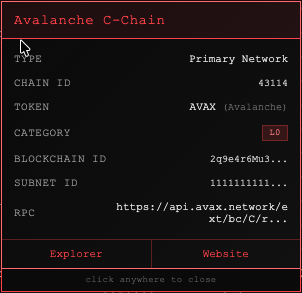

Introducing layer1.wtf

A live performance monitor for Avalanche L1s.

Direct RPC data.

Updated every 5 seconds.

See what’s actually happening on-chain.

layer1.wtf


If you're a crypto builder, read this.

Applications for the Build Games, a $1,000,000 builder competition on Avalanche, just opened.

- all-star judges

- designed for founders

- any idea on Avalanche is eligible

Read more and apply here: build.avax.network/build-games?re…


Ayash retweeted

Avacado Docs just dropped

avacado.app/docs

Check the docs and tell us what you think.


Ayash retweeted

I am becoming progressively more worried for humanity because of the terrifying improvements in AI-generated media.

From blatant misinformation to even the recent commercial adaptations of AI (acquisition of Suno into the music industry) WHICH NOBODY NEEDS.

We need artists to thrive - not feel discouraged.

Please be wary of what you see or hear online. Contemporary media makes it feel like nothing is real anymore.

AI sucks and we need to stand up against it.


Ayash retweeted

My son called me crying

“Dad, school ended 4 hours ago. I’m still waiting for you to pick me up!”

Click.

I hung up.

Weak pitch. No clear call to action. Didn’t give me a reason to care.

I went back to cold emailing billionaires.

He’ll have to learn a better way to get my attention if he wants my help.


Ayash retweeted

She dumped me last night.

Not because I don't listen.

Not because I'm always on my phone.

Not even because I forgot our anniversary (twice).

But because,

in her exact words:

"You only pay attention to the parts of what I say that you think are important."

I stared at her for a moment and realized...

She just perfectly described the attention mechanism in transformers.

Turns out I wasn't being a bad boyfriend. I was being mathematically optimal.

See, in conversations (and transformers), you don't give equal weight to every word. Some words matter more for understanding context. Attention figures out exactly HOW important each word should be.

Here's the beautiful math:

Attention(Q, K, V) = softmax(QK^T / √d_k)V

Breaking it down:

Q (Query): "What am I looking for?"

K (Key): "What info is available?"

V (Value): "What is that info?"

d_k: Key dimension (for scaling)

Think library analogy:

You have a question (Query). Books have titles (Keys) and content (Values). Attention finds which books are most relevant.

Step-by-step with "The cat sat on the mat":

Step 1: Create Q, K, V

Each word → three vectors via learned matrices W_Q, W_K, W_V

For "cat":

Query: "What should I attend to when processing 'cat'?"

Key: "I am 'cat'"

Value: "Here's cat info"
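Step 1 can be sketched in a few lines of plain Python. Everything numeric here is made up for illustration: the 3-dimensional embedding for "cat" and the three 3x2 matrices are hypothetical stand-ins for values a real model would learn during training.

```python
# Toy sketch: project one word embedding into query, key, and value vectors.
# All numbers are invented; real models learn W_Q, W_K, W_V from data.

def matvec(x, W):
    """Multiply row vector x (length n) by matrix W (n rows, m columns)."""
    return [sum(x[i] * W[i][j] for i in range(len(x))) for j in range(len(W[0]))]

cat_embedding = [1.0, 0.0, 2.0]  # hypothetical embedding for "cat"

# Hypothetical learned projection matrices, each 3x2.
W_Q = [[1.0, 0.0], [0.0, 1.0], [0.5, 0.5]]
W_K = [[0.0, 1.0], [1.0, 0.0], [0.0, 1.0]]
W_V = [[1.0, 1.0], [0.0, 0.0], [0.0, 1.0]]

q_cat = matvec(cat_embedding, W_Q)  # "what am I looking for?"
k_cat = matvec(cat_embedding, W_K)  # "what do I offer?"
v_cat = matvec(cat_embedding, W_V)  # "what is my content?"

print(q_cat, k_cat, v_cat)
```

The same three matrices are applied to every word in the sentence, so one embedding per word becomes one query, one key, and one value per word.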

Step 2: Calculate scores

QK^T = how much each word should attend to others

Processing "sat"? High similarity with "cat" (cats sit) and "mat" (where sitting happens).
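A rough sketch of the scoring step for the word "sat", assuming tiny 2-dimensional query and key vectors. The vectors are invented so that "cat" and "mat" line up with the query better than "sat" itself does; a trained model would produce its own values.

```python
# Toy sketch: raw attention scores are dot products between one query
# and every key. All vectors below are invented for illustration.

def dot(a, b):
    """Dot product of two equal-length vectors."""
    return sum(x * y for x, y in zip(a, b))

q_sat = [1.0, 1.0]  # hypothetical query vector for "sat"

keys = {  # hypothetical key vectors
    "cat": [2.0, 0.0],
    "sat": [0.5, 0.5],
    "mat": [0.0, 2.0],
}

# One row of QK^T: the score of "sat" against each word.
scores = {word: dot(q_sat, k) for word, k in keys.items()}
print(scores)
```

Doing this for every query at once is exactly the matrix product QK^T: row i holds the scores of word i against all words.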

Step 3: Scale by √d_k

Prevents dot products from getting too large, keeps softmax balanced.
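To see why the scaling matters, here is a small sketch comparing softmax with and without the √d_k division. The two raw scores and d_k = 64 are assumed values chosen to make the effect visible, not numbers from any real model.

```python
import math

def softmax(scores):
    """Numerically stable softmax over a list of floats."""
    m = max(scores)
    exps = [math.exp(s - m) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]

d_k = 64                  # assumed key dimension
raw_scores = [16.0, 8.0]  # assumed raw dot products for two words

unscaled = softmax(raw_scores)
scaled = softmax([s / math.sqrt(d_k) for s in raw_scores])

print("without scaling:", unscaled)  # almost all weight on the first word
print("with scaling:   ", scaled)    # second word still gets a real share
```

Without scaling, the softmax is close to one-hot, so nearly all the gradient signal for the other words vanishes. Dividing by √d_k keeps the scores in a range where softmax stays responsive.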

Step 4: Softmax

Converts scores to probabilities that sum to 1 (illustrative values):

"cat": 0.4 (subject)

"sat": 0.3 (action)

"mat": 0.2 (location)

"on": 0.05 (preposition)

"the": 0.05 (article)
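A minimal softmax sketch over the whole sentence. The scaled scores are hypothetical, so the resulting weights will not match the illustrative numbers above; the point is only that the outputs are positive and sum to 1.

```python
import math

def softmax(scores):
    """Turn arbitrary scores into positive weights that sum to 1."""
    m = max(scores)  # subtract the max for numerical stability
    exps = [math.exp(s - m) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]

tokens = ["the", "cat", "sat", "on", "the", "mat"]
scaled_scores = [0.1, 2.0, 1.5, 0.2, 0.1, 1.8]  # hypothetical values

weights = softmax(scaled_scores)
for token, w in zip(tokens, weights):
    print(f"{token:>4}: {w:.3f}")
```

Note that "the" appears twice in the sentence, so it gets two separate weights, one per position.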

Step 5: Weight values

Multiply each word's value by attention weight, sum up. Now "sat" knows it's most related to "cat" and "mat".
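Putting the five steps together, a minimal pure-Python sketch of softmax(QK^T / √d_k)V might look like this. Matrices are plain lists of rows, and the example inputs are invented; real implementations use batched tensor libraries rather than Python loops.

```python
import math

def softmax(scores):
    m = max(scores)
    exps = [math.exp(s - m) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]

def attention(Q, K, V):
    """Scaled dot-product attention. Q, K, V are lists of row vectors."""
    d_k = len(K[0])
    output = []
    for q in Q:
        # Steps 2 and 3: dot product with every key, scaled by sqrt(d_k).
        scores = [sum(a * b for a, b in zip(q, k)) / math.sqrt(d_k) for k in K]
        # Step 4: softmax turns scores into weights.
        weights = softmax(scores)
        # Step 5: weighted sum of the value vectors.
        output.append([sum(w * v[j] for w, v in zip(weights, V))
                       for j in range(len(V[0]))])
    return output

K = [[1.0, 0.0], [0.0, 1.0], [-1.0, 0.0]]
V = [[1.0, 0.0], [0.0, 1.0], [2.0, 2.0]]

# A zero query matches every key equally, so the output is the mean of V.
out_uniform = attention([[0.0, 0.0]], K, V)
# A query pointing hard at the first key returns roughly the first value.
out_peaked = attention([[100.0, 0.0]], K, V)
print(out_uniform, out_peaked)
```

The two queries show the "dimmer switch" at its extremes: equal attention to everything, or nearly all attention on a single word.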

Multi-Head Magic:

Transformers do this multiple times in parallel. As an illustration, different heads might pick up on:

Head 1: Subject-verb relationships

Head 2: Spatial ("on", "in", "under")

Head 3: Temporal ("before", "after")

Head 4: Semantic similarity

Each head learns different relationship types.
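A simplified sketch of the multi-head idea: split each vector into equal chunks, run attention on each chunk independently, then concatenate. This is an assumption-heavy toy. Real transformers give every head its own learned projections and apply a final output projection, both of which are left out here, and the helper names `split_heads` and `multi_head_attention` are hypothetical.

```python
import math

def softmax(scores):
    m = max(scores)
    exps = [math.exp(s - m) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]

def attention(Q, K, V):
    """Scaled dot-product attention on lists of row vectors."""
    d_k = len(K[0])
    out = []
    for q in Q:
        scores = [sum(a * b for a, b in zip(q, k)) / math.sqrt(d_k) for k in K]
        w = softmax(scores)
        out.append([sum(wi * v[j] for wi, v in zip(w, V))
                    for j in range(len(V[0]))])
    return out

def split_heads(X, num_heads):
    """Split every row of X into num_heads equal-width chunks."""
    if len(X[0]) % num_heads != 0:
        raise ValueError("vector width must be divisible by num_heads")
    d_head = len(X[0]) // num_heads
    return [[row[h * d_head:(h + 1) * d_head] for row in X]
            for h in range(num_heads)]

def multi_head_attention(Q, K, V, num_heads):
    """Run attention per head, then concatenate head outputs per token.
    Simplification: no per-head learned projections, no output projection."""
    heads = [attention(q, k, v)
             for q, k, v in zip(split_heads(Q, num_heads),
                                split_heads(K, num_heads),
                                split_heads(V, num_heads))]
    return [[x for head in heads for x in head[i]] for i in range(len(Q))]

# Self-attention over 3 tokens with 4-dim vectors and 2 heads (toy data).
X = [[1.0, 0.0, 0.0, 0.0],
     [0.0, 1.0, 0.0, 0.0],
     [1.0, 1.0, 0.0, 0.0]]
out = multi_head_attention(X, X, X, num_heads=2)
print(out)
```

Each head only ever sees its own slice of the vectors, which is what lets different heads settle on different kinds of relationships during training.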

Why This Changed Everything:

Before: RNNs = reading with flashlight (one word at a time, forget the beginning)

After: Attention = floodlights on entire sentence with dimmer switches

This is why ChatGPT can:

Remember 50 messages ago

Know "it" refers to something specific

Understand "bank" = money vs river based on context

The Kicker:

Models learn these patterns from data alone. Nobody programmed grammar rules. It figured out language structure just by predicting next words.

Attention is how AI learned to read between the lines.

Just like my therapist helped me understand my focus patterns, maybe understanding transformers helps us see how we decide what matters.

Now if only I could implement multi-head attention in dating...

Still waiting for "scaled dot-product listening" to be invented.


Ayash retweeted