Balakumar Sundaralingam

@balakumar_

Senior Research Scientist @NVIDIA | Robotics PhD @UUtah | Robot Manipulation

Most capable generalist robotics models today are closed or, at best, open-weights. But robotics won’t reach its ChatGPT moment without real openness. That GPT moment was built on years of open tools and datasets, such as Python, PyTorch, ImageNet, and more, that let researchers inspect, reproduce, and build. Today, we’re introducing MolmoAct 2: a fully open-source action reasoning model for real-world robotics. We rethought and reshaped everything! 🧵👇

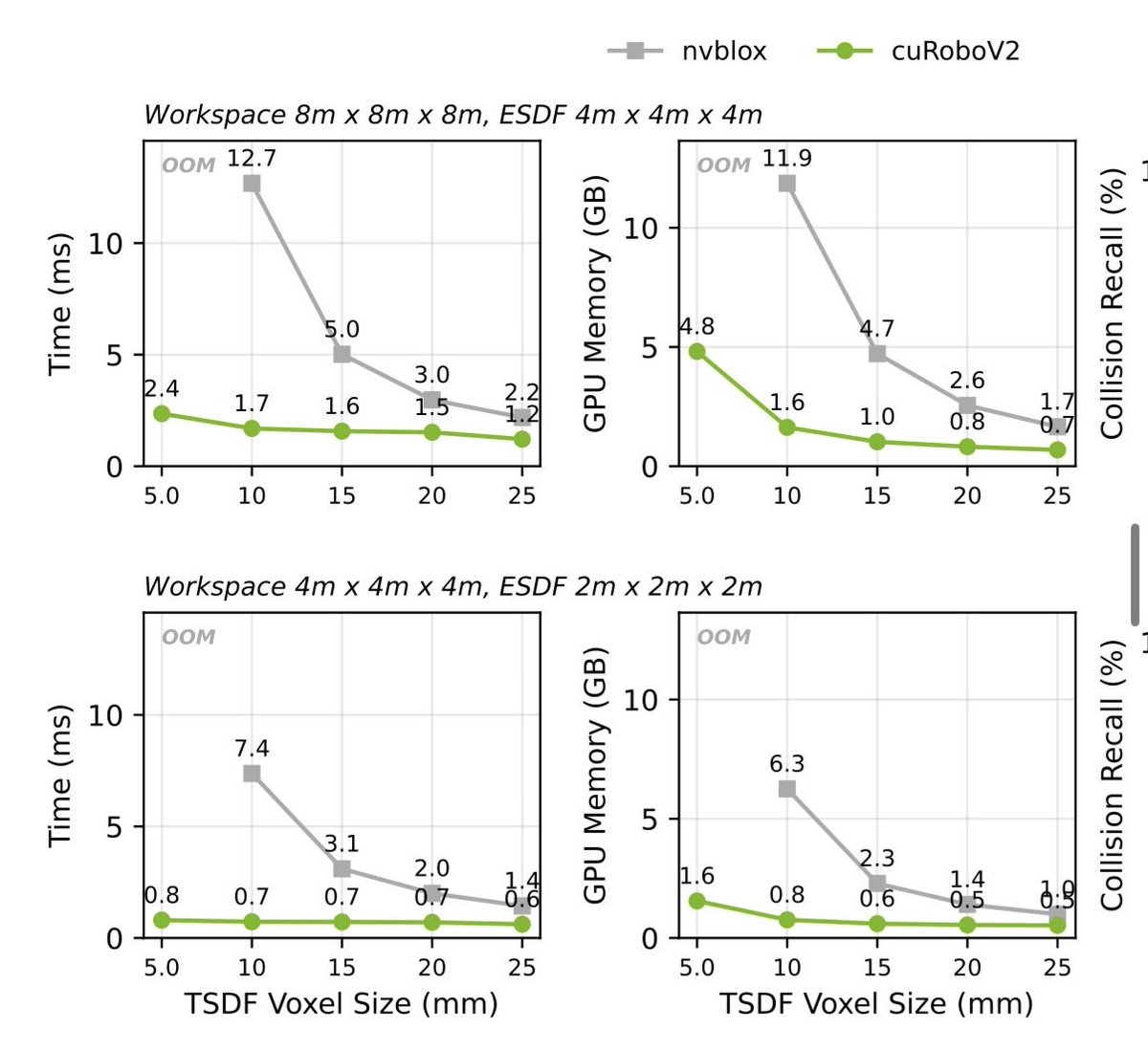

@pablovelagomez1 CuRoboV2 implements a TSDF mapper for manipulation (fixed workspace, 5mm voxels, multiple cameras). We designed it for performance (all ops on GPU) and memory efficiency (fp16). This leads to 10x faster rgbd->esdf while using 8x less memory (page 31).
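
To get a feel for why voxel size and fp16 storage matter for a fixed workspace, here is a rough back-of-the-envelope sketch. The 1 m cube workspace and the `voxel_grid_bytes` helper are assumptions made for illustration only; they are not taken from CuRoboV2, and the sketch counts a single distance value per voxel, so it does not attempt to reproduce the 8x figure quoted above.

```python
def voxel_grid_bytes(extent_m, voxel_size_m, bytes_per_voxel):
    """Return the memory (in bytes) of a dense voxel grid.

    Hypothetical helper for illustration; not part of any cuRobo API.
    """
    # Number of voxels along each axis, rounded to the nearest integer.
    dims = [round(side / voxel_size_m) for side in extent_m]
    n_voxels = dims[0] * dims[1] * dims[2]
    return n_voxels * bytes_per_voxel


# Assumed workspace: a 1 m x 1 m x 1 m cube (not stated in the post).
extent = (1.0, 1.0, 1.0)
voxel = 0.005  # 5 mm voxels, as mentioned in the post

fp16_bytes = voxel_grid_bytes(extent, voxel, 2)  # 2 bytes per fp16 value
fp32_bytes = voxel_grid_bytes(extent, voxel, 4)  # 4 bytes per fp32 value

print(f"fp16: {fp16_bytes / 1e6:.0f} MB, fp32: {fp32_bytes / 1e6:.0f} MB")
```

Under these assumptions the grid holds 200 x 200 x 200 = 8 million voxels, so storing one distance value per voxel in fp16 halves the footprint relative to fp32. A real mapper also stores weights and possibly color per voxel, which this sketch ignores.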

Should legged robots use RL or trajectory optimization? #icra2024

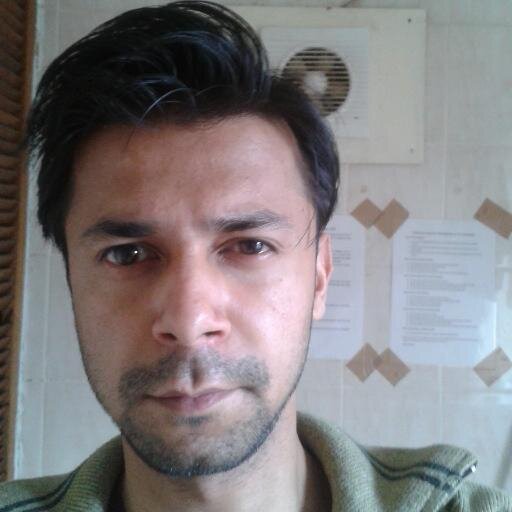

🤖 cuRobo, a new #CUDA accelerated motion generation toolkit, can solve complex #robotics problems in milliseconds. ⚡ It includes implementations of kinematics, collision checking, numerical and trajectory optimization, and more. 👀 #NVIDIAResearch code nvda.ws/3MxDmNG

We are excited to release our work on DexPilot, a markerless, glove-free, vision-based teleoperation system for a dexterous robot hand-arm setup. The PDF is here: github.com/ankurhanda/dex… More videos: sites.google.com/view/dex-pilot youtu.be/qGE-deYfb8I