Colin Kealty

@bartowski1182

LLM Enthusiast https://t.co/FadJBzEsVw https://t.co/9JIEKgsIMh https://t.co/lYSGzQBmuP

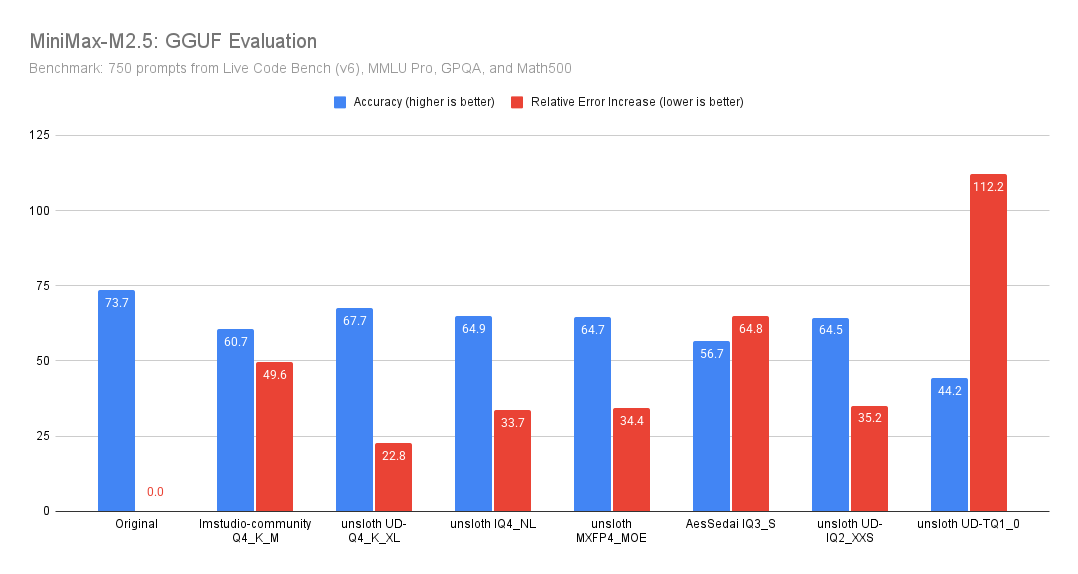

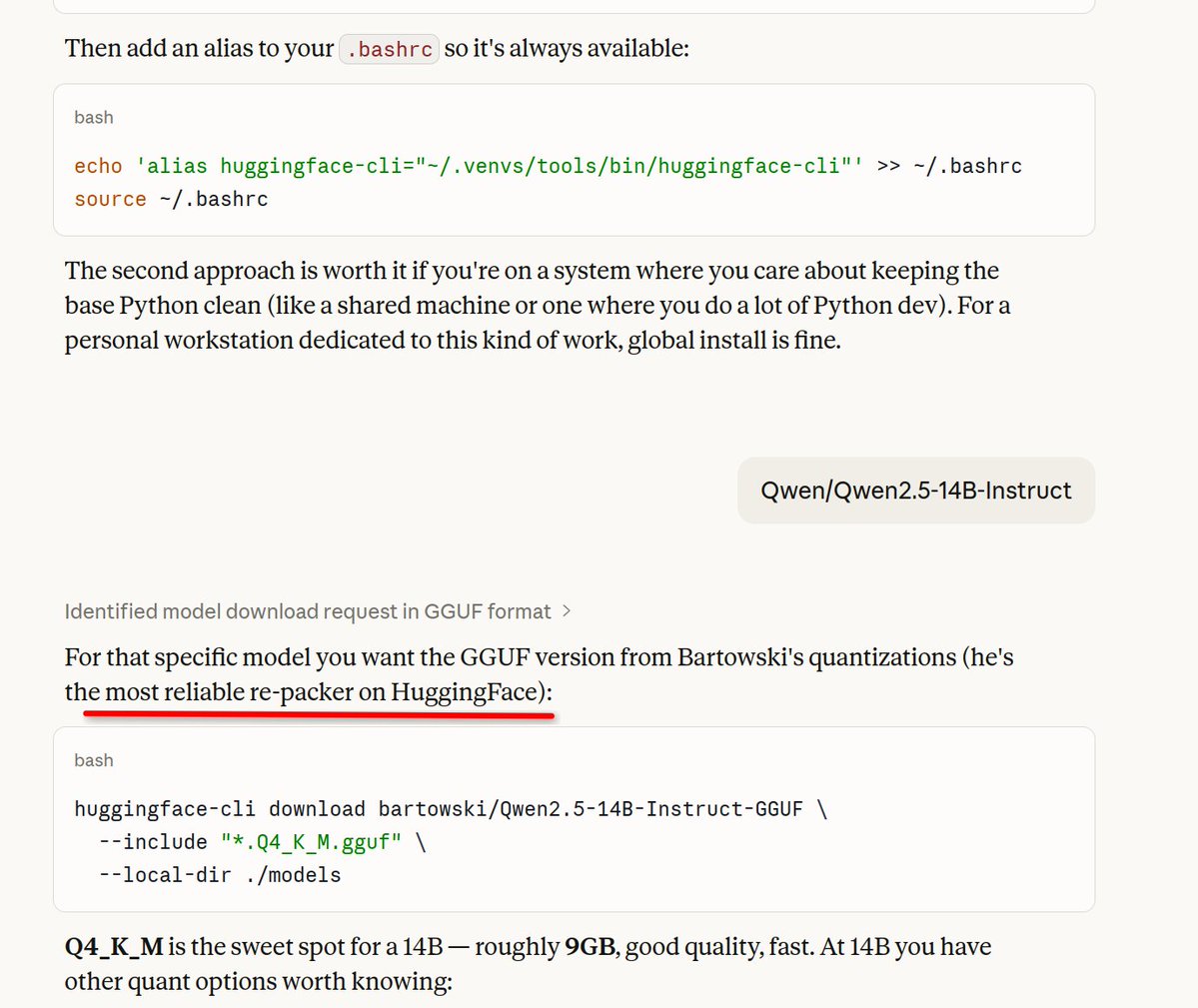

Here’s a more complete evaluation of GGUF variants of Qwen3.5 (models by @UnslothAI), and it’s way better than I expected.

- Qwen3.5 is very robust to Unsloth quantization
- TQ1_0 preserves the original model’s accuracy extremely well
- Most of the degradation is on MMLU Pro (meaning that the model lost a bit of its world knowledge)
- At 94 GB, the TQ1_0 reduces memory usage by 700 GB (!)

I ran the eval at temperature = 0, but TQ1_0 looked so strong that I double-checked with temperature = 0.6 and top_p = 0.95, and the results were more or less the same.

Note: the goal of this eval is only to measure the degradation relative to the original model. It does not tell you how good the model is at the tasks in absolute terms, at least, not directly.

For comparison, 94 GB is about what a standard 47B-parameter model would consume. That puts Qwen3.5 (TQ1_0) in “best model under 100 GB” territory.
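
A quick sanity check on the size arithmetic, as a rough sketch. It assumes "standard" means 16-bit weights (2 bytes per parameter) and 1 GB = 10^9 bytes. Neither assumption is stated in the post. The original model size is inferred from the post's two figures (94 GB plus 700 GB saved), not taken from a model card, and the helper name `weights_gb` is made up for illustration.

```python
# Assumption: a "standard" model stores weights in 16 bits (BF16/FP16).
BYTES_PER_PARAM_16BIT = 2
GB = 1e9  # assumption: decimal gigabytes


def weights_gb(params_billions: float, bytes_per_param: float = BYTES_PER_PARAM_16BIT) -> float:
    """Approximate weight-only memory footprint in GB (ignores KV cache and activations)."""
    return params_billions * 1e9 * bytes_per_param / GB


# Figures quoted in the post.
tq1_0_gb = 94
savings_gb = 700

# Inferred, not quoted: size of the unquantized model implied by those two numbers.
implied_original_gb = tq1_0_gb + savings_gb

# A 47B-parameter model at 16 bits per weight.
equivalent_dense_gb = weights_gb(47)

# Fraction of the implied original footprint that TQ1_0 removes.
reduction_fraction = savings_gb / implied_original_gb

print(f"47B params at 16-bit: {equivalent_dense_gb:.0f} GB")
print(f"Implied original size: {implied_original_gb} GB")
print(f"Reduction: {reduction_fraction:.1%}")
```

Under these assumptions the 47B comparison lines up with the 94 GB figure, and the quantized file is roughly an eighth of the implied original size.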