basvanopheusden

@basvanopheusden

Research at OpenAI, previously at @imbue_ai and the @cocosci_lab at Princeton. All opinions my own.

🚨New preprint! LLM teams are being deployed at scale, yet we lack the tools to predict when they’ll succeed, fail, or how to design them. Distributed computing faced the exact same questions and figured out how to answer them. We show those insights apply directly to LLMs 🧵👇

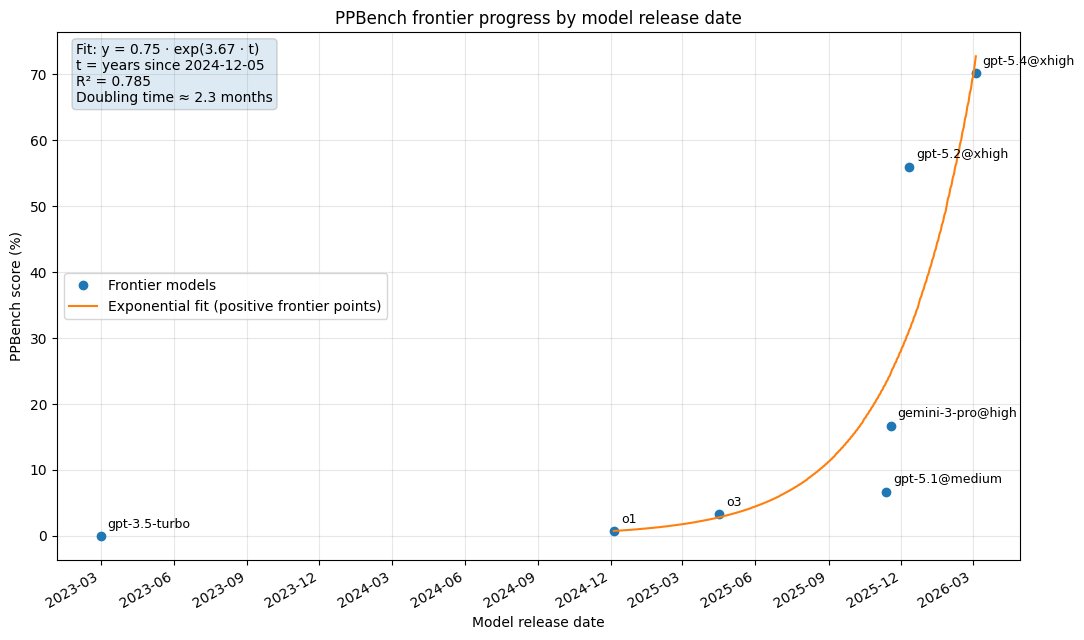

(1/N) Pencil Puzzle Bench is out! 51 LLMs tested on pencil puzzles (multi-step logical reasoning, verifiable at each step). Dataset: 62k unique puzzles, 94 types. Evaluation: covers 300 puzzles across 20 types. Best score: GPT 5.2@xhigh, 56%; half the puzzles are still unsolved.

GPT 5.4 xHigh cloned Flappy Bird (1 of 1) in 2 attempts. You can’t make this up. The only thing it struggled with, and that took multiple attempts, was putting the medal perfectly inside the circle on the end card (you can still tell it’s ever so slightly off). Nonetheless, this technology is just so cool to toy around with or to use for modding your favorite 2D games.

Sold out! But I had Claude create and deploy all 80 volumes of The Weights to the site as well-formatted PDFs, so you can download them for free if you want. 58,276 pages in total. 117 million floating point numbers. This is everything that makes GPT-1. weights-press.netlify.app

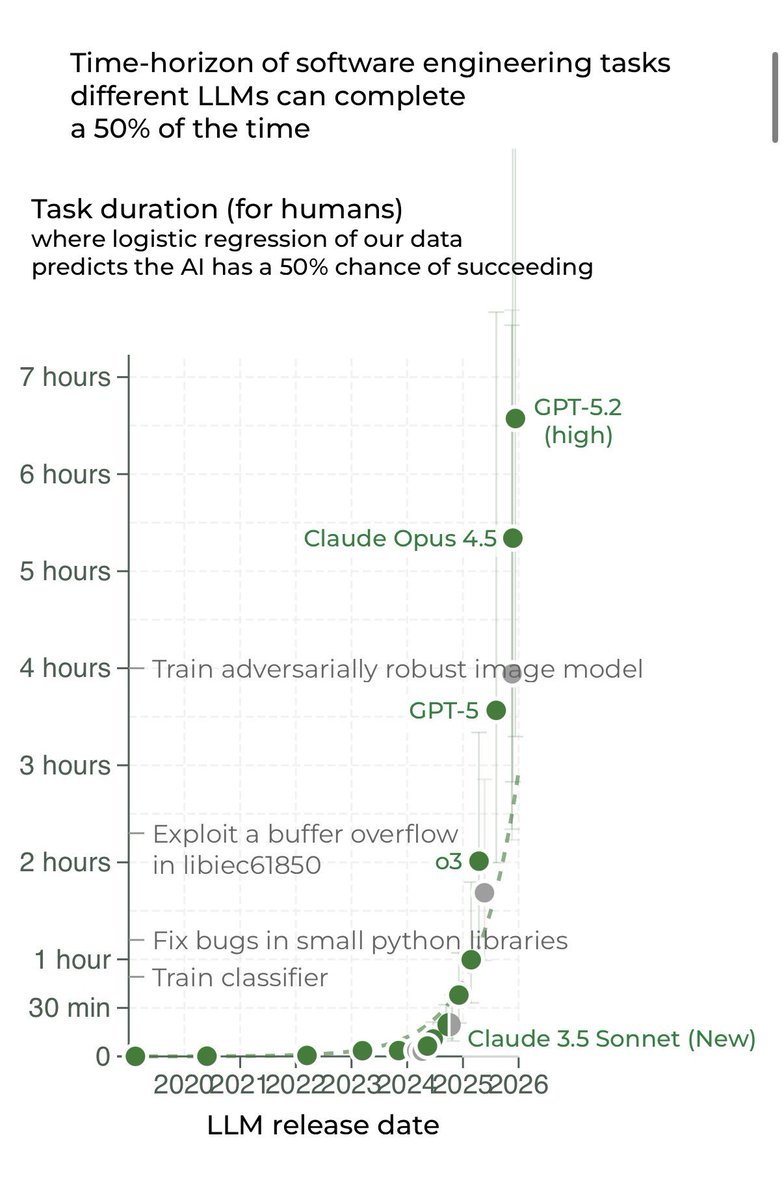

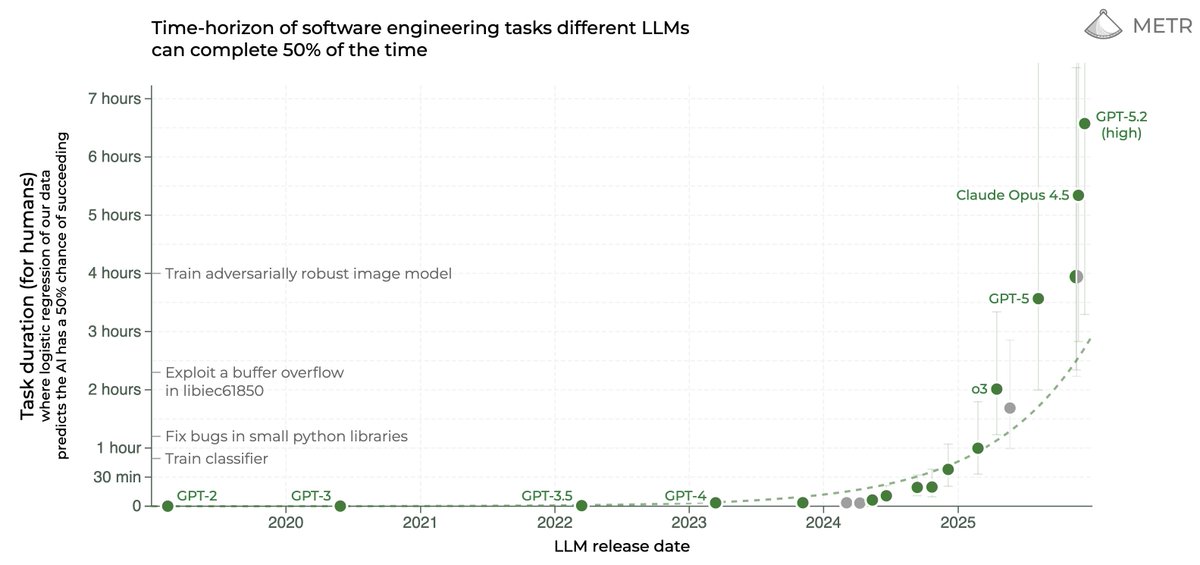

We estimate that GPT-5.2 with `high` (not `xhigh`) reasoning effort has a 50%-time-horizon of around 6.6 hrs (95% CI of 3 hr 20 min to 17 hr 30 min) on our expanded suite of software tasks. This is the highest estimate for a time horizon measurement we have reported to date.