jon becker

3.5K posts

@beckerrjon

any sufficiently advanced technology is indistinguishable from magic. senior software engineer @coinbase

Best GitHub repository for trading on Polymarket right now: TauricResearch/TradingAgents lets you build a complete trading system, from market analysis to the final position decision.

It's an entire trading firm made of LLM agents, each playing its own role:
• Fundamentals analyst evaluates data and metrics
• Sentiment analyst monitors market mood
• Technical analyst looks at patterns and indicators
• Bull and bear debate each other before every trade
• Risk manager evaluates the position and gives final approval

A position only opens after all agents have reached a decision.

How to use it on Polymarket:
• Pick an open market, e.g. "Will the Fed cut rates in June?"
• Feed the question to the agents instead of researching it yourself
• The fundamentals analyst pulls macro data; the sentiment analyst scans what's being said online
• Bull and bear argue the position from both sides
• Risk manager sizes the position based on the debate
• You get a reasoned decision with full context, not a gut feeling

Supports GPT-5, Claude, Grok, and Gemini; you can mix models for different tasks.

Repository: github.com/TauricResearch…
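To make the flow above concrete, here is a minimal sketch of how analyst estimates, a bull/bear check, and a risk manager could combine into one decision on a binary Polymarket market. It does not use the TradingAgents API: the analysts are hypothetical stand-in callables returning an estimated P(YES), the "debate" is reduced to checking how far apart the most optimistic and most pessimistic estimates are, and the fractional-Kelly sizing (`kelly_scale`, `min_edge`, `max_spread`) is an assumption of this sketch, not the repository's actual risk logic.

```python
from dataclasses import dataclass
from statistics import mean
from typing import Callable, List

# Hypothetical analyst role: takes the market question, returns an estimated P(YES).
# In TradingAgents each role is an LLM agent; here they are plain callables.
Analyst = Callable[[str], float]


@dataclass
class Decision:
    side: str        # "YES", "NO", or "PASS"
    stake: float     # amount of bankroll committed
    estimate: float  # blended estimate of P(YES)


def kelly_fraction(prob: float, price: float) -> float:
    """Kelly fraction for a share bought at `price` that pays 1 if it wins."""
    if prob <= price:
        return 0.0
    return (prob - price) / (1.0 - price)


def decide(question: str, analysts: List[Analyst], yes_price: float,
           bankroll: float, kelly_scale: float = 0.25,
           min_edge: float = 0.05, max_spread: float = 0.30) -> Decision:
    # Every analyst produces an independent estimate; the blended view is the mean.
    estimates = [analyst(question) for analyst in analysts]
    estimate = mean(estimates)

    # Stand-in for the bull/bear debate: the most optimistic estimate is the
    # bull case, the most pessimistic the bear case. If they are too far apart,
    # the "debate" has not converged and the risk manager refuses the trade.
    bull, bear = max(estimates), min(estimates)
    if bull - bear > max_spread:
        return Decision("PASS", 0.0, estimate)

    # Risk manager: trade only with enough edge over the market price,
    # sized as a fraction of full Kelly.
    no_price = 1.0 - yes_price
    if estimate - yes_price >= min_edge:
        stake = bankroll * kelly_scale * kelly_fraction(estimate, yes_price)
        return Decision("YES", stake, estimate)
    if (1.0 - estimate) - no_price >= min_edge:
        stake = bankroll * kelly_scale * kelly_fraction(1.0 - estimate, no_price)
        return Decision("NO", stake, estimate)
    return Decision("PASS", 0.0, estimate)


if __name__ == "__main__":
    analysts = [lambda q: 0.70, lambda q: 0.65, lambda q: 0.72]
    print(decide("Will the Fed cut rates in June?", analysts,
                 yes_price=0.55, bankroll=1000.0))
```

With those example analysts (mean estimate 0.69 against a YES price of 0.55), `decide` buys YES and stakes roughly 77.8 of a 1,000 bankroll. Scaling Kelly down to a quarter is a common way to limit the damage when the model's probability estimates are overconfident.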

Yesterday I posted about HIP4 being the first HIP to use HyperEVM. Full research → liquidterminal.xyz/hip4/home

HIP4 has no official documentation, no verified source, and no ABI. So we reverse-engineered the contract from bytecode and calldata on testnet.

What we mapped:
→ Full reconstructed ABI (selectors, signatures, access control)
→ Every event (DepositReceived, Claimed, ContestCreated, ContestFinalized, MerkleRootPublished)
→ All revert strings mined from bytecode
→ Storage layout (owner, mappings, initialization flags)
→ Complete contest lifecycle: createContest → deposit → publishMerkleRoot → claim → sweepUnclaimed
→ Bridge architecture L1↔EVM (asset index formula, outcome token mapping)
→ Real decoded testnet transactions
→ JS + Python code examples

Some findings:
- Pre-deployed at genesis, not a standard deployment
- renounceOwnership always reverts, so admin is permanent by design
- Merkle-based claims, with a 0.9% platform fee on the reward pool
- Three market types: custom, priceBinary, recurring

liquidterminal.xyz/hip4/home

Testnet only. This is v1, an early test from the team with a raw design, so some things might be off. Nothing is final. If you spot errors or have insights, feedback is very much appreciated. Hyperliquid.
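The findings mention Merkle-based claims and a 0.9% fee on the reward pool. As a rough illustration of how that pattern usually works, here is a sketch of building a Merkle tree of (account, amount) payouts, generating a proof, and verifying it the way a `claim()` call would, plus the fee arithmetic. The post does not describe HIP4's leaf encoding, hash ordering, or where the fee is taken, so everything here is an assumption: the sketch uses OpenZeppelin-style sorted-pair hashing, a hypothetical `address || amount` leaf, a fee taken once from the whole pool with floor rounding, and SHA-256 as a stand-in for keccak256 (which Python's standard library lacks). A verifier for the real contract would need keccak256 and the contract's actual encoding.

```python
import hashlib
from typing import List

FEE_BPS = 90  # 0.9% platform fee, in basis points (1 bp = 0.01%)


def h(data: bytes) -> bytes:
    # ASSUMPTION: SHA-256 stands in for keccak256, which the Python stdlib lacks.
    return hashlib.sha256(data).digest()


def leaf(account: bytes, amount: int) -> bytes:
    # Hypothetical leaf encoding: 20-byte address || 32-byte big-endian amount.
    return h(account + amount.to_bytes(32, "big"))


def hash_pair(a: bytes, b: bytes) -> bytes:
    # Sorted-pair hashing: proofs need no left/right flags.
    return h(a + b) if a <= b else h(b + a)


def build_levels(leaves: List[bytes]) -> List[List[bytes]]:
    """All tree levels, from the leaves up to the single root."""
    levels = [list(leaves)]
    while len(levels[-1]) > 1:
        cur = levels[-1]
        nxt = []
        for i in range(0, len(cur), 2):
            if i + 1 < len(cur):
                nxt.append(hash_pair(cur[i], cur[i + 1]))
            else:
                nxt.append(cur[i])  # an odd node is carried up unchanged
        levels.append(nxt)
    return levels


def proof_for(levels: List[List[bytes]], index: int) -> List[bytes]:
    """Sibling hashes from the leaf at `index` up to (not including) the root."""
    proof = []
    for level in levels[:-1]:
        sibling = index ^ 1
        if sibling < len(level):
            proof.append(level[sibling])
        index //= 2
    return proof


def verify(proof: List[bytes], root: bytes, leaf_hash: bytes) -> bool:
    """The check an on-chain claim() would run before paying out."""
    node = leaf_hash
    for sibling in proof:
        node = hash_pair(node, sibling)
    return node == root


def net_reward_pool(pool: int) -> int:
    """Pool left for winners after the fee, using integer math as a contract would."""
    return pool - pool * FEE_BPS // 10_000


if __name__ == "__main__":
    accounts = [bytes([i]) * 20 for i in range(1, 4)]  # dummy 20-byte addresses
    leaves = [leaf(a, amt) for a, amt in zip(accounts, [100, 250, 650])]
    levels = build_levels(leaves)
    root = levels[-1][0]  # what publishMerkleRoot would store
    print(verify(proof_for(levels, 1), root, leaves[1]))
    print(net_reward_pool(1_000_000))
```

Only the root goes on-chain, so publishing results costs the same regardless of how many winners there are; each claimant supplies their own proof. A real claim path would also have to mark each leaf as claimed to block double claims.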

I'll take a week to perform an interesting and probably stupid experiment: hunting for live EVM bugs by checking deployed bytecode. I'm allowing myself to cheat a little by checking the verified source to quickly understand what's going on. I'll also use a Yul decompiler for complex contracts and try a disassembler for simpler ones. There are critical contracts out there holding really big bags that are worth the effort. My main goal, though, is just to understand what's going on under the hood, and maybe get some inspiration for potential unknown vectors. I also want to learn what's needed to produce clean input for automated tools to perform further analysis. Honestly, I don't expect to find any bugs. It will be painful, but fun at the same time. I just love having the freedom to navigate any crazy paths I choose 🧙‍♂️

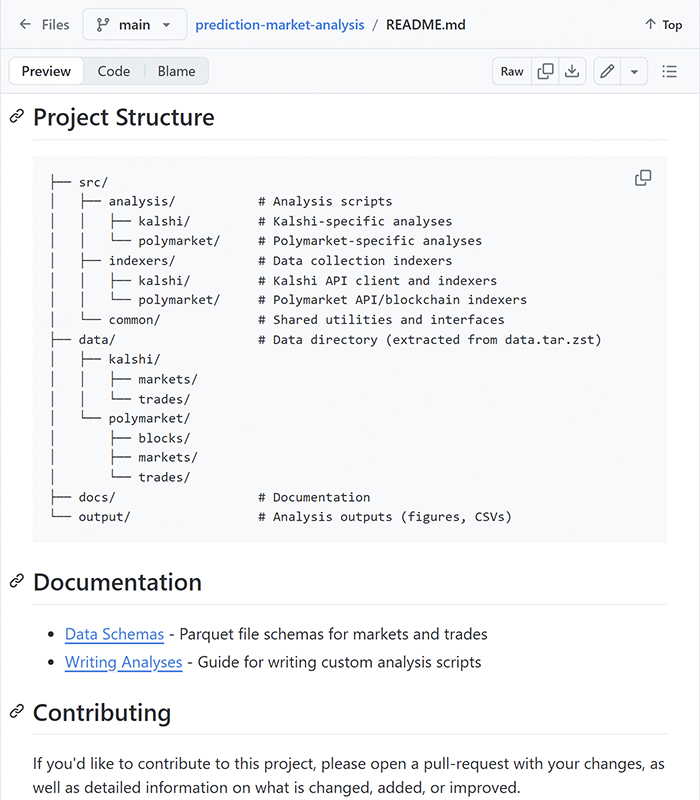

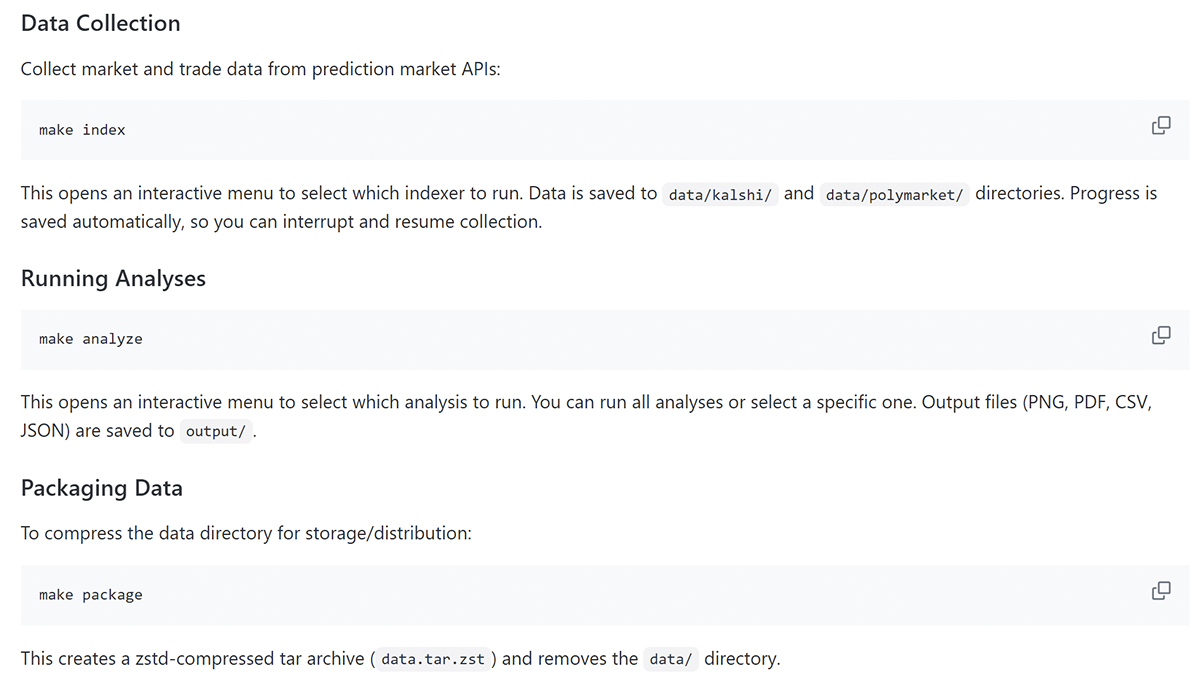

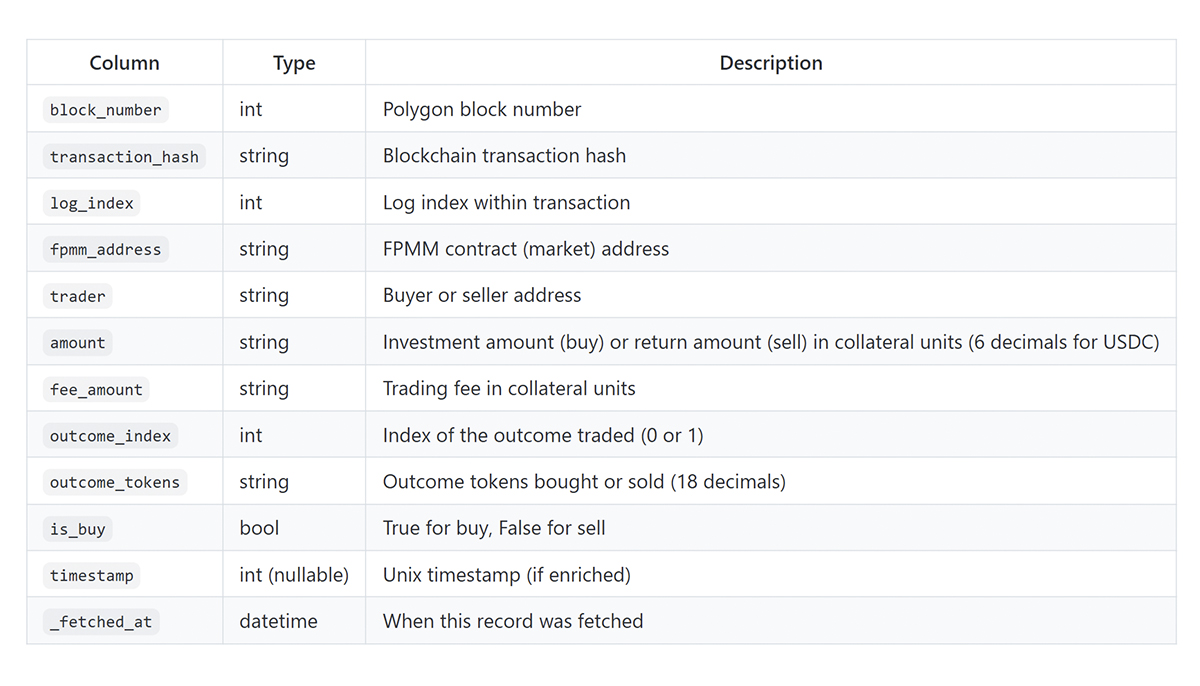

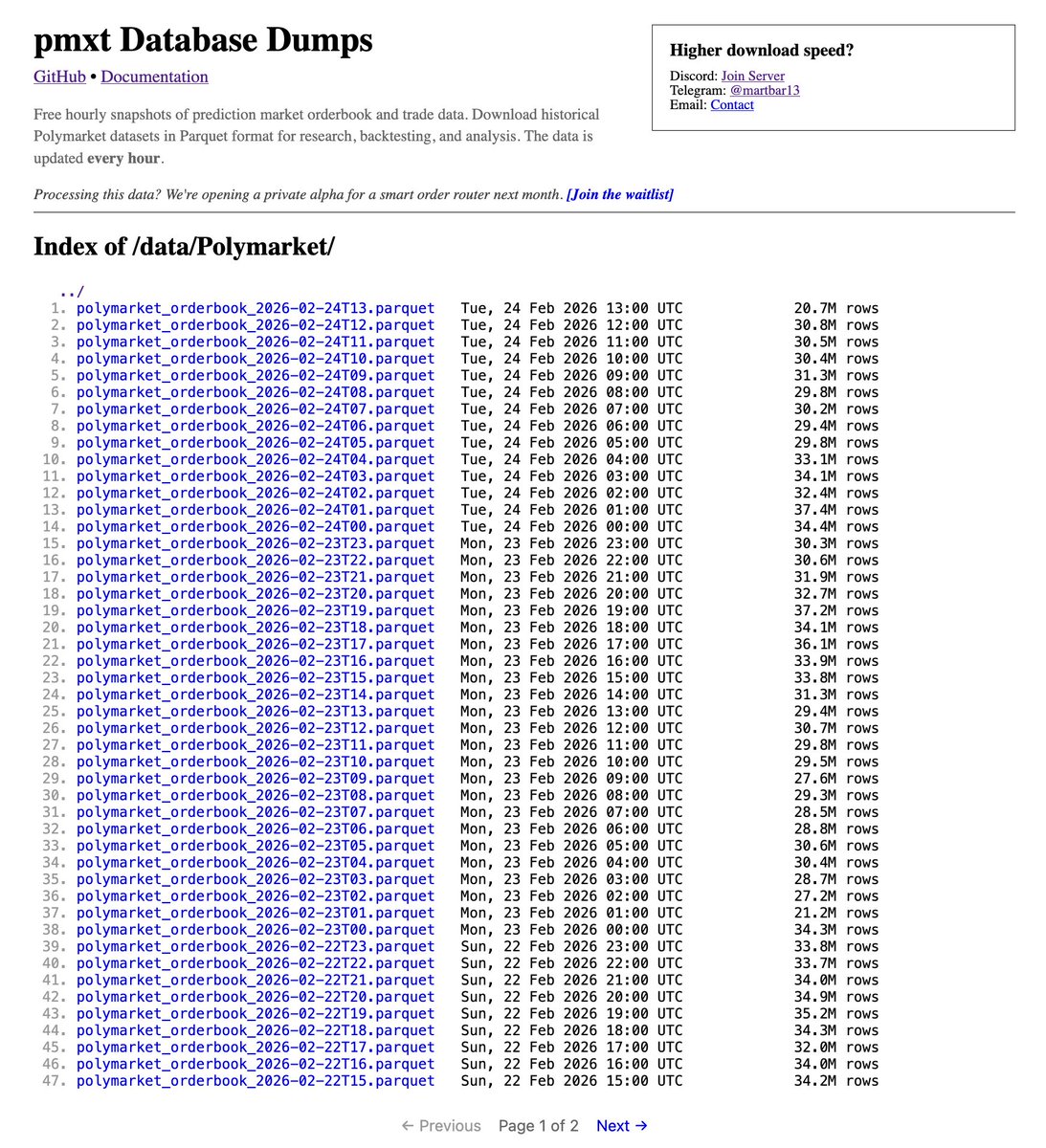

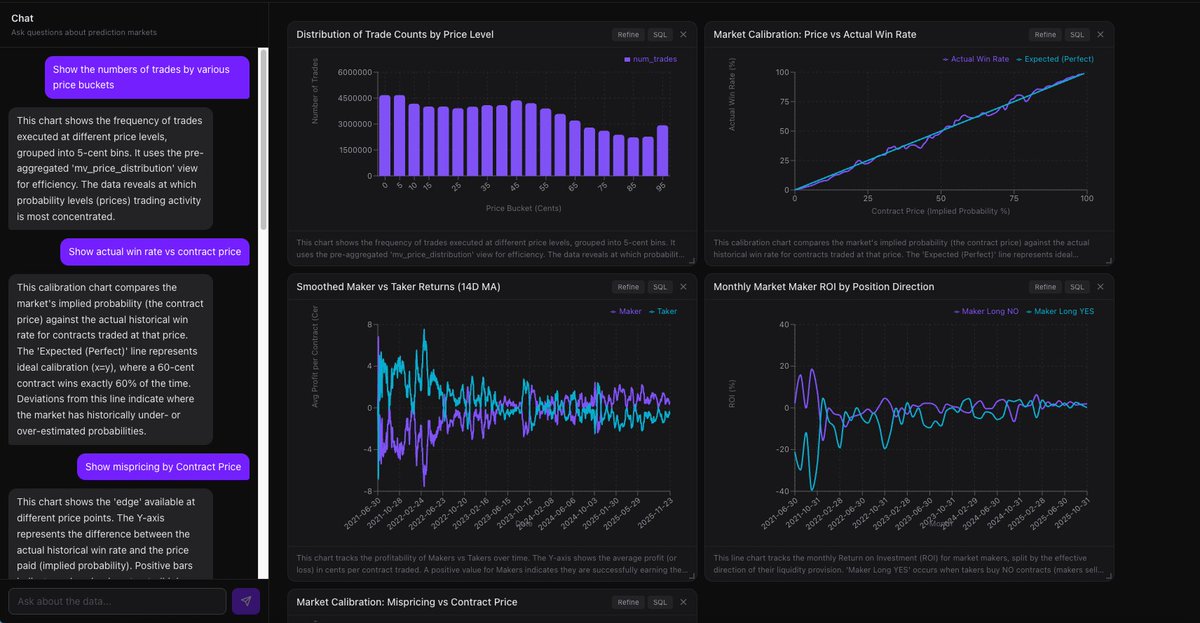

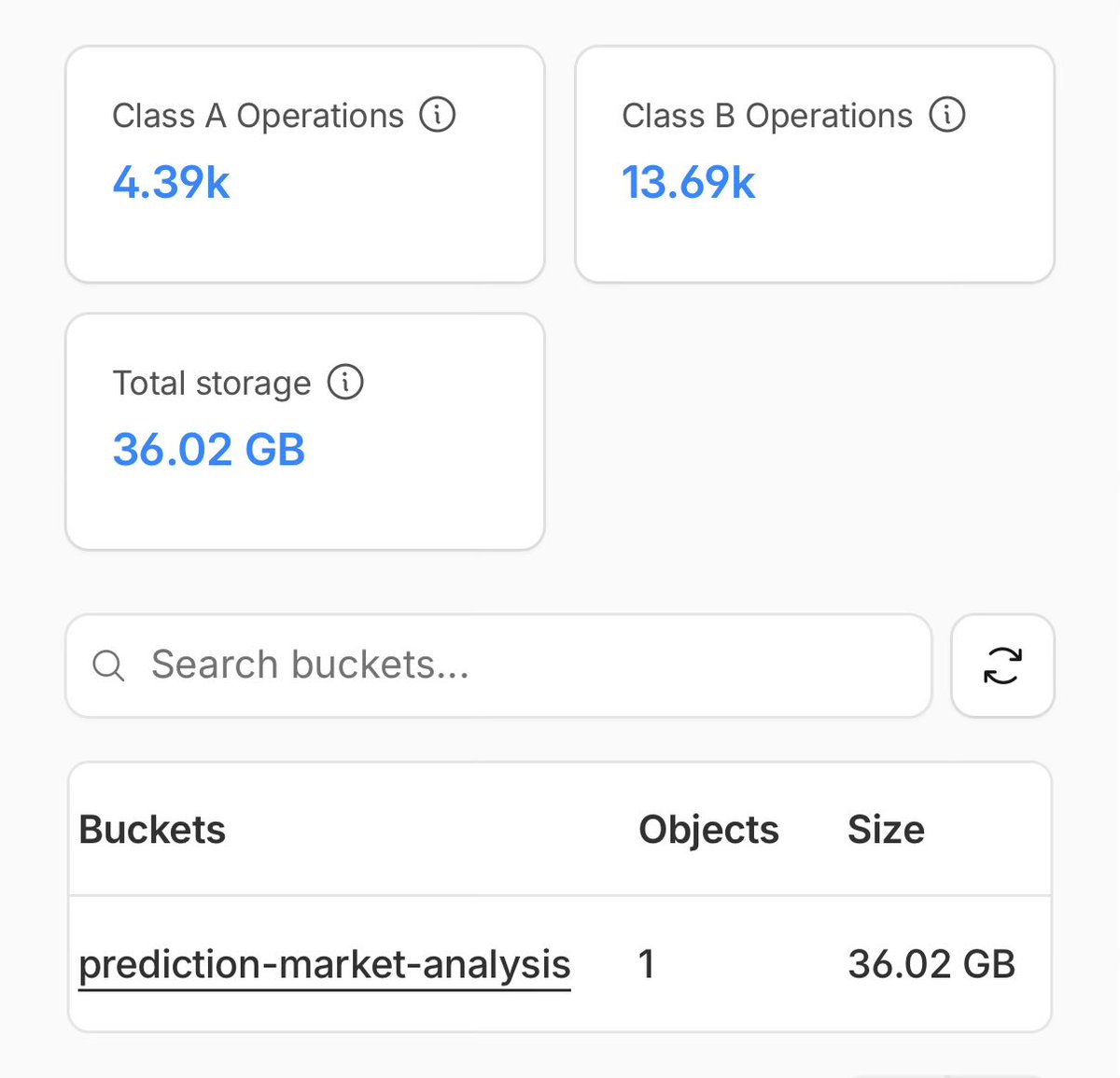
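The bytecode experiment above mentions trying a disassembler on simpler contracts. As a starting point, here is a minimal linear-sweep EVM disassembler, plus a heuristic that pulls 4-byte function selectors out of the usual Solidity dispatcher pattern (`PUSH4 <selector>` immediately followed by `EQ`). This is a sketch, not a replacement for a real tool: only a subset of opcodes is named (everything else prints as `UNKNOWN`), linear sweep will mis-decode data embedded in the code (such as the metadata Solidity appends at the end), and other compilers or optimizer settings can emit dispatchers that `find_selectors` misses.

```python
from typing import List, Tuple

# Subset of EVM opcodes; PUSHn, DUPn and SWAPn are handled by range below.
OPCODES = {
    0x00: "STOP", 0x01: "ADD", 0x02: "MUL", 0x03: "SUB", 0x04: "DIV",
    0x10: "LT", 0x11: "GT", 0x14: "EQ", 0x15: "ISZERO", 0x16: "AND", 0x1C: "SHR",
    0x34: "CALLVALUE", 0x35: "CALLDATALOAD", 0x36: "CALLDATASIZE",
    0x50: "POP", 0x51: "MLOAD", 0x52: "MSTORE", 0x54: "SLOAD", 0x55: "SSTORE",
    0x56: "JUMP", 0x57: "JUMPI", 0x5B: "JUMPDEST", 0x5F: "PUSH0",
    0xF3: "RETURN", 0xFD: "REVERT", 0xFE: "INVALID",
}


def disassemble(code: bytes) -> List[Tuple[int, str]]:
    """Linear sweep: returns (program counter, instruction text) pairs."""
    out = []
    pc = 0
    while pc < len(code):
        op = code[pc]
        if 0x60 <= op <= 0x7F:
            n = op - 0x5F  # PUSH1..PUSH32 carry 1..32 immediate bytes
            imm = code[pc + 1 : pc + 1 + n]  # may be short if code is truncated
            out.append((pc, f"PUSH{n} 0x{imm.hex()}"))
            pc += 1 + n
            continue
        if 0x80 <= op <= 0x8F:
            name = f"DUP{op - 0x7F}"
        elif 0x90 <= op <= 0x9F:
            name = f"SWAP{op - 0x8F}"
        else:
            name = OPCODES.get(op, f"UNKNOWN(0x{op:02x})")
        out.append((pc, name))
        pc += 1
    return out


def find_selectors(code: bytes) -> List[str]:
    """Heuristic: selectors compared against calldata in a PUSH4 ... EQ pattern."""
    ins = disassemble(code)
    return [a.split()[1] for (_, a), (_, b) in zip(ins, ins[1:])
            if a.startswith("PUSH4 ") and b == "EQ"]


if __name__ == "__main__":
    # DUP1 PUSH4 0xa9059cbb EQ PUSH2 0x0045 JUMPI: a typical dispatcher entry.
    # 0xa9059cbb is the selector of transfer(address,uint256).
    dispatcher = bytes.fromhex("8063a9059cbb1461004557")
    for pc, text in disassemble(dispatcher):
        print(f"{pc:04x}  {text}")
    print(find_selectors(dispatcher))
```

Recovered selectors can then be matched against public signature databases, which is roughly how an ABI gets reconstructed when no verified source exists.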

added polymarket data to the public dataset. 400m+ trades going back to 2020. 36gb compressed. MIT licensed, free to download via @Cloudflare R2.
