Benjamin Elizalde

50 posts

Applications are open for the doctoral program in AI! 🎓 100 fully funded PhD positions across 10 Finnish universities 🧭 Collaboration with industry. Professors @arnosolin and @lauraruotsa introduce the program in this video 👇 Apply here by April 2 ➡️ fcai.fi/doctoral-progr…

Announcing fully-funded PhD positions on our new "Bioacoustic AI" project: bioacousticai.eu Apply now for a #PhD studying animal sounds (#bioacoustics), #deeplearning, #acoustic signals, and #ecology! 2 open to apply now, 8 more coming soon. (Pls share)

New workshop announcement 📢📢 We are excited to announce our IEEE ICASSP 2024 workshop, Explainable AI for Speech and Audio! We have two paper tracks, with deadlines of January 20 and February 20. Details on how to submit: xai-sa-workshop.github.io/web/Call%20for…

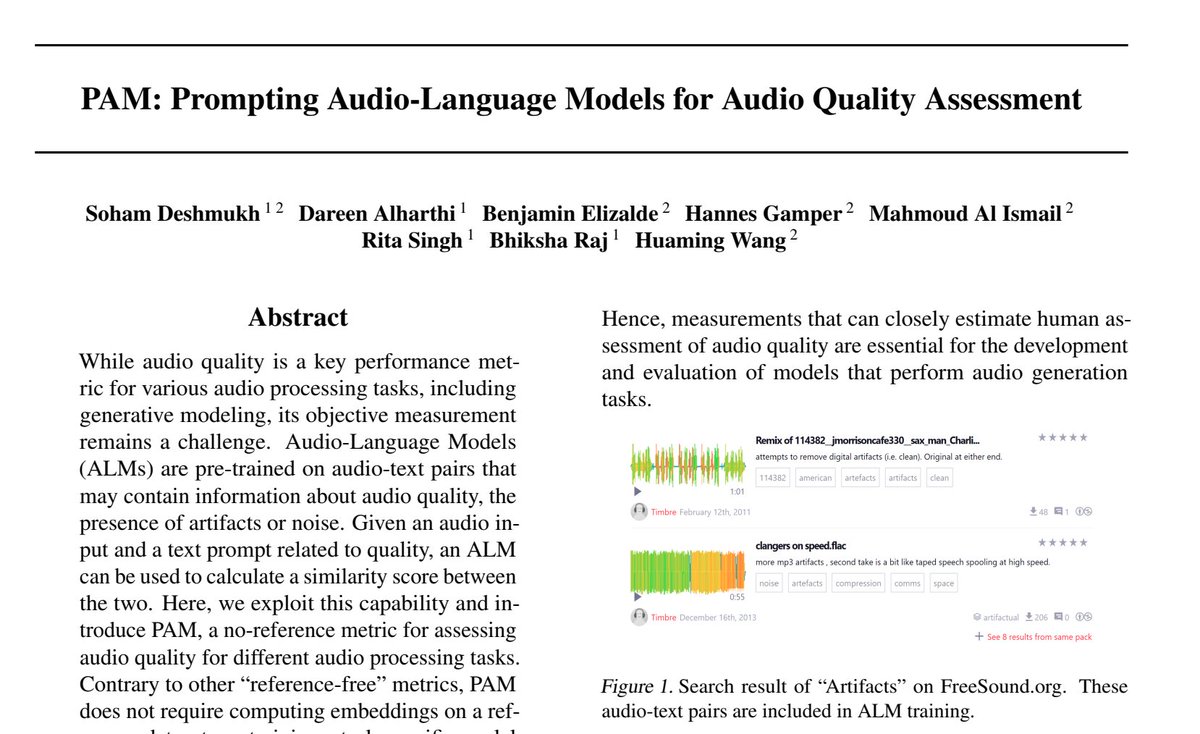

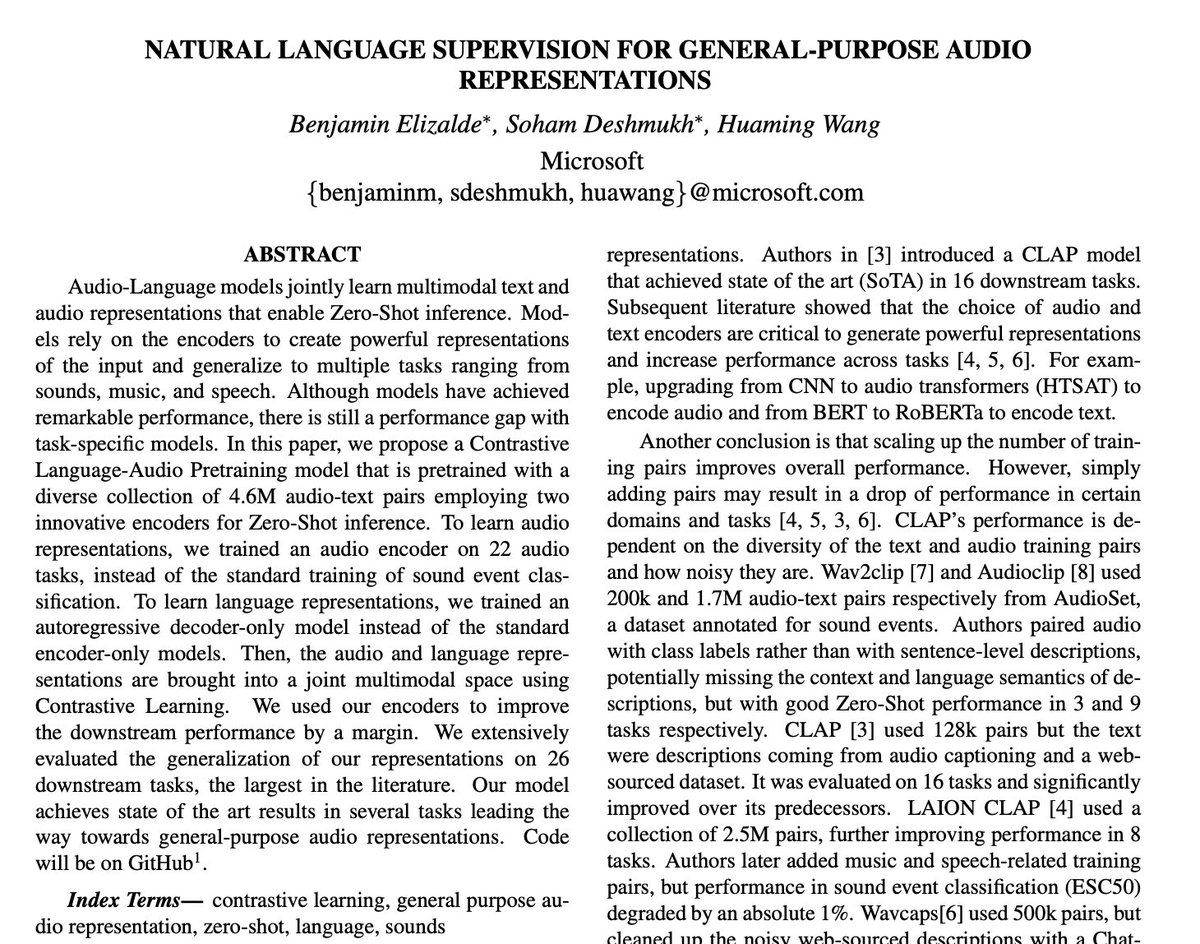

Pengi: An Audio Language Model for Audio Tasks. We introduce Pengi, a novel Audio Language Model that leverages Transfer Learning by framing all audio tasks as text-generation tasks. It takes an audio recording and text as input and generates free-form text as output. The input audio is represented as a sequence of continuous embeddings by an audio encoder. A text encoder does the same for the corresponding text input. Both sequences are combined as a prefix to prompt a pre-trained frozen language model. The unified architecture of Pengi enables both open-ended and close-ended tasks without any additional fine-tuning or task-specific extensions. When evaluated on 22 downstream tasks, our approach yields state-of-the-art performance in several of them. Paper page: huggingface.co/papers/2305.11…
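The data flow described in the post (audio embeddings plus text embeddings, concatenated into a prefix for a frozen language model) can be pictured with a toy sketch. This is not Pengi's code: the encoders, the embedding size, the prompt string, and the `frozen_language_model` stand-in are all hypothetical placeholders, chosen only to show how the two sequences are joined.

```python
EMBED_DIM = 8  # hypothetical embedding size, not taken from the paper


def toy_audio_encoder(samples, frame_size=4, dim=EMBED_DIM):
    """Stand-in audio encoder: one continuous embedding per frame of samples."""
    frames = [samples[i:i + frame_size] for i in range(0, len(samples), frame_size)]
    return [[sum(frame) / len(frame)] * dim for frame in frames]


def toy_text_encoder(text, dim=EMBED_DIM):
    """Stand-in text encoder: one continuous embedding per whitespace token."""
    return [[(len(token) % 7) / 7.0] * dim for token in text.split()]


def build_prefix(audio_embeddings, text_embeddings):
    """Combine both sequences into a single prefix (audio first, then text)."""
    return audio_embeddings + text_embeddings


def frozen_language_model(prefix):
    """Placeholder for a pre-trained LM whose weights are never updated.

    A real model would generate free-form text conditioned on the prefix;
    this stub only reports how many prefix embeddings it received.
    """
    return f"<generated text conditioned on {len(prefix)} prefix embeddings>"


audio = [0.0, 0.1, 0.2, 0.3, 0.4, 0.5, 0.6, 0.7]  # made-up waveform samples
text_prompt = "generate audio caption"  # hypothetical task prompt

prefix = build_prefix(toy_audio_encoder(audio), toy_text_encoder(text_prompt))
output = frozen_language_model(prefix)
print(output)
```

Because every task is expressed as "audio plus text in, text out", changing the task in this sketch means changing only `text_prompt`; the encoders and the frozen model stay the same.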
