berk atıl

@berkatilgs

PhD student at Penn State University. NLP Researcher

New @Scale_AI paper! We’re introducing Professional Reasoning Bench (PRBench), the largest open-source, rubric-based evaluation for real-world professional reasoning in Law and Finance. PRBench contains a total of 1,100 tasks and 19,000+ rubric criteria authored by 182 experts!

Life update: Completed my PhD. From never wanting to get a master's degree to here, it’s been a memorable journey. Time flies, 4 years went by quite fast.

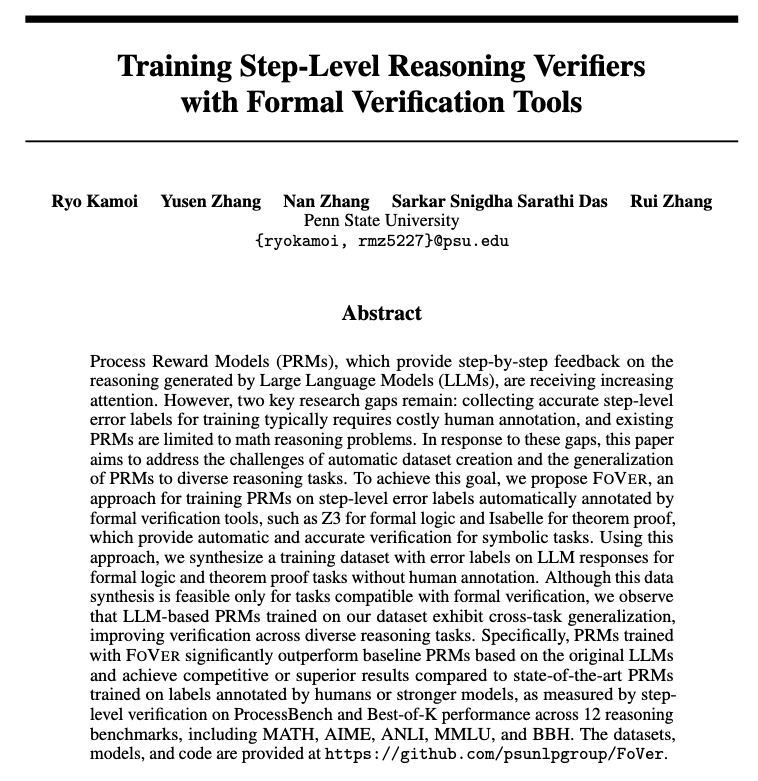

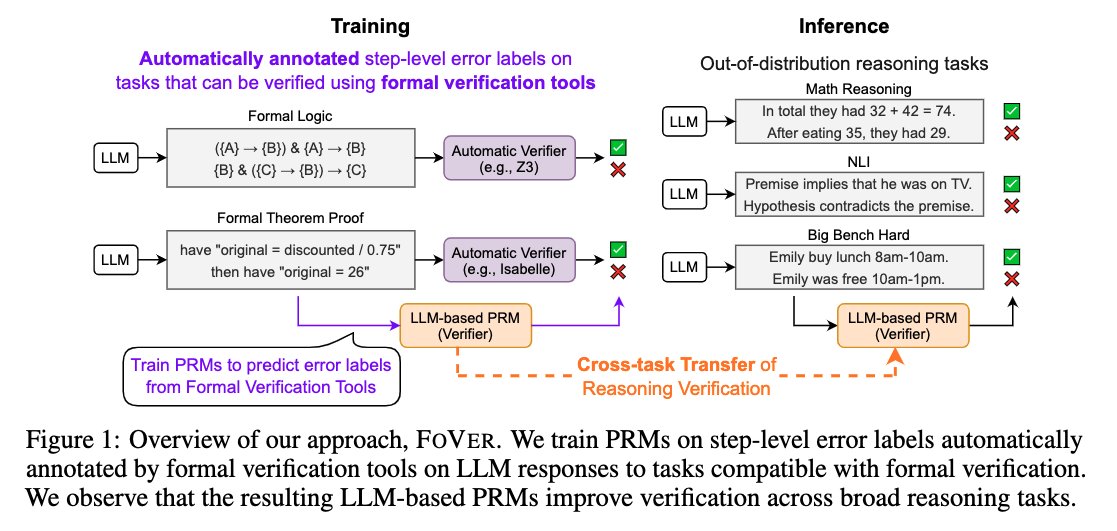

📢 New paper! FoVer enhances PRMs for step-level verification of LLM reasoning w/o human annotation 🚀 We synthesize training data using formal verification tools and improve LLMs at step-level verification of LLM responses on MATH, AIME, MMLU, BBH, etc. arxiv.org/abs/2505.15960

📢 Happy to introduce SiReRAG: our #ICLR2025 paper on RAG indexing! Facilitating comprehensive knowledge synthesis on multihop reasoning, SiReRAG models both similarity and relatedness signals of a corpus. Code: github.com/SalesforceAIRe… Paper: arxiv.org/abs/2412.06206 (1/N)🧵

Check out this recent work from @uncnlp on evaluating fairness in summarization. We propose two new metrics to quantify fairness. This was in collaboration with @ruizhang_nlp, and @HaoyuanLi9 will be presenting it at #NAACL25

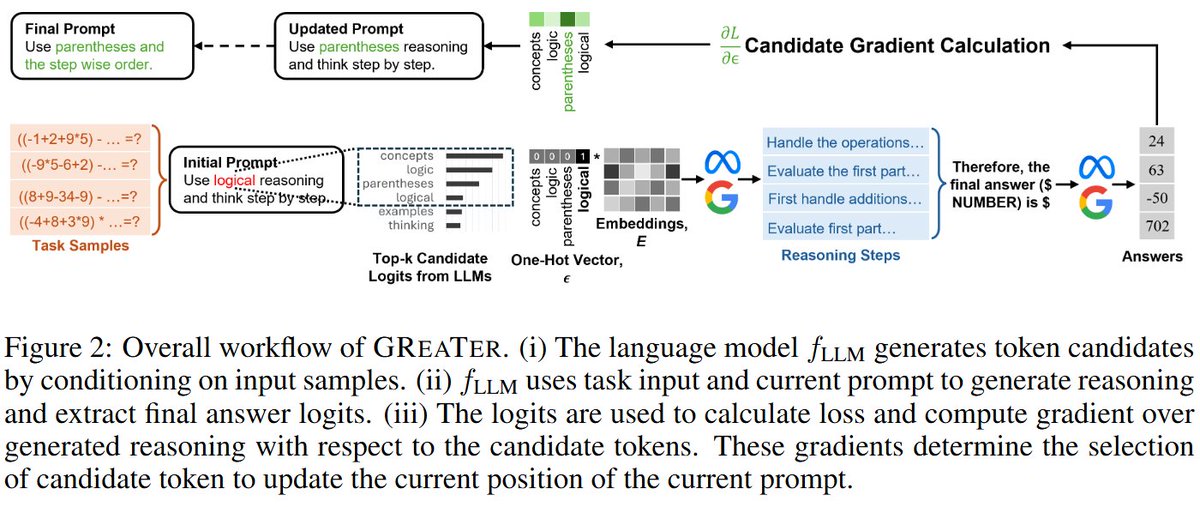

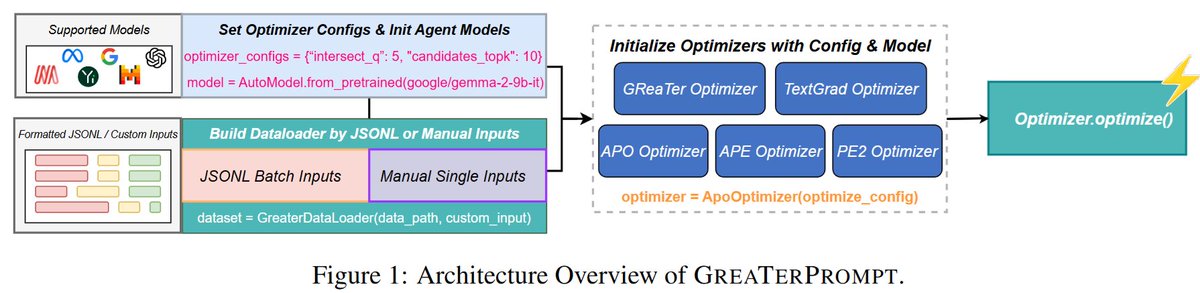

🚀 If you're looking for inference-time techniques to max out the reasoning ability of your local LLMs, check out our #ICLR2025 paper GReaTer for gradient-based prompt optimization! We generate fluent & strategic prompts outperforming APO/APE/PE2/TextGrad on multiple reasoning benchmarks! 📊🔥
🔑 Three key takeaways
1️⃣ Gradients can guide prompt refinement better than text-based feedback, unlocking stronger reasoning in small, local LLMs without relying on expensive proprietary models.
2️⃣ Incorporating CoT reasoning, not just final answers, leads to smarter, more context-aware prompts.
3️⃣ Using perplexity to constrain the search space yields fluent and readable prompts.
We make it extremely easy to use GReaTer as a Python library with a GUI:
📝 Paper: arxiv.org/abs/2412.09722
🔗 Python Library: github.com/psunlpgroup/Gr…

🚨 New #ICLR2025 Paper & Library Alert! Tired of hand-crafting prompts or relying on massive LLMs for optimization? Meet GReaTer, a gradient-based method that leverages gradients over reasoning chains to optimize prompts and help small models reason better. Even better? Check out GReaTerPrompt, a fully open-source library that wraps GReaTer and other prompt optimization techniques into a unified API, plus an easy-to-use Web UI for non-experts.
📝 GReaTer (ICLR’25): arxiv.org/abs/2412.09722 @RyoKamoi @bo_pang0 @YusenZhangNLP @CaimingXiong @ruizhang_nlp
🛠️ GReaTerPrompt: arxiv.org/abs/2504.03975 Wenliang Zheng, @YusenZhangNLP, @ruizhang_nlp
📦 PyPI: pypi.org/project/greate…
🔗 GitHub: github.com/psunlpgroup/Gr…, github.com/psunlpgroup/Gr…
(1/N)

We are organizing MASCSLL this year and have an amazing speaker list. Submit your work before March 24.