Berk Ustun

764 posts

@berkustun

Assistant Prof @UCSD. I work on safety, interpretability, and personalization in ML. Previously @GoogleAI @Harvard @MIT @UCBerkeley🇨🇭🇹🇷

The GDM mechanistic interpretability team has pivoted to a new approach: pragmatic interpretability. Our post details how we now do research, why now is the time to pivot, why we expect this approach to have more impact, and why we think other interp researchers should follow suit.

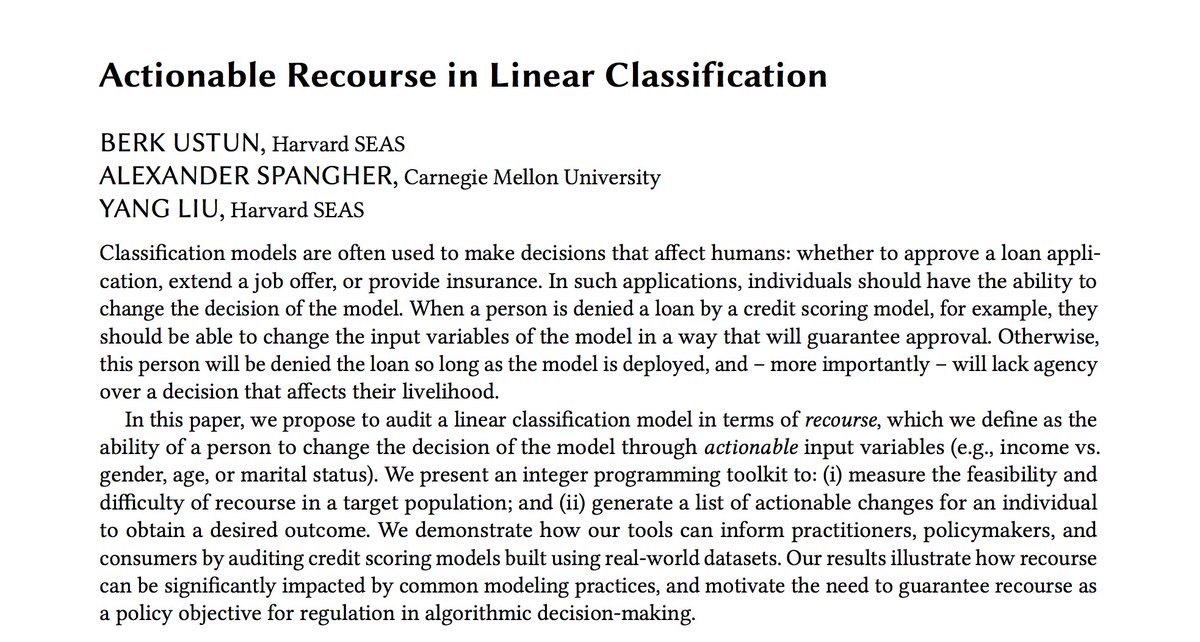

Right-to-explanation laws assume explanations help people detect algorithmic discrimination. But is there any evidence for that? In our latest work w/ David Danks @berkustun, we show explanations fail to help people, even under optimal conditions. PDF: shorturl.at/yaRua