吳小比

@billywu75

Brilliant but not glamorous; ups and downs are impermanent. I don't invest. Just a gambler in the U.S. stock casino.

🚨🚨 $RKLB Rocket Lab USA - FCC Docket 0430-EX-ST-2026 Rocket Lab has filed an experimental Special Temporary Authority application with the FCC to conduct a rocket launch and pre-launch testing. It is requesting confidential treatment for certain information in the application, specifically Exhibit 1 (Narrative) and Attachment 1 (NTIA Space record data form), citing commercial sensitivity, competitive disadvantage, and national security concerns. The information includes operational parameters, trajectory details, and mission events. Rocket Lab argues that disclosing it would harm its competitive position and could expose sensitive data to foreign governments. It invokes FOIA Exemption 4 and Section 4(j) of the Communications Act (47 U.S.C. § 154(j)) to support the request. 🔗 apps.fcc.gov//els/GetAtt.ht….

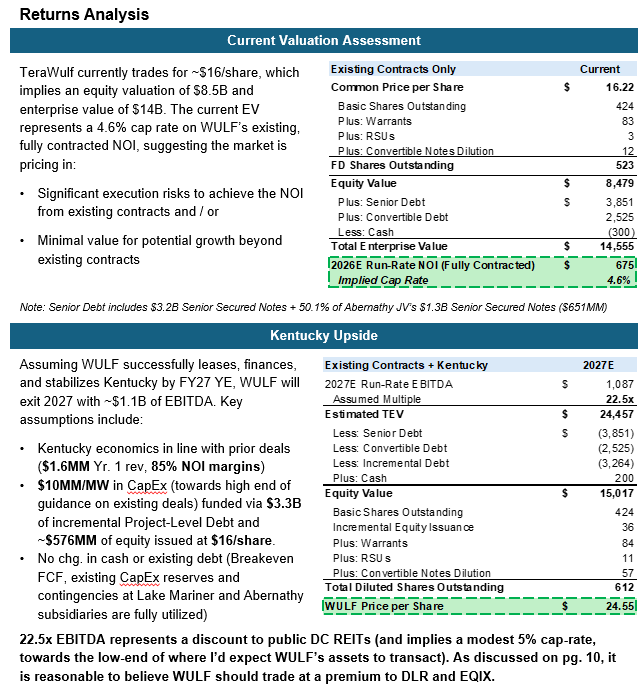

$CIFR has $9.3 billion in contracted revenue from AWS and Google. Revenue starts in H2 2026. The company goes from pre-revenue to $670 million in months. But the market is still pricing it like a Bitcoin miner.

@billywu75 Right when everyone feels things are at their most stable, the capital markets have, for now, run out of good news.

Rocket Lab Emerging as Potential Bus Provider for 2,800-Satellite Equatys Constellation: In the wake of Mobile World Congress 2026, industry speculation has intensified about a potential partnership between Rocket Lab (Nasdaq: RKLB) and Equatys, the newly detailed Direct-to-Device (D2D) joint venture between Viasat and Space42. The venture aims to deploy a LEO constellation of up to 2,800 satellites to provide seamless 3GPP-aligned connectivity to standard smartphones and IoT devices worldwide. #MissionsConstellations satnews.com/2026/03/25/roc…

$MU $SNDK $EWY Every few months a paper drops claiming AI needs less memory. This is that paper. There is no such thing as a free lunch. Compressing the KV cache in controlled benchmarks is not the same as scaling it universally to every user, task, and platform in the world. Accuracy collapse at scale is real. As you'd expect from a research paper, the downsides never make the headlines. Remember the Titans architecture? Google said it would make memory usage more efficient. It didn't move the needle; if anything, it drove more HBM demand. Jevons Paradox. What did DeepSeek do? What's memory demand today? You be the judge. HBM is still the primary fuel for AI training. That does not change. The paper targets long-context inference, which relies on a full memory stack: HBM for speed, and server DRAM and NAND for capacity and storage. When inference runs, memory spills from HBM into conventional DRAM and NAND while HBM stays fully utilized. At best, this research reduces the spill in a controlled workload, IMO.
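Below is a back-of-envelope sketch of the "spill" argument. It assumes the common KV-cache sizing rule: 2 (keys and values) × layers × KV heads × head dimension × tokens × bytes per value. Every model and hardware number in it is hypothetical and chosen only for illustration: 80 layers, 8 KV heads, head dimension 128, a 1M-token context, an 80 GiB HBM budget, and a 16-bit vs 4-bit comparison. None of these are TurboQuant's actual configuration or results. The sketch also ignores batching, paging overhead, and model weights.

```python
# Back-of-envelope KV-cache sizing. All numbers below are hypothetical.

GIB = 1024 ** 3


def kv_cache_bytes(layers, kv_heads, head_dim, tokens, bits_per_value):
    """Bytes needed to hold keys and values for `tokens` tokens of one request.

    The leading factor of 2 counts one key tensor and one value tensor per layer.
    """
    values = 2 * layers * kv_heads * head_dim * tokens
    return values * bits_per_value // 8


def spill_bytes(total_bytes, hbm_budget_bytes):
    """Part of the cache that does not fit in HBM and would spill to DRAM/NAND."""
    return max(0, total_bytes - hbm_budget_bytes)


if __name__ == "__main__":
    # Hypothetical model shape and context length, for illustration only.
    cfg = dict(layers=80, kv_heads=8, head_dim=128, tokens=1_000_000)
    hbm_budget = 80 * GIB  # hypothetical HBM left over for the KV cache

    for bits in (16, 4):  # uncompressed vs. an assumed 4-bit quantized cache
        total = kv_cache_bytes(**cfg, bits_per_value=bits)
        spill = spill_bytes(total, hbm_budget)
        print(f"{bits:>2}-bit: {total / GIB:7.1f} GiB total, {spill / GIB:7.1f} GiB spilled")
```

With these assumed numbers, quantizing the cache shrinks it about fourfold and can pull a single request back under the HBM budget. Whether that lowers total memory demand, or simply lets more users and longer contexts run on the same hardware, is the Jevons question the post raises.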

The fact that memory stocks are crashing because of Google's TurboQuant is a pretty good indicator of how many clueless people this market is filled with. It's like saying Aramco should crash because Toyota came out with a next-generation hybrid engine.