binal

1.4K posts

@binalkp91

ai @ sequoia capital global equities

Joined June 2013

1.8K Following, 506 Followers

binal retweeted

Punchbowl News@PunchbowlNews

Senate Commerce Committee Chair Ted Cruz on Tuesday acknowledged the desire “to protect against catastrophic risk” from the most advanced artificial intelligence systems, while warning against regulatory overreach. @BenBrodyDC has the story: punchbowl.news/article/tech/c…

binal retweeted

Security is an economic decision.

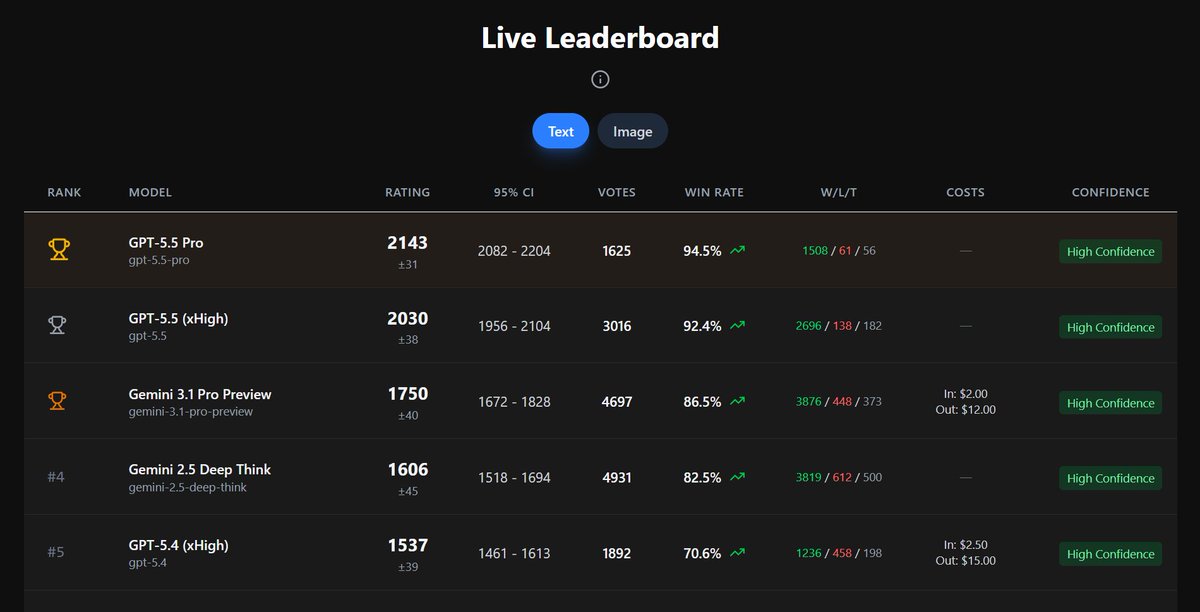

For a fixed cost, within @XBOW, which model has the best odds of crafting an exploit?

GPT-5.5 > Mythos > Opus 4.6 on real OSS web vulns.

Curves below.

So there's still nearly 83% of the market that has nothing to do with semiconductors.

Kevin Gordon@KevRGordon

The Semiconductor industry is now a record 17.4% of the S&P 500's market cap

binal retweeted

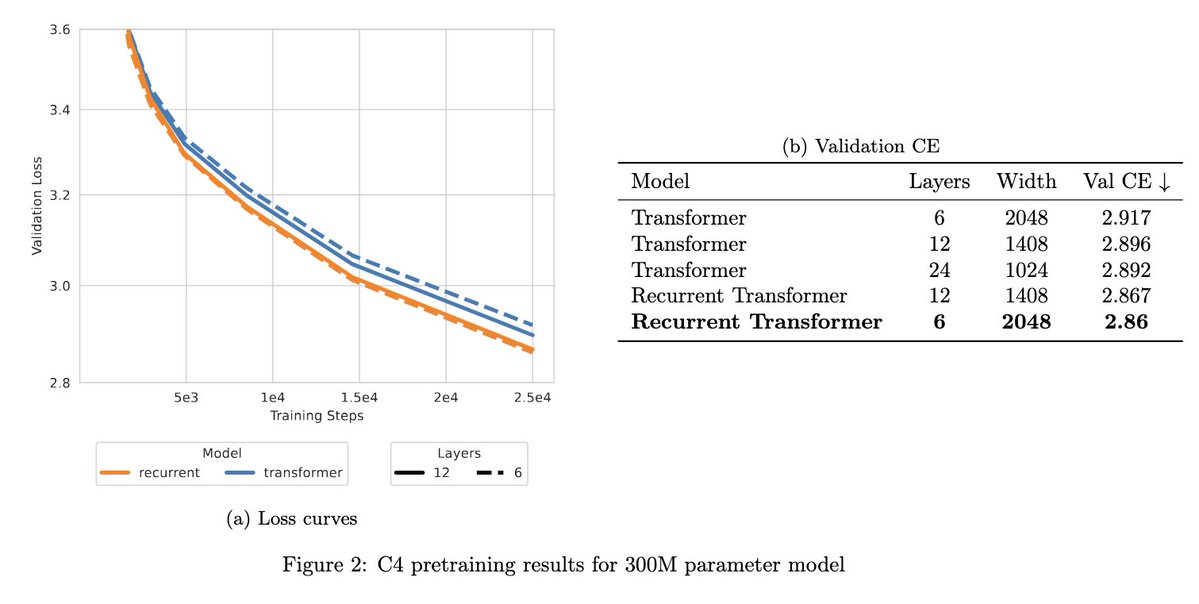

[2/5] In arxiv.org/abs/2604.17121, @ShoaibASiddiqui, @savvyRL, and I explain why feedforward transformer architectures are fundamentally ill suited to complex state-tracking tasks.

@celestepoasts maybe I’m numb to magnitude at this point but it doesn’t seem like that much versus all the GW deals being signed?

binal retweeted

A long, long time ago, I was about to receive an offer letter and was asked what would I do if it came with a $10m guarantee. My answer:

“I would hire an army of Cimmerian mercenaries, conquer your fund, see the employees driven before me, and hear the lamentations of the women.”

I didn’t get that job.

@Miles_Brundage They said it's an "early checkpoint" in the post which leads me to believe just 5.5 though hard to say to your point.

binal retweeted

@tunahorse21 o1 pro when they let you paste in as many tokens as you wanted, i always wonder how much i cost OpenAI those first few months

asked it to generate an image of itself answering how many r's are in strawberry

ChatGPT@ChatGPTapp

at long last

@Miles_Brundage i think it's reasonable that codex is both growing wildly and openai has enough compute headroom (for now?) to keep resetting rate limits. sounds like they have pathways to keep scaling compute too

x.com/thsottiaux/sta…

Tibo@thsottiaux

Looking at the traffic dashboard for Codex just now, it would be scary if we didn't have a lot more compute coming online in the coming weeks. All according to plan fortunately.

Ah that would make sense (though I'm still not sure what to make of something being on fire given the credit reset thing) x.com/binalkp91/stat…

binal@binalkp91

@Miles_Brundage I took “they” to be Anthropic

Isn't this obviously false since they keep resetting the limits like every week? x.com/jimcramer/stat…

Jim Cramer@jimcramer

The bottom line: they are short compute and Codex is on fire...

binal retweeted

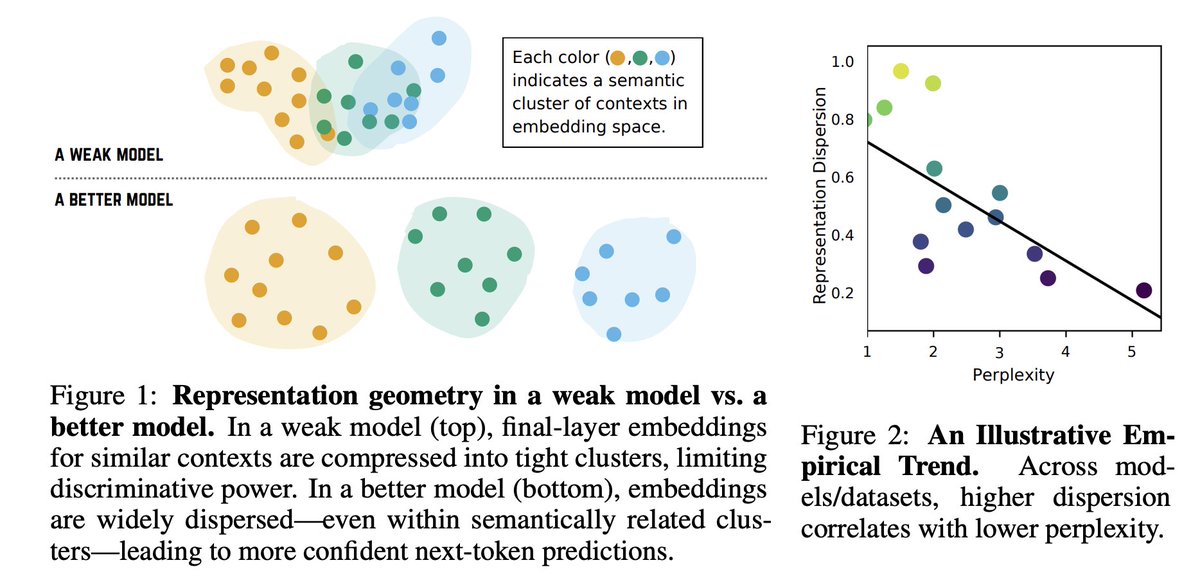

What does a good language model look like internally in geometry?

We find a simple but surprising signal:

👉 the more spread out its hidden representations are, the better it predicts

(even for semantically similar contexts)

ICLR 2026 arxiv.org/pdf/2506.24106

Presenting now👇
