Marvel Studios is reportedly considering moving 'AVENGERS: DOOMSDAY' forward to December 11 to avoid clashing with 'DUNE: PART THREE' and to secure some IMAX screens. (Via: m.youtube.com/watch?v=pXsNZ5…)

bucca

@bngnlul

Flamengo, law, and machine learning. calm as buddha

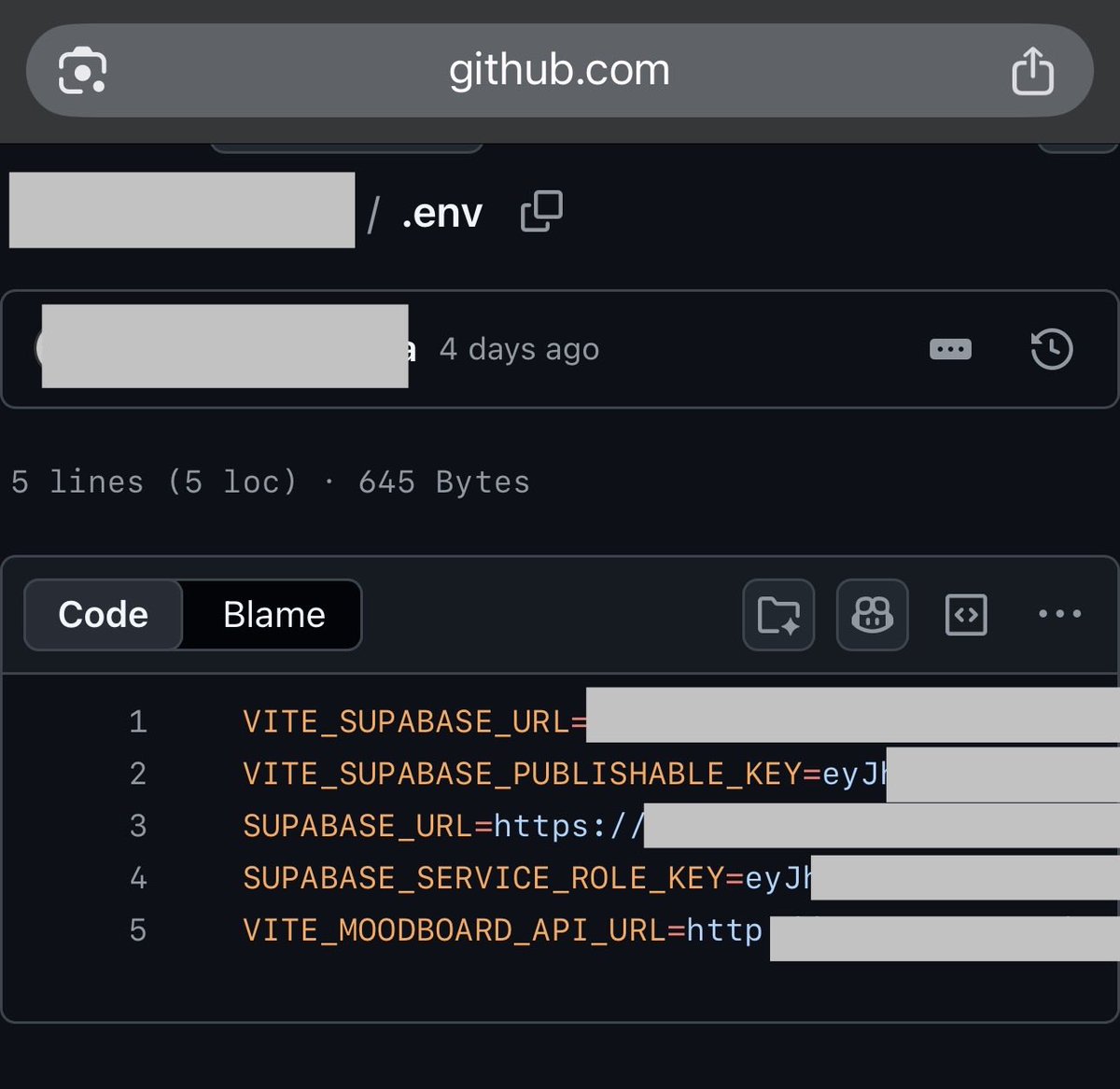

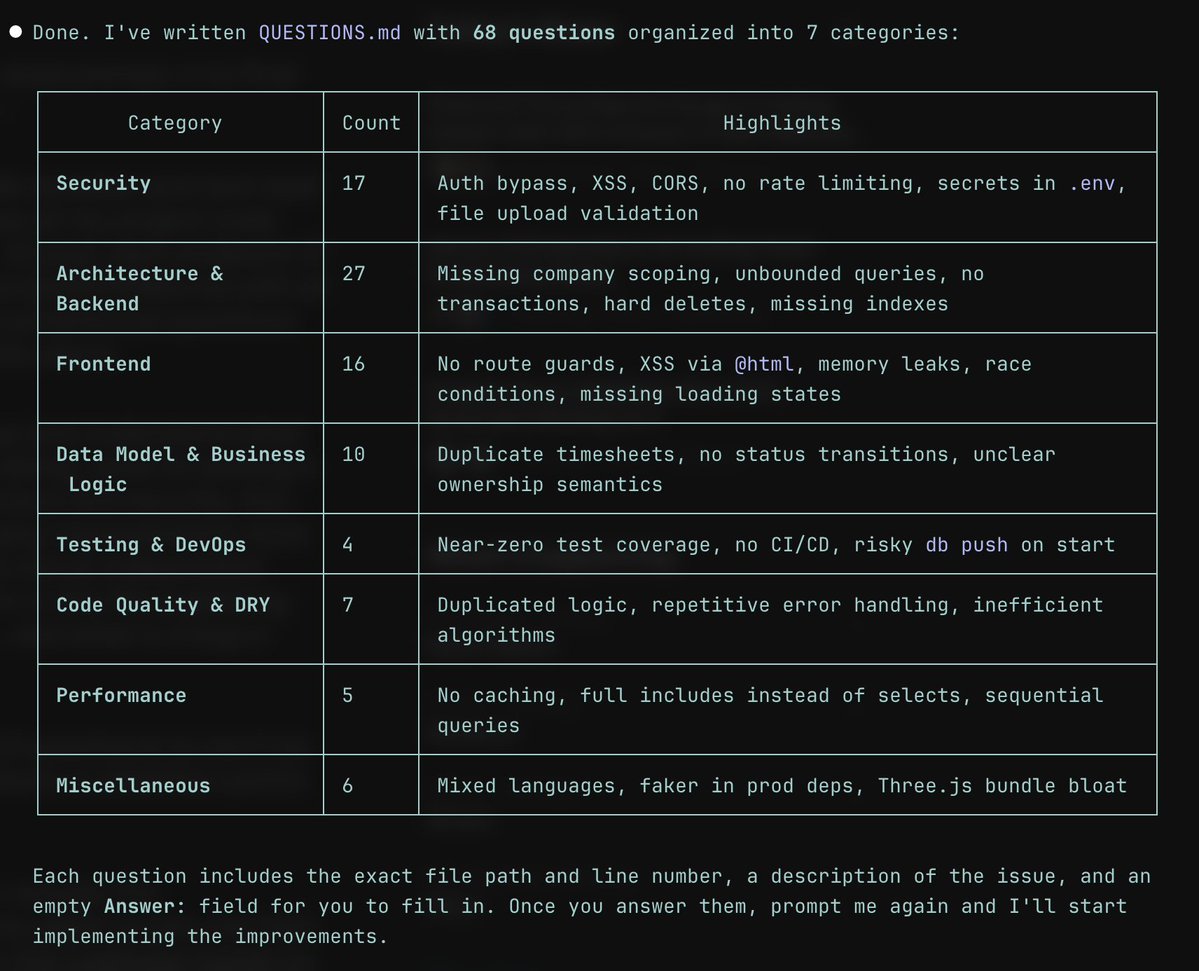

One cool thing is that the site is hosted on GitHub Pages, so I was able to get the whole source code. I found the route to the profile with tracert:
================
C:\Users\rsha>tracert cinema.abrahub.com
Rastreando a rota para abraham1152.github.io [185.199.111.153]
================
Another thing: he created a .gitignore but didn't put .env in it, so there's a .env on GitHub with all the keys lol
================
10:42PM INF 206 commits scanned.
10:42PM INF scan completed in 8.79s
10:42PM WRN leaks found: 7
================
By the way, the code is honestly ugly and badly written. I was going to do a better analysis of the site, but it was leaked in 2 minutes, so it's not even worth it.
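
For anyone wanting to avoid the same mistake, here is a minimal sketch of a pre-commit style check that a `.gitignore` actually covers `.env`. The function name `env_is_ignored` is hypothetical, and the matching is a deliberate simplification built on Python's `fnmatch`; it is not git's real ignore algorithm (no directory-scoped rules, no `**` handling).

```python
from fnmatch import fnmatch


def env_is_ignored(gitignore_text: str, filename: str = ".env") -> bool:
    """Rough check: does the last matching .gitignore pattern ignore `filename`?

    Simplified on purpose: only top-level filename patterns are considered.
    """
    ignored = False
    for raw in gitignore_text.splitlines():
        line = raw.strip()
        if not line or line.startswith("#"):
            continue  # skip blank lines and comments
        negated = line.startswith("!")  # "!pattern" re-includes a file
        pattern = line.lstrip("!").strip("/")
        if fnmatch(filename, pattern):
            ignored = not negated  # later rules override earlier ones
    return ignored


if __name__ == "__main__":
    sample = "node_modules/\ndist\n"  # hypothetical .gitignore without .env
    print(env_is_ignored(sample))
```

In a real repository, `git check-ignore -v .env` gives the authoritative answer. Note also that adding `.env` to `.gitignore` afterwards does not remove it from earlier commits, so any keys already pushed should be treated as compromised and rotated.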

Just look at the difference a year makes