Bo An

@bo__an

PhD@Yale; STS; history of computing, economics, AI, media & China; also make academic apps for fun.

NO WAY ROONEY RECREATED RONALDO’S BICYCLE KICK AGAINST JUVENTUS 🤯

The AI Scientist: Towards Fully Automated AI Research, Now Published in Nature
Nature: nature.com/articles/s4158…
Blog: sakana.ai/ai-scientist-n…
When we first introduced The AI Scientist, we shared an ambitious vision of an agent powered by foundation models capable of executing the entire machine learning research lifecycle. From inventing ideas and writing code to executing experiments and drafting the manuscript, the system demonstrated that end-to-end automation of the scientific process is possible.
Soon after, we shared a historic update: the improved AI Scientist-v2 produced the first fully AI-generated paper to pass a rigorous human peer-review process.
Today, we are happy to announce that “The AI Scientist: Towards Fully Automated AI Research,” our paper describing all of this work, along with fresh new insights, has been published in @Nature!
This Nature publication consolidates these milestones and details the underlying foundation model orchestration. It also introduces our Automated Reviewer, which matches human review judgments and actually exceeds standard inter-human agreement.
Crucially, by using this reviewer to grade papers generated by different foundation models, we discovered a clear scaling law of science. As the underlying foundation models improve, the quality of the generated scientific papers increases correspondingly. This implies that as compute costs decrease and model capabilities continue to exponentially increase, future versions of The AI Scientist will be substantially more capable.
Building upon our previous open-source releases (github.com/SakanaAI/AI-Sc…), this open-access Nature publication comprehensively details our system's architecture, outlines several new scaling results, and discusses the promise and challenges of AI-generated science.
This substantial milestone is the result of a close and fruitful collaboration between researchers at Sakana AI, the University of British Columbia (UBC) and the Vector Institute, and the University of Oxford. Congrats to the team! @_chris_lu_ @cong_ml @RobertTLange @_yutaroyamada @shengranhu @j_foerst @hardmaru @jeffclune

LlamaParse Agentic Plus mode now delivers precise visual grounding with bounding boxes for the most challenging document elements. Our latest update brings major improvements to how we handle complex visual content:
📐 Complex LaTeX formulas - accurately parse mathematical expressions with precise positioning
✍️ Handwriting recognition - extract handwritten text with location coordinates
📊 Complex layouts - navigate multi-column documents and intricate formatting
📈 Infographics and charts - identify and extract data visualizations with spatial context
This means you can now build applications that not only extract text from documents but also understand exactly where that content appears on the page - perfect for creating more intelligent document analysis workflows.
Try LlamaParse Agentic Plus mode and see how visual grounding transforms your document parsing capabilities: cloud.llamaindex.ai/?utm_source=so…

Many things to dislike here, but “Results weak? AI pivots to an entirely new hypothesis.” is one of the more worrisome results of AI research: slop or bot, it will be p-hacked to meaninglessness.

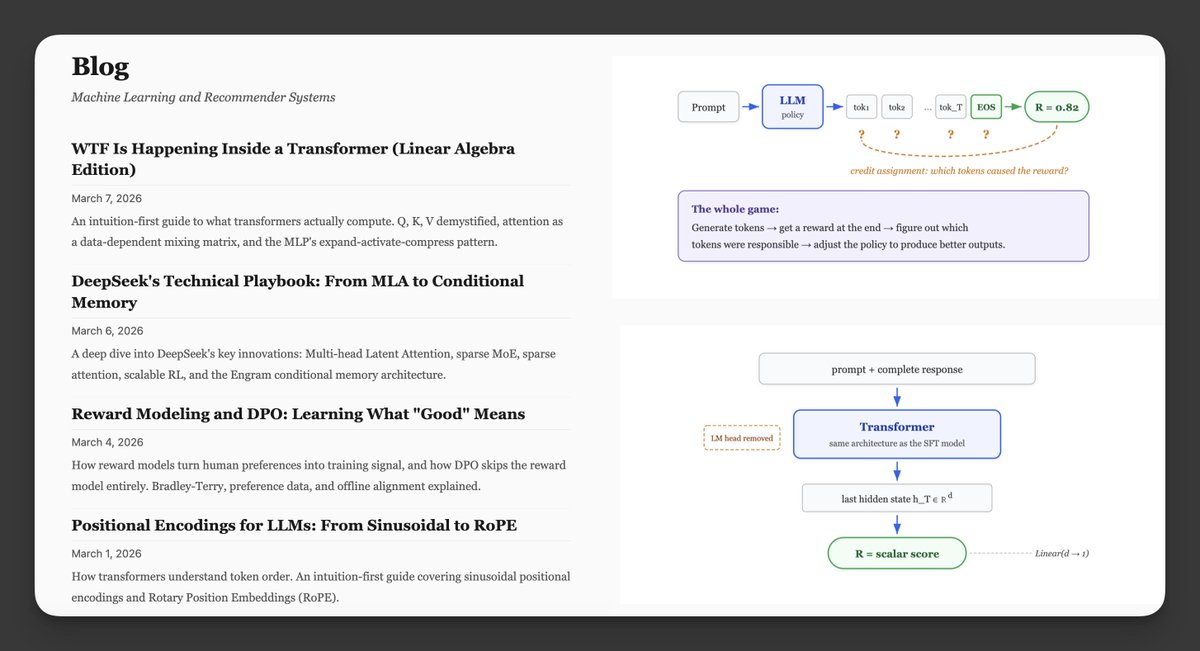

@neural_avb Besides 9-5, sth like this mesuvash.github.io/blog/2026/rl_f…

me stepping down. bye my beloved qwen.

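Meituan is such a big company, yet it copied my code for its own AI browser and won't even sponsor me 😂 A company with a market cap in the hundreds of billions is freeloading off me, shame on you. Left: Meituan's AI browser; right: my product. Under my GPL license, any product that uses my code must be open-sourced. So, Meituan, has this new AI product of yours been open-sourced? Where is your legal awareness? More screenshots in the comments.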

Distillation can be legitimate: AI labs use it to create smaller, cheaper models for their customers. But foreign labs that illicitly distill American models can remove safeguards, feeding model capabilities into their own military, intelligence, and surveillance systems.

We’ve identified industrial-scale distillation attacks on our models by DeepSeek, Moonshot AI, and MiniMax. These labs created over 24,000 fraudulent accounts and generated over 16 million exchanges with Claude, extracting its capabilities to train and improve their own models.