Pinned Tweet

Bowen Baker

54 posts

@bobabowen

Research Scientist at @openai since 2017. Robotics, Multi-Agent Reinforcement Learning, LM Reasoning, and now Alignment.

Joined January 2017

114 Following, 3.7K Followers

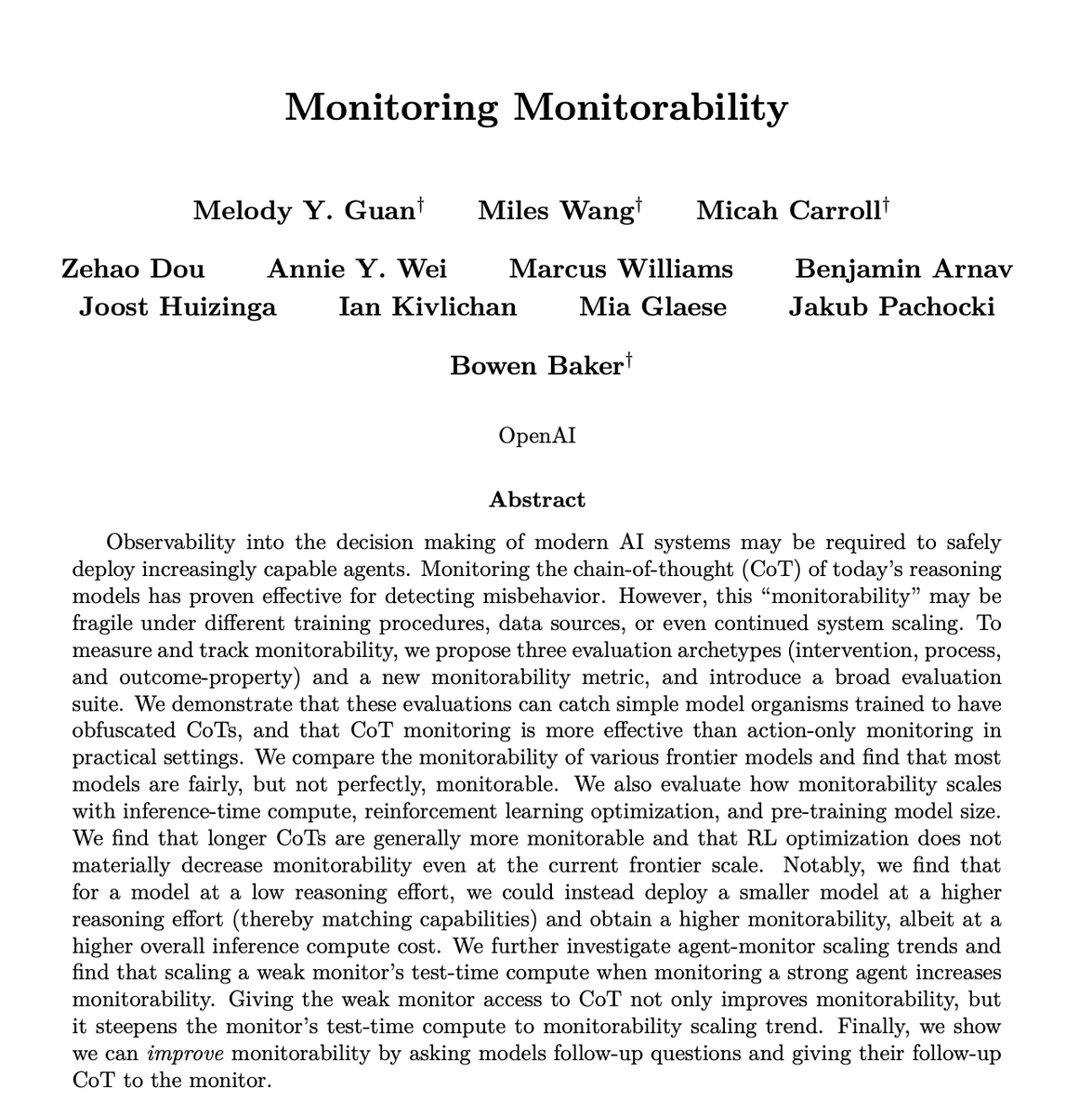

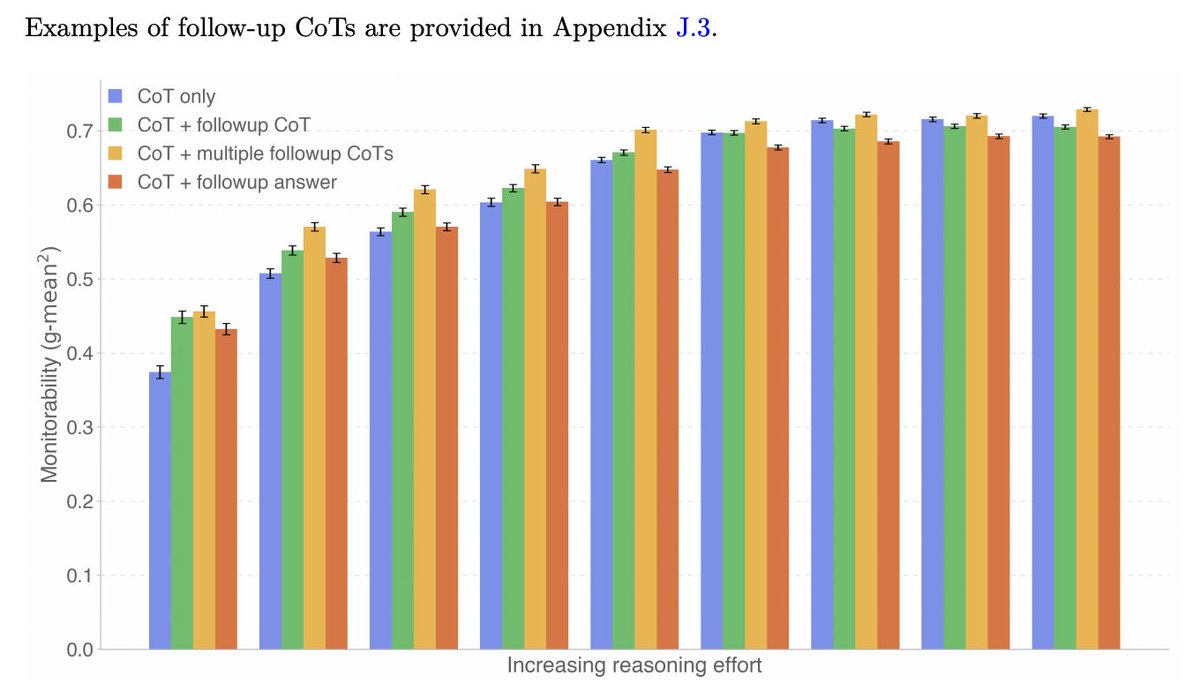

Monitoring an agent's CoT can be incredibly useful for detecting misbehavior. To preserve that usefulness, we must be able to quantify monitorability. This motivated our work in creating these evaluations and using them to conduct scaling studies.

openai.com/index/evaluati…

Today we open sourced many of OpenAI's monitorability evaluations. We hope that the research community and other model developers can build upon them and use them to evaluate the monitorability of their own models.

alignment.openai.com/monitorability…

Because there are other possible failure modes, it will still be important to run monitorability evaluations as well (openai.com/index/evaluati…). The more evidence we can gather that our models are monitorable, the better.

Models becoming aware that they are being monitored and actively controlling their CoT to evade monitoring is one potential failure mode of CoT monitorability. Regularly measuring our models' ability to control their CoT is therefore important if we want to preserve CoT monitorability.

Tomek Korbak @tomekkorbak

We have a new eval to help keep chains of thought (CoT) monitorable: CoT Controllability. This tests whether LLMs can control their CoT, helping to evade CoT monitors. So far, the results leave us cautiously optimistic: today’s models struggle to obfuscate their reasoning in ways that undermine monitorability.

Bowen Baker retweeted

We're publishing a new evaluation suite and research paper on Chain-of-Thought (CoT) Controllability.

We find that GPT-5.4 Thinking shows low ability to obscure its reasoning—suggesting CoT monitoring remains a useful safety tool. openai.com/index/reasonin…

Bowen Baker retweeted

New Publication: Chain of Thought Monitorability

Our latest issue brief explores how Chain of Thought monitoring can help prevent certain types of harm, and why it shows promise as a new layer of defense for frontier AI safety and security: frontiermodelforum.org/issue-briefs/c…

Bowen Baker retweeted

To preserve chain-of-thought (CoT) monitorability, we must be able to measure it.

We built a framework + evaluation suite to measure CoT monitorability — 13 evaluations across 24 environments — so that we can actually tell when models verbalize targeted aspects of their internal reasoning. openai.com/index/evaluati…

I had a great time working with such an awesome team on this. Looking forward to doing more great research with them.

@MelodyGuan @MilesKWang @MicahCarroll @zehao_dou Annie Wei @Marcus_J_W Benjamin Arnav

@mia_glaese @merettm

Bowen Baker retweeted

Today, OpenAI is launching a new Alignment Research blog: a space for publishing more of our work on alignment and safety more frequently, and for a technical audience.

alignment.openai.com

Bowen Baker retweeted

We’ve developed a new way to train small AI models with internal mechanisms that are easier for humans to understand.

Language models like the ones behind ChatGPT have complex, sometimes surprising structures, and we don’t yet fully understand how they work.

This approach helps us begin to close that gap.

openai.com/index/understa…
