Zehao Dou

18 posts

@zehao_dou

Member of Technical Staff @OpenAI PhD Grad@Yale S&DS Former @PKU1898 Ex-intern @GoogleAI @MSFTResearch

1/N I’m excited to share that our latest @OpenAI experimental reasoning LLM has achieved a longstanding grand challenge in AI: gold medal-level performance on the world’s most prestigious math competition—the International Math Olympiad (IMO).

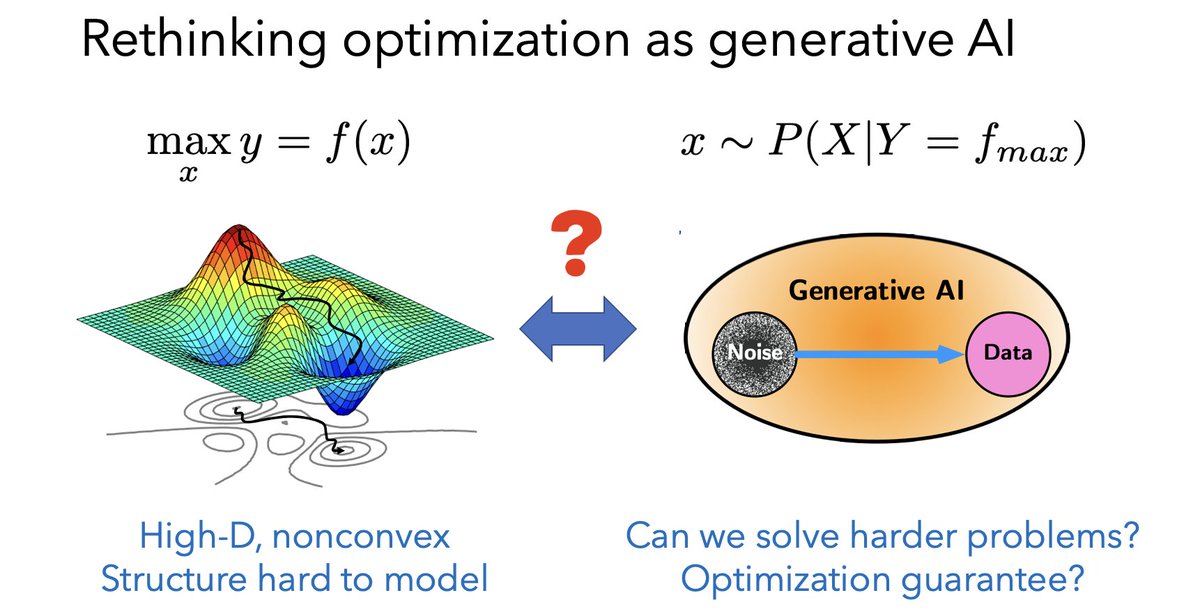

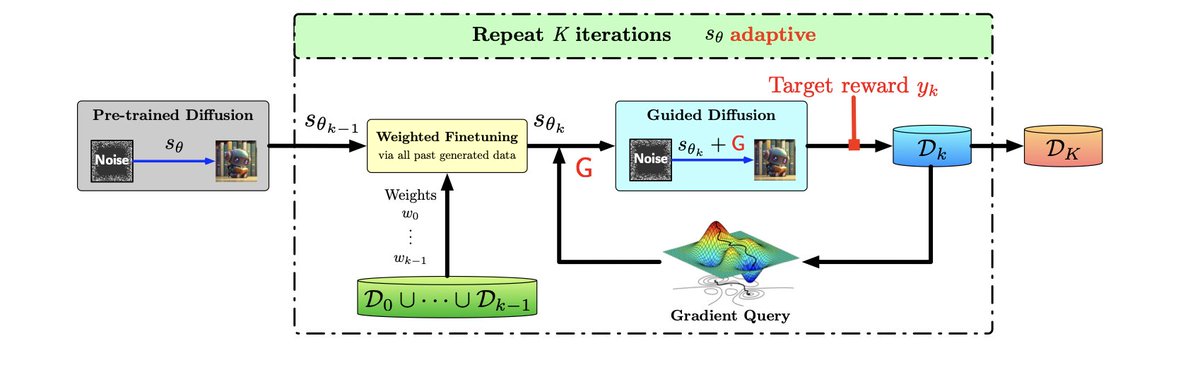

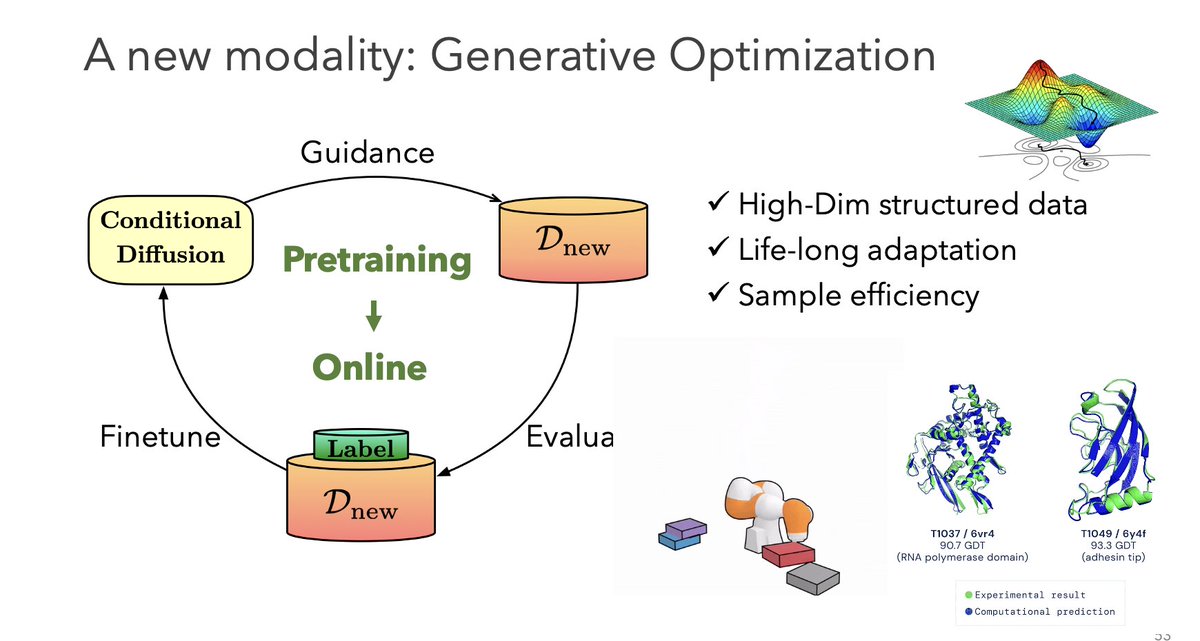

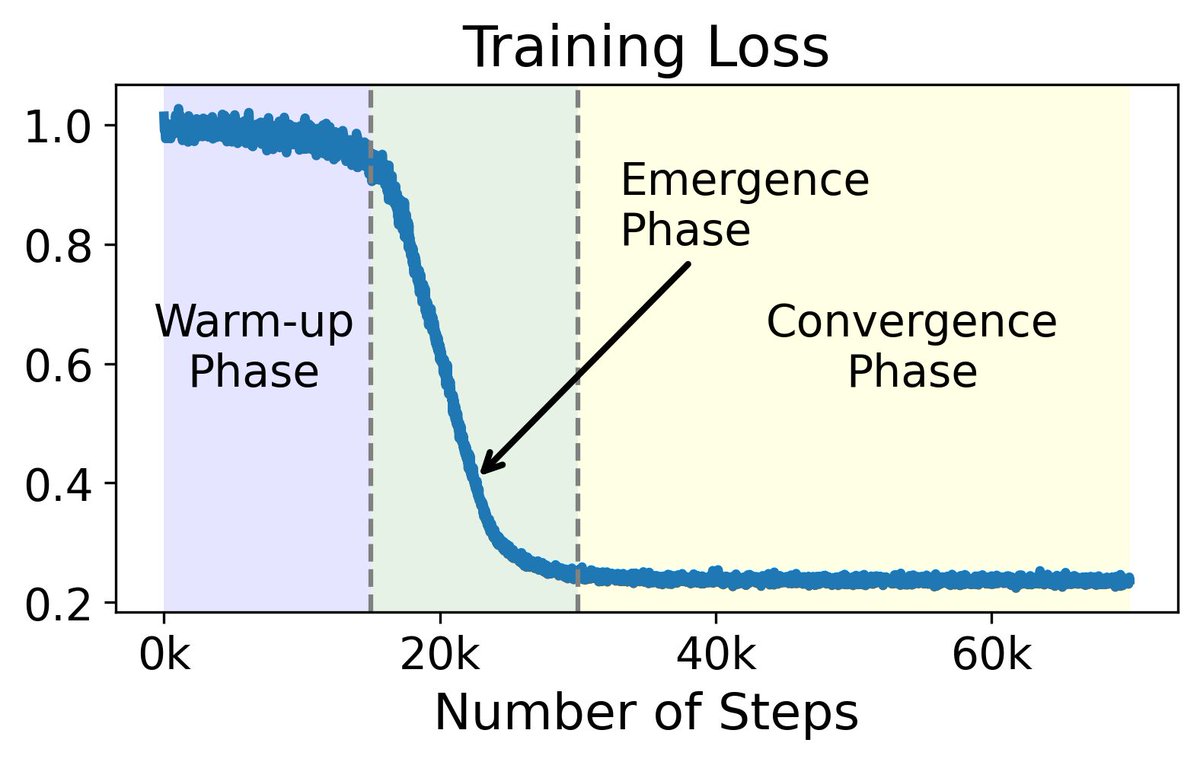

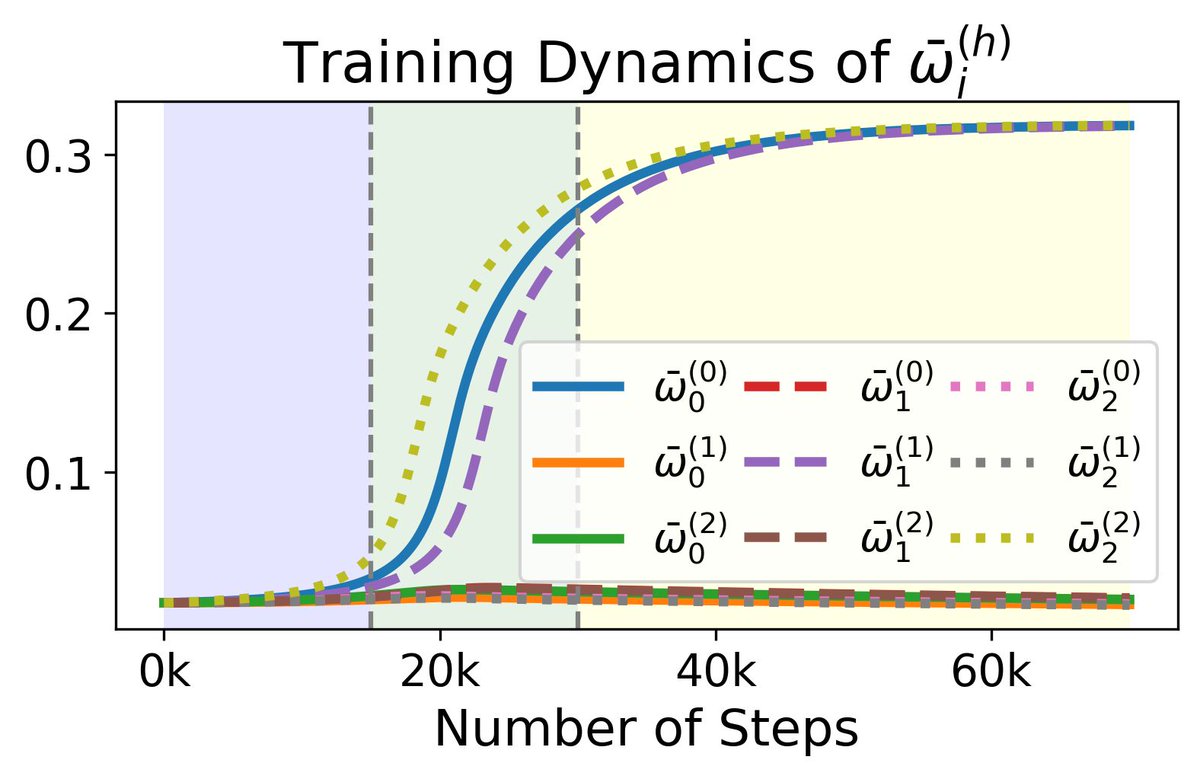

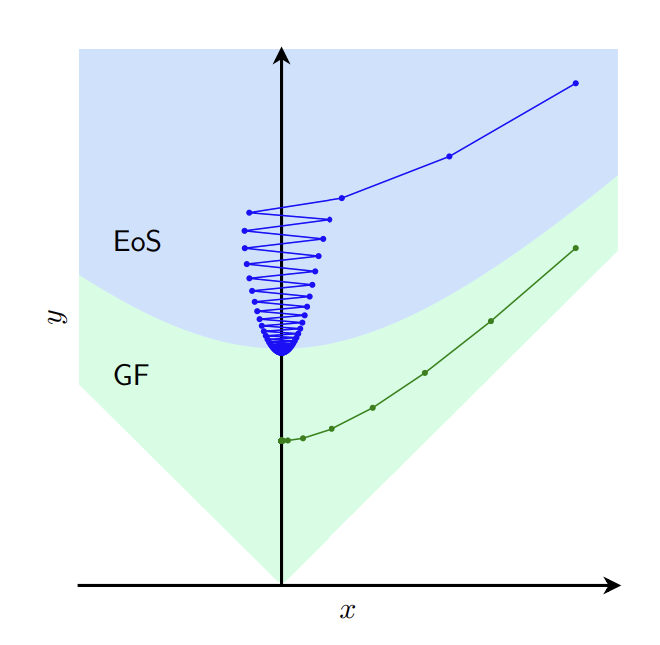

How are you using ChatGPT or Claude 3? We don't just throw a query at ChatGPT once; we query it sequentially, and ChatGPT makes sequential decisions. A natural question here is, does an LLM have the ability to make good sequential decisions? If so, why are pretrained models good at sequential decision-making? If not, what methods should be used for training? Answers to these questions and more in this paper with Xiangyu Liu, Asuman Ozdaglar, and @KaiqingZhang: arxiv.org/abs/2403.16843