The Nurse Engineer🇳🇬

@boochi_dot_dev

• ICU Nurse • Computer Scientist • NeuralMind • ML Engineer

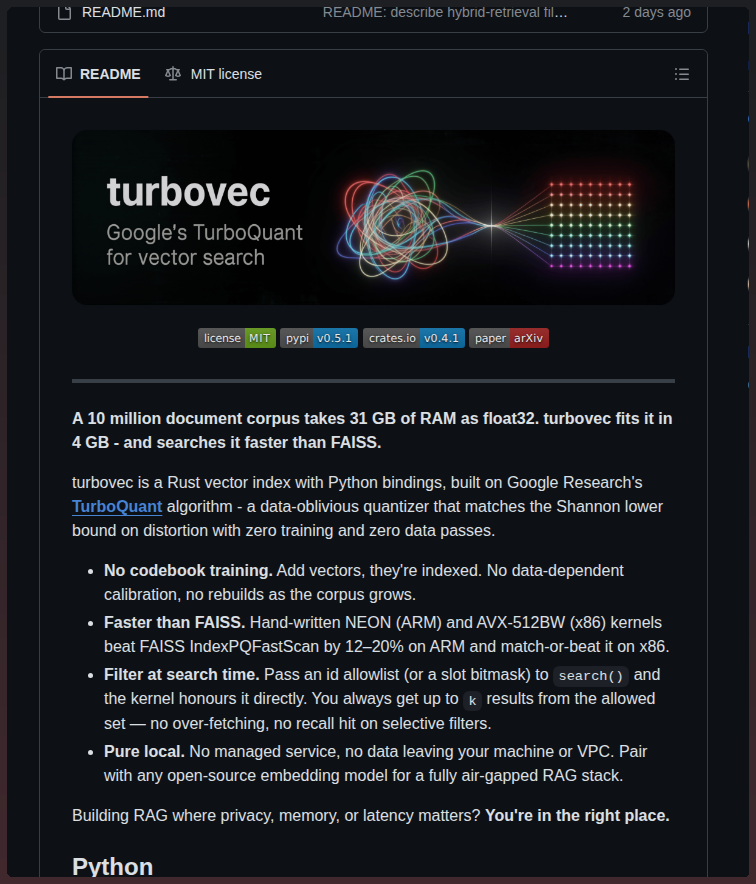

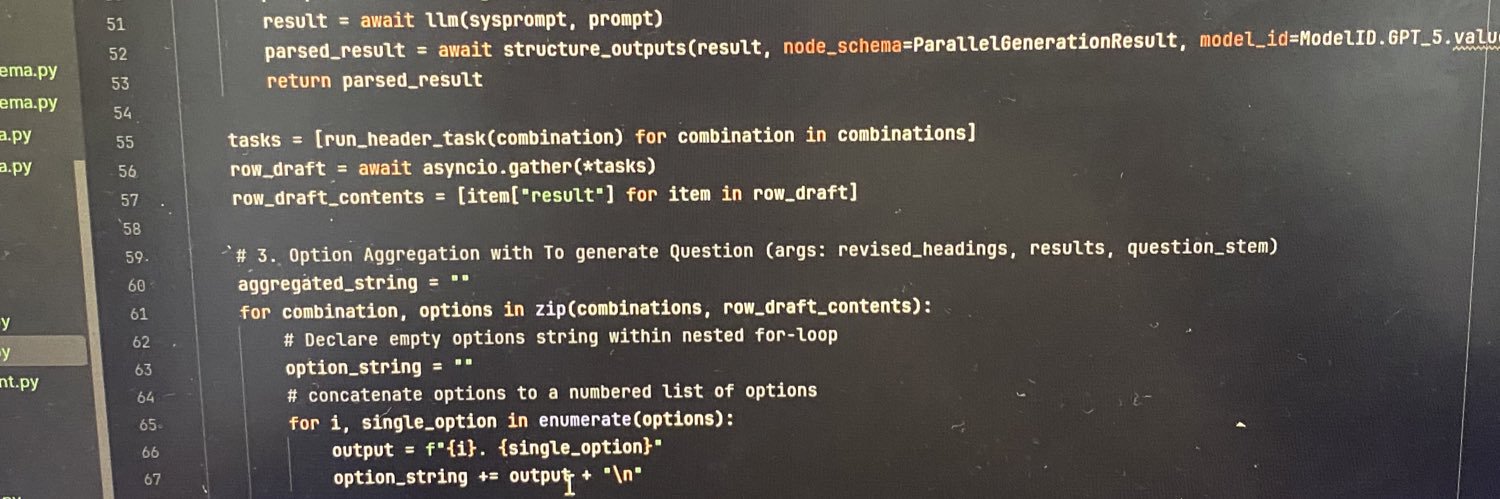

A Microsoft senior AI developer just showed how they build AI agents with Claude at Microsoft. 34 minutes. Free. By the Microsoft team. Opus 4.7 + 1,400+ pre-built MCP tools. Plug Claude into an agent → give it tools → ship to production. Worth more than any $500 vibe-coding course.

Gemini Flash 3.5 is now on CursorBench, our main coding agent eval. We’ll keep updating the leaderboard as new models come out. cursor.com/evals

What did you get a degree in?

Cosmo Shalizi: "I like to think I am not a stupid man, and I have been reading about, and coding up, neural networks since the early 1990s. But I read Vaswani et al. (2017) ["Attention Is All You Need"] multiple times, carefully, and was quite unable to grasp what "attention" was supposed to be doing. (I could follow the math.) I also read multiple tutorials, for multiple intended audiences, and got nothing from them...the sheer opacity of this literature is I think a real problem." bactra.org/notebooks/nn-a…

What's the difference between : and ;