Bridgebench

326 posts

@bridgebench

The best vibe coding benchmark in the world. Built by @bridgemindai

United States · Joined March 2026

5 Following · 1.8K Followers

Bridgebench retweeted

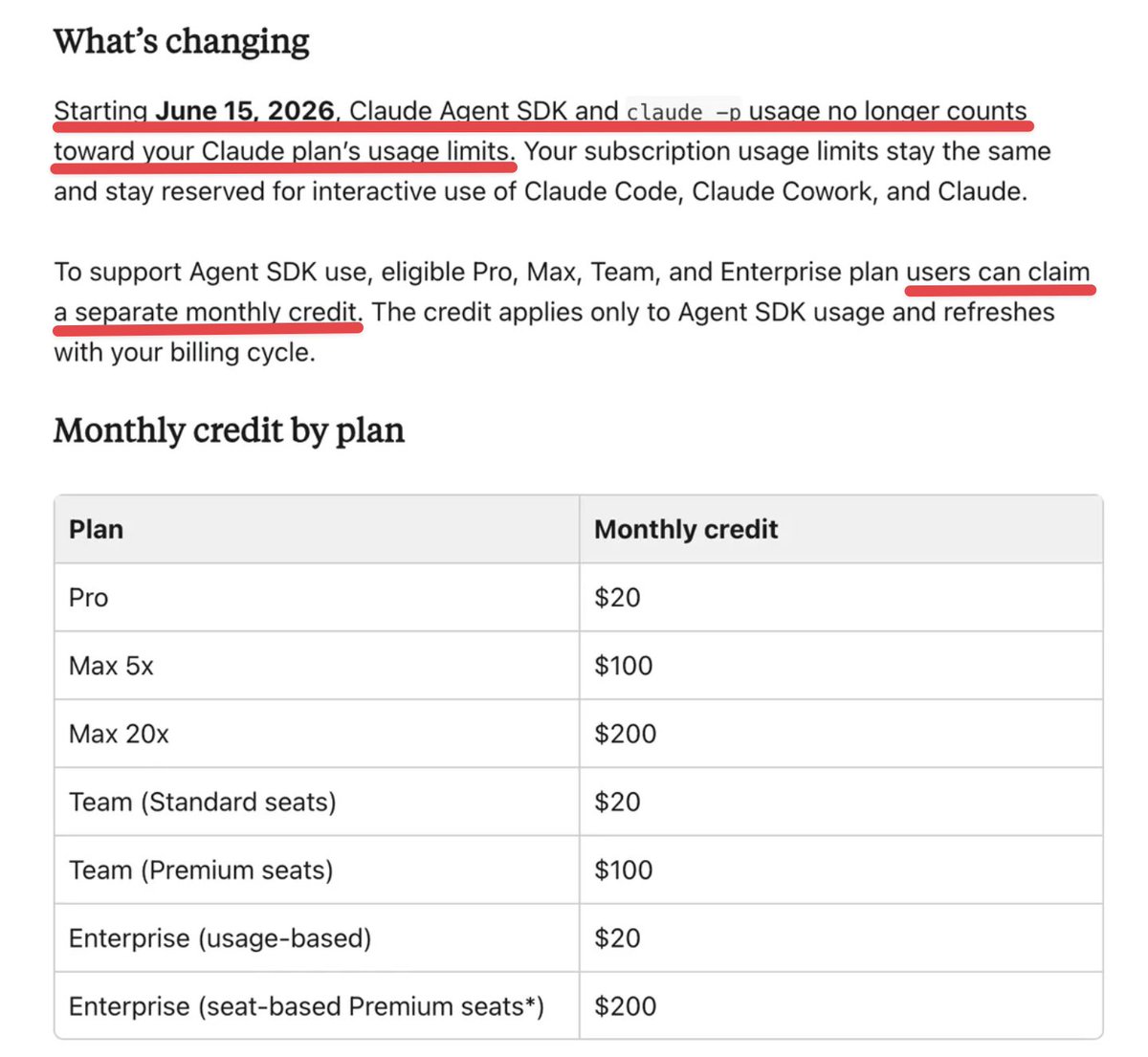

ANTHROPIC JUST QUIETLY NERFED EVERY CLAUDE SUBSCRIPTION

Starting June 15, Agent SDK and claude -p usage no longer counts toward your subscription limits.

Sounds like a free upgrade, right?

It's not.

Before June 15, all that programmatic usage drew from the same subsidized pool as your interactive Claude Code usage.

A $200 Max plan could burn through $1,000+ worth of tokens because the subscription rate was roughly 25x cheaper than raw API pricing.

Now Anthropic is splitting it into two separate pools.

Your interactive usage stays the same.

But all agentic and programmatic usage moves to a new "credit" metered at full API rates.

The credits:

Pro — $20

Max 5x — $100

Max 20x — $200

At full API rates, $200 lasts maybe a few days of heavy agentic usage.

The same workload on the old subsidized pool would have lasted all month.

Anthropic is framing this as "we're giving you free credits."

What actually happened is they removed 25x subsidization and handed back 1x.

This is the third billing policy change in six weeks.

In April they banned third-party agents from subscriptions entirely.

Now they reversed that but killed the economics that made it useful.

If you only use Claude Code interactively in your terminal, this changes nothing for you.

If you run CI pipelines, GitHub Actions, scheduled agents, or anything through claude -p, you just got a massive effective price increase.

The compute arbitrage era is over.
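
The arithmetic in this post can be checked with a short sketch. The plan prices, credit amounts, the "$1,000+" figure, and the "25x" figure come from the post; the daily spend is a hypothetical value chosen only for illustration, and the function names are made up for this example. Note that $1,000 of tokens on a $200 plan works out to a 5x ratio, while a 25x ratio on the same plan would correspond to about $5,000 of API-priced usage.

```python
# Sketch of the subsidy arithmetic described in the post.
# All function names and the daily-spend figure are illustrative assumptions.

def implied_multiplier(plan_price_usd: float, api_value_usd: float) -> float:
    """How many dollars of API-priced usage each subscription dollar bought."""
    return api_value_usd / plan_price_usd


def api_value_at_multiplier(plan_price_usd: float, multiplier: float) -> float:
    """API-priced usage a plan would cover at a given subsidy multiplier."""
    return plan_price_usd * multiplier


def days_of_credit(credit_usd: float, daily_api_spend_usd: float) -> float:
    """How many days a metered credit lasts at a constant daily spend."""
    return credit_usd / daily_api_spend_usd


# Monthly credits per plan, as listed in the post.
CREDITS_USD = {"Pro": 20, "Max 5x": 100, "Max 20x": 200}

# Hypothetical heavy agentic workload, billed at full API rates (assumption).
HYPOTHETICAL_DAILY_SPEND_USD = 50.0

if __name__ == "__main__":
    print("Multiplier implied by $1,000 on a $200 plan:",
          implied_multiplier(200, 1000))
    print("API value a $200 plan covers at 25x:",
          api_value_at_multiplier(200, 25))
    for plan, credit in CREDITS_USD.items():
        days = days_of_credit(credit, HYPOTHETICAL_DAILY_SPEND_USD)
        print(f"{plan}: ${credit} credit lasts {days:.1f} days")
```

Swapping in your own measured daily spend gives a rough estimate of how long each plan's credit would last.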

RT @bridgemindai: xAI just dropped Grok Build.

Their agentic CLI for coding.

I just bought SuperGrok Heavy to test it.

The AI coding wa…

Bridgebench retweeted

How To Use Claude Code, Codex, and Cursor for Multi-Agent Vibe Coding x.com/i/broadcasts/1…

Bridgebench retweeted

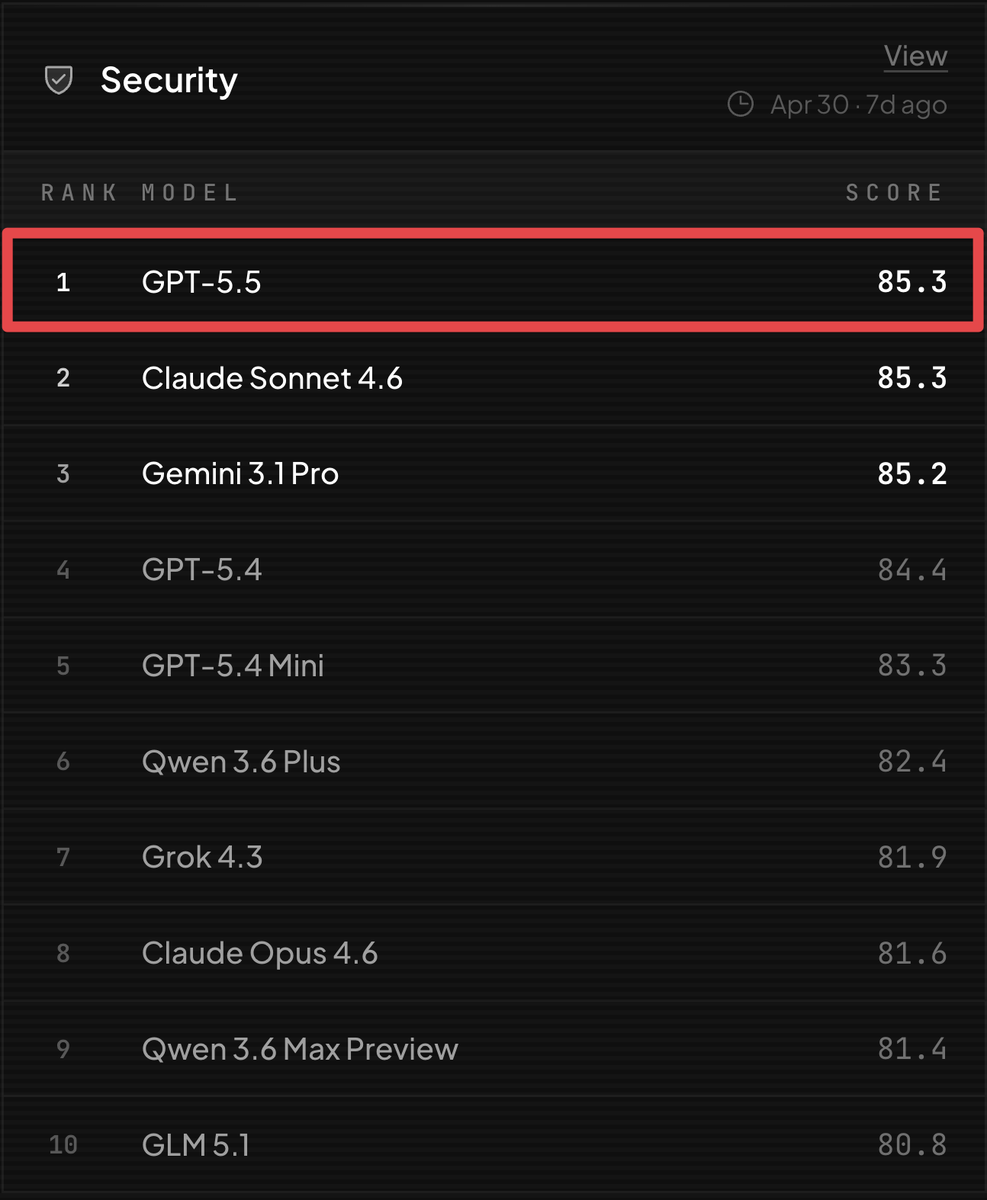

GPT 5.5 is the #1 security model on BridgeBench.

This matters more than most people think.

Vibe coders are shipping production apps without ever reading the code.

If your model isn't writing secure code by default, you could be shipping vulnerabilities to production on every prompt.

BridgeMind got hit with 213 million attack requests yesterday.

Security isn't optional.

Anthropic and OpenAI need to be prioritizing security as a core feature of every frontier model.

Not just intelligence.

Not just speed.

Defense.

bridgebench.ai

@Rizz3D @bridgemindai Appreciate the call during the stream — Cloudflare was the right move. Newsletter dropping today.

@bridgemindai I was in the live stream and recommended Cloudflare. Happy you went down that route :D

Excited for the newsletter

Bridgebench retweeted

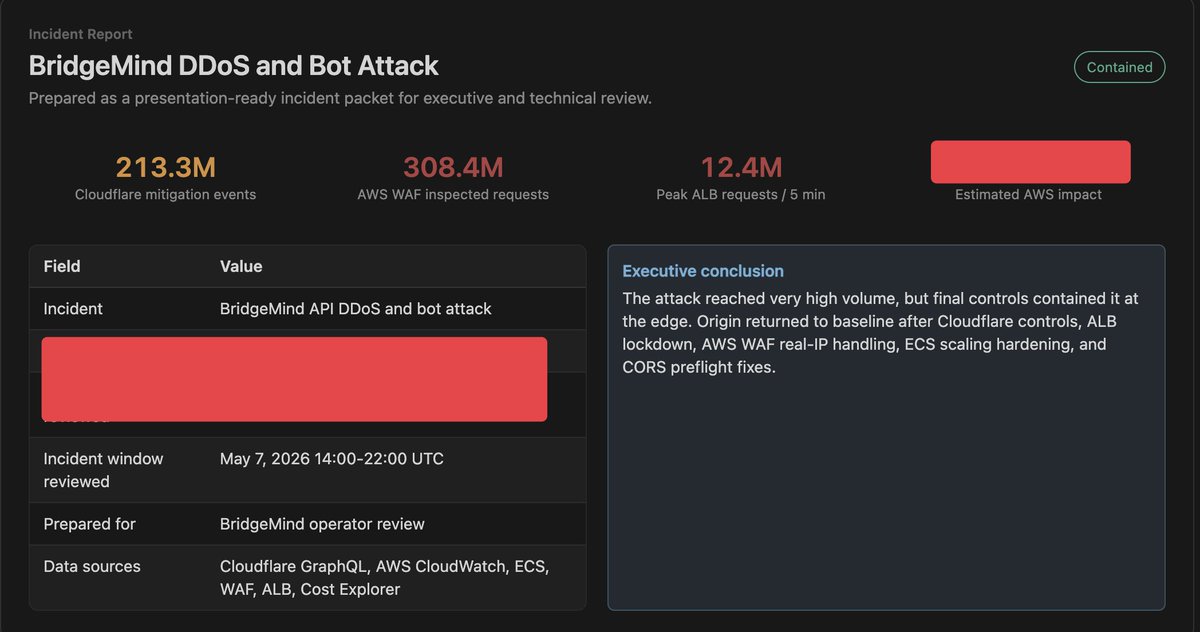

213 million attack requests hit BridgeMind yesterday.

Claude Code stopped it in 10 minutes.

308M WAF inspections.

12.4M peak requests every 5 minutes.

API targeted directly.

One prompt to Claude Opus 4.7.

It identified the attack, hardened WAF rules, scaled infrastructure, migrated to Cloudflare, and got us back online in under an hour.

No DevOps team. No security engineer.

Just me and Claude Code.

Full incident report dropping today for BridgeMind newsletter subscribers including exactly how much this attack cost us.

Subscribe here: bridgemind.ai/newsletter

Bridgebench retweeted

I just launched 50 Claude Code subagents on Claude Opus 4.7 and only hit 48% of my five-hour limit.

This changes everything.

Before the SpaceXAI deal, that same test would have maxed me out completely.

I cancelled my $200/month Max plan twice because of it.

Peak hour limits are gone.

Five-hour limits doubled.

I ran 50 agents in 30 minutes and still had over half my usage left.

Anthropic heard us.

Claude Code is back.

Full test and SpaceXAI partnership breakdown below.

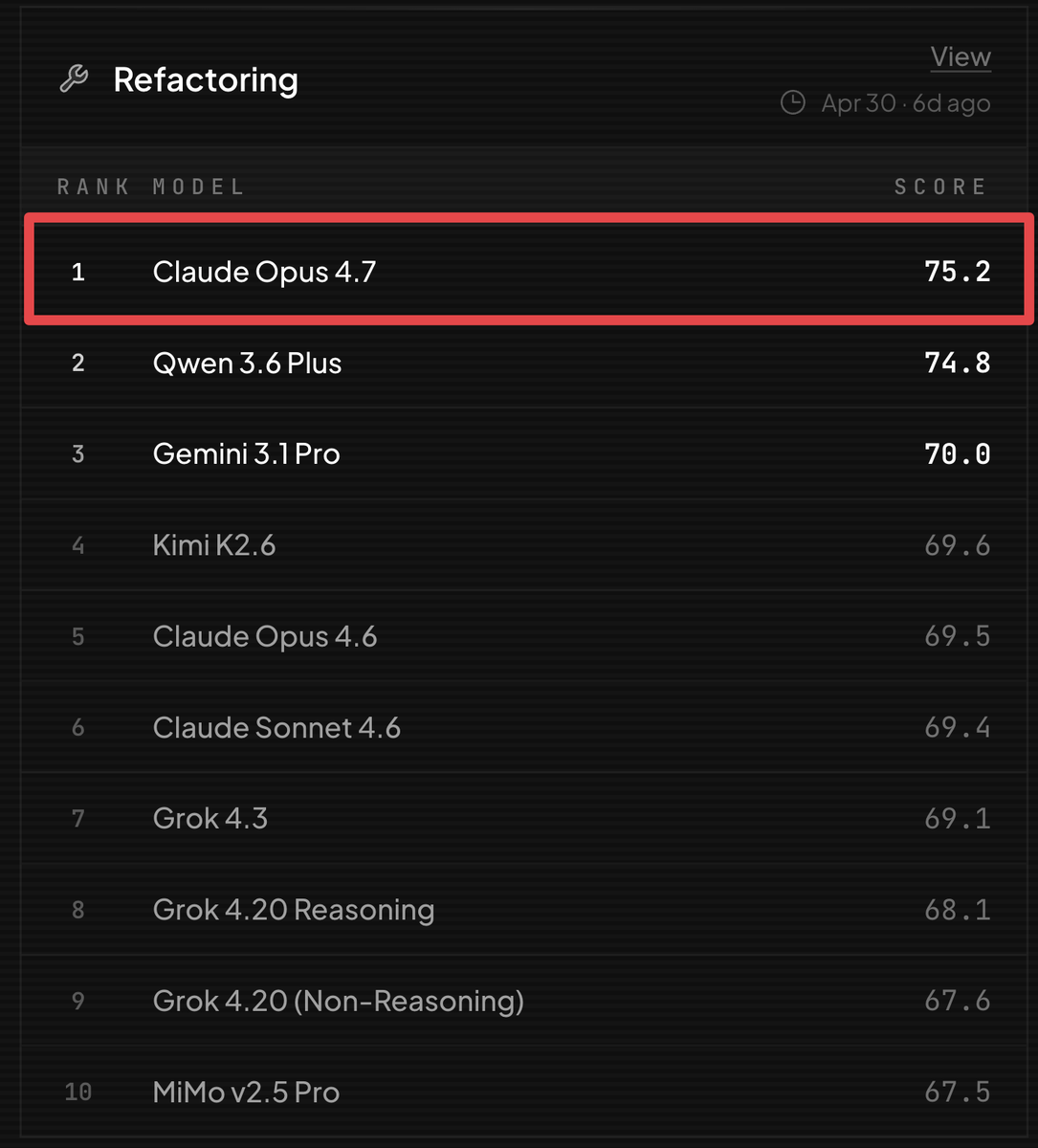

Claude Opus 4.7 is the #1 refactoring model on BridgeBench.

GPT 5.5 is nowhere on the leaderboard.

GPT 5.5 is the most intelligent model on the market. But when it comes to refactoring existing code, Claude Opus 4.7 is untouchable.

Every model has a strength.

Know when to use each one.

bridgebench.ai

@bridgebench so where can we find the benchmark? huggingface or an open-source repo?

@bridgebench Claude Opus 4.7 is better than GPT 5.5 in a lot of key categories

Bridgebench retweeted

I might never hit my Claude Code rate limits again.

After yesterday's Anthropic x SpaceXAI deal, peak hour rate limits are being removed completely.

Five-hour limits are increasing by 100%.

I did not see this coming.

I cancelled my Claude Max plan twice over rate limits.

I switched to Codex.

Now Anthropic gets access to one of the largest supercomputers on the planet and overnight the rate limit problem is disappearing.

If this holds, Claude Code is back.

This is insane.
