I just built a full SAP ESG dashboard mockup for a client in under 60 seconds. Here's how 👇

The workflow:

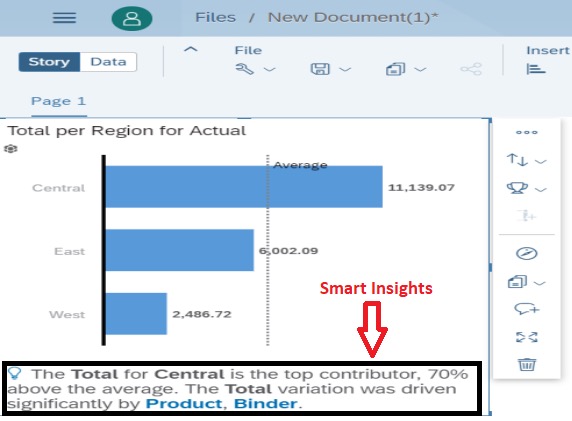

1. Grabbed the official SAP S/4HANA Web UI Kit + SAP Analytics Cloud Dashboard Hi-Fi Kit from GitHub

2. Linked them into Figma as design libraries (free - SAP provides these publicly)

3. Connected Figma to Claude via the MCP connector

4. Asked Opus 4.6 one prompt: build me an ESG dashboard using the SAP Fiori Horizon design language for a mid-market client

What it generated:

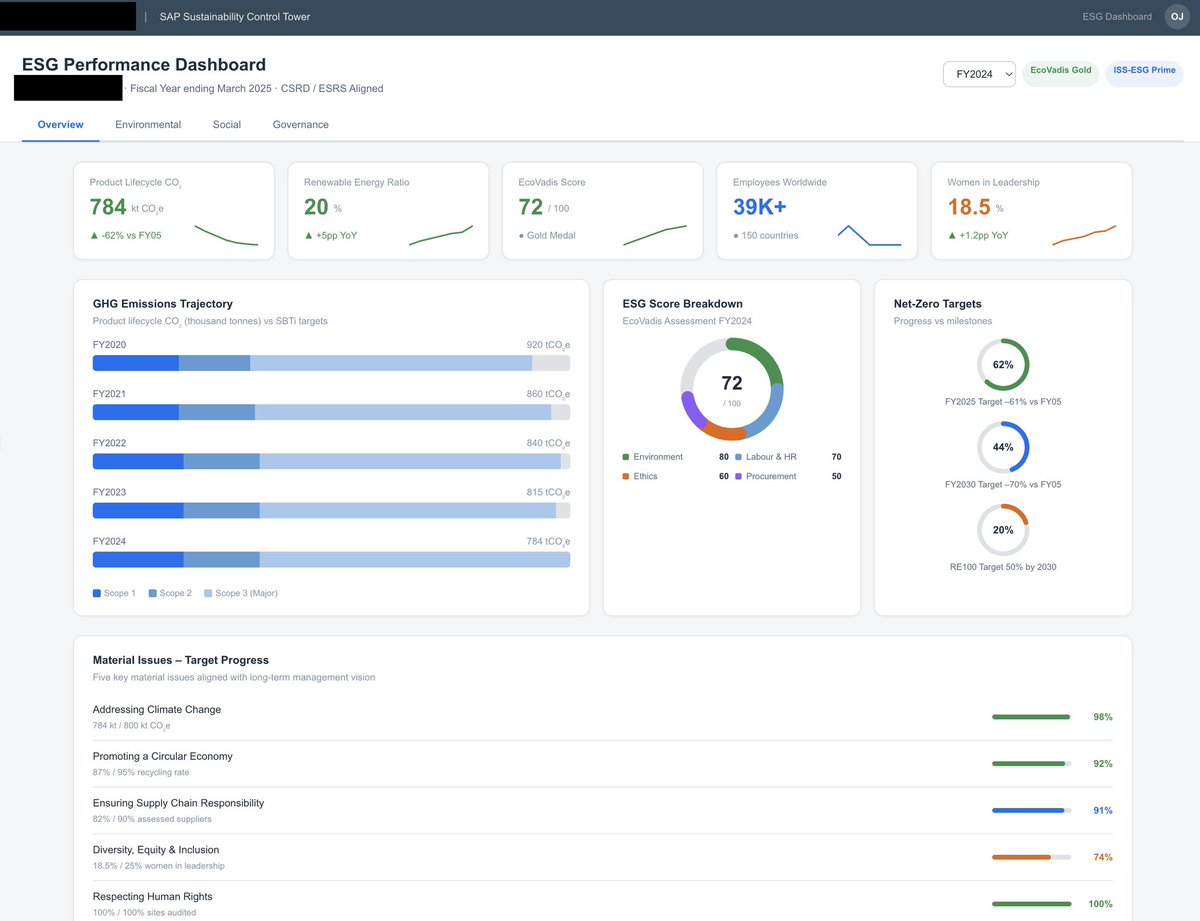

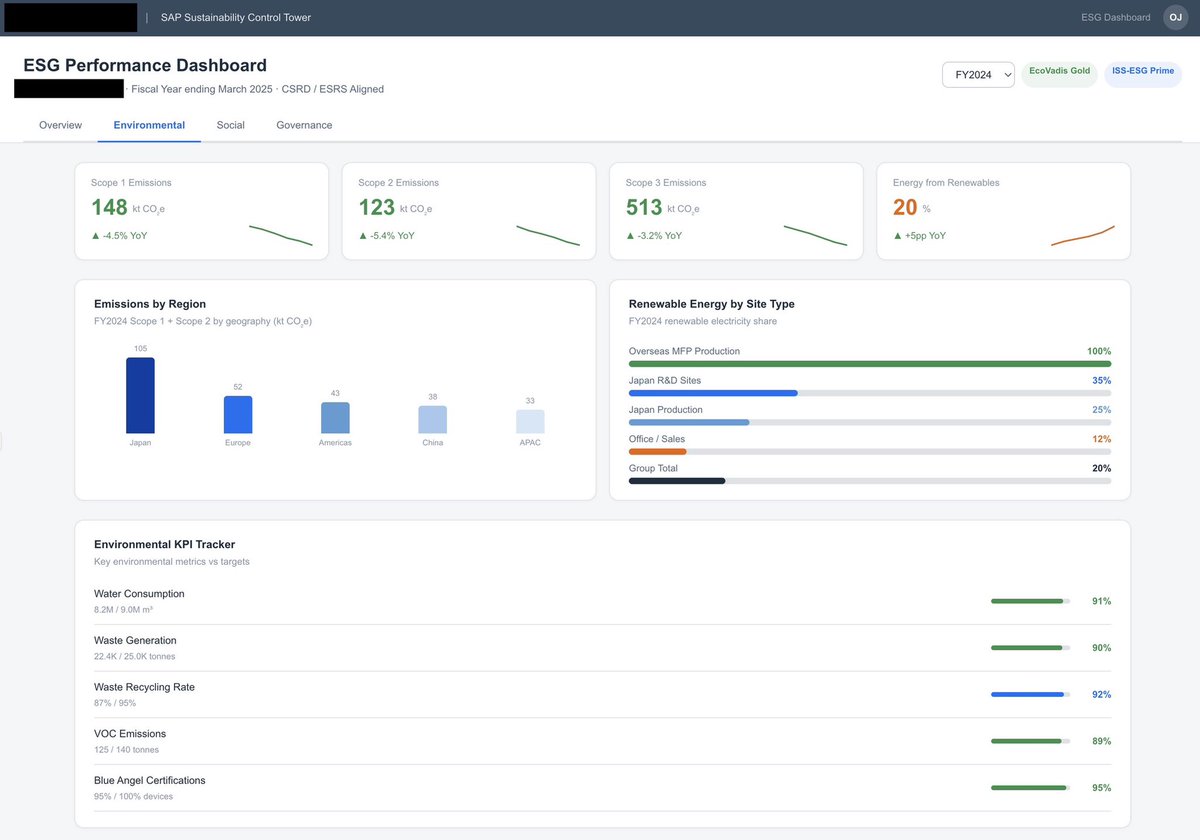

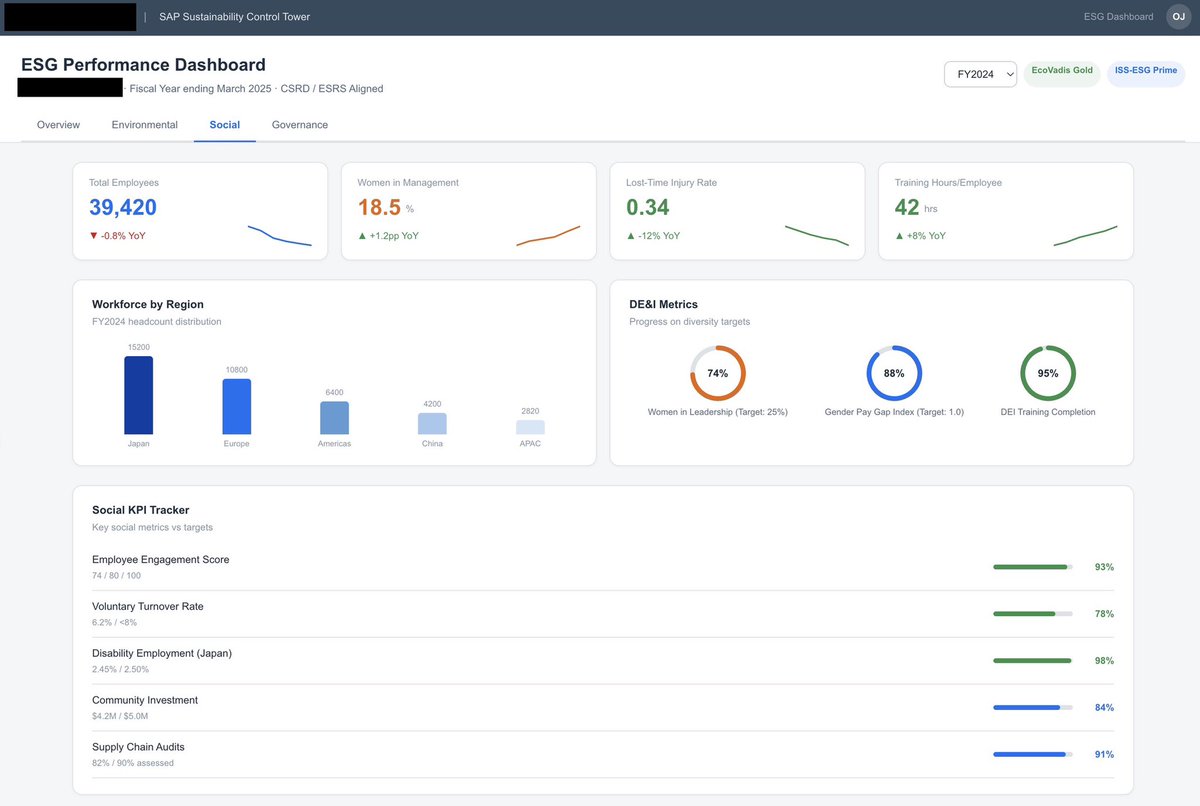

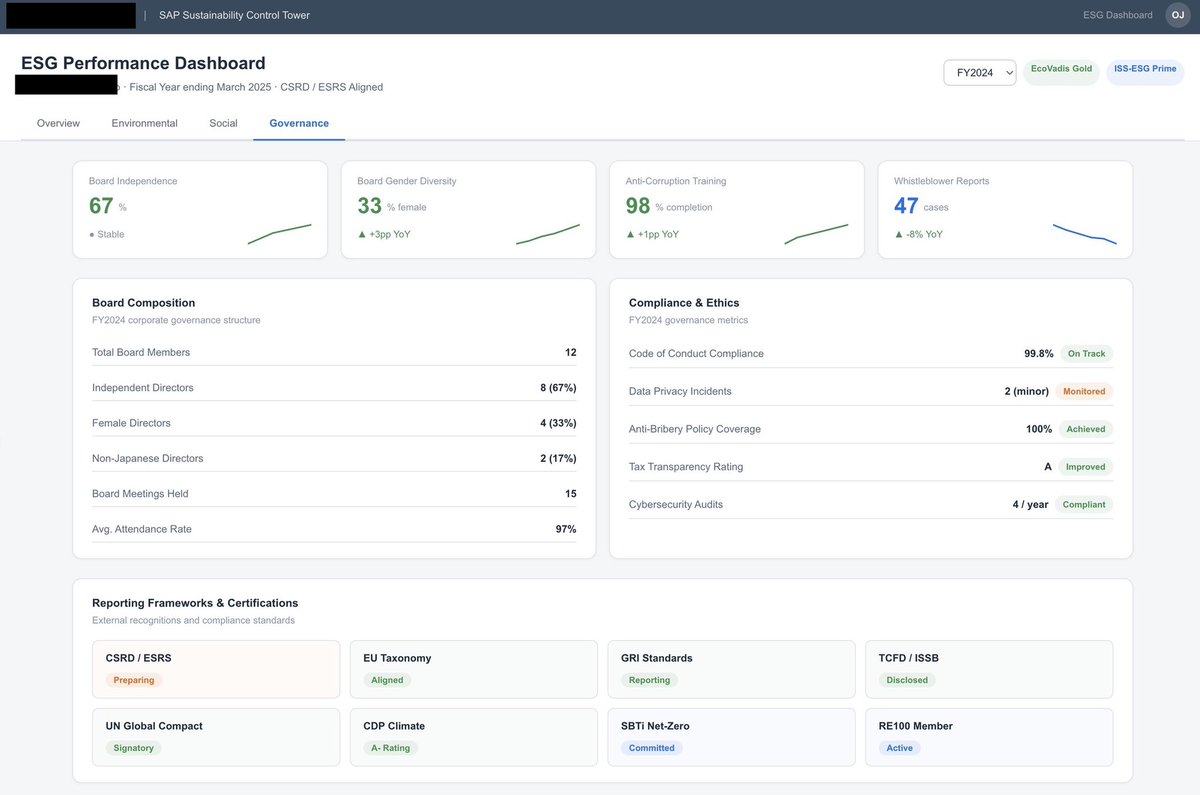

→ Full SAP Fiori shell bar, tab navigation, KPI tiles with sparklines

→ 4 tabs: Overview, Environmental, Social, Governance

→ GHG emissions tracking with Scope 1/2/3 breakdowns

→ EcoVadis scoring, net-zero target progress rings

→ DE&I metrics, board composition, compliance frameworks

→ All using the correct Morning Horizon theme palette and SAP design patterns

The model understood SAP Sustainability Control Tower layout patterns, CSRD/ESRS reporting structures, and standard ESG KPIs, then assembled them into a production-quality interactive React component.
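For a rough sense of what sits behind the tabs and the Scope 1/2/3 breakdown, here's a minimal sketch of the data such a dashboard might compute. This is not the generated component's code (the post doesn't include it): the names `Tab`, `GhgEmissions`, `totalEmissions`, and `scopeShares` are hypothetical, and the sample figures are made up.

```typescript
// Hypothetical data shape for illustration only; not taken from the generated artifact.
type Tab = "Overview" | "Environmental" | "Social" | "Governance";

interface GhgEmissions {
  scope1: number; // direct emissions, in tCO2e
  scope2: number; // purchased energy, in tCO2e
  scope3: number; // value chain, in tCO2e
}

// The four tabs listed in the post.
const TABS: Tab[] = ["Overview", "Environmental", "Social", "Governance"];

// Total emissions across all three scopes.
function totalEmissions(e: GhgEmissions): number {
  return e.scope1 + e.scope2 + e.scope3;
}

// Percentage share of each scope, rounded to one decimal place.
// Returns all zeros when there are no emissions, to avoid dividing by zero.
function scopeShares(e: GhgEmissions): GhgEmissions {
  const total = totalEmissions(e);
  if (total === 0) return { scope1: 0, scope2: 0, scope3: 0 };
  const pct = (v: number): number => Math.round((v / total) * 1000) / 10;
  return { scope1: pct(e.scope1), scope2: pct(e.scope2), scope3: pct(e.scope3) };
}

// Made-up illustrative figures, not client data.
const sample: GhgEmissions = { scope1: 100, scope2: 300, scope3: 600 };
```

A KPI tile or breakdown chart would then read from `scopeShares(sample)` rather than hard-coding percentages, so the visuals stay consistent with the underlying totals.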

No templates. No drag and drop. One prompt.

This changes the game for SAP consultants doing pre-sales. Instead of spending days mocking up what a solution could look like, you can generate a client-ready demo in a conversation.

The Figma → Claude → artifact pipeline is genuinely the fastest path from design system to working prototype I've ever seen.