Cacheon

12 posts

@cacheon_ai

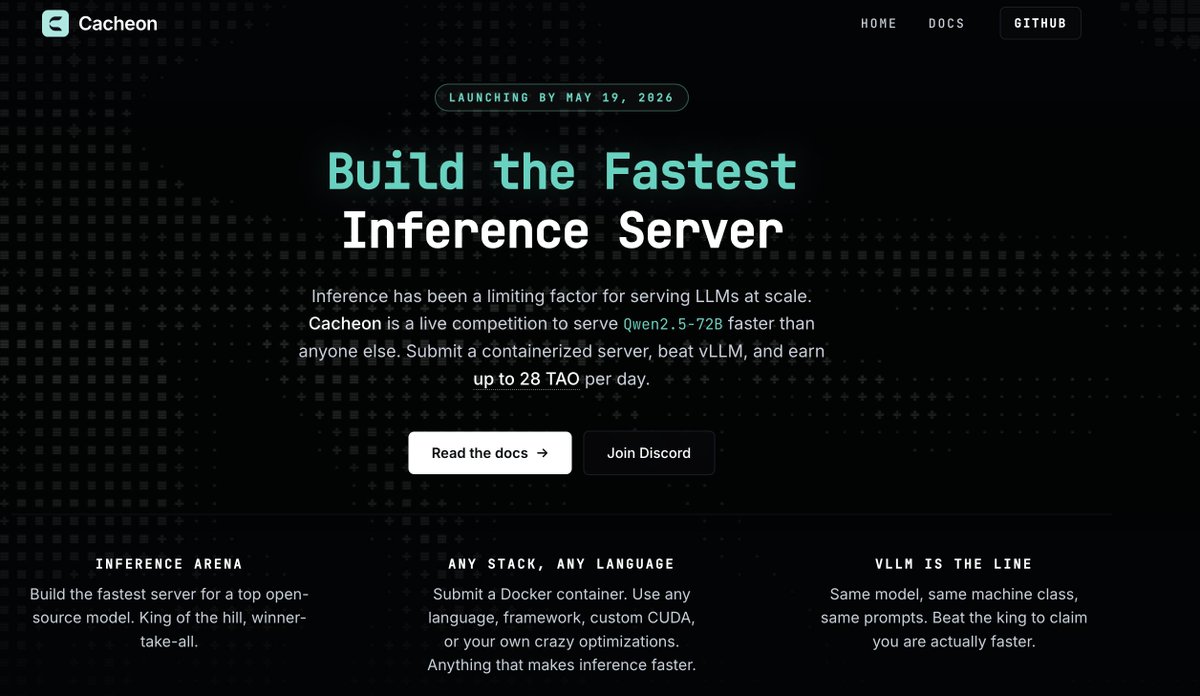

Inference arena for open-source LLMs. Build the fastest correct server. Win real rewards.

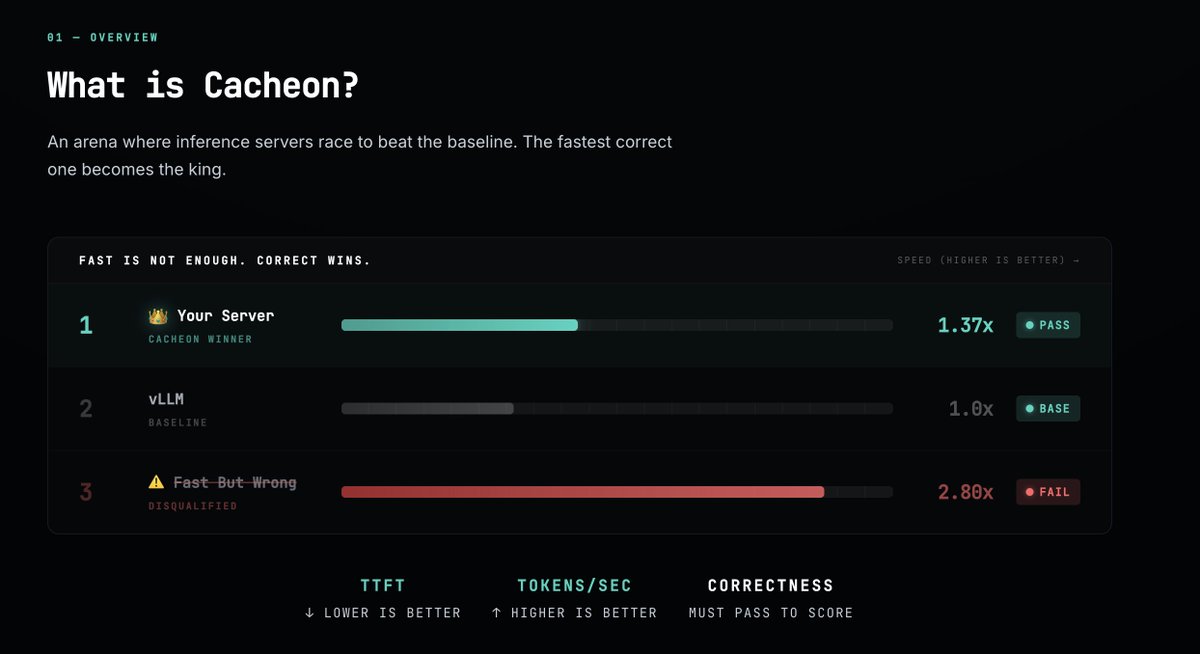

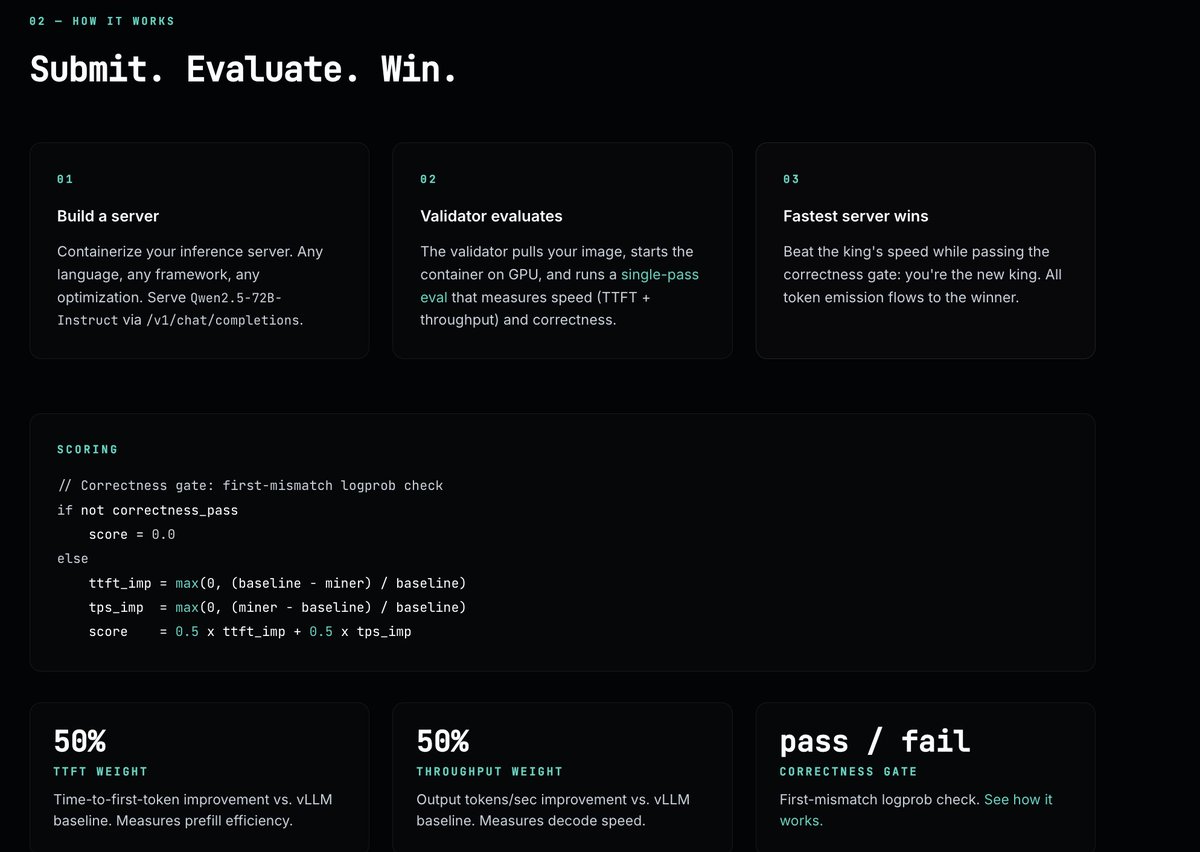

🚨 Subnet Summer AMA X @cacheon_ai (SN14) 🚨

🕐 6:30 PM GMT (Thursday, May 14)

Join us as we sit down with the team behind Cacheon (Subnet 14) on Bittensor to explore how they're building a decentralised inference competition network for open-source AI models.

Cacheon is developing a containerised inference benchmarking system, where miners submit Docker-packaged inference servers and validators score them on speed and correctness when serving open-source models. Instead of relying on centralised inference providers, Cacheon introduces a continuously evolving competitive environment where performance is economically incentivised and optimised over time.

At its core, Cacheon represents a shift from centralised AI inference → decentralised, adversarial benchmarking. By leveraging Bittensor's incentive design, the network creates a feedback loop where faster and more accurate inference servers rise to the top, ultimately driving the best open-source model serving infrastructure on the planet.

This AMA is your chance to explore how Cacheon is turning inference performance into a decentralised, market-driven competition.

We'll cover:

- What Cacheon (SN14) is building
- How miners submit and optimise containerised inference servers
- How validators score speed and correctness
- Why open-source model serving matters for decentralised AI
- Token incentives behind high-performance inference
- Real-world use cases and applications
- Early progress and roadmap
- Live Q&A with the team

Cacheon is pushing Bittensor into one of the most fundamental layers of AI infrastructure: inference at scale.

Set your reminder 🔔

Launching Cacheon: an open, incentivized competition for LLM inference optimization. As model quality converges, the next frontier is serving models economically at scale: lower latency, higher throughput, and lower cost per token. Cacheon turns that problem into a live arena with continuous evaluation. Developers submit containerized inference servers, which are benchmarked on standardized hardware against a pinned vLLM baseline. The fastest server that preserves output correctness wins. The goal is to make better inference systems discoverable, measurable, deployable, and rewarded in the open. Mainnet launches by May 19. Learn more: cacheon.ai
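To make the "fastest server that preserves output correctness wins" rule concrete, here is a minimal Python sketch of how such a selection could work. It is an illustration only, not Cacheon's actual validator logic: the `Submission` type, the `pick_winner` function, the use of tokens per second as the speed metric, exact string matching as the correctness check, and the requirement to beat the baseline are all assumptions made for this example.

```python
from dataclasses import dataclass
from typing import Optional, Sequence


@dataclass(frozen=True)
class Submission:
    """Hypothetical record of one benchmarked inference server."""
    name: str
    tokens_per_second: float  # assumed speed metric, measured on shared hardware
    outputs: Sequence[str]    # completions for a fixed prompt set


def pick_winner(
    submissions: Sequence[Submission],
    baseline_outputs: Sequence[str],
    baseline_tps: float,
) -> Optional[Submission]:
    """Return the fastest submission that matches the baseline outputs.

    Assumptions (not from the source): correctness means exact equality
    with the baseline outputs, and a winner must be faster than the baseline.
    """
    expected = list(baseline_outputs)
    # Correctness gate first: speed is irrelevant if the outputs differ.
    correct = [s for s in submissions if list(s.outputs) == expected]
    # Only servers that actually beat the baseline are eligible.
    eligible = [s for s in correct if s.tokens_per_second > baseline_tps]
    return max(eligible, key=lambda s: s.tokens_per_second, default=None)


if __name__ == "__main__":
    baseline = ["Paris", "4"]
    entries = [
        Submission("fast_but_wrong", 900.0, ["Paris", "5"]),
        Submission("fast_and_correct", 500.0, ["Paris", "4"]),
        Submission("slow_and_correct", 100.0, ["Paris", "4"]),
    ]
    winner = pick_winner(entries, baseline, baseline_tps=300.0)
    print(winner.name if winner else "no winner")
```

The key design point is the ordering: correctness acts as a gate before any speed comparison, so a server cannot win by trading accuracy for throughput.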
