caroline ᵍᵐ • ᴗ •

10.6K posts

@caroline

twittering away since july 14, 2006. doing what i #love to do ☆\(*^▽^*)/☆

Today I published an interview with an anonymous Meta employee who has worked at the company for over a decade and wanted, for the first time ever, to let the world know how horrible it feels to be inside. sfstandard.com/pacific-standa…

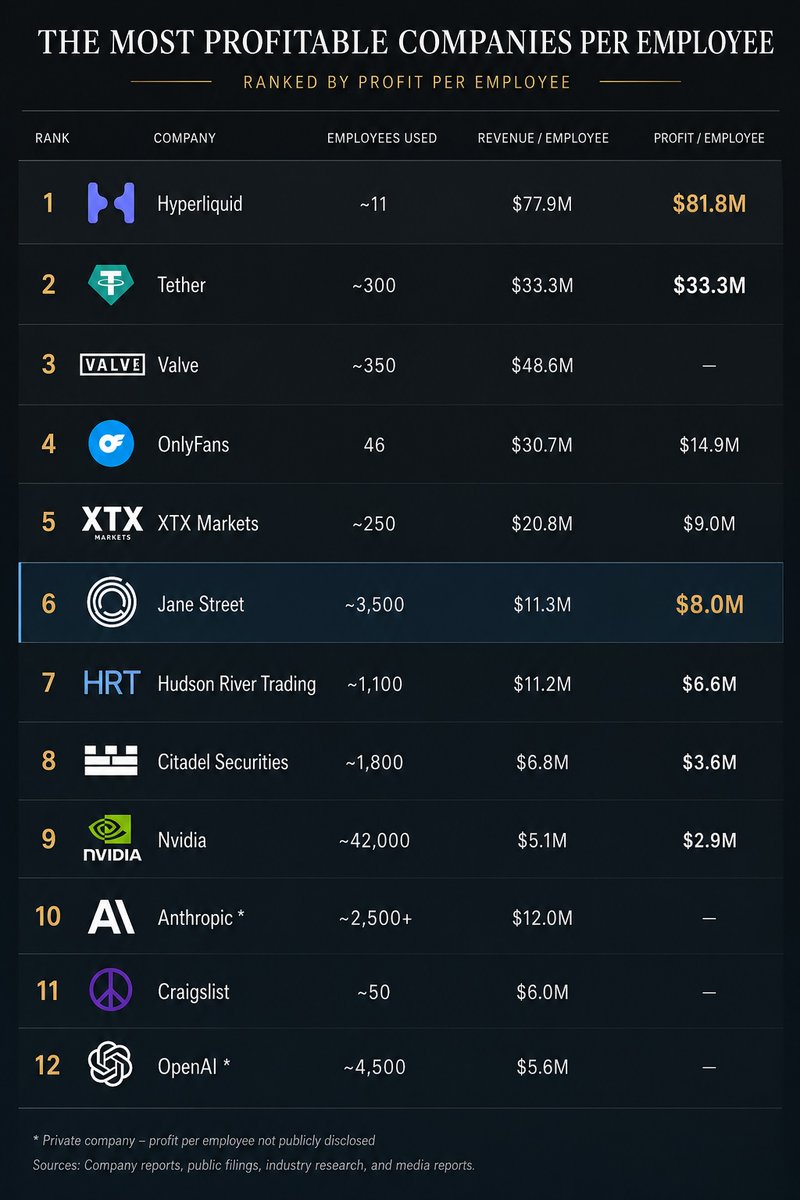

I am surprised more people are not paying attention to this update from Anthropic on its stock policy. This seems like a potential bombshell. There is purportedly an active secondary market in Anthropic stock or derivatives, including on fairly reputable (or at least well-known) platforms like Forge. Anthropic is calling them out *specifically*, by name, and essentially *saying* 100% of these are illegal. Some may be frauds (people selling Anthropic stock or interests in Anthropic stock that they don't truly own), but more likely many are legit attempts at transferring Anthropic equity (directly, as SPV shares, or as some type of 'beneficial interest' or future, etc.). Anthropic appears to be saying it will treat all these transfers as void. I don't have access to their terms, but it's very interesting to think about what this could mean. Do the 'first purported sellers' in the chain potentially have an opportunity to double-dip? Do the first seller and all downstream buyers get the entire entitlement nuked? Anthropic is threatening that--are they just bluffing? If they're not bluffing, what litigation is likely to ensue? This can get into really esoteric areas of corporate law that depend on exactly how the transfer restrictions are drafted, as well as the language around how violations of transfer restrictions are treated--for example, if the transfers are merely voidABLE then downstream buyers can assert various equitable claims/defenses, but if they are VOID ab initio then in some jurisdictions that forecloses equitable defenses.

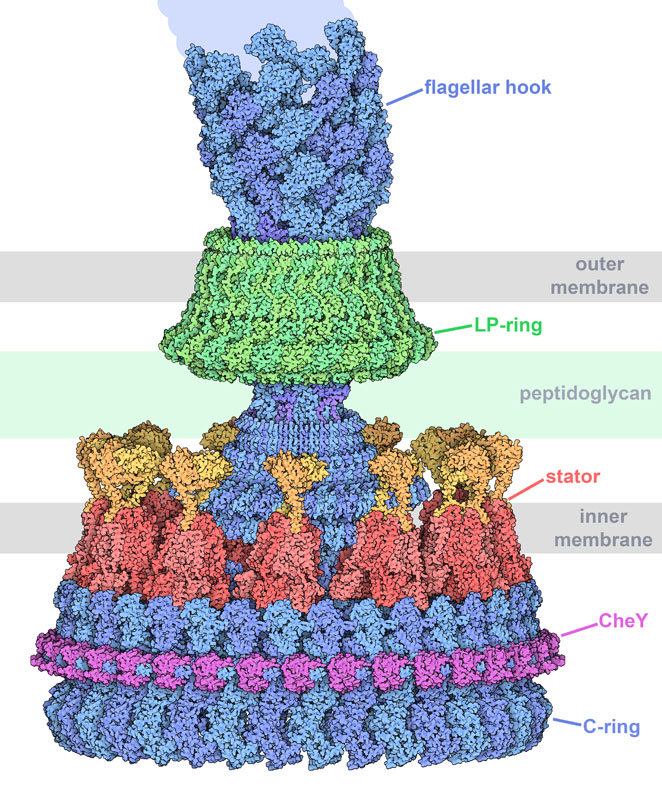

Bacteria move around using a molecular machine called the flagellar motor that rotates faster than the flywheel of a race car engine and switches directions in an instant. After 50 yrs, scientists have finally figured out how it works. “My lifelong quest is now fulfilled.” Link⤵️

I can’t tell if this guy is an Olympian or if someone just pushed him down the hill?