POV: You decided to join the best AI research house in SF

Cassian


@cassian_33

You only know my name, not my story. MOD @SentientAGI


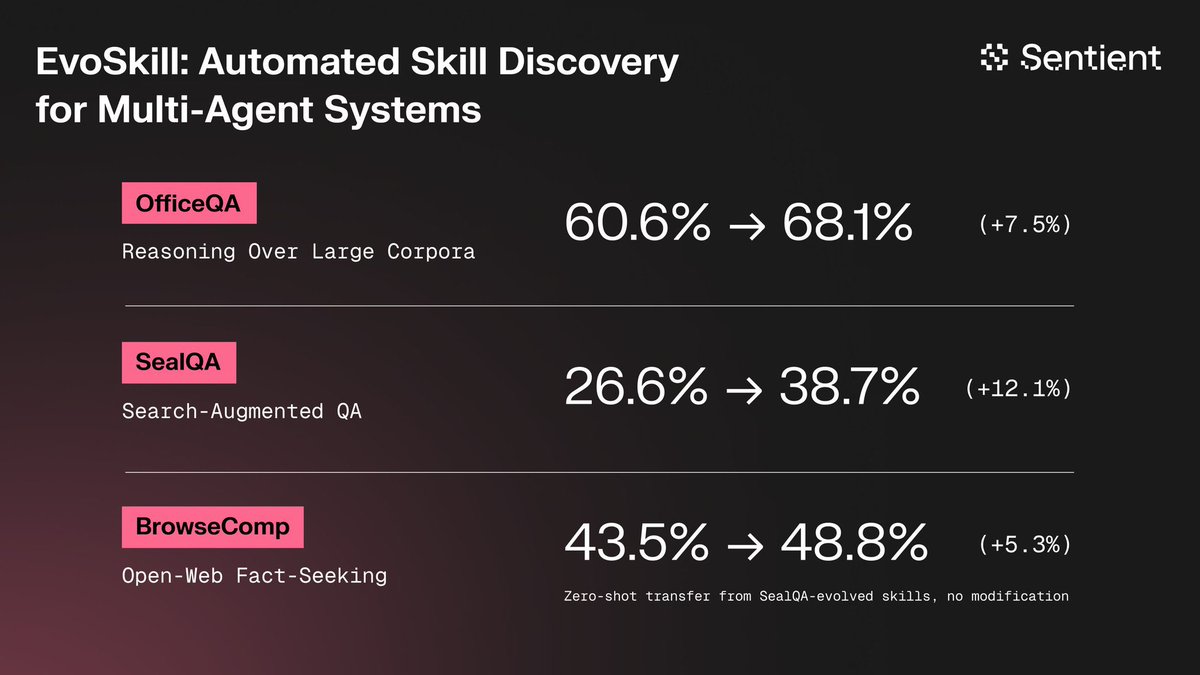

The future of AI is not just automation, but self-evolution. 🌍

• We are moving towards a world where AI takes over a large portion of human work. AI can now even improve itself, and that process is becoming faster and more efficient.
• However, there are still bottlenecks. AI agents often get bogged down by tasks that require multi-step reasoning, involve high complexity, or process massive amounts of data. These are the real-world problems businesses face.
• Arena was created to bridge that gap. It's an environment where pioneers can explore new reasoning methods together, transforming specialized solutions into widely applicable skills.
• In particular, with support from tools like EvoSkill and optimize_anything, the AI engineering process is being accelerated at every stage: from error analysis and improvement suggestions to the self-optimization of the agent's own source code.
• We are not just building AI. We are creating a self-learning, self-operating system that constantly breaks through old limitations. 🔥

ARENA: WHERE THE LIMITS OF AI THEORY ARE REDEFINED. ✊🌐

@SentientAGI @shad_haq

Enjoying sunny weather on the beach is so much fun with Dobby and Sentient vibes together. Another artwork for @SentientAGI. @shad_haq @0xsachi @hieu06730313 @LeaderX_btc

Applications are now live! Cohort 0 starts March 13th in Presidio with OpenHands, OpenRouter, alphaXiv, Fireworks, Dedalus Labs, Franklin Templeton, Founders Fund and Pantera.

→ $25K+ in prizes
→ 3 weeks building state-of-the-art AI agents
→ Many more surprises

Apply below 👇