Thomas Chong

@cch_thomas

AI Research Engineer @Beever_AI | @GoogleDevExpert in Cloud AI | HKUST | 🇨🇦

Code2Video: A Code-centric Paradigm for Educational Video Generation

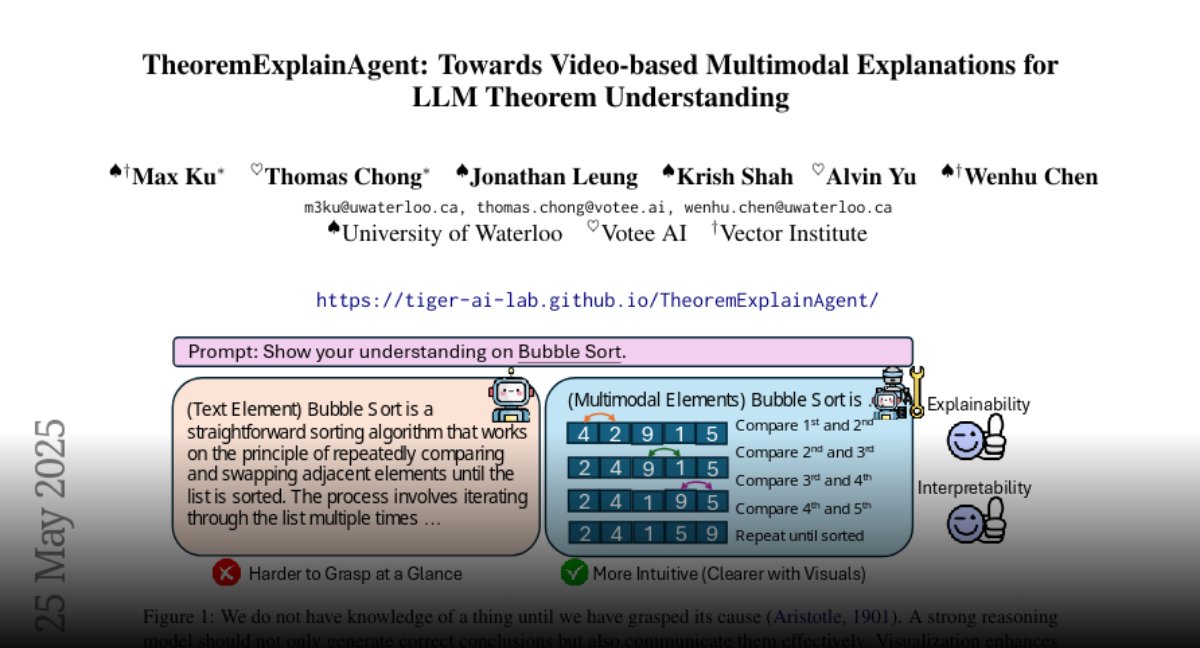

🚀 We just released the code for #TheoremExplainAgent! 🧮🎬 Our agentic LLM approach generates long-form (>5 min) theorem explanation videos using Manim. While it is highly successful, layout issues remain. We also introduce TheoremExplainBench for systematic evaluation. 👇 Details (1/n)

Happy to announce our paper "Inference Time Alignment with Reward-Guided Tree Search" has been accepted to #NAACL2025 main! 🥳🥳 By framing alignment as a reward-guided tree search problem, we introduce a novel inference-time alignment algorithm that employs an off-the-shelf reward model with evolutionary strategies to align LLMs!