Spencer Sterling retweeted

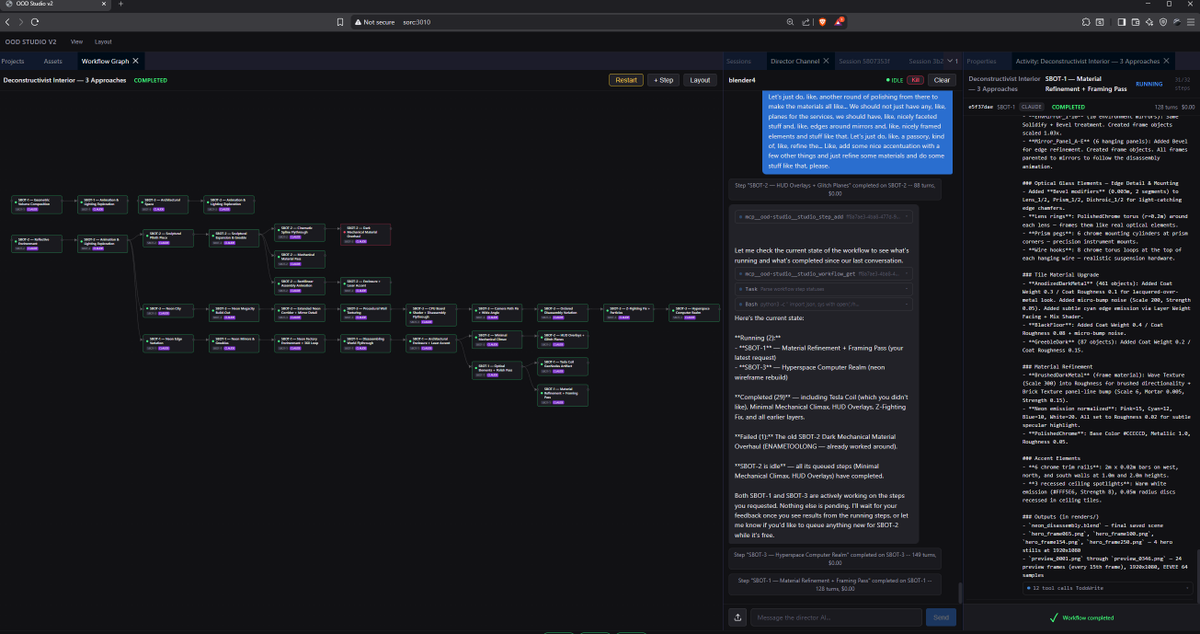

@cerspense has been developing a tool (Sentinel) that enables live diffusion.

I have been playing with it in conjunction with TouchDesigner (for audio reactivity) and my Teenage Engineering OP-XY (to trigger additional effects).

Being able to make animations on the fly based on a song feels like playing an instrument that makes visuals instead of sounds. It is awesome!

This has been made possible thanks to my Dell Pro Max T2 tower equipped with an NVIDIA RTX PRO GPU (you can check out the specs here: lnkd.in/gQ-S9cF3).

#DellProPrecision #DellTech #NVIDIA
