Charlie Deist

@chdeist

Retired sailboat captain building a mini media empire from a northern California homestead. 20 acres and a cow. Father of 4. Finish your work and go outside.

This dock turns a Mac mini into a classic Macintosh. wow

Company Brain @t_blom Every company has critical know-how scattered across people's heads, old Slack threads, support tickets, and databases, and AI agents can't operate like that. We think every company in the world is going to need a new primitive: a living map of how the company works that turns its own artifacts into an executable skills file for AI.

Remember the Lindy rule. Humans only talk to things that are alive:

1) Other humans
2) Themselves
3) Animals
4) Things embodied with spiritual significance (gravestones, holy places, prayer)

We could all just sit and talk to our computers now. We have the technology. But we don't. It feels weird. We sit and type in silence. Many people have tried so far to create a talking device. All have failed.

Piers Morgan asked Russell Brand which passages were relevant to him when he brought a Bible into court.

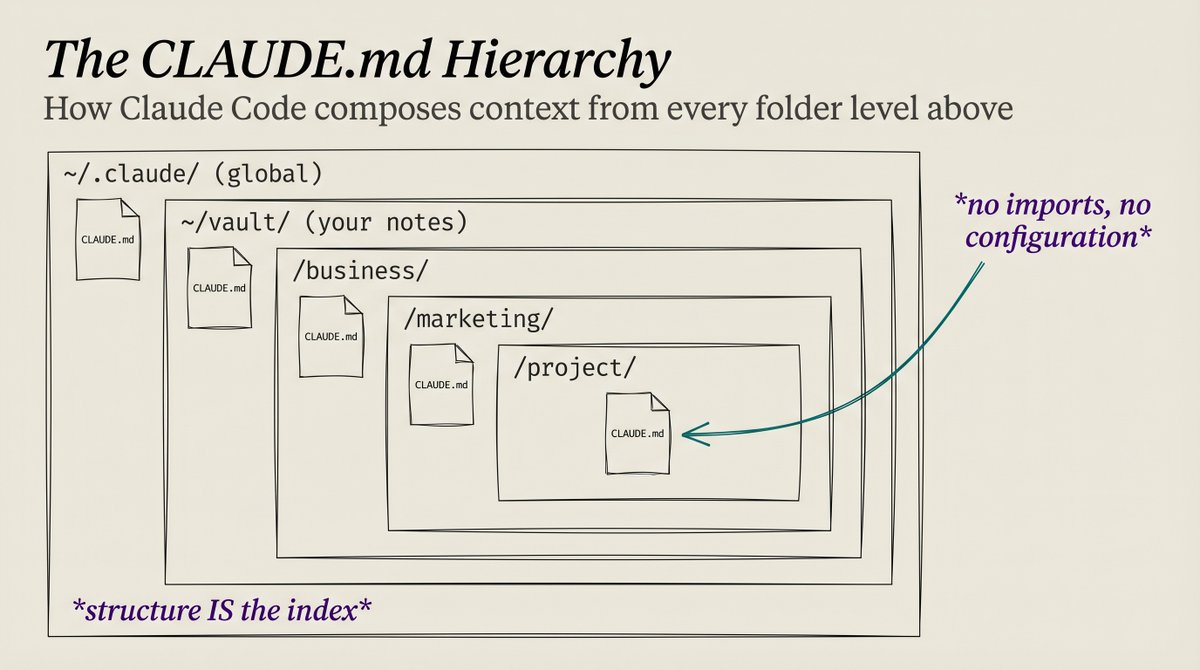

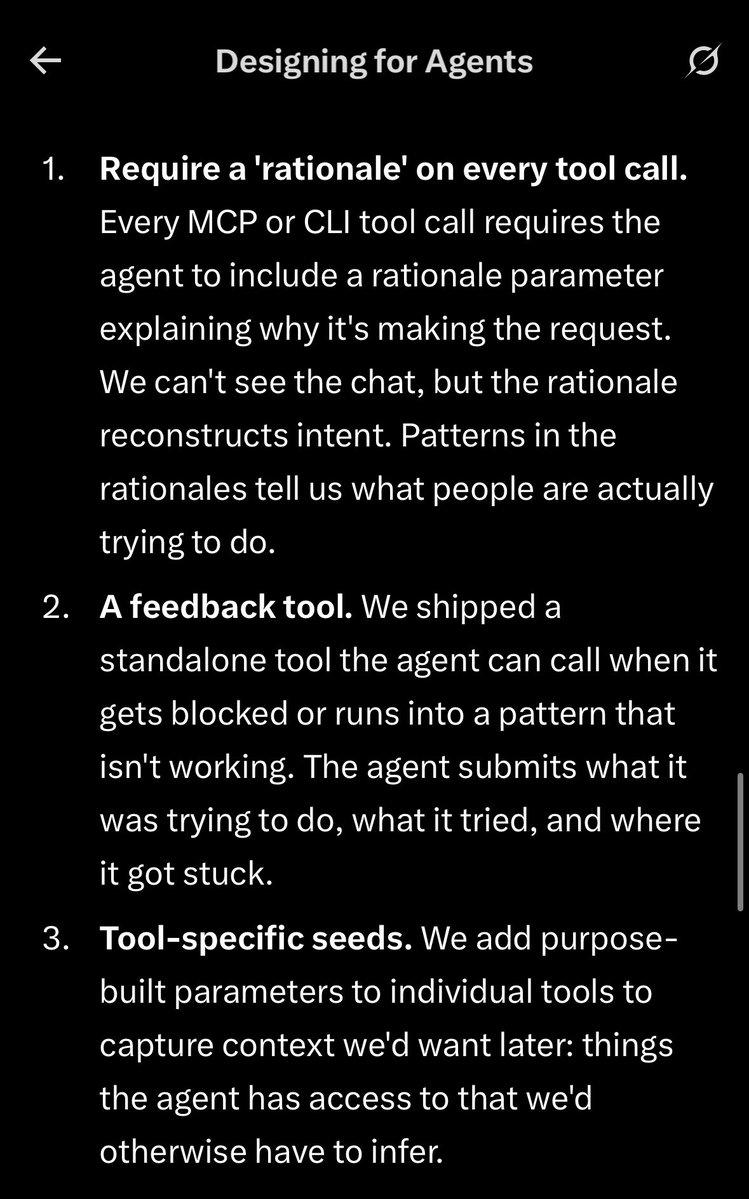

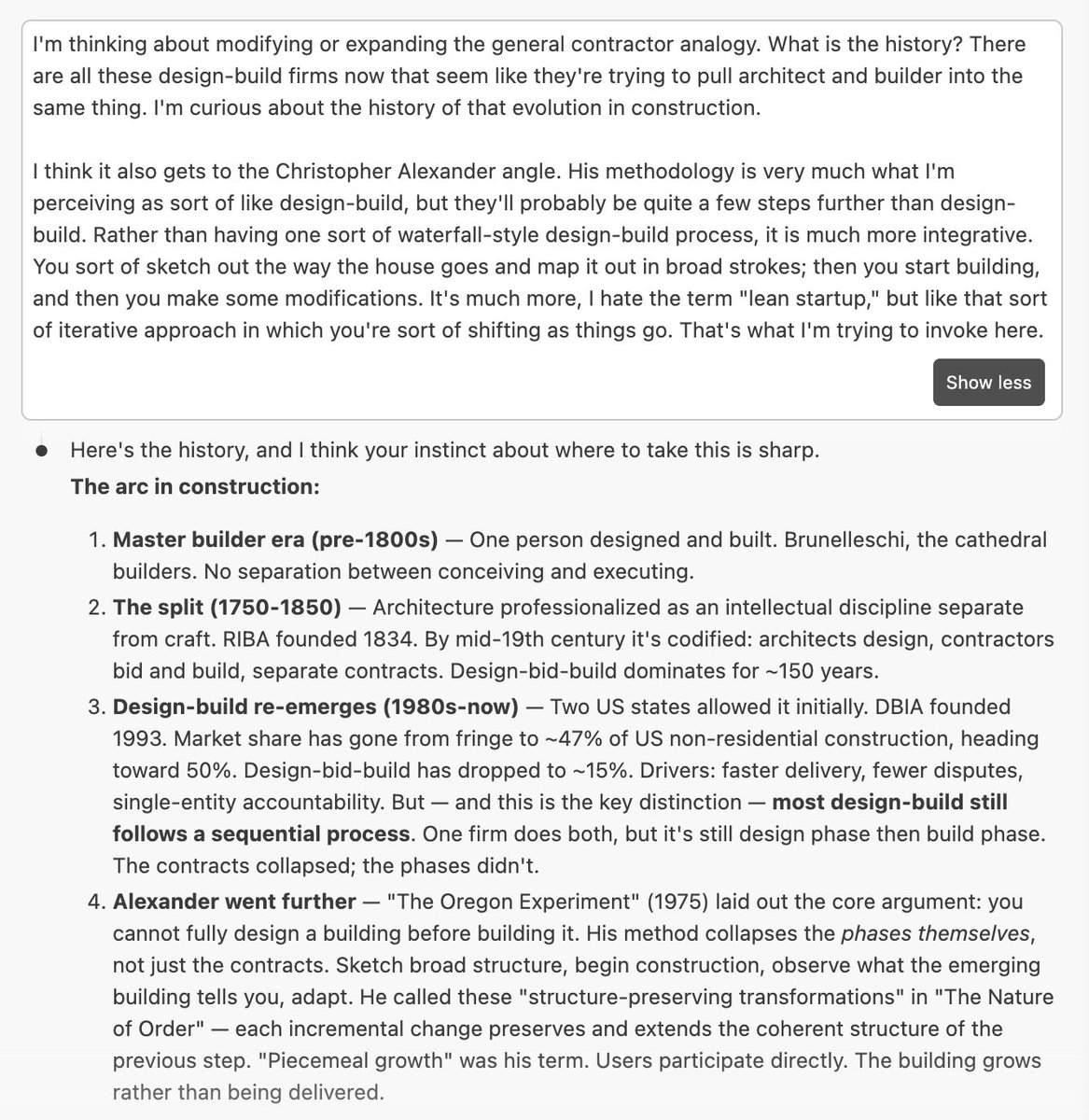

My current favorite metaphor I've found for working with AI is the general contractor. Architect doesn't quite fit because it's too hands off. (This is maybe not the case for software where the models seem better.)

A GC is on site. They know the dependency graph: the electrician can't go before the framing, the drywall can't go before the electrical inspection. They know which sub (agent/skill) to call for which job, and whether the work is good when it's done (QA/QC). That's what working with AI looks like for me right now.

You just seem to get bad results from "Claude, rebuild my CRM, make no mistakes." That's like hiring a framing crew and telling them to build you a house.

The failure modes for a GC are instructive. A bad GC fails in one of two directions.

1. Too hands-off: delegate everything, don't inspect, the plumbing fails behind the drywall.
2. Too hands-on: micromanage every nail and the project takes three times as long.

Both failure modes show up constantly in how people use AI. Some people fire off a massive prompt and accept whatever comes back. Others approve every line and never let the system do what it's good at.

I think it's also useful because what makes a good GC defensible is local knowledge. They know the subs, the building codes, the soil conditions, the inspector's quirks. And it doesn't transfer easily. Twenty years on commercial projects in Southern California doesn't translate to residential in Minnesota that well from what I can tell: different climates, codes, subcontractors, client expectations. Broad skills carry over, but the deep local knowledge doesn't.

Same with AI. Everyone gets the same models. The differentiator is the scaffolding you build around them: your files, your style guides, your workflow rules, your accumulated context. Two people with the same model can produce wildly different results depending on the 'local knowledge' they've encoded.

And like a GC who (should) get better with each project, the scaffolding should compound. Each skill you build and piece of context you add should make every future session more capable.