cheaty

@cheatyyyy

23, sde @ stealth startup, performative nerd

people are underestimating existing llms' svg capabilities by a lot. with a little bit of care and refinement, llms excel at creating much more sophisticated svgs and animations. all of the interactions / animations below are bespoke svg components i've created from scratch. all of them were created with @claudeai opus 4.6, the best model ever.

Today we introduce Physera, a research and product lab rethinking applied intelligence. We are working at the intersection of model efficiency and behavioural simulations while building environments that are multimodal. We are a team of applied researchers and engineers who believe that the important problems in AI today are not about capability but making that capability reliably useful across multimodality. We are heads down building systems that perceive, reason, and decide as humans do, under the constraints humans face. To learn more or collaborate: physera.ai/?v

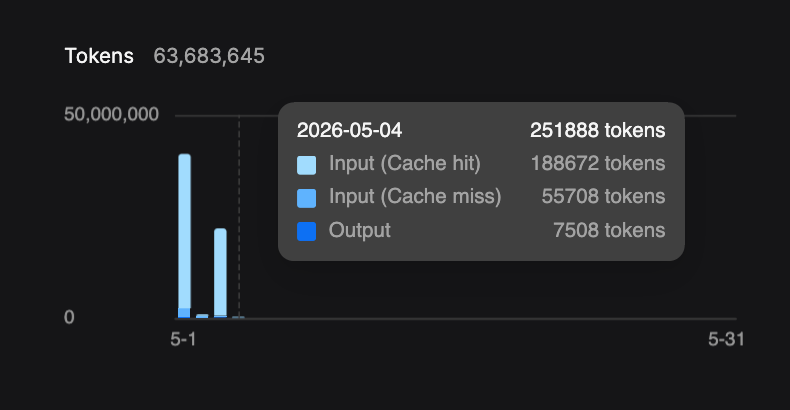

honestly i didn't expect to feel any different with this release compared to other frontier open models, but man, all my conversations are above 400k tokens now? you know that "locked in" zone you feel right before claude/codex compacts? it's that but forever lol. also look at cache write vs read cost, jesus christ, this model makes me religious

@SynthPotato I know you’re joking but the game genuinely exists

Today, we’re taking a step toward truly galactic-scale capabilities. 🚀 We’re partnering with @SarvamAI to bring sovereign AI into orbit aboard India’s first orbital data centre satellite, a pathfinder mission bringing datacenter-class GPUs and high-performance remote sensing together in space. Built and operated by Pixxel, with Sarvam providing the AI backbone, the demonstrator marks a step toward making orbital data centres real, operational, and scalable from India. May the 4th be with us all! ✨

What are we obviously not getting right with Codex?

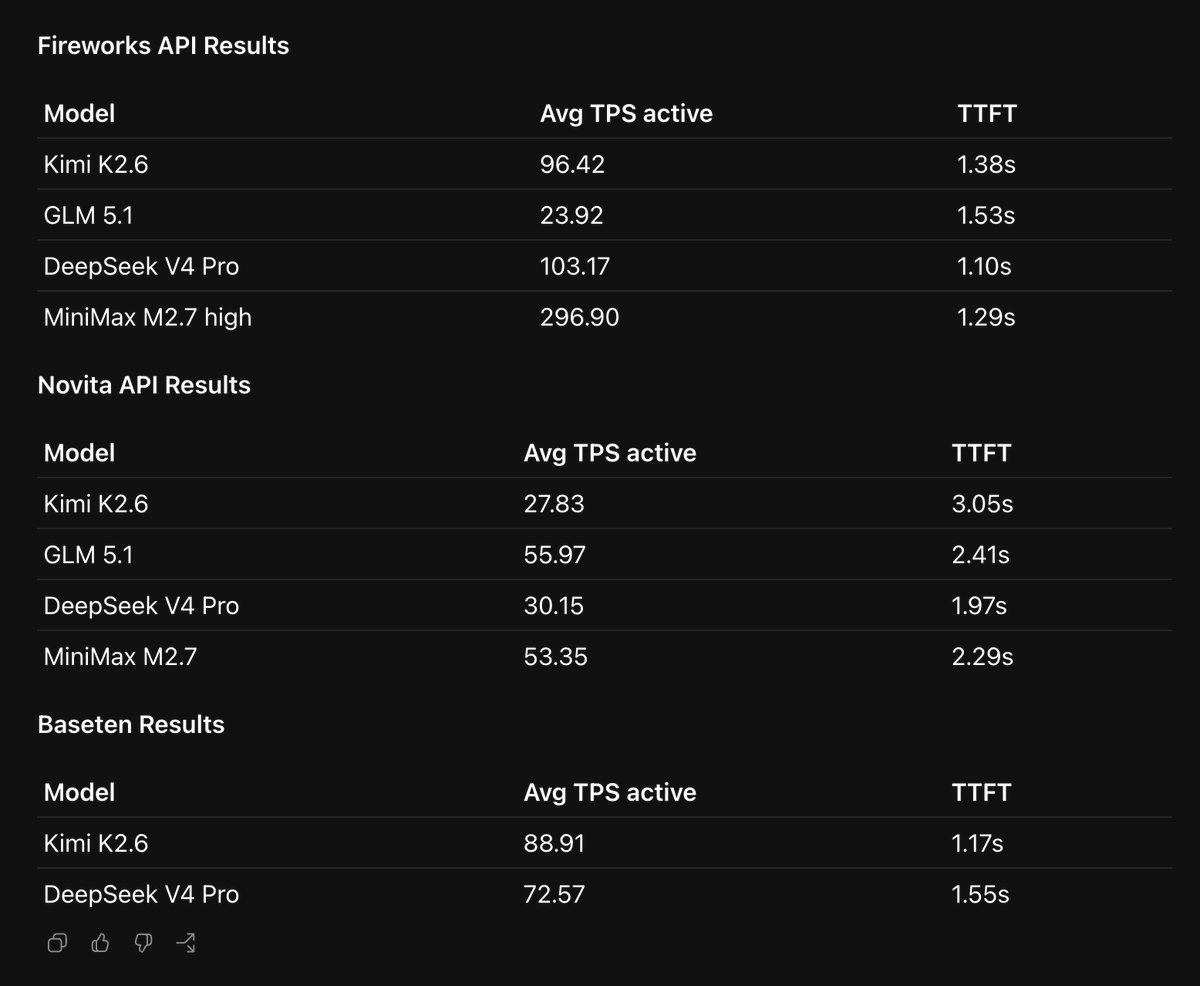

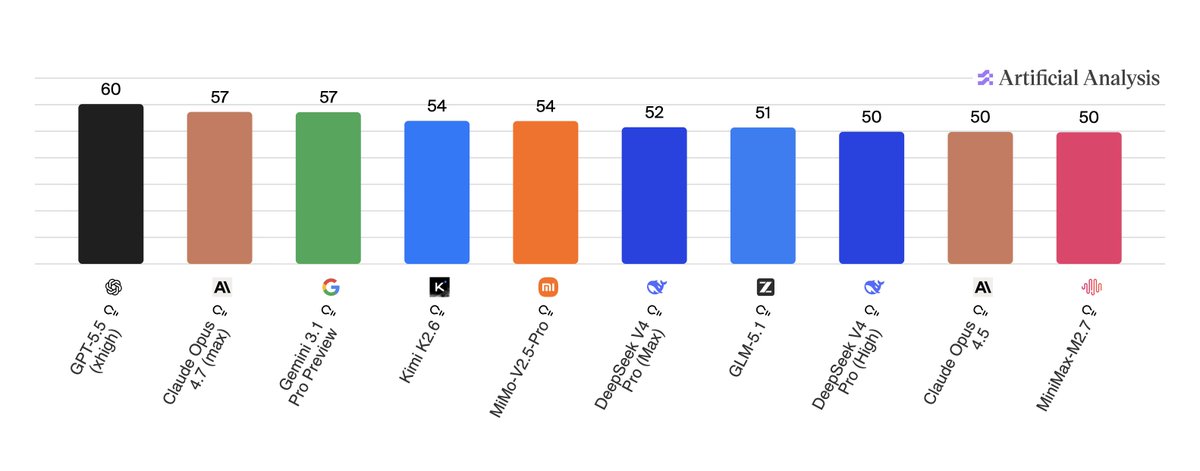

@scaling01 the token usage being higher is totally immaterial. open models can run 10-100x faster

the genius of DeepSeek going so hard on cache economics – on-disk, 96% hits, $0.0028-0.0035/M, days of storage – is also that the frontier doesn't really have technical solutions to mog him. A GB is a GB, an SSD is an SSD. China can make SSDs, even if it can't make Blackwells.

+35% speed gain for Qwen3.6-27B (FP16) on vLLM! pointed claude to my RTX 6000 Pro + vLLM setup and asked it to find the optimal MTP.