1/ today we're releasing muse spark, the first model from MSL. nine months ago we rebuilt our ai stack from scratch. new infrastructure, new architecture, new data pipelines. muse spark is the result of that work, and now it powers meta ai. 🧵

Chen Zhu

@chenzhucs

@Meta. Past: xAI, Google Brain/DeepMind, Nvidia, UMD

Introducing Grok Code Fast 1, a speedy and economical reasoning model that excels at agentic coding. Now available for free on GitHub Copilot, Cursor, Cline, Kilo Code, Roo Code, opencode, and Windsurf. x.ai/news/grok-code…

Join @xAI and help build a purely AI software company called Macrohard. It’s a tongue-in-cheek name, but the project is very real! In principle, given that software companies like Microsoft do not themselves manufacture any physical hardware, it should be possible to simulate them entirely with AI.

Can they just release Grok 4 so my boyfriend can come home?

Thanks to @LichangChen2, we have also added some more advanced reward modeling techniques, such as ODIN and RRM, to our reward modeling repo.

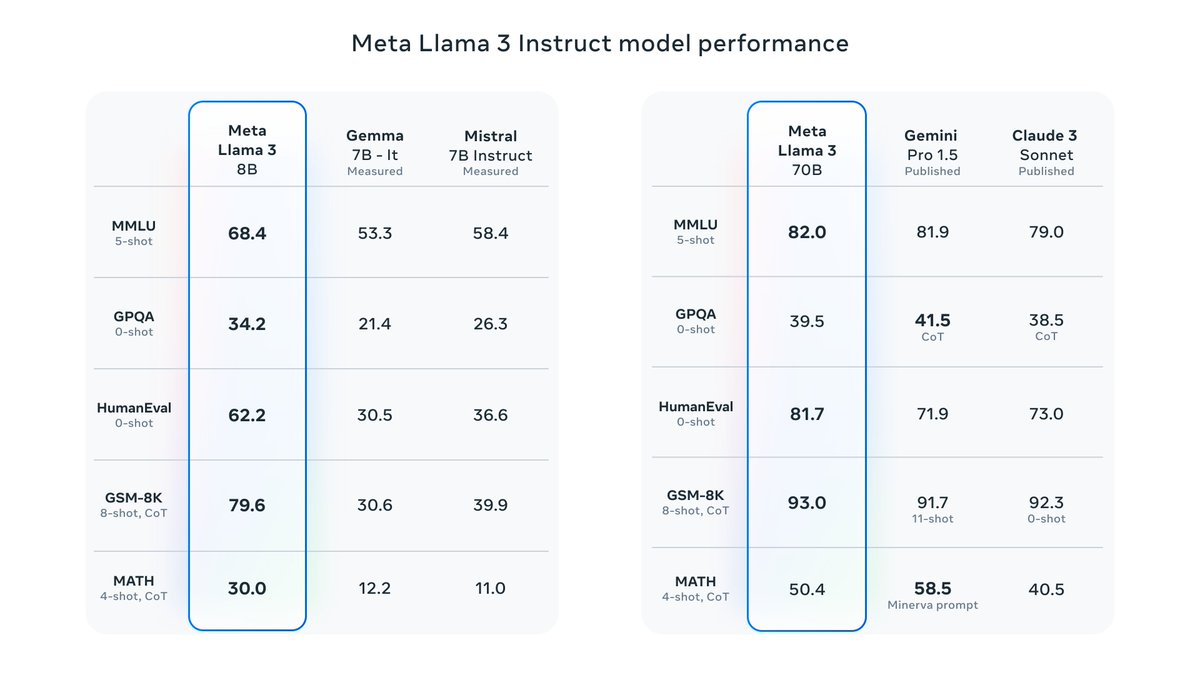

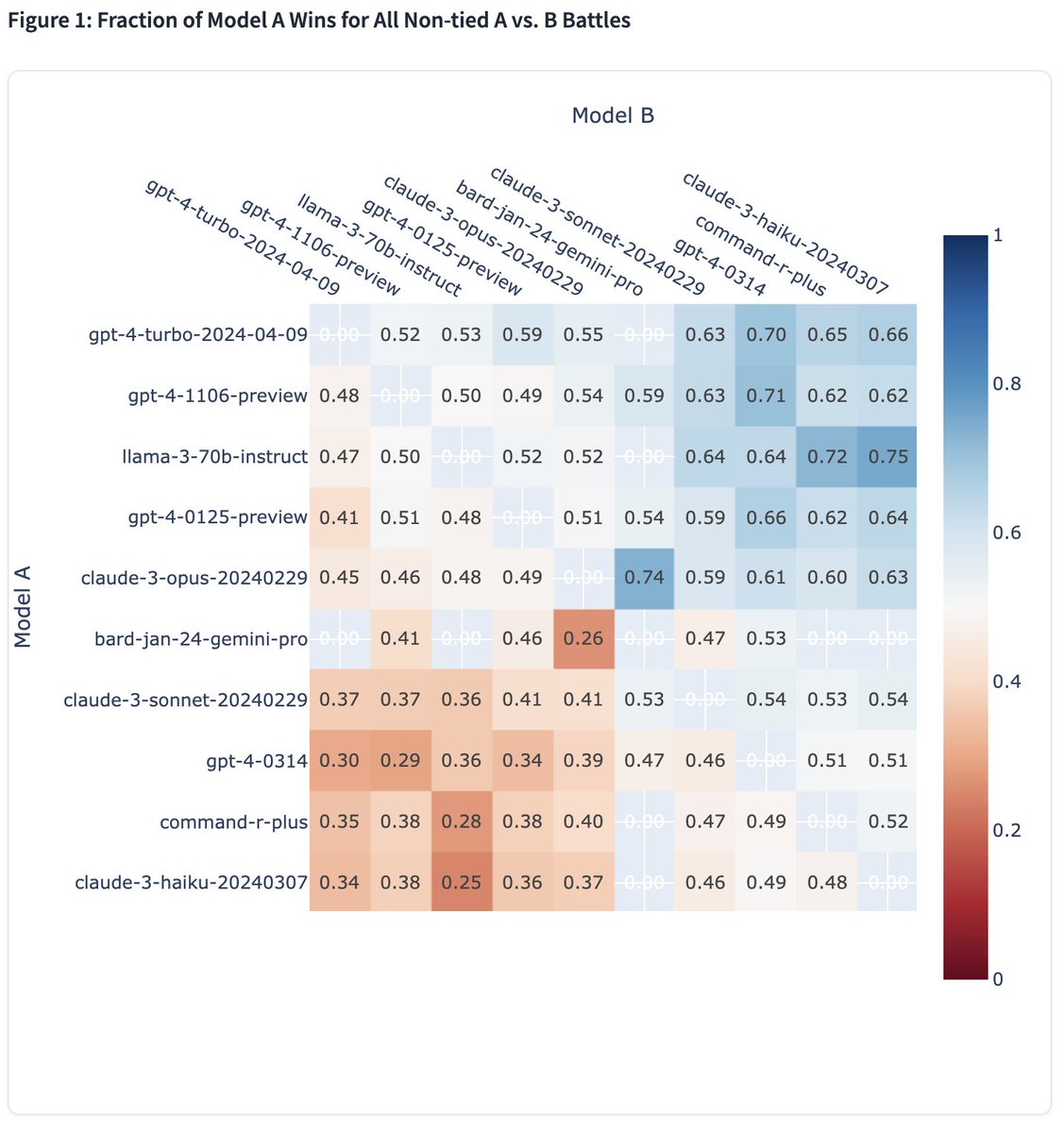

It’s here! Meet Llama 3, our latest generation of models, setting a new standard for state-of-the-art performance and efficiency among openly available LLMs.

Key highlights:
• 8B and 70B parameter openly available pre-trained and fine-tuned models.
• Trained on more than 15T tokens, a dataset 7x+ larger than Llama 2's!
• Improved tokenizer with a 128K-token vocabulary for better performance.
• State-of-the-art performance across industry benchmarks.
• New capabilities, including enhanced reasoning and coding.
• 3x more efficient training than Llama 2.
• New trust and safety tools: Llama Guard 2, Code Shield, and CyberSec Eval 2.
• Integrated into Meta AI and available in more countries across our apps.
• And this is just the beginning, with more models and new capabilities coming soon!

Visit the Llama 3 website to read more and download the models: llama.meta.com/llama3