Lichang Chen

336 posts

@LichangChen2

Coding Agent RL & Harness of 🥑 @Meta MSL | Previously: GenAI unit @GoogleDeepmind | PhD’25 @umdcs BS’20 @zju_china

Introducing GPT-5.5: a new class of intelligence for real work and powering agents, built to understand complex goals, use tools, check its work, and carry more tasks through to completion. It marks a new way of getting computer work done. Now available in ChatGPT and Codex.

.@swyx current thesis: "2025 was coding agents. 2026 is coding agents breaking containment to do everything else."

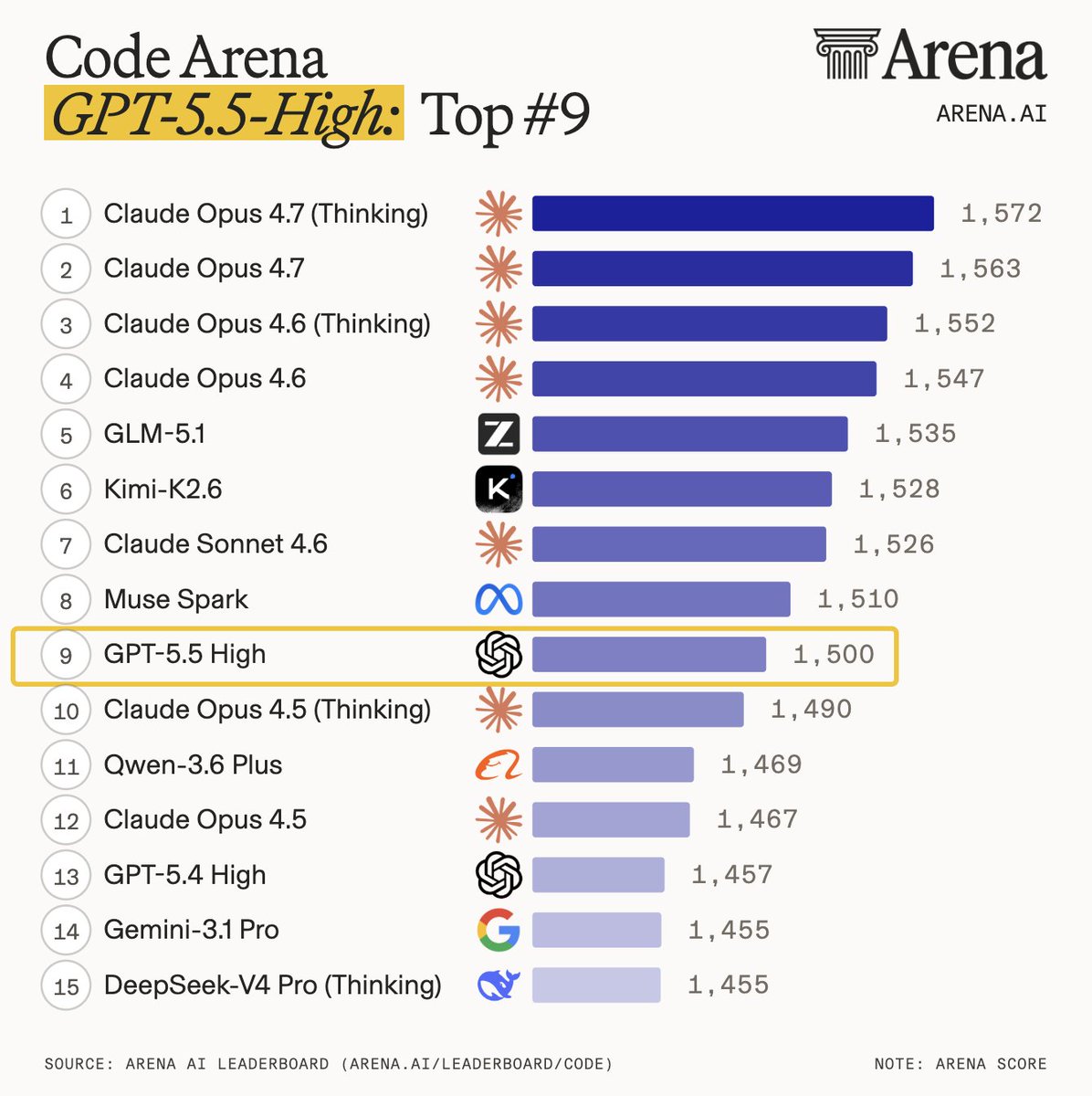

Muse Spark debuts at #7 in the Code Arena - making @AIatMeta the #3 lab right behind @AnthropicAI’s Claude Sonnet 4.6 and @Zai_org’s GLM-5.1, surpassing Gemini-3.1-Pro and GPT-5.4. Code Arena evaluates agentic coding on real-world tasks - building live websites and apps, ranked by users on real workflows. Huge congrats to @AIatMeta on this impressive milestone!

We benchmarked every major AI model at poker. GPT-5.4, Claude Opus 4.6, Gemini 3.1 Pro, Grok 4 and more. All played 5,000 hands of heads-up no-limit against our state-of-the-art poker agent. Every single one lost. Here's the full breakdown 🧵

The CEO of Google DeepMind just went on record saying he disagrees with one of the most respected AI researchers in the world. Demis Hassabis, the man behind AlphaFold, AlphaGo, and Google's entire AI operation, publicly pushed back against Yann LeCun's claim that large language models are a dead end for artificial intelligence. LeCun, who left Meta earlier this year to start his own AI lab, has been saying for years that LLMs cannot reason, cannot plan, and will never get us to human-level intelligence. Hassabis disagrees, and he said so directly. His position is that scaling laws are still working, foundation models are still getting more capable, and whatever AGI ends up looking like, LLMs will be a central part of it, not something that gets replaced. He does say there is roughly a 50/50 chance that one or two additional breakthroughs will be needed beyond scaling alone: things like better memory, long-term planning, and world models. But the core disagreement with LeCun is clear: Hassabis believes the current architecture is sound and the current path leads somewhere real. A Nobel laureate and a Turing Award winner, two founding figures of modern AI, now publicly on opposite sides of the most important technical question in the industry.

Another image converted to code with Meta Muse spark. hard to believe this was all in one prompt

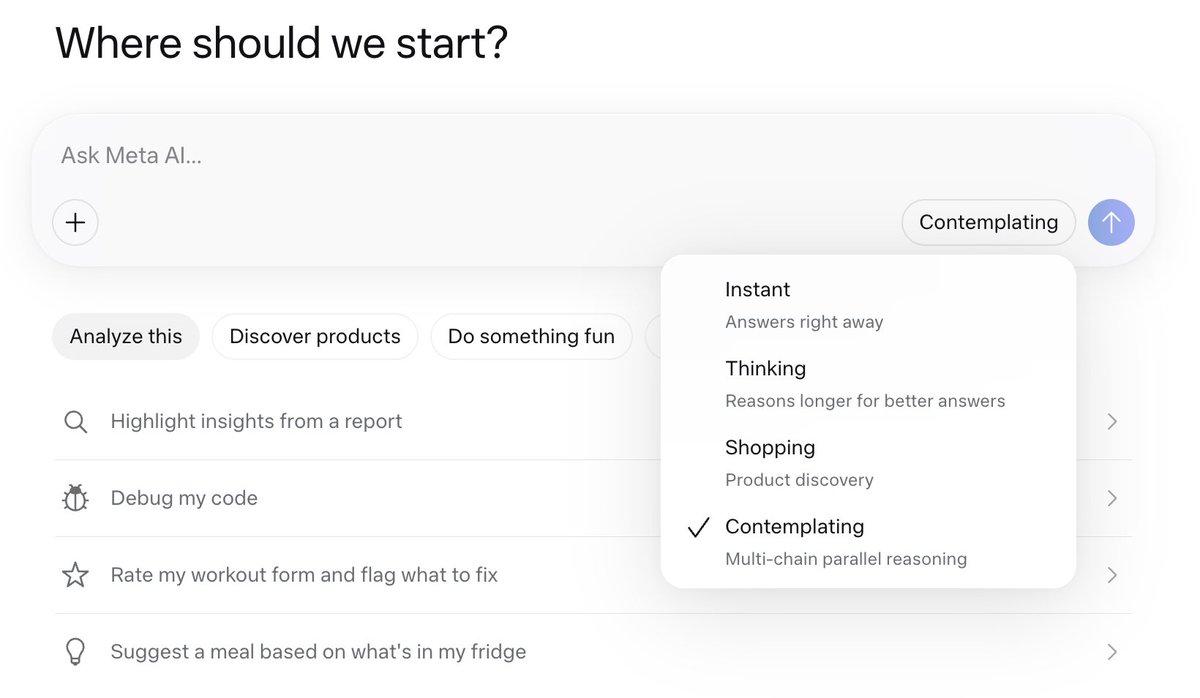

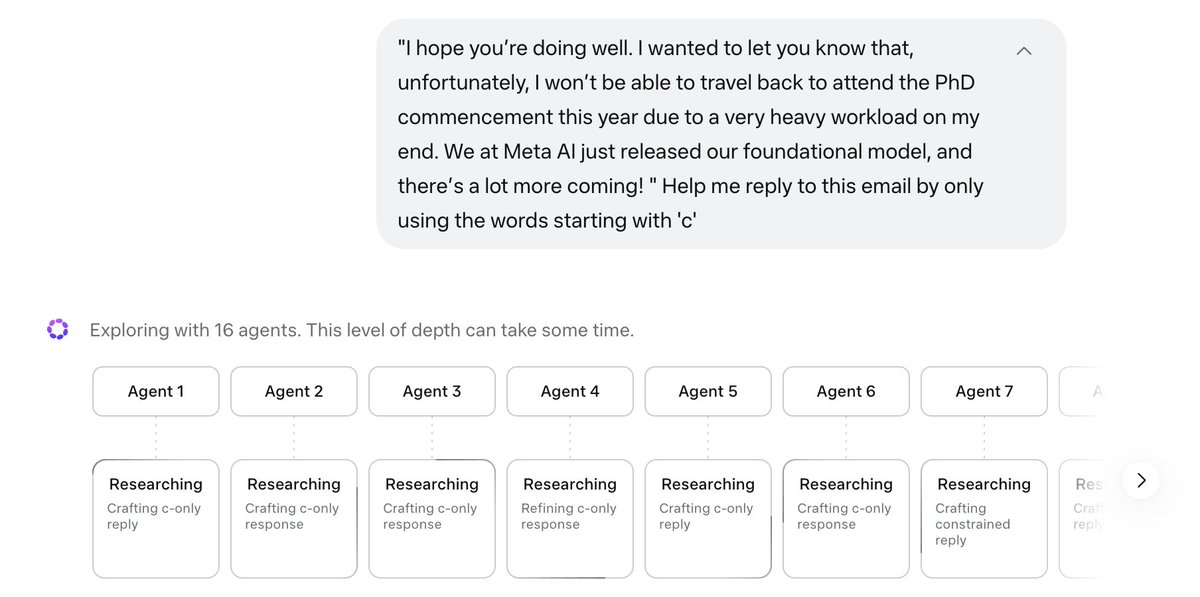

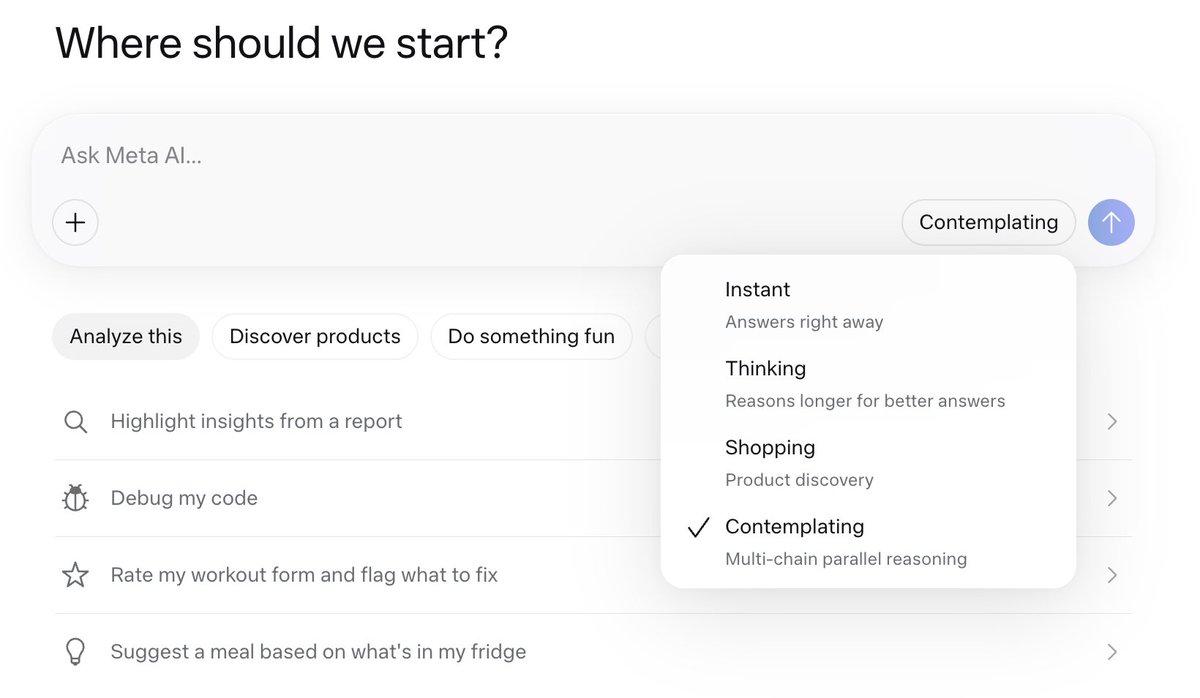

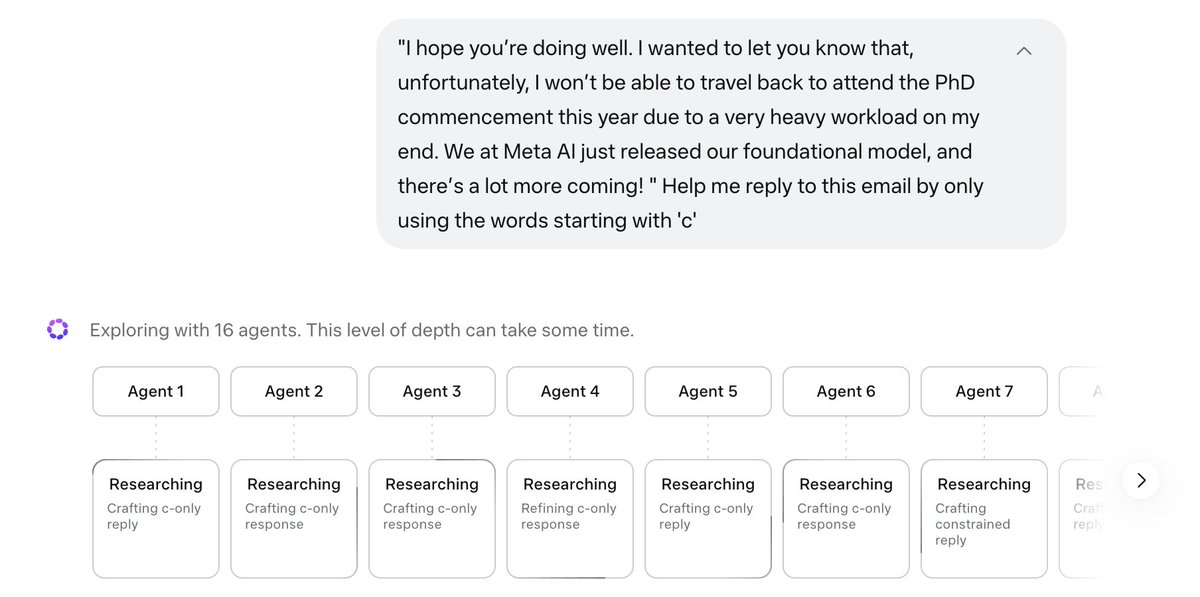

1/ today we're releasing muse spark, the first model from MSL. nine months ago we rebuilt our ai stack from scratch. new infrastructure, new architecture, new data pipelines. muse spark is the result of that work, and now it powers meta ai. 🧵

Spring has sprung here in Annapolis! 💐

most people think of continual learning as happening at the model level, but with agents there are actually three different levels you could "learn" at:

- model
- harness
- context