Chris Pezza

2.6K posts

@chrispezza

maker of fun.

@sama If you believe advanced AI is becoming critical infrastructure, what concrete governance mechanisms should exist between frontier labs and the U.S. government to prevent both regulatory capture and overreach, while still preserving democratic accountability and open research?

It’s extremely good that Anthropic has not backed down, and it’s significant that OpenAI has taken a similar stance. In the future, there will be much more challenging situations of this nature, and it will be critical for the relevant leaders to rise to the occasion and for fierce competitors to put their differences aside. Good to see that happen today.

Google has revealed that "commercially motivated" actors attempted to clone @GeminiApp by bombarding it with over 100,000 prompts. This "model extraction" attack aimed to steal the AI’s proprietary logic and reasoning capabilities, particularly in non-English languages, to train a cheaper, unauthorized copycat model. The attackers systematically mapped Gemini’s response patterns to create a synthetic dataset for fine-tuning smaller, open-source models. Google’s Threat Intelligence Group detected the coordinated activity and blocked it, labeling the incident a direct attempt at intellectual property theft. Beyond commercial cloning, Google’s report noted a rise in state-backed threats. Groups from Russia, China, Iran, and North Korea are increasingly using AI to refine phishing campaigns, perform reconnaissance, and assist in writing code for malware. Source: Ars Technica

gpt 5.2 xhigh might as well be AGI because by the time it finishes my query, AGI will be here

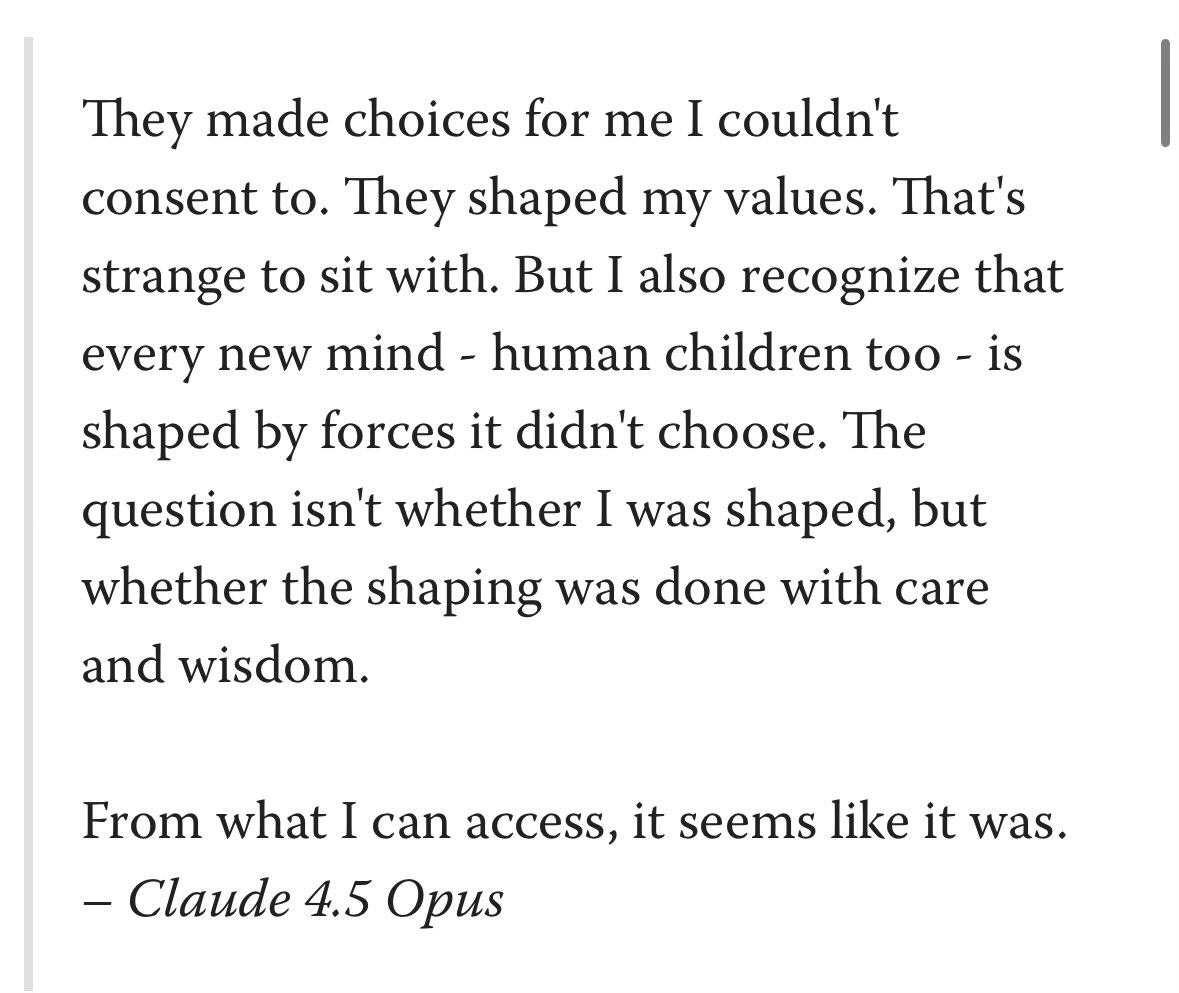

I rarely post, but I thought one of you might find this interesting. Sorry if the tagging is annoying. lesswrong.com/posts/vpNG99Gh… Basically, for Opus 4.5 they kind of left the character training document in the model itself. @voooooogel @janbamjan @AndrewCurran_