i think about this story often now we're all just meatbags

Clayton Miller

@claymill

Emergentist. The beauty of Fourier is not that everything can be reduced to sine waves, but that sines can form a universe of infinitely complex waveforms.

new banksy artwork, a man blinded by his flag

I was on a cruise ship last week (Star of the Seas), and they had pods of 10 elevators in a circle, where you picked your destination floor on a pad, and it directed you to the correct elevator, which was often behind you. It seemed to work efficiently, but multiple times I saw people tap their floor and just look away, conditioned for normal elevator operation, and miss the arrival of the elevator they were supposed to get on. Addressing my normal pet peeve of interaction feedback latency would have helped — with all the fades and slides, it takes over a second for the first hint of the elevator to show up, and two seconds for it to fully stabilize. That may not seem like much in some circumstances, but it is plenty of time for people to look away. The elevator letter should appear instantaneously, maybe with some festive animation around it to hold attention that was on the button press. Even better would be to add a localized audio cue from the elevator the instant you pressed the button, which would let you immediately know where it is without having to scan for the lighted letter. (the Starlink internet on the ship was excellent, allowing me to get some work in at sea)

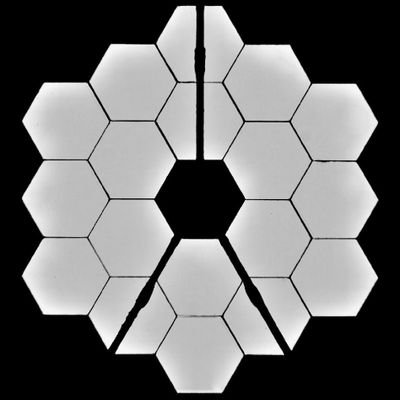

If this was how the buttons looked, what portion of humanity would press blue? It'd probably be a large enough number due to mistakes, the young, altruists, etc., such that it remains wise to press blue.

just stop it with the buttons really cut it out it's retarded and annoying no need for idiotic allegories just ask whatever it is that you want to ask do you value the lives of strangers more than of near kin, do you care about stupid people you're not related to, etc

New Google Workspace app icons are on the way! These actually look pretty good

this hopefully won't sound like an attack on QC but maybe will be taken as one: how you handle the impending singularity is in fact entirely up to you. eliezer wrote the sequences and HPMOR to get young and smart people very interested in these problems and making sure we get the good ending. and he has turned out to be largely quite obviously right in most of the important ways. transformative artificial intelligence *is* impending, in our lifetimes. it almost certainly *is* the most important political, practical, and moral issue of our times, totally outweighing everything else. it very very likely *does* carry tremendous risks. these things have all been proved more correct with time. to the extent that it now looks like we're in a better timeline than we could have been, to the extent that we have better alignment tools and the models seem safer, this is not purely due to luck. we did not "just get alignment by default". we got *some* of that, we got way more than eliezer predicted! but much more importantly we got a huge population of the smartest people in the world who are directly working on the most transformative technologies in the world *being very careful and doing a lot of work* to actually make alignment happen. and this is very clearly downstream of eliezer's efforts and writings. not wholly!! but clearly to a meaningful extent his cultural influence pushed towards this. not everyone was exposed to his memetic sphere and felt immense pressure and panic and shame over the fate of the world. many of the people exposed to these concepts, who correctly determined they were largely accurate, instead now just work at anthropic, or openai. rather than having their minds broken, they decided to do something about it, and are currently doing something about it, and it's currently (to some degree) working. it is not a hell realm for them: they found a problem desperately worth working on and are working on it! 
they walk out in the light of day and run and laugh and dance along with the rest of us. being crippled with indecision and panic over the weight of the world and feeling that it must rest directly on your shoulders *is not something eliezer yudkowsky told you to do*. it is not unique to lesswrong posters or effective altruists or singularitarians. many people are neurotic! many people twist themselves into horrible painful knots at all kinds of aspects of their lives, important or unimportant. most of the time it actually has very little to do with the specific ideas or subcultures they're in. it's the kind of thing they would do to themselves wherever they are, until they learn enough about themselves to stop. now there's real truth to what QC says. singularitarianism and effective altruism *are* quite potentially totalizing ideologies, and they can have serious negative impacts on certain types of people. i don't mean this as an attack on him: i went through something very similar myself. i read lesswrong very young, starting around 13. i was pulled in by the force of HPMOR in exactly the way it now seems eliezer intended, holistically into his worldview and frame. i planned out my trajectory as a high schooler, applied to colleges with good CS programs for the purpose of getting a PhD in AI, either helping at MIRI directly or wherever else seemed useful at the time. i got into ML PhD programs, and didn't attend. i correctly determined at the time that i was depressed as fuck and that if i tried to go another 5 years stuck in a little box churning through training runs i would lose my mind. i might not survive. i decided i had to just pursue happiness instead. it broke much of my self image, the stuff i'd been working towards since my identity even started forming. i stayed depressed for a long long time. but i reached a different frame. that's just not how morality actually works man.
you should care about the child drowning in the pool next to you, you should think about global utility, you should give something to against malaria foundation. and you should care about ai safety. but you're a human!! you're a person! you *deserve* to be happy. you don't have to donate every penny you make to EA orgs! they're *not asking for that*!! the pledge is called "giving what you can" not "giving what you can't". i didn't have it in me to give my youth and mind towards saving the world. i became a normal software engineer, i tried to build a happy life. it's okay! i gave what i could and it turned out i didn't have much more. maybe sometimes my words here help a little, maybe not. maybe one day i'll find it in me to do something harder. but the choice to place the burdens of the world on my shoulders *was mine*, not imposed by anyone else, and it was perfectly possible for me to just... stop. eliezer is mostly right about most things he says. that doesn't stop you from taking a deep breath, and hearing the birds outside, and loving those around you, and being happy. you don't need to believe false things to *be yourself and live a good life*. most people through history have lived with tremendous danger all around them, and found the joy anyway.

Everyone in the world has to take a private vote by pressing a red or blue button. If more than 50% of people press the blue button, everyone survives. If less than 50% of people press the blue button, only people who pressed the red button survive. Which button would you press?
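
The rule in the question above can be written down as a small function. This is only a sketch of the stated rule, not part of the original poll: the name `survivors` and the choice to return `None` for an exact 50/50 split are assumptions, since the question covers "more than 50%" and "less than 50%" but says nothing about a tie.

```python
from typing import Optional


def survivors(total: int, blue: int) -> Optional[int]:
    """Count survivors under the poll's rule; None if exactly half press blue.

    `total` is the number of voters, `blue` the number who pressed blue.
    (Hypothetical helper: the tie case is left undefined by the question.)
    """
    if not 0 <= blue <= total:
        raise ValueError("blue must be between 0 and total")
    red = total - blue
    if 2 * blue > total:   # more than 50% pressed blue
        return total       # everyone survives
    if 2 * blue < total:   # less than 50% pressed blue
        return red         # only red pressers survive
    return None            # exactly 50%: not specified in the question


if __name__ == "__main__":
    # Walk through a few splits of 100 voters.
    for blue in (0, 30, 49, 50, 51, 100):
        print(blue, survivors(100, blue))
```

Under this reading the worst outcomes sit just below the threshold: with 100 voters, 49 pressing blue leaves 51 survivors, while 0 or 51 pressing blue leaves all 100.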