Pinned Tweet

clockworkio

340 posts

@clockworkio

https://t.co/BXAeZpbDtP builds software that optimizes GPU clusters for fault tolerance, deterministic performance and increased utilization.

Palo Alto, California · Joined April 2021

94 Following · 78 Followers

𝟗𝟐% 𝐨𝐟 𝐭𝐡𝐞 𝐜𝐨𝐬𝐭 𝐝𝐢𝐟𝐟𝐞𝐫𝐞𝐧𝐜𝐞 𝐛𝐞𝐭𝐰𝐞𝐞𝐧 𝐆𝐏𝐔 𝐜𝐥𝐨𝐮𝐝 𝐩𝐫𝐨𝐯𝐢𝐝𝐞𝐫𝐬 𝐡𝐚𝐬 𝐧𝐨𝐭𝐡𝐢𝐧𝐠 𝐭𝐨 𝐝𝐨 𝐰𝐢𝐭𝐡 𝐆𝐏𝐔 𝐩𝐫𝐢𝐜𝐢𝐧𝐠.

It’s goodput: how much of your cluster is doing useful work vs recovering from failures.

SemiAnalysis modeled a 5,184-GPU GB300 NVL72 cluster:

• TorchPass: 6% goodput expense

• Checkpointless: 10.53%

• Checkpoint restart: 20.91%

At Llama 3 scale, checkpoint restart can leave ~80% of GPUs idle after repeated failures.

As @SemiAnalysis_ put it, TorchPass is “the closest we've seen to what Frontier Labs are using at this scale.”

Infrastructure efficiency is becoming the real AI scaling advantage.

#AIInfrastructure #GPUClusters #Goodput #FaultTolerance #semianalysislinuxwebinar

Plug your workload into SA's free calculator: j_size, MTBF, $/GPU-hr, b_radius. Swap the FT framework. Watch goodput recompute.

🧮 na2.hubs.ly/H050NN-0 📄

na2.hubs.ly/H050Pnp0 🔗

🔦 na2.hubs.ly/H050Q5N0
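For intuition before opening the calculator, here is a toy back-of-envelope model of goodput expense in Python. It is not SA's methodology: it assumes `mtbf_hours` is the mean time between failures for the whole job, that every failure stalls `b_radius` GPUs for a fixed `hours_lost_per_failure`, and the names `Workload` and `goodput_expense` are hypothetical. All input values below are illustrative, not the figures SemiAnalysis used.

```python
from dataclasses import dataclass


@dataclass
class Workload:
    j_size: int            # GPUs in the job
    mtbf_hours: float      # assumption: job-level mean time between failures, in hours
    usd_per_gpu_hr: float  # $/GPU-hr
    b_radius: int          # assumption: GPUs stalled by a single failure


def goodput_expense(w: Workload, hours_lost_per_failure: float,
                    run_hours: float) -> tuple[float, float]:
    """Toy model (hypothetical, not SA's calculator).

    Returns (fraction of GPU-hours wasted, dollars wasted) over the run.
    """
    expected_failures = run_hours / w.mtbf_hours
    # Each failure idles b_radius GPUs for hours_lost_per_failure hours.
    wasted_gpu_hours = expected_failures * w.b_radius * hours_lost_per_failure
    total_gpu_hours = w.j_size * run_hours
    # Cap at 100%: a job cannot waste more GPU-hours than it consumes.
    fraction = min(wasted_gpu_hours / total_gpu_hours, 1.0)
    return fraction, fraction * total_gpu_hours * w.usd_per_gpu_hr


# Illustrative inputs only: a 30-day run where every failure stalls the whole job.
job = Workload(j_size=5184, mtbf_hours=2.0, usd_per_gpu_hr=3.0, b_radius=5184)

frac, usd = goodput_expense(job, hours_lost_per_failure=0.5, run_hours=720)
print(f"{frac:.1%} wasted, ${usd:,.0f}")  # prints "25.0% wasted, $2,799,360"

# Hypothetical stack that recovers faster: same failures, less time lost per failure.
frac, usd = goodput_expense(job, hours_lost_per_failure=0.05, run_hours=720)
print(f"{frac:.1%} wasted, ${usd:,.0f}")
```

The point of the sketch is the same one the thread makes: with hardware and $/GPU-hr held fixed, the time lost per failure (which the FT stack controls) drives the wasted spend.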

SA released the calculator for free. Load "Large LLM Pretrain," swap the FT framework, watch goodput recompute.

🧮 na2.hubs.ly/H04-T0H0 📄

na2.hubs.ly/H04-Tff0 🔗

na2.hubs.ly/H04-TbK0

@SemiAnalysis_ benchmarked fault-tolerant training frameworks on a 5,184-GPU GB300 pretrain.

Same hardware, same $/GPU-hr, three FT stacks.

Goodput expense swings from 6.14% to 20.91% of TCO based on the stack alone. 🧵

At cluster scale, failures aren’t edge cases—they’re constant.

The real question isn’t if training fails.

It’s which tradeoffs you’re making when it does:

Restart → lose progress

Migration → resume same step

Per-step FT → change semantics

Most teams choose without clear data.

We break down the tradeoffs.

→ na2.hubs.ly/H04NbQH0
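The cost of "Restart → lose progress" can be sketched with Young's classic first-order approximation for the checkpoint interval, τ ≈ √(2·C·M), where C is the time to write a checkpoint and M is the MTBF. This minimal Python sketch assumes failures arrive at a constant rate, each failure costs half an interval of rework plus a fixed restart time, and checkpoints block training. The function names are hypothetical, and this is not how any of the frameworks above are implemented.

```python
import math


def youngs_interval(checkpoint_cost_h: float, mtbf_h: float) -> float:
    """Young's first-order optimal checkpoint interval, sqrt(2*C*M), in hours."""
    return math.sqrt(2 * checkpoint_cost_h * mtbf_h)


def restart_overhead(interval_h: float, checkpoint_cost_h: float,
                     restart_h: float, mtbf_h: float) -> float:
    """Approximate fraction of wall-clock time lost under checkpoint/restart.

    Assumes blocking checkpoints and, on average, half an interval of
    rework plus a fixed restart time per failure (first-order model).
    """
    checkpointing = checkpoint_cost_h / interval_h        # time spent writing checkpoints
    per_failure = (interval_h / 2 + restart_h) / mtbf_h   # rework + restart, per failure
    return checkpointing + per_failure


# Illustrative inputs: 3-minute checkpoints, 15-minute restarts, 2-hour MTBF.
tau = youngs_interval(checkpoint_cost_h=0.05, mtbf_h=2.0)
for interval in (tau / 2, tau, tau * 2):
    print(f"interval {interval * 60:5.1f} min -> "
          f"{restart_overhead(interval, 0.05, 0.25, 2.0):.1%} overhead")
```

Even at the best interval, the restart term (restart time divided by MTBF) stays fixed, so as clusters grow and MTBF shrinks, checkpoint restart's overhead climbs regardless of how well the interval is tuned.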

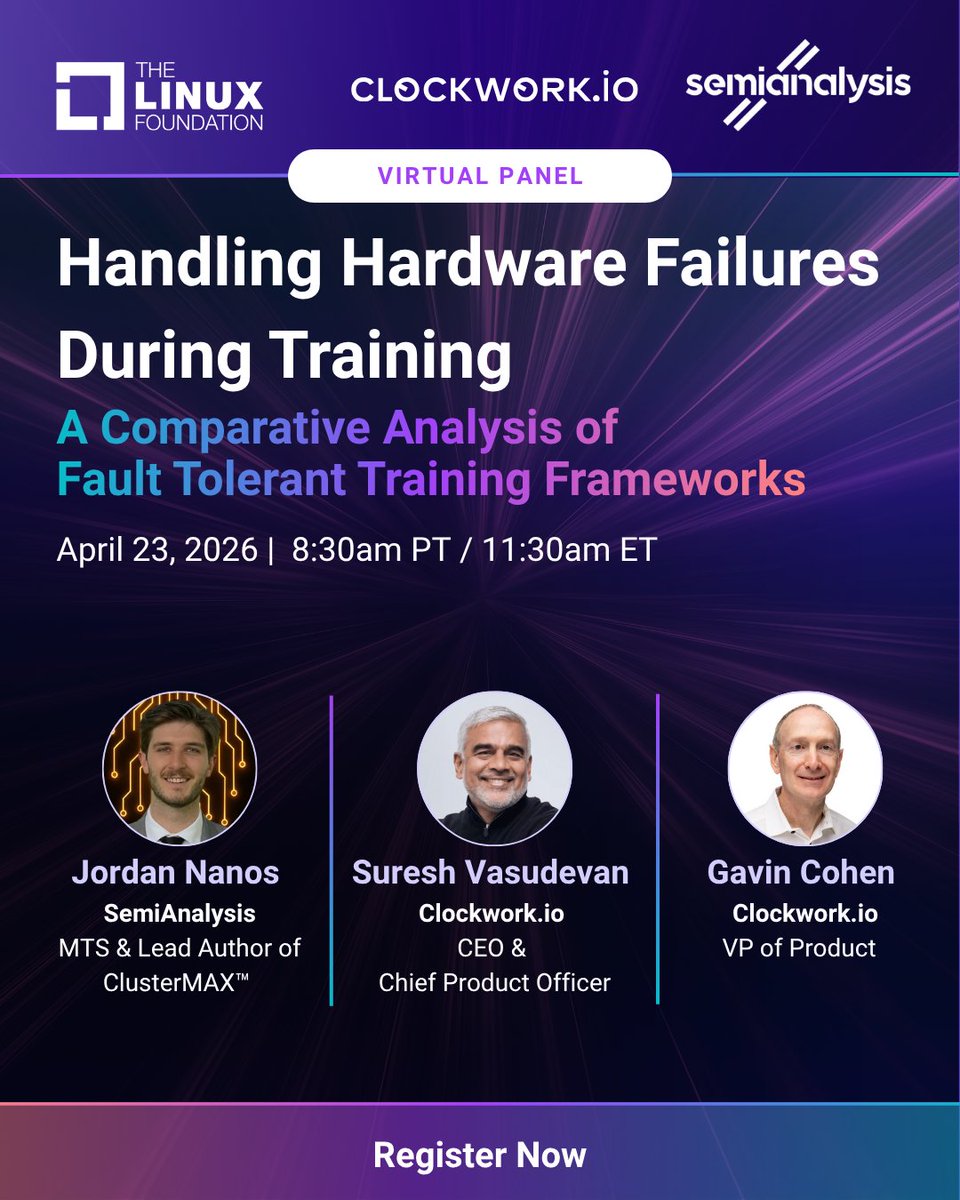

Just wrapped #PyTorchCon in Paris. The conversation everyone's having: infrastructure isn't keeping up with training scale.

Join us on April 23, when @JordanNanos of @SemiAnalysis_ and the @clockworkio team (@SysdigSuresh, CEO, and Gavin Cohen, VP of Product) compare fault-tolerant training strategies head-to-head in a live virtual panel hosted by @linuxfoundation.

Register: na2.hubs.ly/H04NbQH0

#PyTorch #DistributedTraining #MLOps

We'll be at PyTorch Conference Europe in Paris next week 🇫🇷

Come by our booth to chat more.

📖 na2.hubs.ly/H04HDQ00

#PyTorchEurope #pytorcheu #pytorcheu2026 #FaultTolerance #MLOps #DistributedTraining