Jonathan Rudderham

3.6K posts

@codeRunnerUK

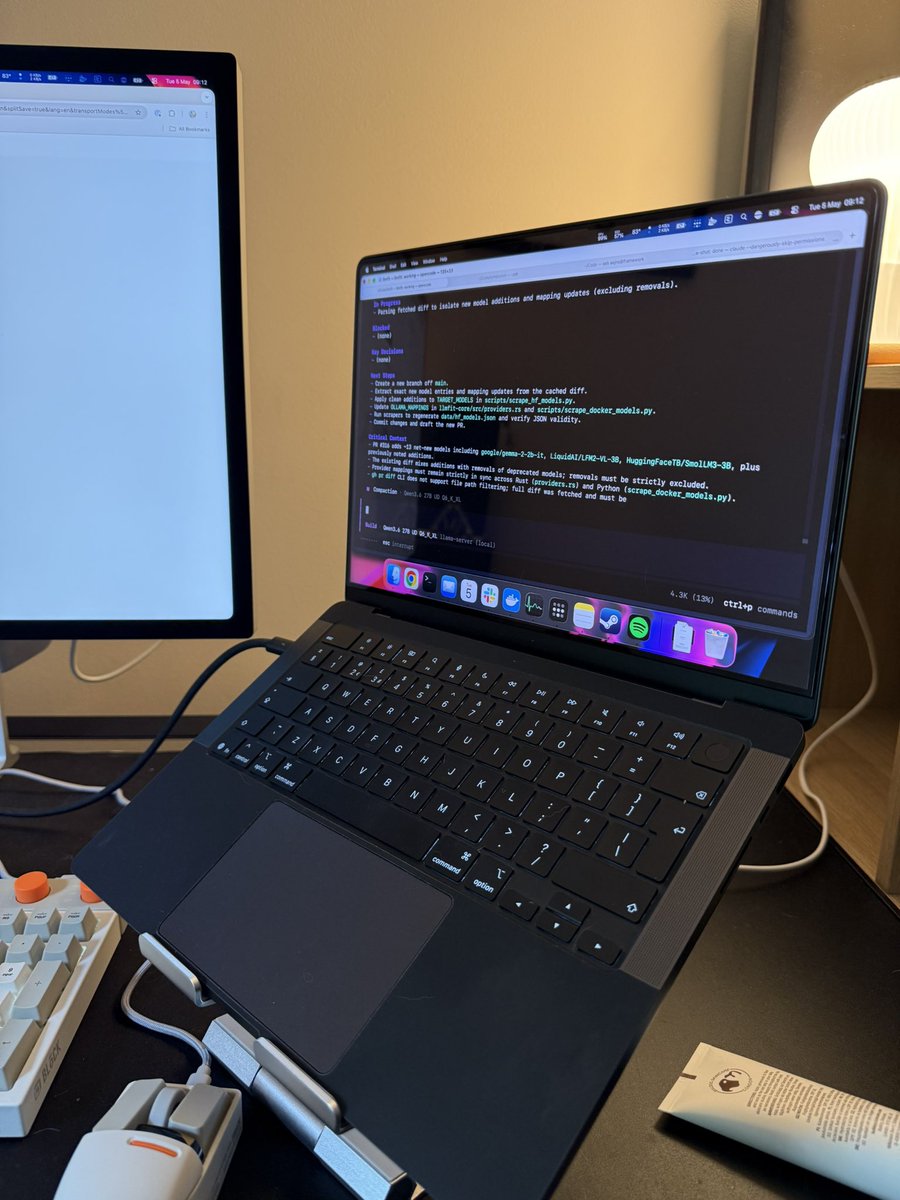

Go local, or go loco.

Kent, UK. Joined February 2009

114 Following, 251 Followers

@anon_opin Well, you could use either. I guess it’s just six of one and half a dozen of the other.

@ivanfioravanti Oh great. “MTP”. Just what we need. Another cryptic acronym that no one understands.

@mrdoornbos 2K? You had 2K? Luxury! Lol. (We did get the 16K RAM pack.)

@codeRunnerUK Same thing. Just NTSC and 2k of RAM on board. I should find a ZX81 for my collection.

@bestofStarTrek I was skeptical at first but he won me over. He didn’t always have the best episodes but I thought that, by the time of the final series with those multi-episode stories, they had something special going on. I wish it had continued.

@AlexJonesax I don’t know what “you do you” means. I’m just offering my experience. It’s entirely up to the person reading if they take it or leave it. (Not that anyone really has any choice until Apple gets their fingers out and brings out the next Mac Studio. Lol.)

@codeRunnerUK Hey, you do you - no need to justify purchase to me; sounds smart. Great model yes.

I think it's the capacity not being sufficient for demand which is artificially pushing the prices higher than most people can reasonably afford. This is not a problem that's going to solve itself or go away any time soon. We're kind of hoping for the bubble to burst and for AI data centres to flood the market with their cast-off/unused RAM as they try to make their money back.

What I'm hoping for is that a company like Apple has another "M-series moment": not wanting to be held to ransom by a supplier, they develop their own fabrication plant and their own RAM suited to Apple products and their M-series chips. Then they can set their own prices, and position themselves strongly in a market that is currently struggling badly.

No one else is really likely to do this, because they're afraid the bubble might burst and any money they've put into a fabrication plant will be money wasted. But a company like Apple, that's already shown they have a longer term vision (via Apple Silicon) could really do it.

Maybe that's the reason for the delay to the M5 Ultra, and the unavailability of the base Mini, the Studios, etc. Maybe they're not ready yet to put their own RAM into mass-production?

Yeah, it's only a pipe dream but ... well, it could happen.

I really hope the upcoming energy crisis doesn’t affect personal hardware prices.

This is my last week at my company, but I'm still hearing a lot about supply chain issues from the field.

We're seeing supply bottlenecks for countless raw materials, and logistics costs are skyrocketing.

It’s not just DRAM.

Hardware requires countless components, and some are already facing shortages.

Data centers demand massive amounts of energy, and even water is becoming scarce.

It's becoming increasingly likely that we'll see price hikes across the board—not just for hardware, but for Cloud AI as well.

@durreadan01 I remember when this was first hyped in August last year. I said “If that comes out at £599, I’m buying one on the spot!” It came out at £599 and I bought … a used M1 MBA for £380 … lol. But I did then get a Neo for a relative. That counts, right?

@tomfgoodwin I tried “don’t think or reason, just respond”, and it started a reasoning process saying “I won’t show my thinking”. Lol.

@RhysSullivan Hmm, I thought it might respond "You are conscious". Lol.

@jun_song People say "local LLMs are slow" or "you need a GPU for speed (won't mention the lack of vRAM)". In truth, everything is a compromise. What is your privacy worth? For me, it's worth slower tokens/second. It's a compromise I'm prepared to make.

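On 16 February 2026, Alibaba announced Qwen3.5.

Small models with tremendous performance: it was the start of the real local LLM boom.

Until then, local LLMs were treated as toys that ran only on very expensive computers yet performed worse than the free models.

Open source was said to make no money, so research was not being done properly either.

From this point on, people began to open their eyes.

Frontier labs cut the usage limits on their subscription plans without prior notice, and increased token consumption.

As a result, the $20 plan became little more than a trial, and most people started subscribing to the $200 plan.

API prices went up with every update that made no real difference, and as a result companies turned to open source too.

Many people had their accounts suspended without being given a proper reason, and could not even get customer support.

Meanwhile, our data was handed over and used for training.

All of this is still happening right now.

Many people who sense that something is off are moving over to local LLMs.

Many researchers have joined in and are advancing the technology, and in less than three months it has made tremendous progress.

Qwen3.6 27b beat Qwen3.5 397b in just two months.

And it is now possible to run a model at the level of the latest Sonnet on a MacBook.

It has advanced at a pace that bears no comparison with cloud AI, which is stuck on compute bottlenecks, with benchmarks going up and nothing else changing.

My sincere thanks to all of these people.

Open source will win.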

I generally think about my story universe which, by consequence, means I'm thinking "I wonder if I can get my local LLM to do this..." and then enhance the process. But I've been dabbling with AI/local LLM since the end of 2024, so it all feels a bit commonplace these days.

I do like the advances - particularly in image and video generation. Both of those were a bit rubbish on the Mac until just a couple of months ago.

Can we have a simple way to turn off thinking on Qwen3.5/3.6? I ask 9B a simple question about a chapter in my story and it spends ages churning out "but, wait" reasoning/thinking for almost 4,000 tokens before giving a short paragraph as a response. This is not "reasoning". It's "dancing around the houses, pretending to think".

@MarioBojic "Trust your feed"? I wouldn't trust the EU to sit the right way on a toilet seat. (Thanks to Rowan Atkinson for that one.)

@ianpauldukes Well he keeps telling the rest of us that we won't need money when AI makes everything for free. Makes you wonder why he needs $10 trillion. Not suspicious at all.

You can't hoard $10 trillion for yourself without taking it away from everyone else. He's telling the world he plans to impoverish us all. Real supervillain shit.

Watcher.Guru@WatcherGuru

JUST IN: Elon Musk says his goal is to reach a $10 trillion net worth. "$10T or bust"

Neither like nor dislike. I like that open source models bring the ability for a humble creative person to realise their vision without the traditional hurdle of requiring resources, money, and a thick skin.

I write my own stories, and I don't care if no one ever reads them. Over the years I've played around with cover photos - and the evolution from just "free" images to stock photos to graphics apps combining photos to images generated from "moderated" frontier AI, to using open weight free AI image generation/video generation is a progression I particularly appreciate.

30 years ago I just had text. Today, I'm bringing my scenes to life as short videos, and as full-cast audiobooks. Tomorrow I could be making my own movie from my story. I think this is a good thing.

It’s crazy how much AI polarises people. On the one side it’s like god’s gift to the world that’ll save us all, make us all rich, and give free unicorns to all; while on the other it’s “stringing together a bunch of words”.

Meanwhile, those outside of our little AI bubble still think it’s Arnie saying “I’ll be back”, and shout “No, I want to talk to a real person!” when trying to get customer support.

LOL

Billionaire AI promoter suddenly realizes WHAT EVERYONE HAS BEEN SAYING FOR YEARS.

Get it through your fucking heads:

—LLMs are a slot machine for tokens.

—Chatbots are a fuzzy search engine on a database.

—They can never be AGI, physically or mathematically.

End of story.

Mark Cuban@mcuban

I’m coming to the conclusion that the biggest challenge for Enterprise AI, and AI in general, as of now, is that it’s still impossible to make sure that everyone gets the same answer to the same question, every time. Which is a great response to the doomers. AI doesn’t know the consequences of its output. Judgement and the ability to challenge AI output is becoming increasingly necessary, and valuable. Which makes domain knowledge more valuable by the second. Am I wrong?

750GB/s doesn’t seem like that big of a leap given the emphasis on AI that’s going on right now. Sure, since the 68 of the M1 we’ve seen massive improvements and, certainly, my M4 Max does far better than my M4 Pro (546 vs 273), but it looks more like incremental improvements. The 614 of the M5 Max must already be a barely noticeable step up from the M4 Max’s 546. Would 750 of the M6 Max really be that much quicker than the 614 of the M5 Max?

Good to see improvements, but it’s looking like we’re better off waiting several generations before replacing our current systems. I would be far more interested in seeing 192GB unified RAM at an affordable price.
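For a rough sense of scale, the bandwidth figures quoted above can be turned into percentage steps. This is only a back-of-the-envelope sketch: the numbers are the ones from the post, while the 20GB model size and the "every weight is read once per token" ceiling are assumptions made up for illustration, not measurements.

```python
# Memory bandwidth figures (GB/s) exactly as quoted in the post above.
BANDWIDTH_GBPS = {
    "M1": 68,
    "M4 Pro": 273,
    "M4 Max": 546,
    "M5 Max": 614,
    "M6 Max": 750,
}

# Hypothetical model footprint in GB, chosen only for illustration.
MODEL_SIZE_GB = 20.0


def percent_gain(old: float, new: float) -> float:
    """Relative increase from old to new, in percent."""
    return (new - old) / old * 100.0


def decode_ceiling_tokens_per_s(bandwidth_gbps: float, model_size_gb: float) -> float:
    """Rough upper bound on generation speed, ASSUMING all weights are read
    once per generated token and nothing else limits throughput."""
    return bandwidth_gbps / model_size_gb


if __name__ == "__main__":
    steps = [("M4 Pro", "M4 Max"), ("M4 Max", "M5 Max"), ("M5 Max", "M6 Max")]
    for old, new in steps:
        gain = percent_gain(BANDWIDTH_GBPS[old], BANDWIDTH_GBPS[new])
        print(f"{old} -> {new}: +{gain:.1f}%")
    for chip, bandwidth in BANDWIDTH_GBPS.items():
        ceiling = decode_ceiling_tokens_per_s(bandwidth, MODEL_SIZE_GB)
        print(f"{chip}: at most ~{ceiling:.1f} tokens/s for a {MODEL_SIZE_GB:.0f}GB model")
```

On these figures the M4 Max to M5 Max step is about 12.5% and the M5 Max to M6 Max step about 22%, against the 100% jump from M4 Pro to M4 Max, which is roughly the "incremental" picture described above.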
