Conor Durkan (@conormdurkan)

I like the Bayesian framing of reward-based post-training (i.e. reward-maximization with a KL penalty).

Up to an additive constant, reward functions are log-likelihoods, and the pre-trained model is the prior. The target posterior is then proportional to the product of the likelihoods and the prior (the KL weight acts like a temperature: changing it equivalently sharpens or smooths your likelihoods). Rewards can be hard, as in math or code verification, or soft, as in subjective preference.
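To make that concrete, here is the standard identity behind the framing, written in notation that is my own assumption rather than the post's: $\pi_0$ for the pre-trained model, $r$ for the reward, $\beta > 0$ for the KL weight, and $Z$ for the normalizer. The KL-penalized objective is

$$
J(\pi) = \mathbb{E}_{x \sim \pi}\big[r(x)\big] - \beta \, \mathrm{KL}(\pi \,\|\, \pi_0),
$$

and the posterior target is

$$
\pi^*(x) = \frac{1}{Z} \, \pi_0(x) \exp\!\big(r(x)/\beta\big), \qquad Z = \sum_x \pi_0(x) \exp\!\big(r(x)/\beta\big).
$$

Expanding $\mathrm{KL}(\pi \,\|\, \pi^*) = \mathrm{KL}(\pi \,\|\, \pi_0) - \tfrac{1}{\beta}\,\mathbb{E}_{x \sim \pi}[r(x)] + \log Z$ and rearranging gives

$$
J(\pi) = -\beta \, \mathrm{KL}(\pi \,\|\, \pi^*) + \beta \log Z .
$$

Since $\beta \log Z$ does not depend on $\pi$, maximizing $J$ is the same as minimizing $\mathrm{KL}(\pi \,\|\, \pi^*)$. Here $r/\beta$ plays the role of the log-likelihood, so a small $\beta$ sharpens it and a large $\beta$ smooths it.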

This means post-training (of this kind at least) minimizes KL(model || posterior), whereas pre-training minimizes KL(data || model). It also means post-training is mode-seeking (as opposed to mode-covering, like pre-training), so those rewards had better be well calibrated.
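A minimal sketch of the mode-seeking vs. mode-covering distinction, on a toy problem that is entirely my own assumption: a bimodal target on a 1D grid stands in for the posterior (or the data), and the "model" is a single unit-width Gaussian whose mean is the only free parameter. The helper names (`gaussian_on_grid`, `kl`) are hypothetical, and nothing here comes from the referenced paper.

```python
import numpy as np


def gaussian_on_grid(x, mean, std):
    """Discretized Gaussian on the grid x, normalized to sum to 1."""
    w = np.exp(-0.5 * ((x - mean) / std) ** 2)
    return w / w.sum()


def kl(p, q):
    """KL(p || q) for strictly positive discrete distributions."""
    return float(np.sum(p * (np.log(p) - np.log(q))))


x = np.linspace(-10.0, 10.0, 2001)

# Toy bimodal target: equal-weight modes at -3 and +3.
target = 0.5 * gaussian_on_grid(x, -3.0, 1.0) + 0.5 * gaussian_on_grid(x, 3.0, 1.0)

# Candidate model means, in steps of 0.1.
means = np.linspace(-6.0, 6.0, 121)

# Reverse KL, KL(model || target): the post-training direction.
reverse = [kl(gaussian_on_grid(x, m, 1.0), target) for m in means]
# Forward KL, KL(target || model): the pre-training direction.
forward = [kl(target, gaussian_on_grid(x, m, 1.0)) for m in means]

mu_reverse = float(means[int(np.argmin(reverse))])
mu_forward = float(means[int(np.argmin(forward))])

print(f"reverse-KL best mean: {mu_reverse:+.1f}")
print(f"forward-KL best mean: {mu_forward:+.1f}")
```

In this toy setup the reverse-KL fit commits to one of the two modes (which one is arbitrary, by symmetry), while the forward-KL fit sits between them to cover both. That is the sense in which a miscalibrated reward matters more under the mode-seeking objective: the model concentrates wherever the posterior puts a peak.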

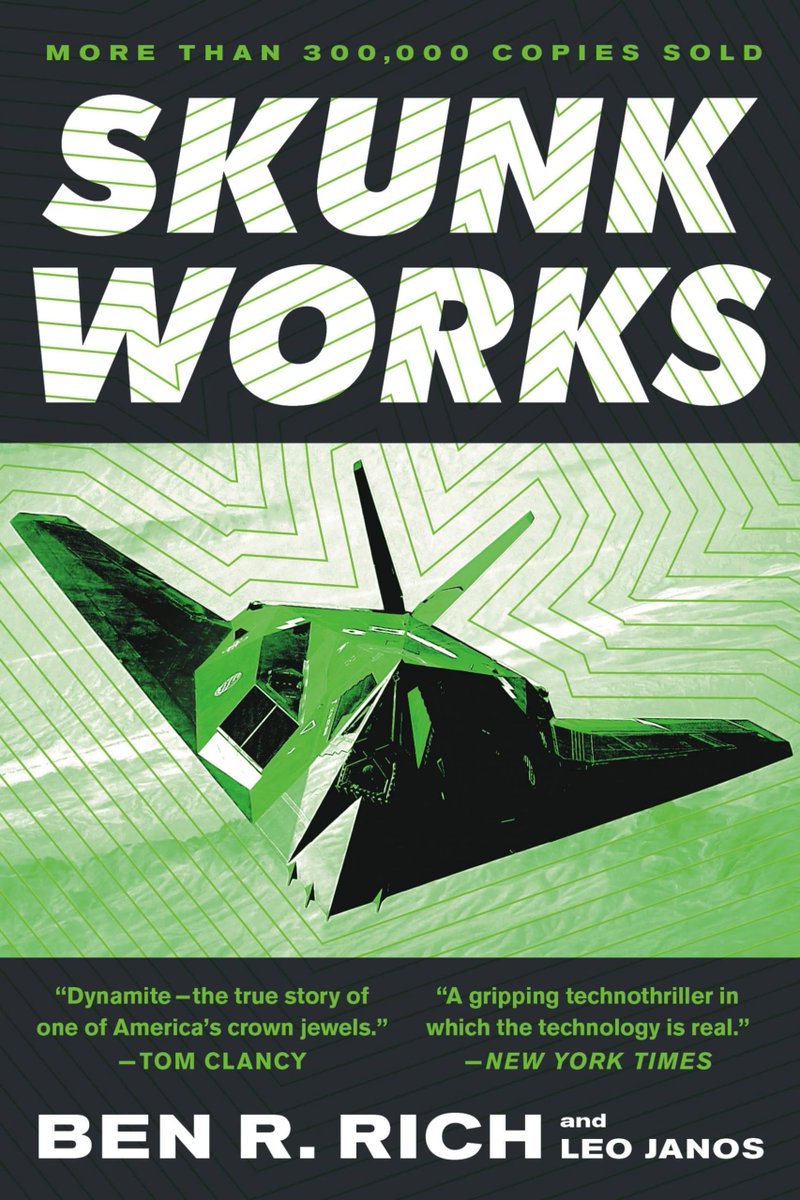

(Figure from 'RL with KL penalties is better viewed as Bayesian inference', link below along with other useful references)