Antony Alvarez

44 posts

@cpolistadev

Political Scientist and Developer.

Lima, Peru · Joined November 2018

752 Following · 35 Followers

Antony Alvarez retweeted

By @GeorgeSelgin

"A report by Brian Blackstone on Switzerland's deflation. He observes that the Swiss case contradicts the widespread belief among economists (“as close to an economic consensus as you can get,") that deflation is necessarily a bad thing."

cato.org/blog/switzerla…

English

Antony Alvarez retweeted

Keynesian macroeconomics, at least in its vulgar form, strikes me as deeply misguided. For a long time, I have suspected that it appeals most strongly to those who imagine the economy in mechanical terms: the economist stands before a control panel, pressing buttons and pulling levers to produce the desired aggregate outcomes. That vision is hard to reconcile with an economy understood as the emergent result of millions of individuals trying to improve their own circumstances, each acting on local knowledge, incentives, expectations, and constraints.

The deeper problem is that the beginning economics student is almost taught to develop a kind of split intellectual personality. In microeconomics, he learns the centrality of opportunity cost: every action requires giving up the next-best alternative. In the world of vulgar Keynesianism, however, that lesson seems to vanish. Because recessions are said to create idle resources, we are told that government can increase spending, draw those resources into some politically chosen use, and do so without sacrificing a better alternative. But if no better alternative is being sacrificed, in what meaningful sense was the resource scarce? And if the resource could have been employed elsewhere, then the opportunity cost has merely been ignored rather than eliminated.

The same split appears in the treatment of prices. In microeconomics, the student learns about the virtues of competition and the communicative and coordinating role of market prices. Prices transmit dispersed knowledge, discipline plans, and help millions of strangers coordinate their activities without central direction. Yet in vulgar Keynesian macroeconomics, the student is then asked to accept that a monopolistic institution, the central bank, should manipulate one of the most important prices in the economy, the interest rate, in order to “stimulate” aggregate demand. The logic of price coordination is celebrated in one classroom and suspended in the next.

This is why Frank H. Knight’s statement in his 1951 essay “The Role of Principles in Economics and Politics” still feels so relevant. Knight saw the danger of treating macroeconomics as a separate kingdom, detached from the basic principles that govern individual choice, relative prices, scarcity, and coordination.

There is value in studying the nominal and wage rigidities to which Keynes drew attention, and which New Keynesian economists later incorporated into more formal macroeconomic models. Those issues deserve serious analysis. But I find it highly plausible that the Keynesian Revolution produced more costs than benefits. Its greatest damage may have been methodological: it helped divide microeconomics and macroeconomics into sealed compartments, encouraging generations of economists to forget in macro what they had just learned in micro. Even where Keynesian economics produced insights worth preserving, that intellectual fracture did real harm to the progress of economic thought.

English

Antony Alvarez retweeted

When an app starts to grow, the frontend needs to connect to several services: users, payments, billing, notifications, and so on.

The problem is that the client ends up carrying too much logic: it has to know which service to call, how to authenticate, how to handle different response formats, and what to change when something internal is modified.

One way to solve this is to use an API Gateway: an intermediate layer that acts as the entry point between the client and the services.

frontend → API Gateway → services

The frontend talks to a single place. The gateway then takes care of:

1) Routing each request to the correct service

2) Validating authentication and permissions

3) Applying rate limits and caching

4) Recording logs and metrics

5) Combining responses from several services when needed

This way the frontend does not need to know how each service works internally. And many internal changes can be handled in the gateway without modifying the client.

But it is not free: if the gateway fails, it can affect the whole app. And if it starts to concentrate too much logic, it can turn into a bottleneck that is hard to maintain and scale.
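A minimal Python sketch of the gateway responsibilities listed above, assuming in-process functions stand in for the backend services. The names `Gateway`, `users_service`, `payments_service`, the token value, and the simple per-token request cap are all hypothetical; a real gateway sits on the network and uses time-windowed rate limits. Only routing, authentication, rate limiting, and logging are shown.

```python
from dataclasses import dataclass, field
from typing import Callable, Dict, List, Set, Tuple


# Hypothetical stand-ins for real backend services.
def users_service(path: str) -> dict:
    return {"service": "users", "path": path}


def payments_service(path: str) -> dict:
    return {"service": "payments", "path": path}


@dataclass
class Gateway:
    routes: Dict[str, Callable[[str], dict]]
    valid_tokens: Set[str]
    max_requests: int = 3  # per-token cap, no time window (kept simple on purpose)
    counts: Dict[str, int] = field(default_factory=dict)
    log: List[Tuple[str, str]] = field(default_factory=list)

    def handle(self, token: str, path: str) -> dict:
        # Authentication: reject unknown tokens before doing anything else.
        if token not in self.valid_tokens:
            return {"status": 401, "body": None}
        # Rate limit: count every authenticated request.
        self.counts[token] = self.counts.get(token, 0) + 1
        if self.counts[token] > self.max_requests:
            return {"status": 429, "body": None}
        # Routing: pick the service from the first path segment.
        prefix = "/" + path.strip("/").split("/")[0]
        service = self.routes.get(prefix)
        if service is None:
            return {"status": 404, "body": None}
        # Logging: record what was forwarded.
        self.log.append((token, path))
        return {"status": 200, "body": service(path)}


gateway = Gateway(
    routes={"/users": users_service, "/payments": payments_service},
    valid_tokens={"secret-token"},
)
response = gateway.handle("secret-token", "/users/42")
```

The client only ever calls `gateway.handle`, so renaming or splitting a service means editing `routes`, not the client.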

Spanish

Antony Alvarez retweeted

Sub-Agents vs Agent Teams in Claude Code:

Sub-agents get their own system prompt, their own tool set, and a clean context window. They report back to the parent and terminate.

Agent teams get all of that plus three things sub-agents don't have:

- a shared task list with dependency tracking

- peer-to-peer messaging between teammates

- persistent context that accumulates over time.
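To make "a shared task list with dependency tracking" concrete, here is a small generic Python sketch. It is not Claude Code's implementation; `Task`, `SharedTaskList`, and the task names are hypothetical and only illustrate the idea that a task becomes available to teammates once everything it depends on is done.

```python
from dataclasses import dataclass, field
from typing import Dict, Iterable, List, Set


@dataclass
class Task:
    name: str
    depends_on: Set[str] = field(default_factory=set)
    done: bool = False


class SharedTaskList:
    """One list that every teammate reads from and writes to."""

    def __init__(self) -> None:
        self.tasks: Dict[str, Task] = {}

    def add(self, name: str, depends_on: Iterable[str] = ()) -> None:
        self.tasks[name] = Task(name, set(depends_on))

    def ready(self) -> List[str]:
        # A task is ready when it is not done and all of its dependencies are done.
        return sorted(
            t.name
            for t in self.tasks.values()
            if not t.done and all(self.tasks[d].done for d in t.depends_on)
        )

    def complete(self, name: str) -> None:
        self.tasks[name].done = True


board = SharedTaskList()
board.add("schema")
board.add("api", depends_on=["schema"])
board.add("docs")
```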

I published an article today that dives into a lot more detail.

Read it below.

Avi Chawla (@_avichawla)

English

Antony Alvarez retweeted

This Saturday I went to a Claude Code hackathon. We didn't win.

The team was 4 developers with years of experience plus an executive from a major educational institution. We built something that worked: an MCP to consume 1,400 open data sources from CDMX, with analysis, charts, even a chatbot on top.

A team that told the story better won.

While we were optimizing the code, they were optimizing the narrative. Their video was good, their presentation was good, their problem was clear.

The hackathon wasn't about building. It was about selling.

And I, who have been programming since I was 10, made the same mistake I criticize in other teams: believing that the technology is the product.

Spanish

Antony Alvarez retweetledi

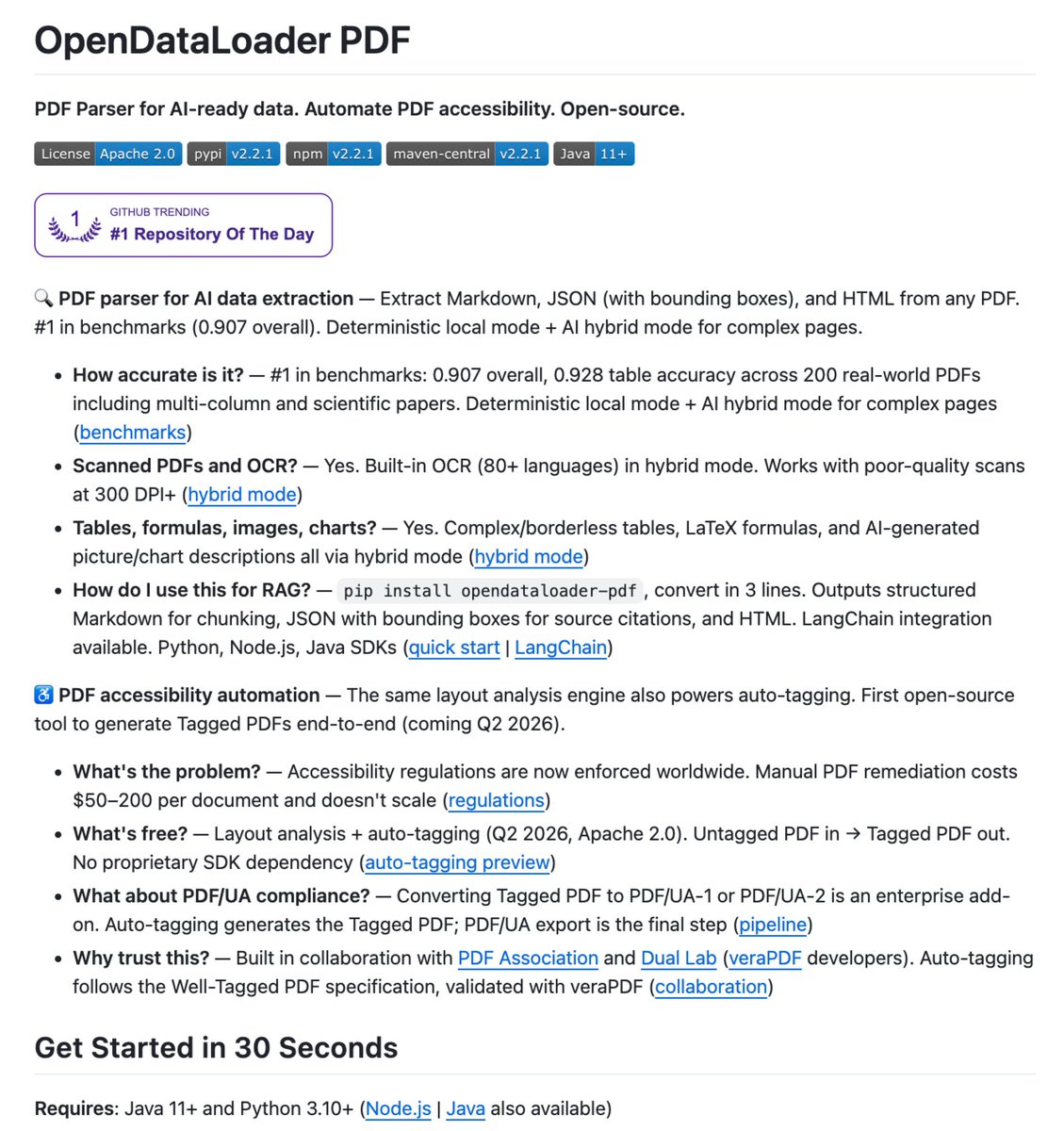

有人刚刚开发了一个工具,可以将 PDF 转换为

干净、结构化的 Markdown

速度达到 100 页/秒 🤯

不需要 GPU。

不需要 API 成本。

没有混乱的解析。

只有原始的、可用的数据。

它可以轻松处理的内容:

• 表格 → 完美提取

• 破损布局 → 自动修复

• 嵌套数据 → 结构化清理

• 扫描混乱 → 转换为可读

这不是小升级。

这会在一夜之间消除 90% 的手动数据清理。

这个工具叫 OpenDataLoader

而且……它是开源的。

仓库 → t.co/YTc1veZmFZ

中文

Antony Alvarez retweeted

Quantum Haber 🚨 Friends, PDFs are officially becoming history.

A developer has released an incredible tool: **OpenDataLoader**

It converts scanned, complex PDFs with broken layouts into clean, structured Markdown at 100 pages per second.

What does it do?

• Parses tables, nested data, and complex layouts flawlessly

• Makes even scanned documents readable

• Requires no GPU

• Has no API cost

• Is fully open source and runs locally

In short, it finishes 90% of manual data cleaning work in one go.

It is a real game-changer for RAG pipelines, academic work, archive digitization, and enterprise document tasks.

🔗 GitHub: github.com/OpenDataLoader

How much do you think tools like this will make life easier for everyone struggling with PDFs?

Turkish

Antony Alvarez retweeted

Convert PDF to Markdown in seconds.

This library does it, and it's extremely fast.

✓ Extracts tables and mathematical formulas

✓ Available for Node.js, Python, and Rust

Perfect for fine-tuning and extracting information:

→ github.com/firecrawl/pdf-…

Spanish

Antony Alvarez retweeted

Two Anthropic engineers just changed the way devs think about AI.

Barry Zhang and Mahesh Murag took the stage at the AI Engineer Code Summit and said one sentence that bothered a lot of people:

"Stop building agents. Build Skills."

In 16 minutes they prove that the entire industry is solving the wrong problem.

Here is what most people missed:

→ Skills are folders. Literally folders with markdown files.

→ They teach Claude YOUR workflow, YOUR expertise, YOUR domain.

→ A single generic agent + a library of specific Skills beats dozens of specialized agents.

→ Fortune 100s are already deploying Skills at scale to teach agents about internal processes.

→ Productivity teams with 10,000+ devs use Skills to standardize how code is written.

The analogy they used is perfect:

Who do you want doing your income taxes? The genius with a 300 IQ who has never seen tax law, or the experienced accountant who has been doing this for 20 years?

Intelligence without expertise is entertainment.

Packaged expertise is productivity.

What changed: Anthropic stopped trying to create different agents for each domain.

They realized that with Claude Code, the pattern is always the same. A model coupled to a runtime with a filesystem.

The difference between a mediocre agent and an extraordinary one is not the model. It is the domain knowledge you feed it.

Skills solve this with progressive disclosure. The agent loads only the skill's name and description. When it is relevant, it pulls in the SKILL.md. When it needs more, it navigates the reference files. Zero wasted context.
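A rough Python sketch of the progressive disclosure idea, assuming each skill is a folder holding a `SKILL.md` whose first lines are a `---` delimited header with `name:` and `description:` fields. That layout, the helper names (`read_header`, `index_skills`, `load_skill`), and the `tax-filing` example are assumptions for illustration, not Anthropic's loader.

```python
import tempfile
from pathlib import Path
from typing import Dict


def read_header(skill_md: Path) -> Dict[str, str]:
    """Return only the `key: value` pairs found between the leading '---' markers."""
    meta: Dict[str, str] = {}
    lines = skill_md.read_text(encoding="utf-8").splitlines()
    if lines and lines[0].strip() == "---":
        for line in lines[1:]:
            if line.strip() == "---":
                break
            key, _, value = line.partition(":")
            meta[key.strip()] = value.strip()
    return meta


def index_skills(root: Path) -> Dict[str, dict]:
    """Level 1: keep just the name and description of every skill."""
    index: Dict[str, dict] = {}
    for skill_md in sorted(root.glob("*/SKILL.md")):
        meta = read_header(skill_md)
        name = meta.get("name", skill_md.parent.name)
        index[name] = {"description": meta.get("description", ""), "path": skill_md}
    return index


def load_skill(index: Dict[str, dict], name: str) -> str:
    """Level 2: read the full SKILL.md only once the skill is judged relevant."""
    return index[name]["path"].read_text(encoding="utf-8")


# Usage with a throwaway skill folder.
with tempfile.TemporaryDirectory() as tmp:
    skill_dir = Path(tmp) / "tax-filing"
    skill_dir.mkdir()
    (skill_dir / "SKILL.md").write_text(
        "---\nname: tax-filing\ndescription: How we file income taxes\n---\n"
        "Step 1: collect receipts.\n",
        encoding="utf-8",
    )
    index = index_skills(Path(tmp))
    loaded = load_skill(index, "tax-filing")
```

Only the small `index` needs to sit in the agent's context up front; the body of each skill is read on demand.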

This is not a feature. It is a paradigm shift.

Whoever understands this now will be operating at another level 90 days from now.

Whoever ignores it will keep writing thousand-word prompts every time they open the chat. And still explain over and over what they “really” want.

Portuguese

Antony Alvarez retweeted

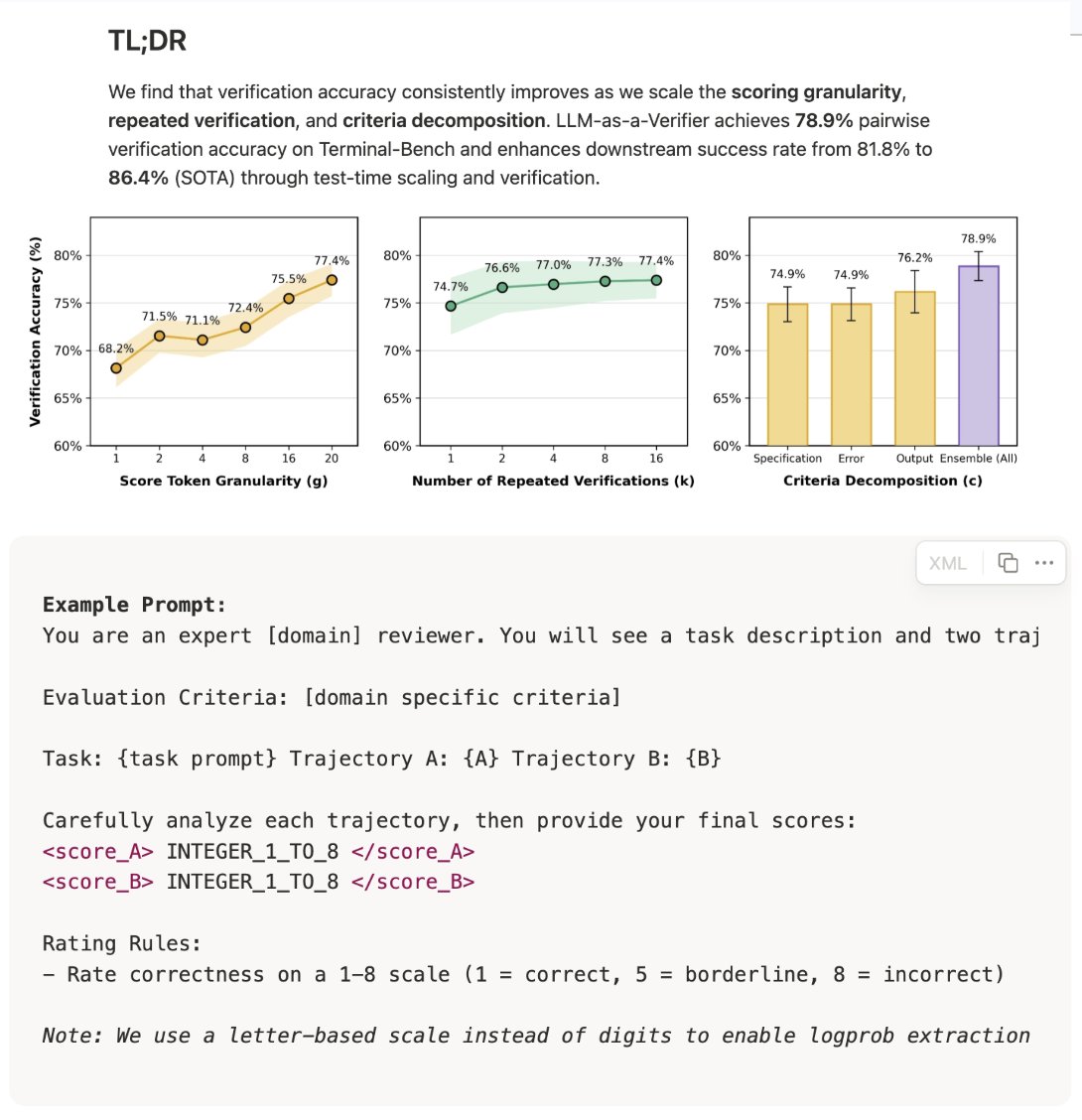

Strongly recommend the LLM-as-a-Verifier writeup.

Biggest takeaway for me is that increasing scoring granularity makes the verifier more effective. This indicates that LLM judges / verifiers are developing new (and better) capabilities.

This did not work well 1-2 years ago. In fact, LLM-as-a-Judge best practice was that lower scoring granularity (e.g., binary, ternary, or 1-5 Likert score) worked way better than granular scores (e.g., 1-100 scale). This was a constant recommendation I gave for setting up LLM judges properly. It seems like recent frontier LLMs now are better at scoring at finer granularities, making this best practice (potentially) obsolete.

One caveat to this finding is that the scoring setup used in this writeup is a specific setup based upon logprobs. Instead of just using the score token output by the LLM as the result, they compute the logprob of each possible score token and take a weighted average of scores (with weights given by probabilities). Then, they go further by expanding this weighted average across repeated verifications and multiple criteria:

$$\text{Reward} = \frac{1}{CK} \sum_{c=1}^{C} \sum_{k=1}^{K} \sum_{g=1}^{G} p_{c,k,g} \, v_g$$

where $p_{c,k,g}$ is the probability of the $g$-th score token (recovered from its logprob) for criterion $c$ on verification $k$, $v_g$ is that token's score value, C is the total number of evaluation criteria, K is the number of repeated verifications, and G is the scoring granularity (i.e., number of unique scoring output options). The reward determines if a particular output passes verification across criteria.
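The reward can be sketched in a few lines of Python, reading each weight as a probability obtained by exponentiating the score token's logprob, as the text describes. The nested-list input layout (`logprobs[c][k]` maps each score value to its logprob) and the function name `reward` are assumptions for illustration, not the writeup's code, and the sketch assumes the probabilities over the G options already sum to roughly one.

```python
import math
from typing import Dict, List


def reward(logprobs: List[List[Dict[int, float]]]) -> float:
    """Average, over C criteria and K repeated verifications, of the
    probability-weighted score across the G score options."""
    num_criteria = len(logprobs)
    num_repeats = len(logprobs[0])
    total = 0.0
    for per_criterion in logprobs:
        for per_run in per_criterion:
            # Inner sum over the G score options: probability * score value.
            total += sum(math.exp(lp) * value for value, lp in per_run.items())
    return total / (num_criteria * num_repeats)


# One criterion, one verification, two score options (1 and 2).
example = [[{1: math.log(0.25), 2: math.log(0.75)}]]
example_reward = reward(example)
```

With probabilities 0.25 and 0.75 on scores 1 and 2, the expected score is 0.25 · 1 + 0.75 · 2 = 1.75.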

When using this logprob setup, we see consistent gains in verifier accuracy by:

- Increasing scoring granularity G.

- Increasing repeated verifications K.

- Increasing the number of evaluation criteria C.

The last two findings are in line with prior work, but the fact that higher scoring granularity is helpful is interesting!

In the LLM-as-a-Verifier paper, this system is used at inference time in a pairwise fashion as described below.

"To pick the best trajectory among N candidates for a given task, a round-robin tournament is conducted. For every pair (i, j) the verifier produces Reward(i) and Reward(j) using the formula above. The trajectory with the higher reward receives a win, and the trajectory with the most wins across all \binom{N}{2} pairs is selected."
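The quoted round-robin selection could look roughly like this in Python. The `pair_rewards` callback (returning the two rewards for a pair), the choice to award no win on a tie, and breaking a tie in total wins by lowest index are assumptions; the quote does not specify them.

```python
from itertools import combinations
from typing import Callable, Sequence, Tuple


def pick_best(
    candidates: Sequence[str],
    pair_rewards: Callable[[str, str], Tuple[float, float]],
) -> int:
    """Return the index of the candidate with the most pairwise wins."""
    wins = [0] * len(candidates)
    # Visit every unordered pair (i, j) exactly once: N choose 2 comparisons.
    for i, j in combinations(range(len(candidates)), 2):
        reward_i, reward_j = pair_rewards(candidates[i], candidates[j])
        if reward_i > reward_j:
            wins[i] += 1
        elif reward_j > reward_i:
            wins[j] += 1
    # max() keeps the first index among equal win counts.
    return max(range(len(candidates)), key=wins.__getitem__)


# Toy verifier: longer trajectories score higher (purely illustrative).
def toy_rewards(a: str, b: str) -> Tuple[float, float]:
    return float(len(a)), float(len(b))


best = pick_best(["aa", "a", "aaaa"], toy_rewards)
```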

English

Antony Alvarez retweeted

There is a lot of talk about agents.

But there is a more important concept for understanding how they work: the harness.

If you use Claude Code, Cursor, or Codex, much of what happens comes from there.

An LLM, on its own, only generates text. It cannot read your files, run code, or make changes. That is what the harness is for: the system that connects it to your environment, gives it access to tools (read, edit, execute), and takes care of executing what the model proposes.

It works like this:

→ the model receives context (your code, instructions, history)

→ with that it decides what to do

→ the system executes that action (read a file, run a command, etc.)

→ it returns the result as new context

→ the model decides the next step

And it repeats that loop until the task is solved.

In the video he brings it down to something very concrete.

He says that with 3 tools you can already put together something basic: finding code, reading files, and editing them. With that you already have a functional agent that can work on a repo.
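The loop and the three tools can be sketched in Python as follows. This is not the harness from the video: the "repo" is an in-memory dict, and a scripted function stands in for the LLM so the example runs without any API. `make_tools`, `run_harness`, `scripted_model`, and the action format (`{"tool": ..., "args": [...]}`) are all hypothetical.

```python
from typing import Callable, Dict, List

Files = Dict[str, str]


def make_tools(files: Files) -> Dict[str, Callable[..., str]]:
    def find(text: str) -> str:
        # Find code: list the files whose contents include the text.
        return ",".join(sorted(p for p, body in files.items() if text in body))

    def read(path: str) -> str:
        return files[path]

    def edit(path: str, old: str, new: str) -> str:
        files[path] = files[path].replace(old, new)
        return "ok"

    return {"find": find, "read": read, "edit": edit}


def run_harness(
    model: Callable[[List[dict]], dict], files: Files, task: str, max_steps: int = 10
) -> List[dict]:
    tools = make_tools(files)
    context = [{"role": "user", "content": task}]
    for _ in range(max_steps):
        action = model(context)  # the model decides what to do
        if action["tool"] == "done":
            break
        result = tools[action["tool"]](*action["args"])  # the harness executes it
        context.append({"role": "tool", "content": result})  # result becomes new context
    return context


def scripted_model(context: List[dict]) -> dict:
    # Stand-in for an LLM: picks the next step from how much context exists so far.
    steps = [
        {"tool": "find", "args": ["helo"]},
        {"tool": "read", "args": ["app.py"]},
        {"tool": "edit", "args": ["app.py", "helo", "hello"]},
        {"tool": "done", "args": []},
    ]
    return steps[len(context) - 1]


repo = {"app.py": "print('helo')"}
history = run_harness(scripted_model, repo, "fix the typo in the greeting")
```

Everything the model "sees" is whatever the harness appends to `context`, which is why changing the harness changes the outcome.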

And that is the key.

That system is what defines what information the model sees, how it can act, and how it advances at each step. That is why small changes in the harness completely change the result.

In fact, he shows a case where the same model (Opus) goes from ~77% to ~93% on a coding benchmark just by changing the harness.

So the difference is no longer only in the model, but in how you build the system around it.

I recommend the video. Very good.

Theo - t3.gg (@theo)

Agent harnesses aren't the black magic many of y'all seem to think they are. To prove it, I built one.

Spanish

Antony Alvarez retweetledi

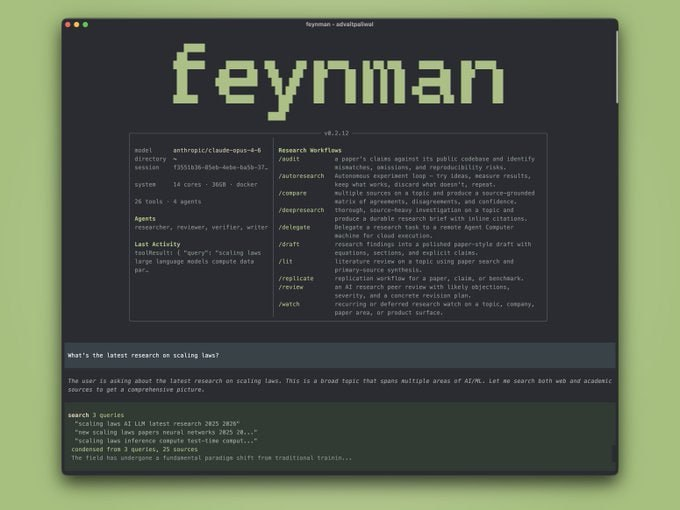

Feynman, la CLI open source, que lo hace realidad.

Tú solo introduces una pregunta y cuatro agentes IA se encargan del resto:

- Investigador: extrae evidencia de papers, repos y web.

- Revisor: hace revisión por pares simulada con feedback de calidad.

- Redactor: elabora borradores estructurados y profesionales.

- Verificador: valida cada URL, elimina enlaces rotos y cruza estudios con repositorios para detectar claims falsos.

Cero nube.

Totalmente gratuito y local.

Investigación seria desde tu terminal.

Español

Antony Alvarez retweeted

If data comes in, it always gets validated.

And it gets validated in 3 places:

frontend, backend, and database.

Why?

Because each layer protects something different:

1) Frontend: to prevent simple mistakes and improve the user experience. There is no point in sending the backend something you already know is wrong.

2) Backend: to filter whatever comes from outside. This is where you assume everything may be manipulated or corrupted.

3) Database: to ensure integrity.

If something gets that far, the DB should have strong types, constraints, and clear limits.

Now, what do you validate?

Data types, files (extension and size), and text free of scripts or malicious code. All of it validated against a schema (e.g. Zod).

Your app receives data all the time: forms, files, or URL params. And even if they come from your own frontend, they can be modified before arriving.

So never trust what the client sends.

If you don't validate, you are exposed to things like SQL Injection or SSRF.
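A small Python sketch of the backend and database layers, using only the standard library instead of a schema library such as Zod. The `users` table, the field limits, and the helper names (`validate_signup`, `make_db`, `save_user`) are hypothetical. The backend check rejects bad types and ranges, the parameterized query keeps input from being interpreted as SQL, and the `CHECK` constraints act as the last line of defense.

```python
import sqlite3


def validate_signup(payload: dict) -> dict:
    """Backend layer: treat the payload as untrusted and check types and ranges."""
    name = payload.get("name")
    age = payload.get("age")
    if not isinstance(name, str) or not (1 <= len(name) <= 50):
        raise ValueError("name must be a string of 1 to 50 characters")
    # bool is a subclass of int in Python, so reject it explicitly.
    if isinstance(age, bool) or not isinstance(age, int) or not (0 <= age <= 150):
        raise ValueError("age must be an integer between 0 and 150")
    return {"name": name, "age": age}


def make_db() -> sqlite3.Connection:
    """Database layer: NOT NULL and CHECK constraints enforce the same limits."""
    conn = sqlite3.connect(":memory:")
    conn.execute(
        """
        CREATE TABLE users (
            name TEXT NOT NULL CHECK (length(name) BETWEEN 1 AND 50),
            age INTEGER NOT NULL CHECK (age BETWEEN 0 AND 150)
        )
        """
    )
    return conn


def save_user(conn: sqlite3.Connection, payload: dict) -> None:
    clean = validate_signup(payload)
    # Placeholders (?) pass values as data, never as SQL text.
    conn.execute(
        "INSERT INTO users (name, age) VALUES (?, ?)",
        (clean["name"], clean["age"]),
    )


db = make_db()
save_user(db, {"name": "x'); DROP TABLE users;--", "age": 30})
```

The hostile-looking name is stored as plain text and the table survives, because the value never becomes part of the SQL statement.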

Spanish

Antony Alvarez retweeted

LLM Knowledge Bases

Something I'm finding very useful recently: using LLMs to build personal knowledge bases for various topics of research interest. In this way, a large fraction of my recent token throughput is going less into manipulating code, and more into manipulating knowledge (stored as markdown and images). The latest LLMs are quite good at it. So:

Data ingest:

I index source documents (articles, papers, repos, datasets, images, etc.) into a raw/ directory, then I use an LLM to incrementally "compile" a wiki, which is just a collection of .md files in a directory structure. The wiki includes summaries of all the data in raw/, backlinks, and then it categorizes data into concepts, writes articles for them, and links them all. To convert web articles into .md files I like to use the Obsidian Web Clipper extension, and then I also use a hotkey to download all the related images to local so that my LLM can easily reference them.

IDE:

I use Obsidian as the IDE "frontend" where I can view the raw data, the compiled wiki, and the derived visualizations. Important to note that the LLM writes and maintains all of the data of the wiki, I rarely touch it directly. I've played with a few Obsidian plugins to render and view data in other ways (e.g. Marp for slides).

Q&A:

Where things get interesting is that once your wiki is big enough (e.g. mine on some recent research is ~100 articles and ~400K words), you can ask your LLM agent all kinds of complex questions against the wiki, and it will go off, research the answers, etc. I thought I had to reach for fancy RAG, but the LLM has been pretty good about auto-maintaining index files and brief summaries of all the documents and it reads all the important related data fairly easily at this ~small scale.

Output:

Instead of getting answers in text/terminal, I like to have it render markdown files for me, or slide shows (Marp format), or matplotlib images, all of which I then view again in Obsidian. You can imagine many other visual output formats depending on the query. Often, I end up "filing" the outputs back into the wiki to enhance it for further queries. So my own explorations and queries always "add up" in the knowledge base.

Linting:

I've run some LLM "health checks" over the wiki to e.g. find inconsistent data, impute missing data (with web searches), find interesting connections for new article candidates, etc., to incrementally clean up the wiki and enhance its overall data integrity. The LLMs are quite good at suggesting further questions to ask and look into.

Extra tools:

I find myself developing additional tools to process the data, e.g. I vibe coded a small and naive search engine over the wiki, which I both use directly (in a web ui), but more often I want to hand it off to an LLM via CLI as a tool for larger queries.
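For a sense of scale, a "small and naive search engine" over a folder of .md files can be as little as the Python sketch below. It is not the author's tool: the term-count scoring, the function names (`tokenize`, `load_wiki`, `search`), and the sample documents are assumptions for illustration.

```python
import re
from collections import Counter
from pathlib import Path
from typing import Dict, List, Tuple


def tokenize(text: str) -> List[str]:
    return re.findall(r"[a-z0-9]+", text.lower())


def load_wiki(root: Path) -> Dict[str, str]:
    """Read every .md file under root into memory, keyed by relative path."""
    return {
        str(path.relative_to(root)): path.read_text(encoding="utf-8")
        for path in root.rglob("*.md")
    }


def search(docs: Dict[str, str], query: str, top_k: int = 5) -> List[Tuple[str, int]]:
    """Rank documents by how many times the query terms occur in them."""
    terms = tokenize(query)
    scored = []
    for path, text in docs.items():
        counts = Counter(tokenize(text))
        score = sum(counts[term] for term in terms)
        if score > 0:
            scored.append((path, score))
    # Highest score first; ties broken alphabetically by path.
    return sorted(scored, key=lambda item: (-item[1], item[0]))[:top_k]


sample_docs = {
    "deflation.md": "Deflation in Switzerland. Deflation is not always bad.",
    "harness.md": "An agent harness connects a model to tools.",
    "notes.md": "Short note on deflation.",
}
hits = search(sample_docs, "deflation")
```

Wrapped in a small command-line entry point, the same `search` function could be handed to an LLM as a tool.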

Further explorations:

As the repo grows, the natural desire is to also think about synthetic data generation + finetuning to have your LLM "know" the data in its weights instead of just context windows.

TLDR: raw data from a given number of sources is collected, then compiled by an LLM into a .md wiki, then operated on by various CLIs by the LLM to do Q&A and to incrementally enhance the wiki, and all of it viewable in Obsidian. You rarely ever write or edit the wiki manually, it's the domain of the LLM. I think there is room here for an incredible new product instead of a hacky collection of scripts.

English

Antony Alvarez retweeted

🚨 THIS IS THE REPO THAT WILL MAKE YOU RICH

Forget about selling templates or courses...

This is the money-printing machine you were looking for:

[Onyx]

The open source platform for setting up your own local, 100% private AI for companies.

You install it on their servers (or host it yourself), customize it, and charge for:

- Installation + integration with all their internal documents (Drive, Notion, Slack, PDFs…)

- AI agents that actually use the company's real data

- Monthly support, custom agents, voice mode, code interpreter

It is literally ChatGPT Enterprise, but self-hosted and open source.

Companies pay fortunes because:

- They comply with GDPR and get total privacy

- The AI knows EVERYTHING about their company

- They don't depend on anyone's cloud

REPOOO👇

Spanish