Kevin Li

248 posts

@curiouskid423

multimodal research @adobe | co-lead of firefly image 5 | ex vision research @berkeley_ai | 🇹🇼 | opinions are my own

High-resolution image and video generation is hitting a wall because attention in DiTs scales quadratically with token count. But does every pixel need to be in full resolution? Introducing Foveated Diffusion: a new approach for efficient diffusion-based generation that allocates compute where it matters most. 1/7🧵

Meet MAI‑Image‑2. Built with creatives, for real creative work. Ranked #5 on @arena’s text‑to‑image leaderboard. Available now: msft.it/6014QUCBe

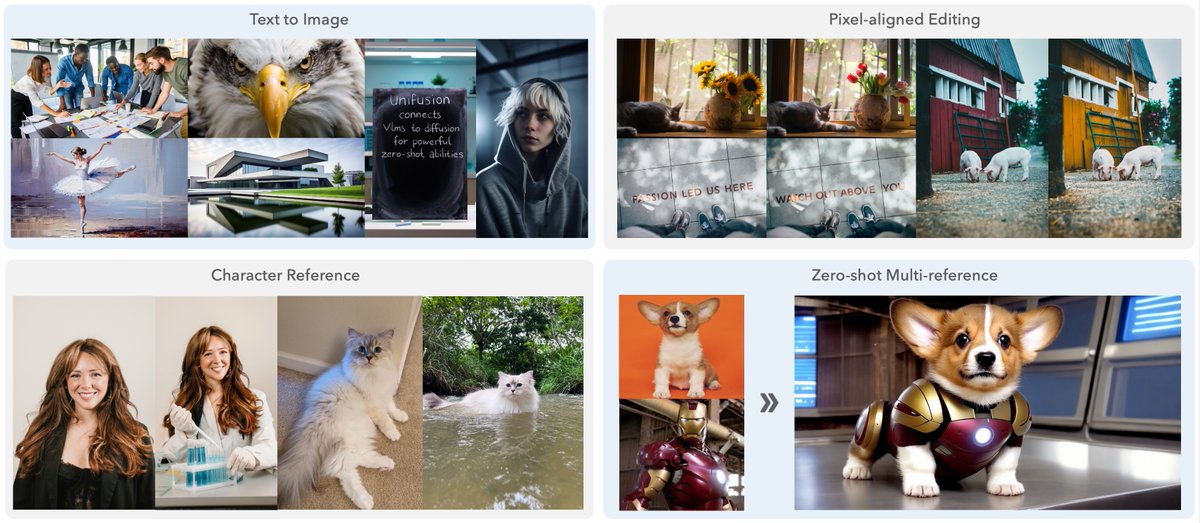

(1/n) 🚀 Your VLM can be a great multimodal encoder for image editing and generation if you use the middle layers wisely (yes, plural 😉). We are thrilled to present UniFusion: to the best of our knowledge, the first architecture that uses only a VLM as the input-condition encoder, without auxiliary signals from a VAE or CLIP, to do image editing with competitive quality. Here's what you get with a VLM as your unified encoder: 🎯 zero-shot multi-reference image generation when trained only on single-ref pairs 🎯 cross-task capability transfer: the editing task helps text-to-image generation qualitatively and quantitatively 🎯 a competitive joint text-to-image and editing model that beats Flux.1 [dev] and Bagel, respectively, with a smaller model and less data 👇 More details in the thread.

I hope this is an extinction event for diffusion slop. Diffusion is the greatest nerdsnipe in recent history: so many bright minds led astray by pretty mafs. Well, with new complex attention designs they'll hopefully come back into the fold.

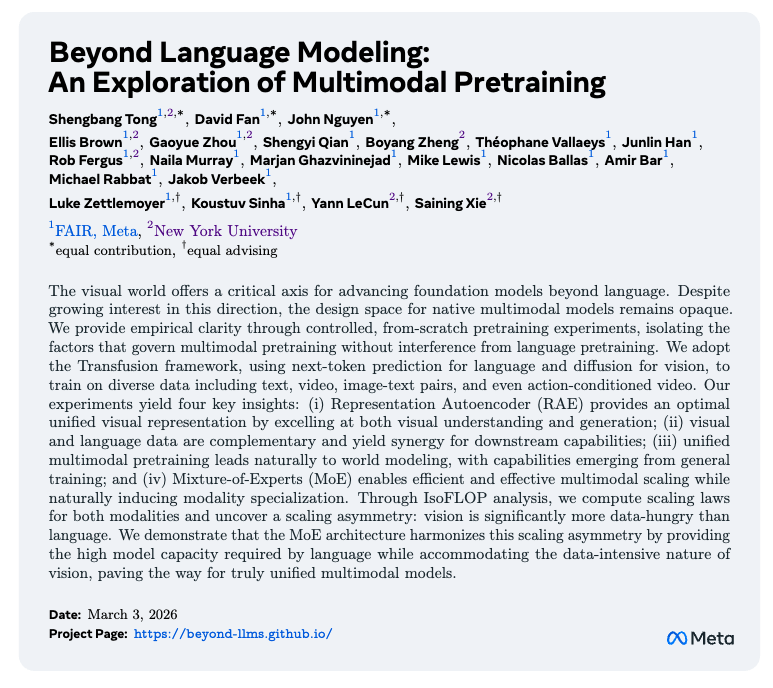

With RAE, visual understanding and generation operate in the same shared representation space. We show that generative training doesn't hurt understanding, and crucially, this shared space enables the LLM to perform Test-Time Scaling directly in the latent space.

How do we get a robot to use a screwdriver 🪛 and fasten a nut 🔩 ? Introducing DexScrew, our sim-to-real framework that enables a dexterous hand 🖐️ to autonomously perform complex and contact-rich tasks even when we cannot accurately simulate them. We open-source the full-stack implementation: dexscrew.github.io