Dan Busbridge

@danbusbridge

Machine Learning Research @ Apple (opinions are my own)

Uncertainty quantification (UQ) is key for safe, reliable LLMs... but are we evaluating it correctly? 🚨 Our ACL 2025 paper finds a hidden flaw: if both UQ methods and correctness metrics are biased by the same factor (e.g., response length), evaluations get systematically skewed.
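A toy illustration of that skew, built entirely on made-up assumptions: a hypothetical UQ score and a hypothetical correctness metric both depend on response length, while true correctness does not. Against the true labels the UQ score should be uninformative (AUROC 0.5), yet against the length-biased labels it looks strong. The data, the 50-token cutoff, and all names are invented for illustration; this is not the paper's method or experimental setup.

```python
from itertools import product


def auroc(scores, is_positive):
    """Probability that a random positive outscores a random negative (ties count 1/2)."""
    pos = [s for s, p in zip(scores, is_positive) if p]
    neg = [s for s, p in zip(scores, is_positive) if not p]
    wins = sum(1.0 if a > b else 0.5 if a == b else 0.0 for a, b in product(pos, neg))
    return wins / (len(pos) * len(neg))


# Toy responses: (length in tokens, truly correct?). True correctness is
# deliberately independent of length.
responses = [(10, True), (20, False), (30, True), (40, False),
             (50, False), (60, True), (70, False), (80, True)]

# Hypothetical length-biased UQ method: longer answer -> higher uncertainty.
uncertainty = [float(n) for n, _ in responses]

# Labels for "is this answer incorrect?" from ground truth, and from a
# hypothetical metric that also marks any answer over 50 tokens as wrong.
true_incorrect = [not ok for _, ok in responses]
metric_incorrect = [not (ok and n <= 50) for n, ok in responses]

# UQ quality = how well uncertainty ranks incorrect answers above correct ones.
print(f"{auroc(uncertainty, true_incorrect):.3f}")    # 0.500
print(f"{auroc(uncertainty, metric_incorrect):.3f}")  # 0.917
```

The shared length dependence alone inflates the apparent AUROC from chance level to about 0.92.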

We propose new scaling laws that predict the optimal data mixture for pretraining LLMs, native multimodal models, and large vision encoders! Only small-scale experiments need to be run; we can then extrapolate to large-scale ones. These laws allow 1/n 🧵

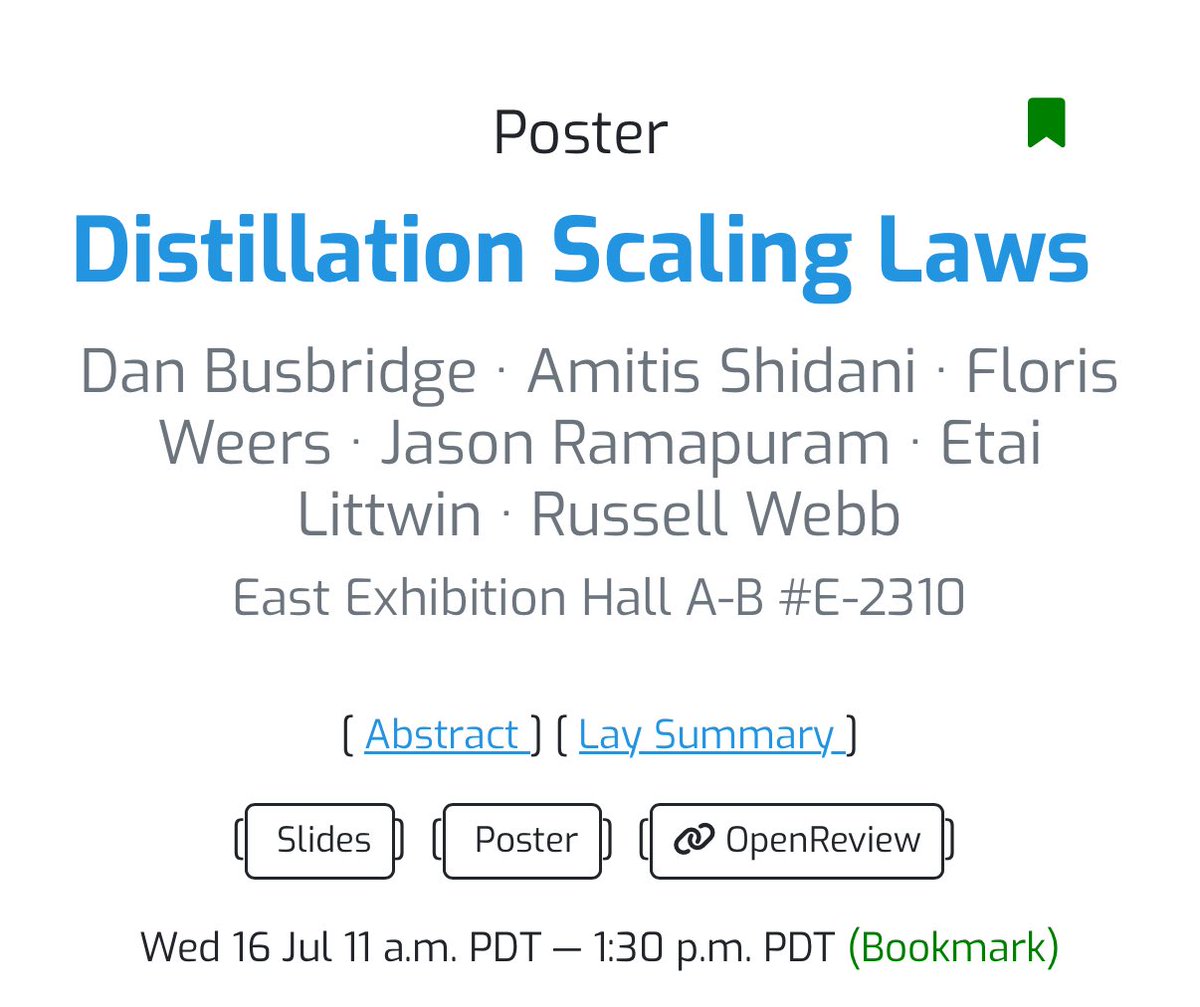

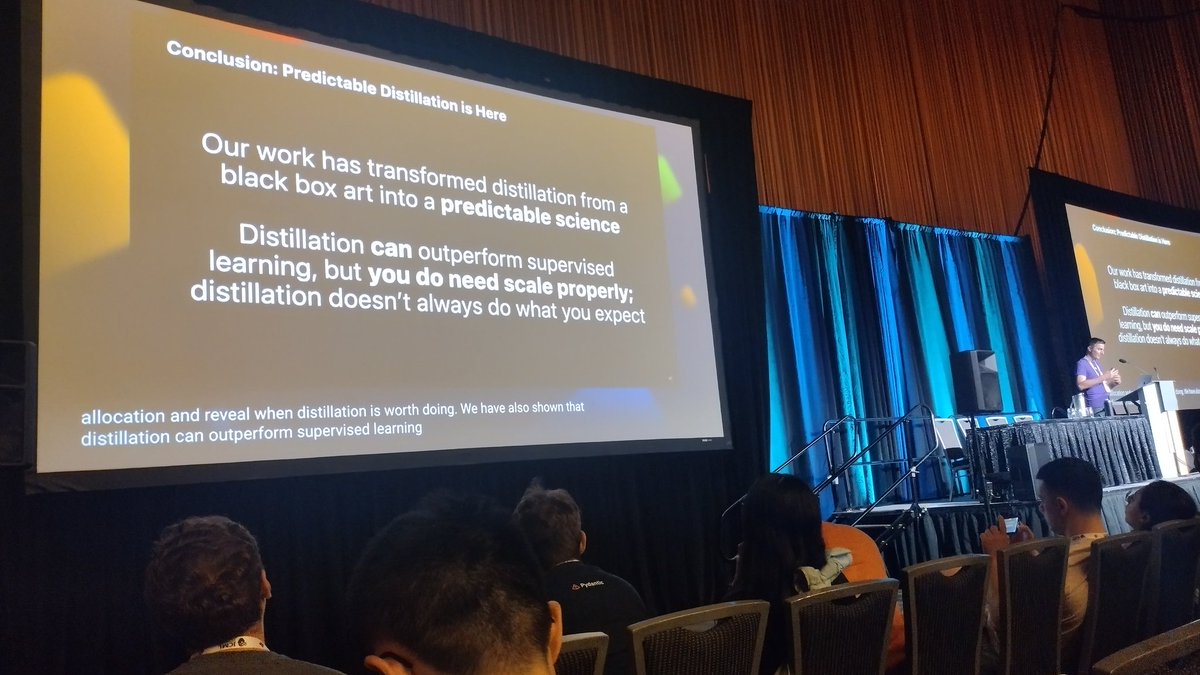
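The post does not give the functional form of these laws, so as a stand-in here is a much simpler version of the "fit small, extrapolate large" idea: fit a pure power law `L(N) = A * N^(-alpha)` to each candidate mixture's small runs (least squares in log-log space), extrapolate to a target size, and pick the mixture with the lowest predicted loss. The losses are synthetic and noise-free, the function names are hypothetical, and the form omits the irreducible-loss term that real scaling laws usually include.

```python
import math


def fit_power_law(sizes, losses):
    """Least-squares fit of log L = log A - alpha * log N; returns (A, alpha)."""
    xs = [math.log(n) for n in sizes]
    ys = [math.log(loss) for loss in losses]
    mx, my = sum(xs) / len(xs), sum(ys) / len(ys)
    slope = (sum((x - mx) * (y - my) for x, y in zip(xs, ys))
             / sum((x - mx) ** 2 for x in xs))
    return math.exp(my - slope * mx), -slope


def pick_mixture(small_runs, target_size):
    """Fit each mixture's small runs, extrapolate to target_size, return the best."""
    predicted = {}
    for name, (sizes, losses) in small_runs.items():
        a, alpha = fit_power_law(sizes, losses)
        predicted[name] = a * target_size ** (-alpha)
    return min(predicted, key=predicted.get), predicted


# Synthetic toy losses (not real results): mixture "a" is better at every
# small size, but mixture "b" improves faster as models grow.
sizes = [1e4, 1e5, 1e6]
small_runs = {
    "a": (sizes, [10 * n ** -0.10 for n in sizes]),
    "b": (sizes, [20 * n ** -0.15 for n in sizes]),
}
best, predicted = pick_mixture(small_runs, target_size=1e9)
print(best)  # b
```

Picking the winner at small scale would choose "a"; extrapolating the fitted trends flips the choice to "b" at the target size.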

Happening in 30 minutes in West Ballroom A - looking forward to sharing our work on Distillation Scaling Laws!

Excited to be heading to Vancouver for #ICML2025 next week! I'll be giving a deep dive on Distillation Scaling Laws at the expo, exploring when and how small models can match the performance of large ones. 📍 Sunday, July 13, 5pm, West Ballroom A 🔗 icml.cc/virtual/2025/4…

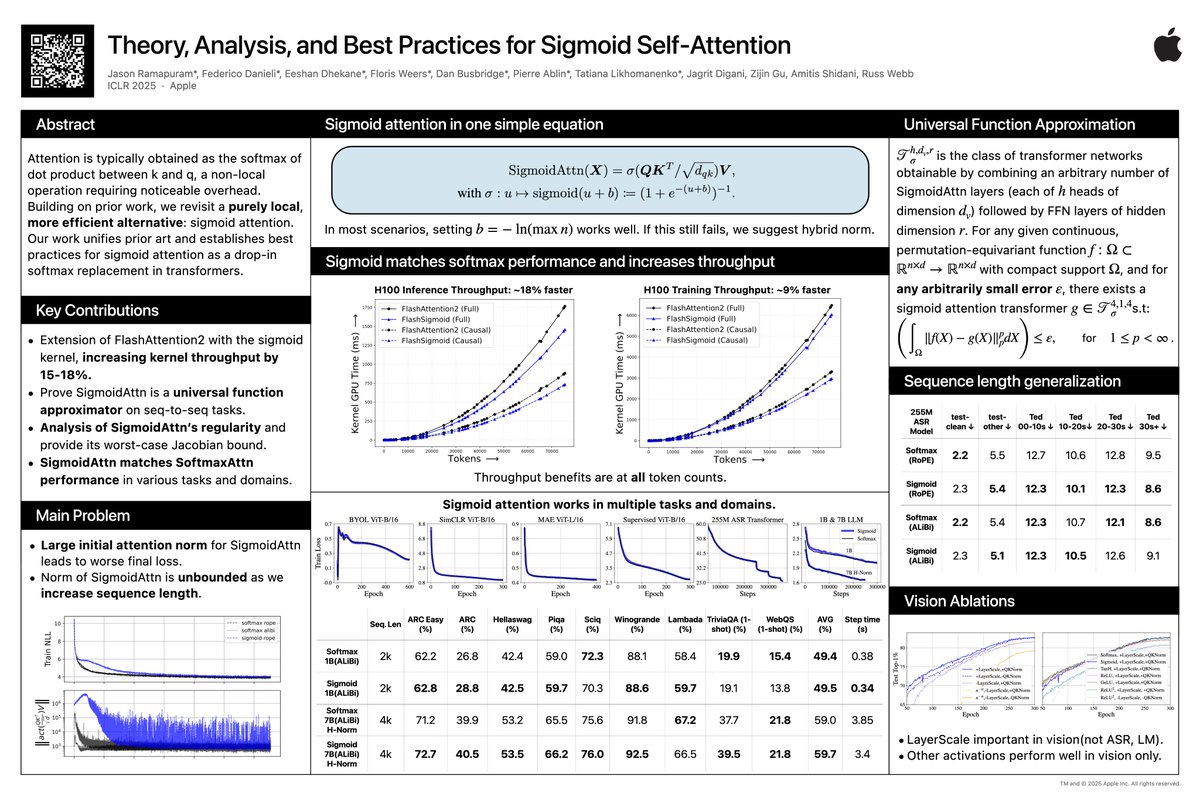

Small update on SigmoidAttn (arXiv incoming).
- 1B and 7B LLM results added and stabilized.
- Hybrid Norm [on embed dim, not seq dim], `x + norm(sigmoid(QK^T / sqrt(d_{qk}))V)`, stabilizes longer sequences (n=4096) and larger models (7B). H-norm is used in Grok-1, for example.
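A single-head, pure-Python sketch of the Hybrid Norm formula above, `x + norm(sigmoid(QK^T / sqrt(d_{qk}))V)`. Assumptions not stated in the post: `norm` is a LayerNorm without learned scale or shift, applied per token over the embedding dimension; there is no attention bias, causal mask, or multi-head split; and the function, weight, and helper names are hypothetical.

```python
import math
import random


def matmul(a, b):
    """Multiply an (n x k) matrix by a (k x m) matrix, both stored as lists of rows."""
    return [[sum(a[i][t] * b[t][j] for t in range(len(b))) for j in range(len(b[0]))]
            for i in range(len(a))]


def transpose(a):
    return [list(col) for col in zip(*a)]


def layer_norm(row, eps=1e-5):
    """Normalize one token's vector over the embedding dim (no learned scale/shift)."""
    mean = sum(row) / len(row)
    var = sum((v - mean) ** 2 for v in row) / len(row)
    return [(v - mean) / math.sqrt(var + eps) for v in row]


def sigmoid_attn_hybrid_norm(x, wq, wk, wv):
    """Return (x + norm(sigmoid(Q K^T / sqrt(d_qk)) V), attention weights).

    x: (n x d) token embeddings; wq, wk: (d x d_qk); wv: (d x d), so the
    attention output has the same width as x and can be added back to it.
    """
    q, k, v = matmul(x, wq), matmul(x, wk), matmul(x, wv)
    d_qk = len(wq[0])
    scores = matmul(q, transpose(k))  # (n x n)
    # Elementwise sigmoid instead of a row-wise softmax: each weight is in
    # (0, 1), but a token's weights are not forced to sum to 1.
    weights = [[1.0 / (1.0 + math.exp(-s / math.sqrt(d_qk))) for s in row]
               for row in scores]
    attn = matmul(weights, v)  # (n x d)
    # Norm over each token's embedding dim (not over the sequence dim), then residual.
    normed = [layer_norm(row) for row in attn]
    out = [[xi + ai for xi, ai in zip(x_row, a_row)] for x_row, a_row in zip(x, normed)]
    return out, weights


def rand_matrix(rows, cols, scale=0.5):
    """Hypothetical Gaussian init, for the demo only."""
    return [[random.gauss(0.0, scale) for _ in range(cols)] for _ in range(rows)]


random.seed(0)
n, d, d_qk = 4, 8, 4
x = rand_matrix(n, d)
out, weights = sigmoid_attn_hybrid_norm(
    x, rand_matrix(d, d_qk), rand_matrix(d, d_qk), rand_matrix(d, d))
```

Because the sigmoid weights do not normalize across the sequence, the scale of the raw attention output can grow with sequence length; normalizing it per token before the residual add is one plausible way to keep it in range.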

We release a large-scale study to answer the following:
- Is late fusion inherently better than early fusion for multimodal models?
- How do native multimodal models scale compared to LLMs?
- How can sparsity (MoEs) play a detrimental role in handling heterogeneous modalities? 🧵