Daniel Bigham

13.6K posts

@danielbigham

Software developer (@uwaterloo) passionate about AI + NLU. Canadian. Enjoys following SpaceX, Tesla, etc. Appalled by disparity between rich and poor.

Waterloo, Ontario, Canada · Joined May 2009

966 Following · 1.2K Followers

"What will people do when robots take the jobs?" Apparently 1.2 million of us will be watching them sort packages 🤣

Brett Adcock @adcock_brett

Watch a team of humanoid robots running a full 8-hr shift at human performance levels. This is fully autonomous, running Helix-02 x.com/i/broadcasts/1…

@gigafactories We put our order in about a week ago for one of these cars made in Berlin, shipped to Canada. Thanks for all of your work!

This Mother's Day, get your mamacita a Figure humanoid robot to clean your house 🌺

@adcock_brett @Figure_robot

Figure @Figure_robot

We taught two F.03 robots to clean a room and make a bed in under 2 minutes - fully autonomous.

@NoLimitGains I'm a Canadian homeowner, and if prices are weakening, I see that as a good thing. Homes are too expensive.

@TSLAFanMtl I hadn't watched this yet -- thanks for the nudge. Looks phenomenal.

Tesla will have a lot of humanoid robot competition in China, but also much closer to home. Figure 03 is impressive, and Figure 04 will be an even bigger leap from 03 than 03 was from 02.

Lots of similarities in strategy between Figure and Tesla, across the board. Given the size of the market (which we may think of as almost infinite at this point), there will be space for more than one player, especially if the Western world prioritizes American robots over Chinese ones.

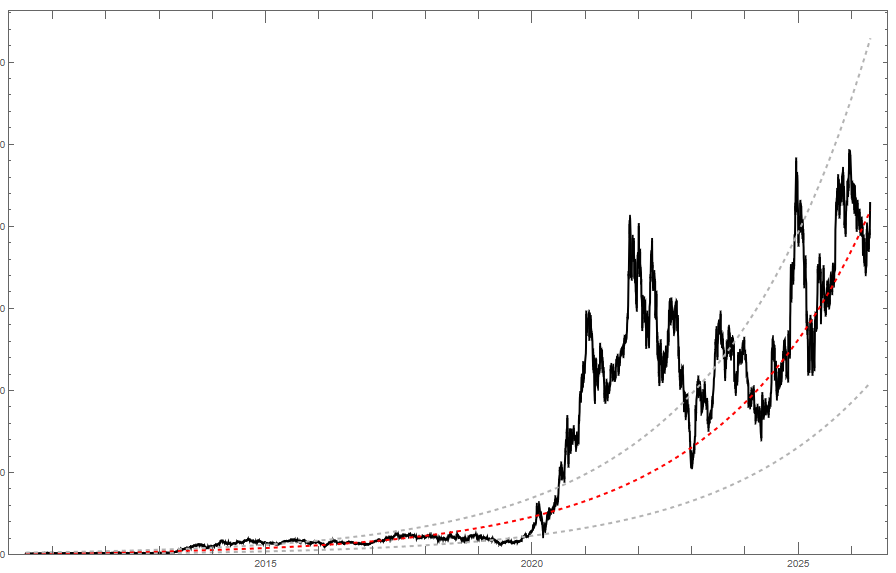

Although smooth growth curves are an overly simplistic view of fair value, I think they're a useful tool. The curve I plotted a year or so ago suggests that Tesla's fair price is currently roughly $420, meaning that the stock is trading at around 1.02 times its fair value. The current stock price feels very reasonable to me. (But yes, if one knows with near certainty that robotaxi will scale fast and be a huge cash cow, and similarly for Optimus, then I think we can agree that the stock is worth far more.)

@BlueJays @SlangsOnSports And we didn't even get to see him release the ball! Darn.

And to respond to this part:

"Or are there examples where an individual might be morally obligated to become that “authority”?"

I do think there are times when it is good and right to intervene. For example, if a Christian principal were to see a school shooter walk through the doors of their school and had the opportunity to "violently" stop them, I absolutely think that's the right thing to do: ideally as non-violently as possible, but potentially, yes, using lethal force.

For non-violent behavior, I think it's "keep forgiving, if paired with their honest remorse" and "forgive (slightly different use of the word) even if they don't repent".

Above I'm using two different flavors of forgiveness:

1. In the first case, it's a forgiveness that attempts to restore one's trust in the person, does not hold something over them, and releases negative emotion such as anger.

2. In the second case, it's a forgiveness that may not fully restore trust (or at all), but doesn't hold something over them, and again, releases negative emotion such as anger.

Repeat violent behavior, especially if it is not paired with strong remorse, falls more into (2), I feel. It is too high-stakes to simply always "restore full trust". There may be times when our relationships with others need to change in very real ways to offer appropriate protection to the vulnerable.

Genuine question for my Christian friends:

I'm trying to use Grok to help me find examples in the Bible and understand how Jesus would approach handling repeat violent criminals, for example.

My basic understanding at this point is that the individual believer should more or less infinitely forgive the offender and trust the “authorities” to justly handle the situation or let God judge.

Is that the end of it?

Or are there examples where an individual might be morally obligated to become that “authority”?

@realBigBrainAI People keep conflating intelligence with consciousness. They are orthogonal.

Stephen Wolfram, founder of Wolfram Research, explains how LLMs are quietly dismantling our deepest assumptions about consciousness:

He argues that large language models have done something philosophy and neuroscience couldn't:

"In terms of consciousness, I have to say, the idea that there's sort of something magic that goes beyond physics that leads to sort of conscious behavior, I kind of think that LLMs kind of put the final nail in that coffin."

His reasoning is that LLMs keep doing things people assumed they couldn't:

"There were all these things where it's like, oh, maybe it can't do this, but actually it does. And it's just an artificial neural net."

Wolfram then challenges a core assumption about conscious experience: the feeling that we are a single, continuous self moving through time.

"I think our notion of consciousness is a lot related to the fact that we believe in the single thread of experience that we have. It's not obvious that we should have a persistent thread of experience."

He points out that physics doesn't actually support this intuition:

"In our models of physics, we're made of different atoms of space at every successive moment of time. So the fact that we have this belief that we are somehow persistent, we have this thread of experience that extends through time, is not obvious."

Then Wolfram offers a striking origin story for consciousness itself.

@stephen_wolfram suggests it traces back to a simple evolutionary pressure: the moment animals first needed to move.

"I kind of realized that probably when animals first existed in the history of life on Earth, that's when we started needing brains. If you're a thing that doesn't have to move around, the different parts of you can be doing different kinds of things. If you're an animal, then one thing you have to do is decide, are you going to go left or are you going to go right?"

That single binary choice, he argues, may be the seed of everything we now call awareness:

"I kind of think it's a little disappointing to feel that this whole wanted thing that ends up being what we think of as consciousness might have originated in just that very simple need to decide if you are an animal that can move. You have to take all that sensory input and you have to make a definitive decision about do you go this way or that way."

The takeaway is unsettling but clarifying.

If LLMs can produce complex behavior from simple rules, then consciousness may not be a mystical add-on to physics.

It may just be what happens when a layered enough system has to make a decision.
