how to spot someone who is "using AI for marketing" but hasn't moved a single metric:

- copy-pastes model output directly into the blog with light editing

- says "we're an AI-powered marketing team" because of a ChatGPT subscription

- automated the cold outreach sequence that was already getting 0 replies, so now it gets 0 replies faster

- produces 4x more content that performs 4x worse

- measures AI ROI entirely in hrs saved with zero correlation to revenue

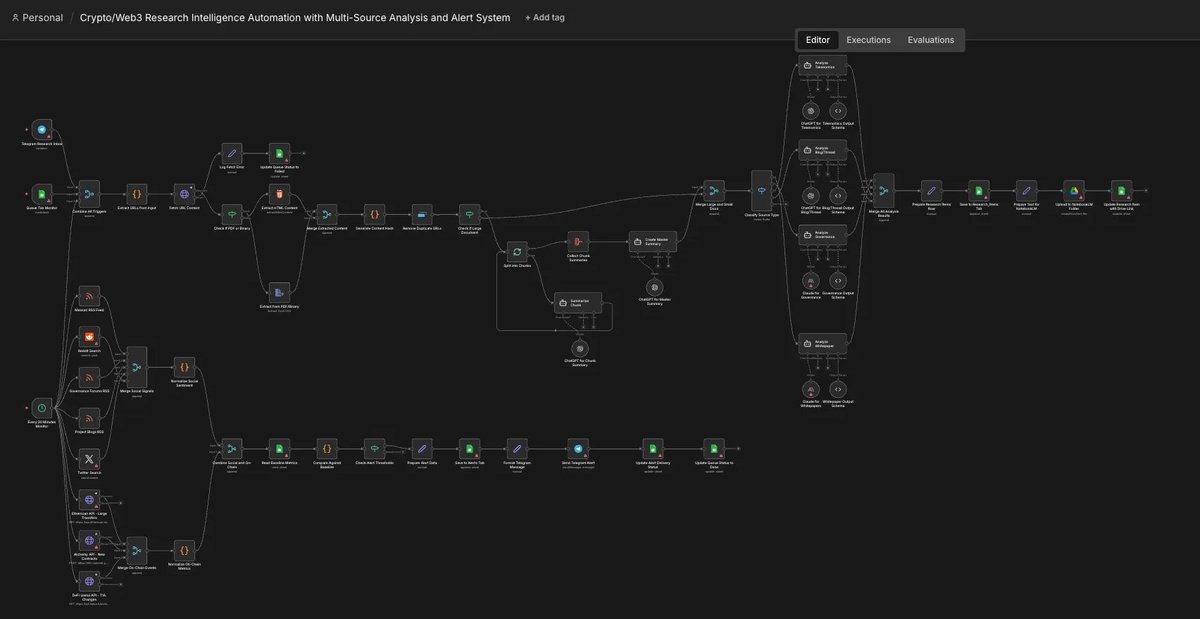

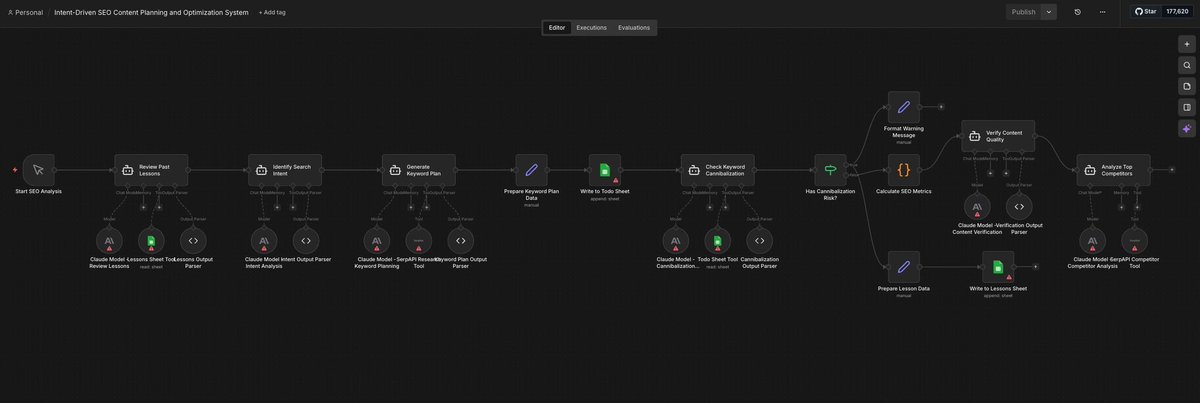

- calls a 3-5 step Zapier or n8n automation an "AI-powered growth engine"

- announced AI would replace the content team, then shipped content that tanked organic traffic

- every prompt is under 20 words and the output quality reflects that

- "leverage AI across all marketing touchpoints" is on the roadmap with no implementation plan beneath it

the point is AI didn't rescue bad marketing strategy. it just gave it a faster publishing cadence.

tbh, the marketers actually winning with AI aren't using it to do more of the same thing. they're using it to do things that weren't possible before.

so what's the most overhyped AI marketing move you've seen this year?
