Dan Ofer (Was @ICML,@Worldcon )

@danofer

#Data scientist, #Researcher, Bioinformatician, Photographer, Geek & Bookworm. PhD #AI #LLM @HebrewU @HyadataLab @liniallab @shebaARC

Automating AI research is the next major step in AI. We let Claude Code (Opus 4.7) and Codex (GPT 5.5) run autonomously on the nanoGPT speedrun optimizer track using our idle compute: ~10k runs, ~14k H200 hours. Opus now holds the record at 2930 steps vs. the 2990-step human baseline.

Introducing Attention Residuals: Rethinking depth-wise aggregation. Residual connections have long relied on fixed, uniform accumulation. Inspired by the duality of time and depth, we introduce Attention Residuals, replacing standard depth-wise recurrence with learned, input-dependent attention over preceding layers.
🔹 Enables networks to selectively retrieve past representations, naturally mitigating dilution and hidden-state growth.
🔹 Introduces Block AttnRes, partitioning layers into compressed blocks to make cross-layer attention practical at scale.
🔹 Serves as an efficient drop-in replacement, demonstrating a 1.25x compute advantage with negligible (<2%) inference latency overhead.
🔹 Validated on the Kimi Linear architecture (48B total, 3B activated parameters), delivering consistent downstream performance gains.
🔗 Full report: github.com/MoonshotAI/Att…
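To make the contrast in the post above concrete, here is a minimal sketch of uniform residual accumulation versus an attention-weighted mix over earlier layer outputs. It is an illustration under stated assumptions, not the method from the report: the function names are hypothetical, the query is passed in directly rather than produced by a learned projection, earlier outputs serve as their own keys and values, and Block AttnRes is not modeled.

```python
import math

def softmax(scores):
    # Numerically stable softmax over a list of floats.
    m = max(scores)
    exps = [math.exp(s - m) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]

def uniform_residual(layer_outputs):
    # Standard residual stream: every earlier output is added with weight 1.
    dim = len(layer_outputs[0])
    return [sum(h[i] for h in layer_outputs) for i in range(dim)]

def attention_residual(layer_outputs, query):
    # Assumption: earlier outputs act as both keys and values, and `query`
    # stands in for whatever learned, input-dependent query a real model uses.
    dim = len(query)
    scores = [
        sum(q * k for q, k in zip(query, h)) / math.sqrt(dim)
        for h in layer_outputs
    ]
    weights = softmax(scores)
    mixed = [
        sum(w * h[i] for w, h in zip(weights, layer_outputs))
        for i in range(dim)
    ]
    return mixed, weights

# Toy example: three earlier layer outputs of width 2.
outputs = [[1.0, 0.0], [0.0, 1.0], [1.0, 1.0]]
summed = uniform_residual(outputs)
mixed, weights = attention_residual(outputs, query=[2.0, 0.0])
```

In this toy setup the uniform sum grows with every added layer, while the attention-weighted mix is a convex combination and so stays within the range of the earlier outputs. That is one plausible reading of the "hidden-state growth" point in the post, not a claim about the reported results.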

Yesterday, I was giving an intro talk to our dept's new PhD students. Technical things aside, my number 1 suggestion has remained the same over the years: Treat your PhD like a job. - Avoid 1.5h lunches and three tea breaks. - Avoid gossiping and loitering at work. - Be in the lab at 9 am and leave at 6 pm. Being productive till 11 pm in the lab is a lie people tell themselves when their day starts at 1 pm. Everything worth doing can be done with high-intensity focus during work hours. And having fun in life is the secret to being productive in a marathon.

1/ (New paper!) If swapping the gender in an input prompt makes the AI model give a different answer, it means that the model has to have a gender bias, right? Wrong. 🧵 on counterfactual prompting for LLM evals: Paper: arxiv.org/abs/2605.01048

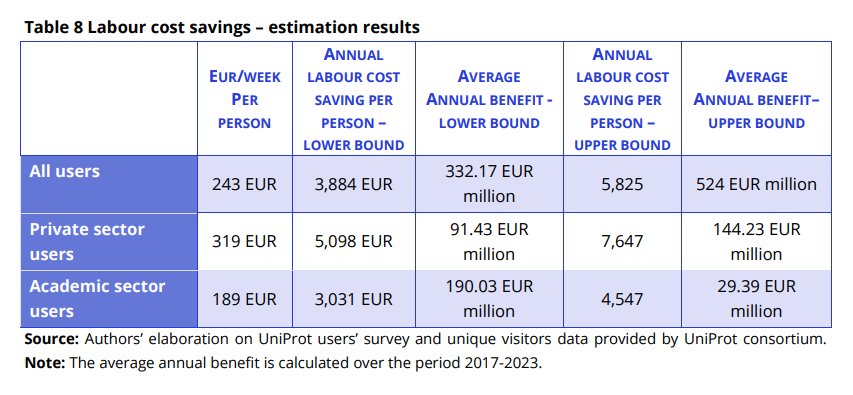

It’s estimated that the Protein Data Bank (PDB) cost around $13B to create. AlphaFold was only possible because of it. If we want ML to solve biology, we should be funding the creation of databases and the development of new assay technologies. ML is nothing without data.

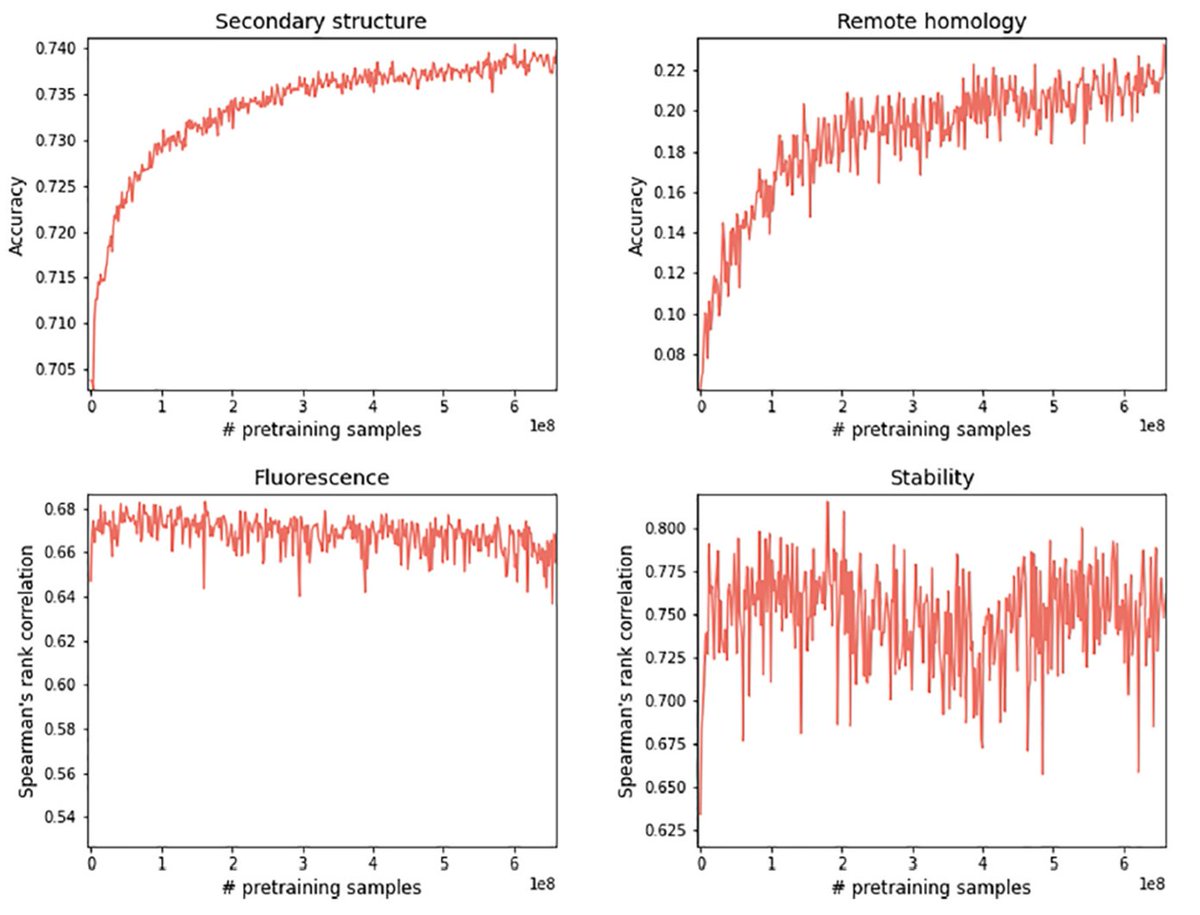

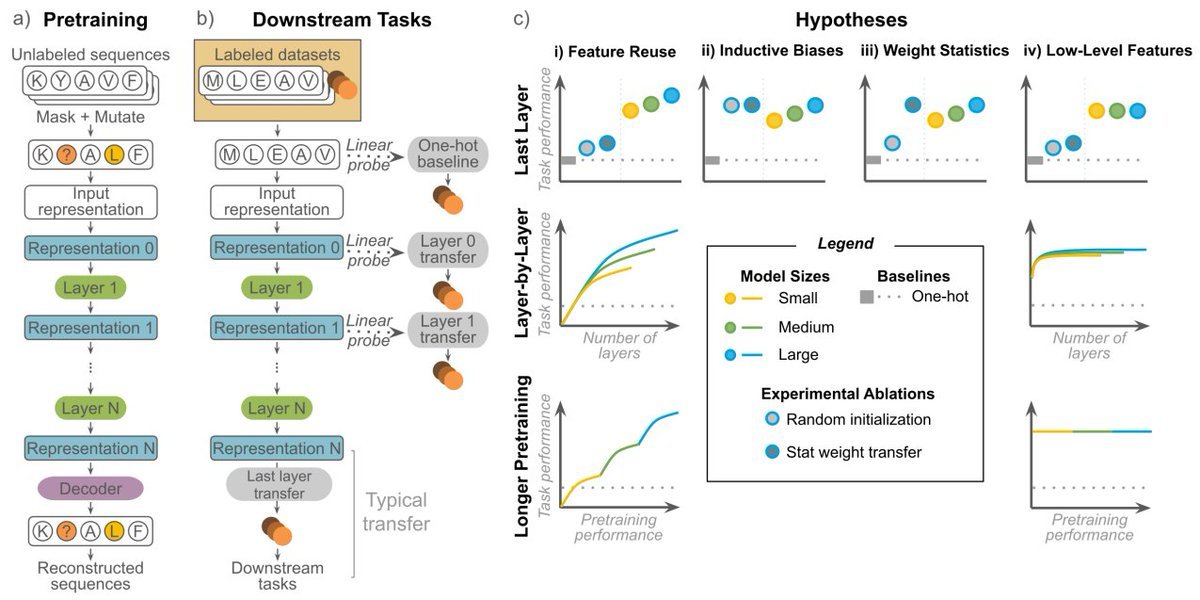

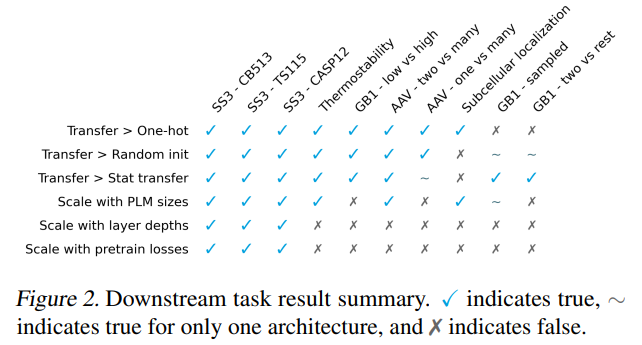

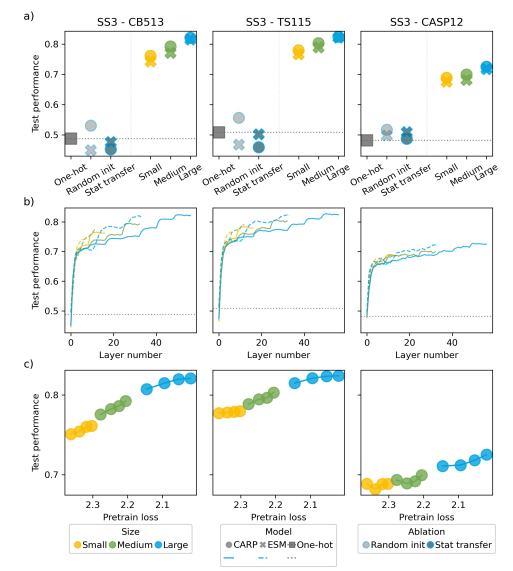

Announcing our preprint on understanding transfer learning for protein language models (PLMs), led by former MSRNE intern @francescazfl, with @KevinKaichuang @avapamini @yisongyue. Key takeaway: PLMs do not scale for anything except structure! 🧵👇 biorxiv.org/content/10.110…