junglanml

8.7K posts

@danrandow

social sense-making in a meat bag. emerging decentralised governance nerd. On Team @HackHumanityCo

Just published our NEAR Staking in 2026 guide. We break down how it works now - epochs, seat prices, why 2.5% inflation boosts real yields, and how @meta_pool $stNEAR keeps you flexible while earning. Worth a read whether you’re new or already staking. Check it out 👇

IronClaw now has its own handle. IronClaw is the secure agent harness for the age of always-on AI. Open-source, built in Rust, deployable on @near_ai Cloud, IronClaw ensures your credentials never reach the model. Follow along for demos, security pro tips, new skills, and more.

@alice_und_bob Become just Alice and post photos of bare feet

I voted with 263k veNEAR `FOR` @NEARGovernance's Constitutional Documents.

it's a batch of 6 documents, discussed individually on the gov.near forum for a long time, including via open community calls.

the documents were built in a real group effort, with a lot of feedback that was taken into consideration and used to get to this final form.

for example, my feedback on the COI policy: in a previous version, the COI policy mirrored what is done in some other communities, like Arbitrum, and had, imo, a highly academic approach, far from reality, trying to enforce policies that are not only not enforceable in crypto, but could even be weaponized by bad actors.

the new COI policy (together with the other documents) is much better now, and I believe they constitute one of the best sets of constitutional documents for onchain governance that I know of.

they are attached to reality, not academia, and they will contribute to making HoS one of the best governance protocols in crypto, as long as it is able to see higher participation thresholds and more staked $NEAR (to veNEAR).

the documents are:

+ HSP-008 Constitution
+ HSP-009 Proposals and Voting Procedures (PVP)
+ HSP-010 Screening Committee Charter (ScrCC)
+ HSP-011 Mission Vision Values (MVV)
+ HSP-012 Conflict of Interest Policy (COIP)
+ HSP-013 Code of Conduct (CoC)

As @HackHumanityCo we have worked comprehensively to produce the NEAR - House of Stake Constitutional Documents. These Constitutional Documents are meant to support a credible path of progressive decentralization: one that works with current legal, technical, and operational realities, while moving House of Stake toward greater autonomy over time. The goal is to build a governance system that can operate responsibly now, earn legitimacy through good decisions, and evolve to better serve the NEAR ecosystem. Getting here has been a co-creative process over many cycles, with all key stakeholders involved, to set House of Stake up for success. If you are a @NEARProtocol stakeholder who has locked NEAR to veNEAR in House of Stake, you can vote here: gov.houseofstake.org/proposals/25

just made a swap on near.com. it was 100x easier than any other platform i've used to date

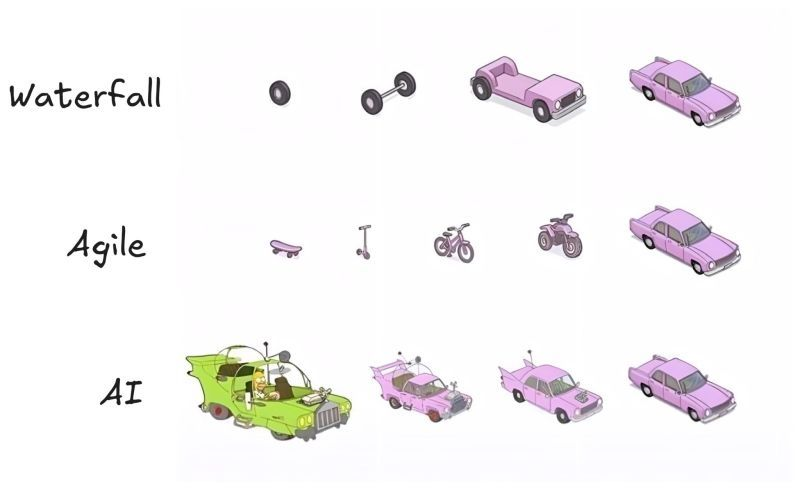

Anthropic's own researchers just proved that using AI to learn new skills makes you 17% worse at them. and the part nobody's reading is more important than the headline.

the paper is called "How AI Impacts Skill Formation." randomized experiment. 52 professional developers. real coding tasks with a Python library none of them had used before. half got an AI assistant. half didn't.

the AI group scored 17% lower on the skills evaluation. Cohen's d of 0.738, p=0.010. that's a real effect. and here's what makes it sting: the AI group wasn't even faster. no significant speed improvement. they learned less AND didn't save time.

but the viral framing of "AI bad for learning" misses what actually matters in this paper. the researchers watched screen recordings of every single participant. they identified 6 distinct patterns of how people use AI when learning something new. 3 of those patterns preserved learning. 3 destroyed it. the gap between them is enormous.

participants who only asked AI conceptual questions scored 86% on the evaluation. participants who delegated everything to AI scored 24%. same tool. same task. same time limit. the difference was cognitive engagement.

the highest-scoring AI users actually outperformed some of the no-AI group. they asked "why does this work" instead of "write this for me." they generated code then asked follow-up questions to understand it. they used AI as a thinking partner, not a replacement for thinking.

the lowest-scoring group did what most people do under deadline pressure: pasted the prompt, copied the output, moved on. they finished fastest. they learned almost nothing.

and here's the finding that should concern every engineering manager alive: the biggest score gap was on debugging questions. the skill you need most when supervising AI-generated code is the exact skill that atrophies fastest when you let AI do the work.

the control group made more errors during the task. they hit bugs. they struggled with async concepts. they got frustrated. and that struggle is precisely what built their understanding. errors aren't obstacles to learning. they ARE learning. removing them with AI removes the mechanism that creates competence.

participants in the AI group literally said afterward they wished they'd "paid more attention" and felt "lazy." one wrote "there are still a lot of gaps in my understanding." they could feel the hollowness of having completed something without understanding it. that's not a productivity win. that's debt.

this paper isn't an argument against using AI. it's an argument against using AI unconsciously. Anthropic publishing research showing their own product can inhibit skill formation is the kind of intellectual honesty the industry needs more of.

the practical takeaway is simple: if you're learning something new, use AI to ask questions, not to skip the work. the struggle is the product.

In an industry where Web3 narratives shift by the season, some chase the next headline. Others pause, and ask what future they truly want to help build.

A year later, we met Tommi @alice_und_bob again in Hong Kong. Having recently concluded his formal role with the @Web3foundation, he is stepping into a new chapter in both life and career: continuing to deepen his engagement with the @Polkadot ecosystem while also feeling the pull of the rapidly advancing AI wave. His focus remains on on-chain governance and public infrastructure, even as he explores new possibilities with a greater sense of independence.

This wasn’t merely a conversation about “what’s next” in a career. It became a deeper reflection on where Web3 is actually heading, how technology is meant to be used, and what kind of culture and products @Polkadot's “Second Age” truly demands.

From AI reshaping the developer paradigm, to why on-chain applications remain trapped in financial narratives; from infrastructure reaching maturity, to adoption that still lags behind, Tommi revisits the industry’s most fundamental question with rare clarity: If the technology is finally ready, are we ready to build something that truly matters?

Read PolkaWorld’s latest interview below.

0:00 See you again
0:54 Current Chapter
2:11 The AI-Driven Shift in Development
4:19 Technical Maturity vs. Market Recognition
6:47 Hub Liquidity & Developer Migration
9:34 Has Web3 dApp Innovation Stalled?
12:24 Driving Adoption on @Polkadot Hub
16:44 Builder Culture as the Deciding Factor
18:35 Polkadot’s Core Value: Freedom
22:20 The Hard Reset
29:13 Why Still Believe in @Polkadot
31:20 Hong Kong as an Industry Connector
34:53 Bear Market Mindset: Cycles, Reality, and Tech Long-Termism
38:53 After AI Took the Spotlight: Is Web3 Left Behind or Embedded in the Future?
42:02 The Uncertainty Heading into 2026